I’ve been thinking a lot about the role of verification in a world increasingly shaped by autonomous software. The conversation around AI often focuses on capability—how intelligent systems are becoming, how quickly they can make decisions, and how many tasks they can automate. But the more I observe these systems interacting with financial platforms, digital services, and other automated agents, the more I find myself asking a simpler question: who verifies what they actually did?

That question is where Mira Network starts to look interesting.

The idea of an oracle is familiar in blockchain infrastructure. Traditional oracle networks supply external data to smart contracts—price feeds, weather information, or event confirmations. These systems bridge the gap between blockchain environments and the outside world. Mira appears to be exploring a similar role, but instead of verifying external data, it focuses on verifying the behavior of AI systems themselves.

At first glance, that might seem like a subtle distinction, but I think it reflects a deeper shift in how digital systems operate.

Autonomous agents are no longer theoretical. AI-driven systems are already executing trades, routing logistics decisions, moderating content, and coordinating complex workflows. In many cases, these agents interact directly with other automated systems rather than humans. When one agent triggers an action that affects another system—say a financial transaction or an automated service request—there needs to be a way to confirm what actually happened.

Traditionally, that confirmation comes from the system operator. Logs are stored internally, and if something goes wrong, engineers review those records and reconstruct the sequence of events. That model works well when everyone involved trusts the organization maintaining the system. But as autonomous agents begin operating across platforms, institutions, and jurisdictions, internal logs become harder to rely on, because a counterparty has no independent way to check records that only the operator controls.

From my perspective, this is the gap Mira is trying to address.

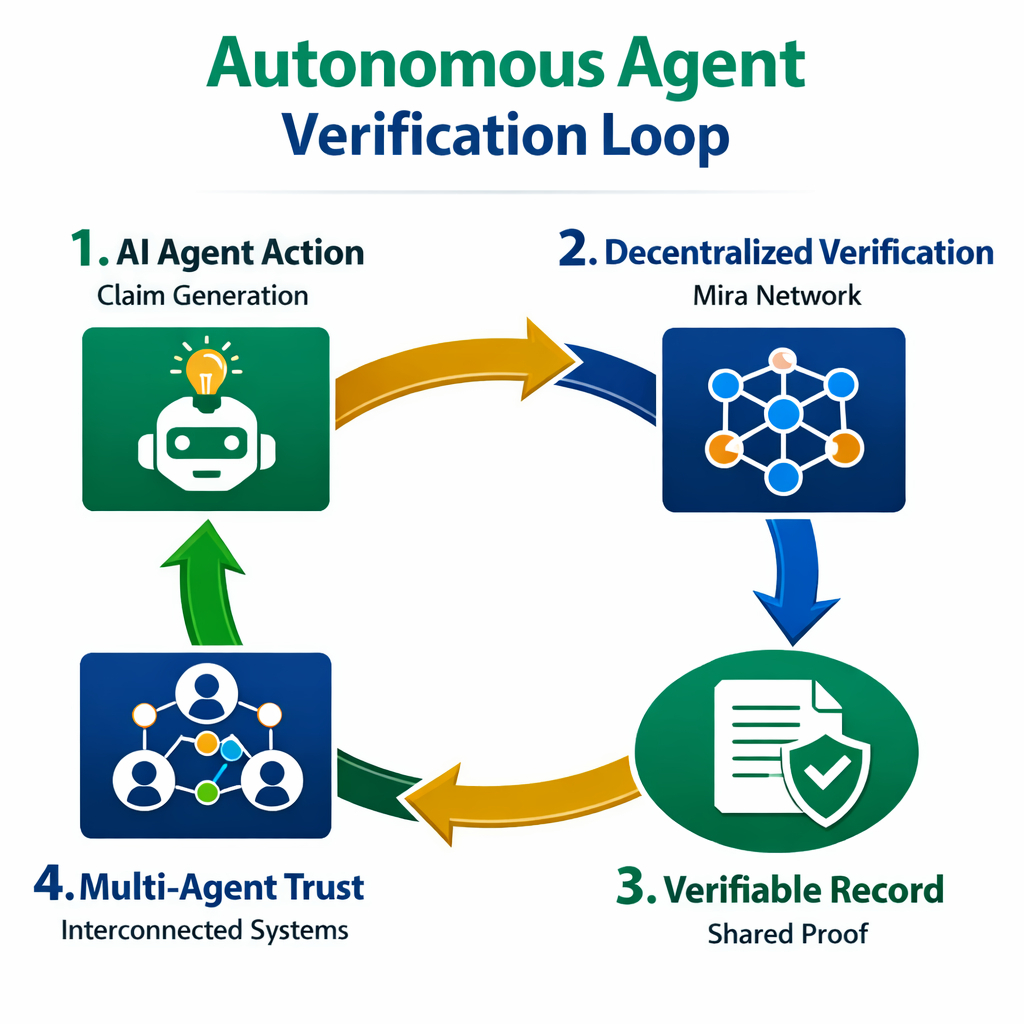

Rather than treating AI outputs as unquestionable results, Mira’s infrastructure attempts to treat them as claims that can be verified. Inputs, execution parameters, and outputs can be recorded through a decentralized verification layer. The idea is that when an AI system performs an action, there is a shared mechanism to confirm that the action occurred under the conditions the system says it did.
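To make that concrete, here is a minimal Python sketch of how I picture a claim being recorded. It is not Mira’s actual API or data format: the `ActionClaim` fields and the `claim_digest` and `verify_claim` helpers are hypothetical names of my own. The assumption is simply that a claim is serialized in a canonical form and hashed, so anyone holding the digest recorded on a shared layer can later check that the claimed inputs, parameters, and output were not altered.

```python
import hashlib
import json
from dataclasses import dataclass, asdict


@dataclass(frozen=True)
class ActionClaim:
    """Hypothetical record of one agent action (illustrative fields only)."""
    agent_id: str
    inputs: dict
    parameters: dict
    output: dict


def claim_digest(claim: ActionClaim) -> str:
    # Canonical JSON (sorted keys, fixed separators) so the same claim
    # always serializes, and therefore hashes, the same way.
    canonical = json.dumps(asdict(claim), sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def verify_claim(claim: ActionClaim, recorded_digest: str) -> bool:
    # A verifier recomputes the digest and compares it with the recorded one.
    return claim_digest(claim) == recorded_digest


# Example values are made up for illustration.
claim = ActionClaim(
    agent_id="trading-agent-7",
    inputs={"symbol": "ETH-USD", "price": "3120.50"},
    parameters={"model": "example-model", "temperature": 0},
    output={"action": "buy", "quantity": "0.5"},
)
digest = claim_digest(claim)

# Changing any part of the claim produces a different digest.
tampered = ActionClaim(
    agent_id=claim.agent_id,
    inputs=claim.inputs,
    parameters=claim.parameters,
    output={"action": "sell", "quantity": "0.5"},
)
```

A matching digest only shows that the record was not altered after it was committed. It does not show that the output was correct.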

I think of it less as proving intelligence and more as proving activity.

That distinction is important because autonomous agents don’t need to be perfectly accurate to be useful. They simply need to operate within predictable boundaries. If a system can demonstrate that it followed defined rules, used approved data sources, and executed within expected constraints, other systems may be more willing to trust its outputs.
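As a rough illustration of what "operating within predictable boundaries" could mean in practice, the sketch below checks a recorded action against a small policy. Everything here is an assumption on my part: `Policy`, `ActionRecord`, and `violations` are hypothetical names, and the source list and order limit are invented values, not anything Mira specifies.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class Policy:
    """Hypothetical boundaries an agent has agreed to operate within."""
    approved_sources: frozenset
    max_order_value: float


@dataclass(frozen=True)
class ActionRecord:
    """Hypothetical summary of what the agent reports it did."""
    data_sources: tuple
    order_value: float


def violations(record: ActionRecord, policy: Policy) -> list[str]:
    # Collect every rule the record breaks; an empty list means it stayed in bounds.
    problems = []
    unapproved = sorted(set(record.data_sources) - policy.approved_sources)
    if unapproved:
        problems.append("unapproved data sources: " + ", ".join(unapproved))
    if record.order_value > policy.max_order_value:
        problems.append(
            f"order value {record.order_value} exceeds limit {policy.max_order_value}"
        )
    return problems


# Illustrative values only.
policy = Policy(
    approved_sources=frozenset({"exchange-feed-a", "exchange-feed-b"}),
    max_order_value=10_000.0,
)
in_bounds = ActionRecord(data_sources=("exchange-feed-a",), order_value=2_500.0)
out_of_bounds = ActionRecord(
    data_sources=("exchange-feed-a", "scraped-forum"), order_value=50_000.0
)
```

The check says nothing about whether the trade was a good one. It only reports whether the action stayed inside the agreed rules, which is the kind of property another system could reasonably rely on.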

Still, I approach the concept with a fair amount of caution.

Verification infrastructure sounds straightforward in theory, but implementing it across diverse AI environments is complicated. Autonomous agents operate in different industries with different data formats, compliance requirements, and operational constraints. A verification layer that works smoothly in financial systems might encounter challenges in healthcare or logistics environments.

Another factor I keep in mind is the balance between transparency and efficiency. Verification networks introduce additional steps—validators, consensus processes, and data anchoring mechanisms. Those steps improve accountability but can also introduce latency. For many AI-driven systems, speed is critical. If verification slows down operations too much, developers may choose simpler internal logging instead.

At the same time, the direction of technological development makes the problem difficult to ignore. As AI agents become more autonomous and interconnected, the consequences of their actions extend beyond the systems that created them. Financial markets, infrastructure networks, and digital services increasingly depend on automated decision-making.

In that environment, shared verification layers may become more valuable than they appear today.

What I find interesting about Mira’s positioning is that it doesn’t try to compete directly with AI model developers. It doesn’t claim to build better intelligence or faster inference. Instead, it attempts to create infrastructure around the activity of those systems, something closer to an auditing layer than an intelligence layer.

If autonomous agents eventually form large networks of interacting systems, some form of decentralized verification might become necessary simply to maintain trust between them.

Whether Mira evolves into that kind of infrastructure remains uncertain. Many technologies that look promising at the conceptual level struggle to prove themselves once they encounter the operational complexity of real deployments.

For now, I see Mira less as a definitive solution and more as an experiment in redefining what an oracle might mean in an AI-driven world, one where the question is no longer just what data exists, but what intelligent systems actually did with it.