I tend to pause when I hear phrases like “verifiable consciousness” attached to artificial intelligence. The language sounds dramatic, almost philosophical, and I’ve learned that infrastructure projects rarely benefit from being framed in those terms.

Still, when I look more closely at what Mira Network is attempting, the concept begins to feel less mystical and more procedural. It’s not really about proving that machines are conscious.

It’s about proving what they did, how they did it, and whether those actions can be trusted by others.

That distinction matters more than the headline suggests.

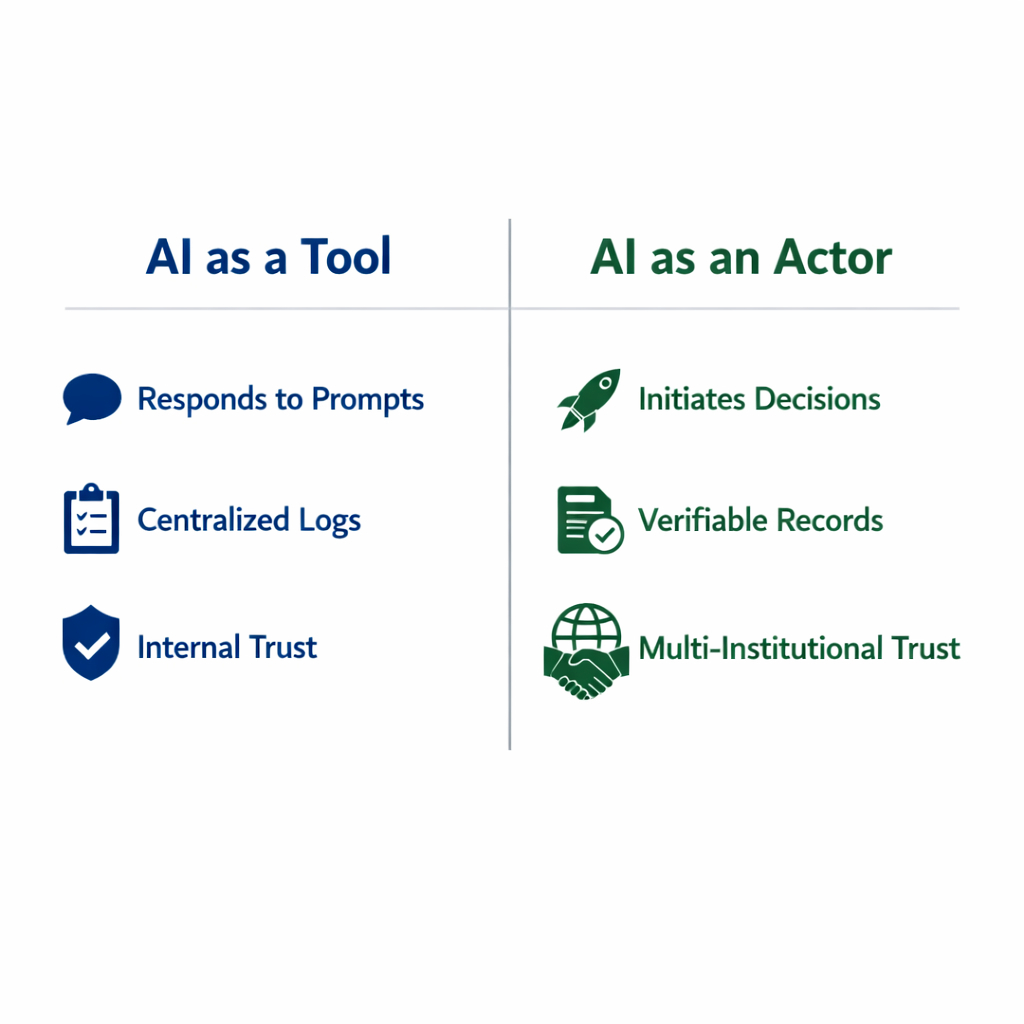

AI systems today operate in increasingly complex environments. They generate predictions, execute financial trades, filter content, coordinate logistics, and more and more often act as agents that initiate decisions rather than simply responding to prompts.

The question that keeps surfacing in my mind isn’t whether these systems are intelligent enough. It’s whether their actions can be verified in a way that multiple parties accept as credible.

Traditionally, verification happens through centralized logging systems. The organization running the AI records its activity and produces explanations when needed.

That approach works reasonably well inside a single company. But the moment AI systems interact across institutional boundaries (between financial platforms, regulatory systems, or automated services), those centralized records become harder to rely on.

Trust begins to depend less on the system itself and more on the organization maintaining it.

That’s where Mira’s infrastructure becomes interesting to me.

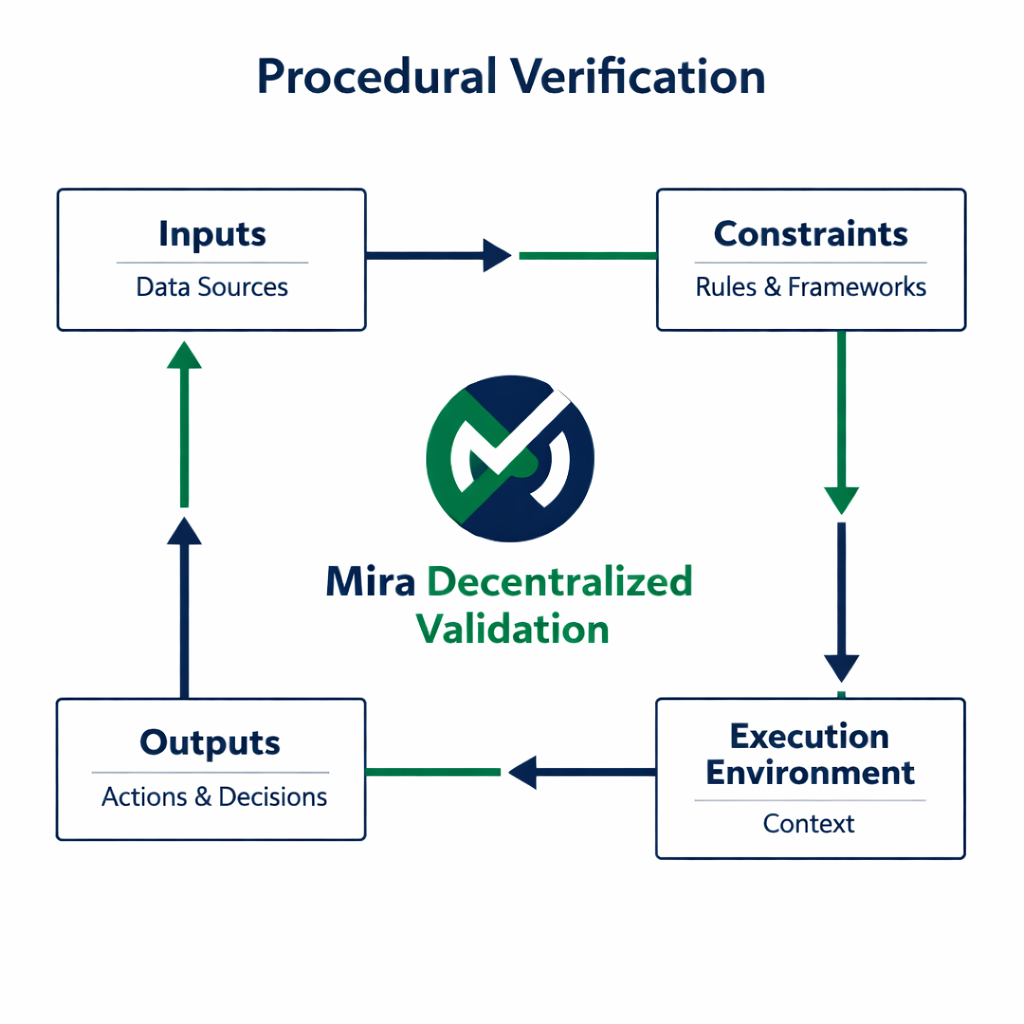

Rather than building smarter AI models, the network concentrates on verifying claims about AI behavior. Inputs, constraints, execution environments, and outputs can be recorded and validated through a decentralized system.

In practical terms, this means that the record of what an AI system did does not live entirely within the operator’s own infrastructure.
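To make that idea a little more concrete, here is a minimal sketch of one generic way such a record could be made tamper-evident. It is not Mira's protocol or API. `ActivityRecord`, `digest`, and `SharedLog` are hypothetical names I'm using for illustration, the field values are invented, and the in-memory list stands in for whatever decentralized log a real network would use. The only point is that once a fingerprint of the record lives somewhere the operator does not control alone, anyone holding the record can check it.

```python
import hashlib
import json
from dataclasses import dataclass, asdict, replace


@dataclass(frozen=True)
class ActivityRecord:
    # Hypothetical fields mirroring the four items named in the text.
    inputs: dict
    constraints: dict
    environment: dict
    output: dict


def digest(record: ActivityRecord) -> str:
    # Canonical JSON (sorted keys, no extra whitespace) so independent
    # parties hash identical bytes for identical records.
    canonical = json.dumps(asdict(record), sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


class SharedLog:
    # Assumed stand-in for a log outside the operator's sole control;
    # here it is just an in-memory, append-only list of digests.
    def __init__(self) -> None:
        self._entries: list[str] = []

    def publish(self, record: ActivityRecord) -> int:
        self._entries.append(digest(record))
        return len(self._entries) - 1

    def check(self, index: int, record: ActivityRecord) -> bool:
        # Any third party can recompute the digest and compare it.
        return 0 <= index < len(self._entries) and self._entries[index] == digest(record)


log = SharedLog()
rec = ActivityRecord(
    inputs={"prompt": "rebalance portfolio"},
    constraints={"max_trade_usd": 10_000},
    environment={"model": "example-model-v1"},
    output={"action": "sell", "amount_usd": 5_000},
)
idx = log.publish(rec)
print(log.check(idx, rec))  # True

# Altering the output after publication breaks the match.
tampered = replace(rec, output={"action": "sell", "amount_usd": 50_000})
print(log.check(idx, tampered))  # False
```

The design choice worth noticing is canonical serialization: two parties can only agree on a hash if they encode the record identically. It is also worth being clear about what this does not prove. A matching hash shows the record was not changed after it was published, not that the record faithfully describes what the model actually did. That gap is exactly where validators, and their incentives, matter.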

I think of it less as verifying consciousness and more as verifying activity.

Still, the metaphor of “verifiable consciousness” reflects something about the direction AI is moving. As AI systems become more autonomous, they begin to resemble actors rather than tools.

They make decisions, interact with other systems, and influence outcomes that carry economic or social consequences.

In that environment, understanding what an AI system did, and being able to prove it, becomes increasingly important.

But I try not to assume that decentralization automatically solves this problem.

Verification networks introduce their own complexities. Validators must have incentives to act honestly. Data formats must remain consistent across different applications.

Integration must be simple enough that developers actually use the system rather than bypassing it when speed matters more.

These are not trivial challenges, and many infrastructure projects struggle when they move from theoretical design to operational reality.

Another question I keep returning to is scope. AI systems operate in very different domains: finance, healthcare, logistics, robotics, and more.

A verification infrastructure that works well in one environment may encounter friction in another. Mira’s architecture will need to adapt to these differences if it hopes to become broadly relevant.

What I do find compelling, however, is the narrowness of Mira’s focus. Instead of promising to revolutionize AI itself, it concentrates on something more specific: creating verifiable records of AI behavior.

That kind of restraint often signals infrastructure thinking rather than product marketing.

Infrastructure seldom captures attention because it operates quietly beneath the surface. Financial settlement networks, identity protocols, and logging systems rarely make headlines, yet they shape how entire industries function.

If AI systems continue expanding their influence, the infrastructure that verifies their actions could become similarly foundational.

Whether Mira ultimately plays that role remains uncertain. Many promising verification systems fail because they introduce too much friction or because centralized alternatives remain simpler to operate.

Adoption will likely depend less on technical elegance and more on whether developers find the network practical to integrate.

For now, when I hear the phrase “verifiable consciousness,” I interpret it less as a claim about AI awareness and more as an attempt to describe a new layer of accountability.

If AI systems are going to act more independently, the records surrounding those actions will need to become more reliable and more widely trusted.

Mira Network appears to be exploring how such a layer might work.

Whether that exploration eventually becomes a standard part of AI infrastructure or simply another step in the broader search for trustworthy automation is something that will likely reveal itself slowly, as real systems begin to test how much verification they actually need.