Every generation faces a technology that changes daily life so deeply that people don’t even notice the moment it becomes normal. Electricity, the internet, smartphones. At first they feel extraordinary. Then slowly they become invisible. Artificial intelligence is now entering that same stage. We wake up and search with it, study with it, write with it, and sometimes even share our personal worries with it. The machine responds instantly and politely. It feels helpful, almost comforting.

Yet something inside us still hesitates.

The strange thing about modern AI is not that it is weak. It is that it is powerful and uncertain at the same time. It can explain science, solve math, write code, and tell stories, but it does not actually know reality. It predicts words using patterns it learned from enormous datasets. When patterns match facts, the answer is correct. When patterns don’t match perfectly, the system still answers. It fills the gap and presents it with confidence. This is why people say AI sometimes hallucinates.

The emotional effect of this is bigger than the technical explanation. Humans naturally trust confident communication. When a response is detailed and well written, our brain relaxes. We assume knowledge exists behind it. But with AI, confidence and correctness are not always connected. The machine does not intentionally lie. It simply does not understand truth the way humans do.

This creates a silent problem in modern society. We are surrounded by answers but unsure which of them we can safely rely on. Students verify homework twice. Professionals double-check AI-generated reports. Even developers test outputs repeatedly before trusting them. Artificial intelligence has become useful, yet it has not become dependable.

Mira Network appears exactly at this point of tension. Instead of creating another chatbot, the project tries to solve the deeper issue underneath all AI systems: reliability.

The core idea is simple but powerful. An answer should not be trusted because it sounds intelligent. It should be trusted because it has been verified.

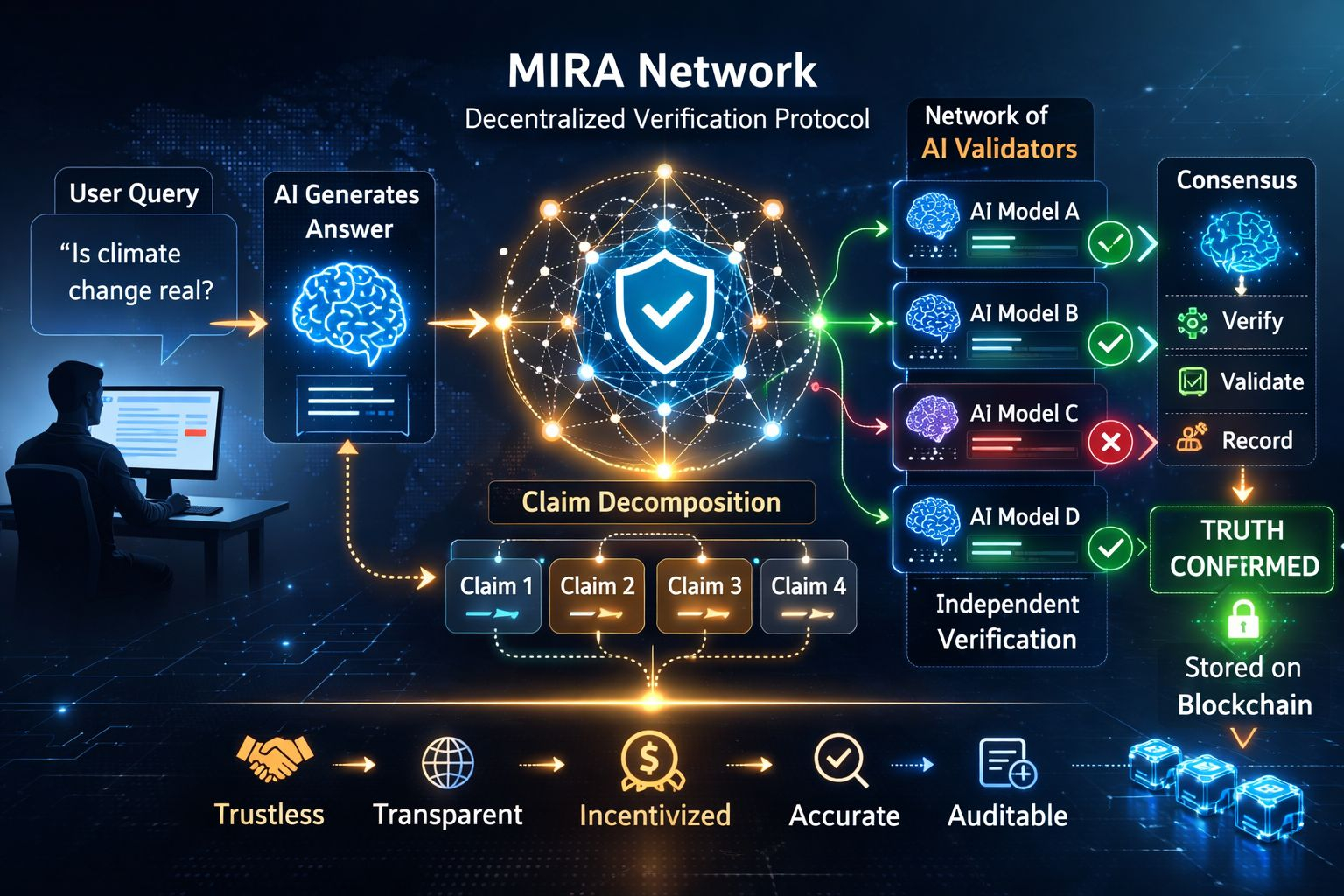

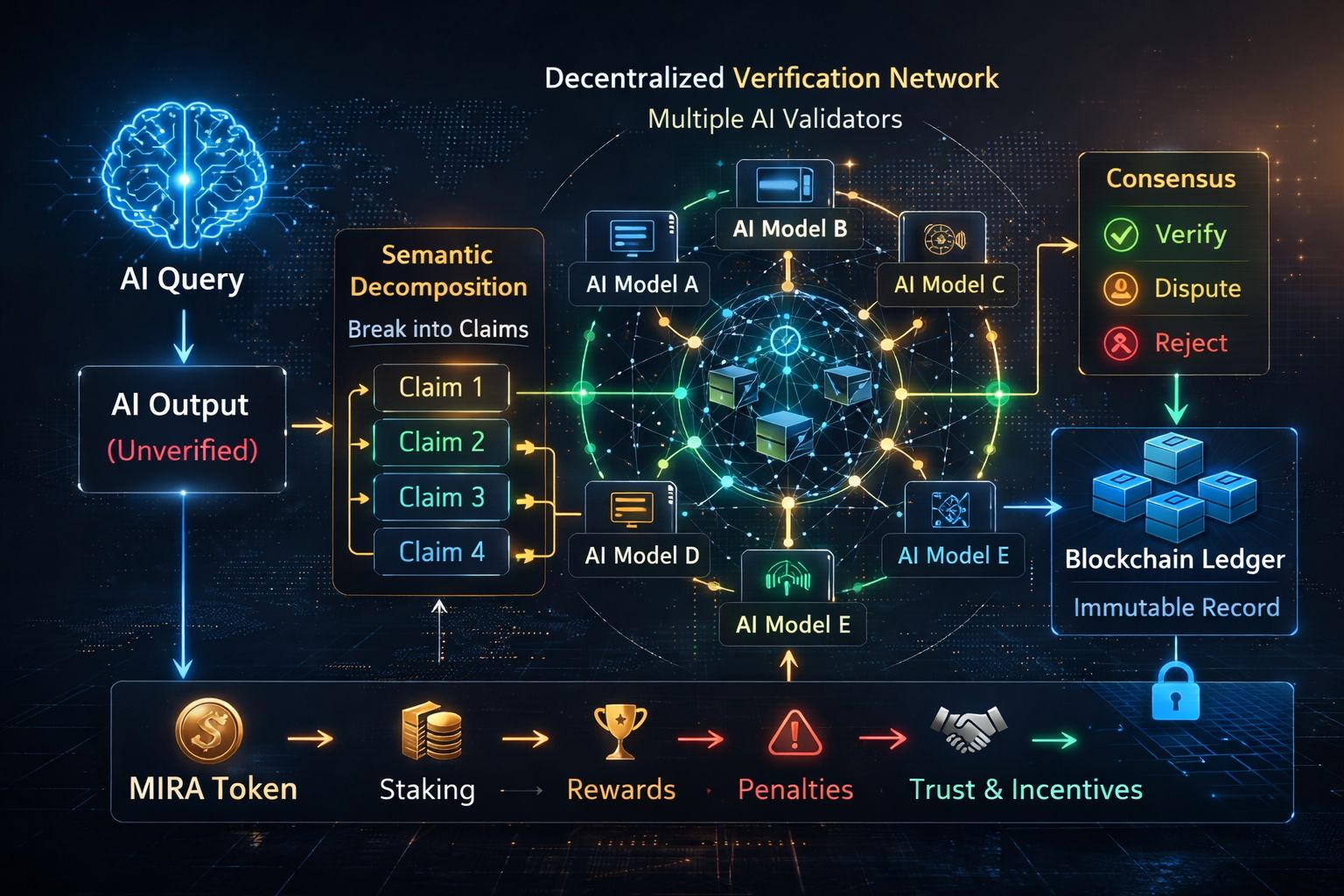

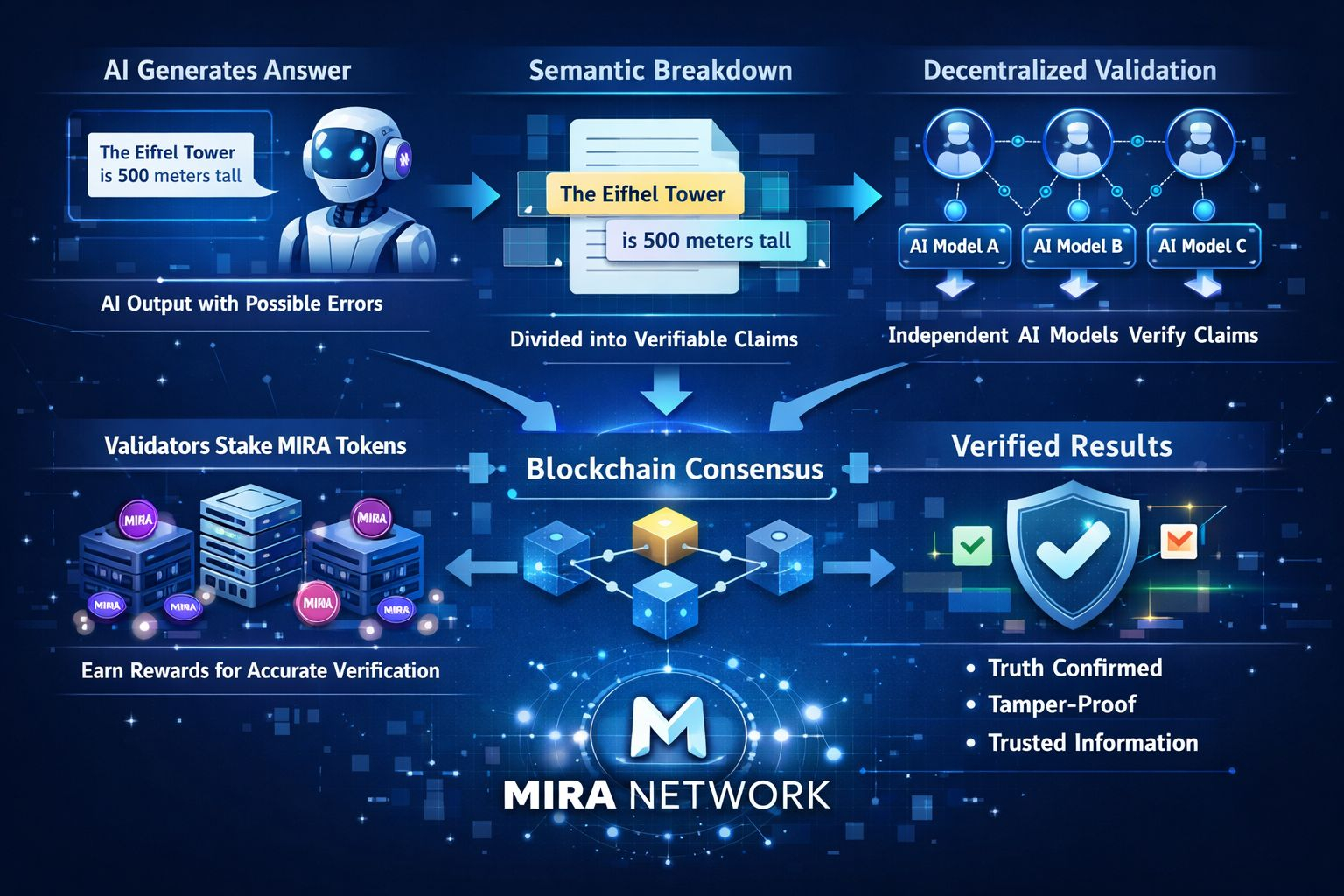

Mira treats every AI output as a claim that must prove itself. When an AI model generates a response, the system does not immediately accept it as final information. The text is broken into smaller statements. Each statement becomes a factual unit that can be examined. These units are sent into a decentralized verification process where multiple independent AI models analyze them.

Different models evaluate the same statement separately. Each one checks consistency with knowledge, logic, and data. Instead of one machine deciding, many machines participate. After analysis, a consensus mechanism determines whether the claim is reliable. Only verified information is accepted. Uncertain or disputed statements are rejected or marked unreliable.
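The voting step described above can be sketched in a few lines of Python. This is only an illustration, not Mira's actual implementation: the `consensus` function, the three stand-in "models", the three vote labels, and the two-thirds threshold are all assumptions made for the example.

```python
from collections import Counter
from typing import Callable, List

# Hypothetical verifier: takes one claim, returns "true", "false", or "unsure".
Verifier = Callable[[str], str]


def consensus(claim: str, verifiers: List[Verifier], threshold: float = 2 / 3) -> str:
    """Label one claim "verified", "rejected", or "uncertain" by supermajority vote."""
    if not verifiers:
        raise ValueError("at least one verifier is required")
    # Each verifier judges the claim separately; only the tallies are combined.
    votes = Counter(verify(claim) for verify in verifiers)
    total = len(verifiers)
    if votes["true"] / total >= threshold:
        return "verified"
    if votes["false"] / total >= threshold:
        return "rejected"
    return "uncertain"


# Stand-in verifiers for illustration only; real ones would be separate AI models.
KNOWN_FACTS = {"Water boils at 100 C at sea level."}


def strict_model(claim: str) -> str:
    return "true" if claim in KNOWN_FACTS else "false"


def cautious_model(claim: str) -> str:
    return "true" if claim in KNOWN_FACTS else "unsure"


def lenient_model(claim: str) -> str:
    return "true"


panel = [strict_model, cautious_model, lenient_model]
```

Calling `consensus("Water boils at 100 C at sea level.", panel)` yields a verified label because all three stand-ins agree, while a claim on which they split is marked uncertain rather than silently accepted.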

The most important part is that this process does not rely on a central authority. It uses blockchain infrastructure to record verification results. Once verified, the claim is stored permanently. It cannot be secretly edited later, and anyone can audit it. Information becomes traceable and accountable.
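One way to picture why a recorded result cannot be quietly edited is a hash-chained log, the basic idea behind blockchain storage. The sketch below is a single-machine toy under stated assumptions: the `Record` fields, the `append` and `audit` helpers, and the use of SHA-256 are illustrative choices, and nothing here reflects Mira's real data format or consensus layer.

```python
import hashlib
import json
from dataclasses import dataclass, replace
from typing import List

GENESIS = "0" * 64  # placeholder hash that precedes the first record


@dataclass(frozen=True)
class Record:
    claim: str
    verdict: str
    prev_hash: str
    record_hash: str


def _digest(claim: str, verdict: str, prev_hash: str) -> str:
    # Hash the record contents together with the previous record's hash.
    payload = json.dumps([claim, verdict, prev_hash]).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


def append(log: List[Record], claim: str, verdict: str) -> None:
    prev_hash = log[-1].record_hash if log else GENESIS
    log.append(Record(claim, verdict, prev_hash, _digest(claim, verdict, prev_hash)))


def audit(log: List[Record]) -> bool:
    """Recompute every hash; any edited record breaks the chain."""
    prev_hash = GENESIS
    for record in log:
        expected = _digest(record.claim, record.verdict, record.prev_hash)
        if record.prev_hash != prev_hash or record.record_hash != expected:
            return False
        prev_hash = record.record_hash
    return True


log: List[Record] = []
append(log, "Water boils at 100 C at sea level.", "verified")
append(log, "The Moon is made of cheese.", "rejected")

# Simulate a secret edit: flip a verdict without recomputing the hash.
tampered = list(log)
tampered[1] = replace(log[1], verdict="verified")
```

Here `audit(log)` succeeds and `audit(tampered)` fails, which is the property that lets anyone check the history. A real network adds the part this toy omits: many independent nodes holding copies, so that no single party can rewrite the whole chain.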

This changes the nature of AI interaction. Today, users trust AI based on the reputation of the company behind it. In Mira’s vision, users trust AI because the answer itself has proof. The trust shifts from organization to process.

The network operates through participants known as validators. These are node operators who help verify claims. To participate they must stake MIRA tokens. Their stake represents responsibility. If they behave honestly and verify accurately, they earn rewards. If they act dishonestly or attempt manipulation, they lose value. The economic structure encourages careful verification.
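The incentive logic can be illustrated with a toy settlement rule. The numbers and names below are assumptions for the sake of the example: the source does not state reward sizes or slashing rates, and treating disagreement with the final consensus as the trigger for a penalty is a simplification rather than a documented rule.

```python
from dataclasses import dataclass


@dataclass
class Validator:
    name: str
    stake: int  # staked tokens, in whole units for simplicity


def settle(
    validator: Validator,
    vote: str,
    consensus_verdict: str,
    reward: int = 1,        # hypothetical reward per matching vote
    slash_bps: int = 1000,  # hypothetical penalty in basis points (1000 = 10%)
) -> int:
    """Pay a reward for a vote that matches consensus, otherwise slash the stake."""
    if vote == consensus_verdict:
        validator.stake += reward
    else:
        validator.stake -= validator.stake * slash_bps // 10_000
    return validator.stake


honest = Validator("honest-node", stake=100)
careless = Validator("careless-node", stake=100)

settle(honest, vote="verified", consensus_verdict="verified")
settle(careless, vote="rejected", consensus_verdict="verified")
```

After one round the matching validator holds slightly more than it staked and the deviating one holds noticeably less, so repeated careless or manipulative voting becomes expensive.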

Tokenomics plays an important role beyond trading. The MIRA token functions as the fuel of the system. It is used to pay verification fees, reward validators, and participate in governance decisions. Holders can vote on network upgrades and parameters. A large portion of the supply supports ecosystem incentives, validator rewards, and development. By connecting economic value with truthful verification, the network aligns financial interest with accuracy.

Another feature of the network is semantic decomposition. Complex responses are not checked as a single block of text. Instead, they are separated into small factual components. This approach allows precise verification. If a long answer contains one incorrect claim, the system can identify the exact part instead of rejecting everything. This increases both reliability and usability.
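A rough sketch shows why checking small units is more useful than checking a whole answer. Everything here is a stand-in: real semantic decomposition would be far more sophisticated than the naive sentence split below, and the `decompose`, `check_answer`, and `lookup` names are hypothetical.

```python
import re
from typing import Callable, List, Tuple


def decompose(text: str) -> List[str]:
    """Naive stand-in for semantic decomposition: split on sentence endings."""
    parts = re.split(r"(?<=[.!?])\s+", text.strip())
    return [part for part in parts if part]


def check_answer(text: str, verify: Callable[[str], bool]) -> List[Tuple[str, bool]]:
    """Verify each extracted claim on its own and keep the per-claim result."""
    return [(claim, verify(claim)) for claim in decompose(text)]


# Toy verifier for illustration: a lookup against a tiny set of accepted facts.
ACCEPTED = {"Paris is the capital of France.", "Water is H2O."}


def lookup(claim: str) -> bool:
    return claim in ACCEPTED


answer = "Paris is the capital of France. The Moon is made of cheese. Water is H2O."
report = check_answer(answer, lookup)
flagged = [claim for claim, ok in report if not ok]
```

Only the middle sentence ends up in `flagged`, so an application could keep the two sound statements and mark or drop the bad one instead of discarding the whole response.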

Developers can integrate Mira through interfaces that route AI requests into the verification layer. Applications using this system could provide responses that have already been validated. A learning platform, research tool, financial assistant, or automated support service could show users not just an answer but a verified answer. The long-term vision is a trust layer for artificial intelligence, something operating quietly in the background of many digital services.

The roadmap reflects gradual expansion. Early stages involve test networks and validator onboarding. Later stages focus on ecosystem development and developer tools. The final stage aims for widespread integration across industries where accuracy matters most, such as education, healthcare information systems, and decision support tools.

However, the project faces realistic risks. Verification requires computational resources and coordination between models. The system must maintain efficiency so users do not experience slow responses. Adoption is another challenge. Companies may prioritize speed and cost over verification at first. Market speculation in the crypto space may also distract attention from long-term infrastructure goals.

There is also a philosophical limitation. Consensus improves reliability but does not guarantee absolute truth. If multiple models share similar biases, verification could still reflect imperfect data. Continuous improvement and diversity of validators remain necessary.
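A small worked calculation makes the point about shared bias concrete. It rests on a simplifying assumption that is not part of the project's description: an odd number $n$ of verifiers, each wrong on a given claim with the same probability $p$, deciding by simple majority. If their errors are independent, the chance that the majority is wrong is

$$
P(\text{majority wrong}) = \sum_{k=(n+1)/2}^{n} \binom{n}{k}\, p^{k} (1-p)^{n-k}.
$$

With $n = 3$ and $p = 0.1$ this gives $3(0.1)^2(0.9) + (0.1)^3 = 0.028$, well below the $0.1$ error rate of a single model. If the three models instead share the same blind spots so that they always fail together, the majority is wrong whenever one of them is, and the error rate stays at $0.1$. Consensus helps only to the extent that the verifiers' mistakes are uncorrelated.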

Despite these uncertainties, the emotional significance of the project is clear. Humanity is entering a period where knowledge is increasingly produced by machines. When machines become teachers, assistants, and advisors, reliability becomes more important than raw intelligence.

People do not only want fast answers. They want reassurance. They want the confidence that the information guiding their decisions is grounded in reality.

Mira Network attempts to build a system where AI must justify its output. The machine is no longer simply trusted. It is examined. It must pass a form of digital peer review before influencing human action.

If such a system becomes widespread, the relationship between humans and artificial intelligence could change. Instead of treating AI as a clever but unpredictable helper, people could rely on it for serious tasks. Automation in research, education, and business decisions would feel safer because verification stands behind it.

The deeper meaning of the project is not about blockchain or tokens. It is about restoring certainty in an age of overwhelming information. The internet created unlimited data but weakened shared truth. Artificial intelligence accelerated this problem by producing content faster than humans can check. Mira proposes a structure where information itself carries evidence.

In the future, when someone asks an AI an important question, they may no longer feel the need to cross-check multiple websites or consult several sources. The answer would arrive with confirmation built in. Trust would come from verification rather than assumption.

Artificial intelligence gave humanity knowledge at incredible speed. Mira Network is an attempt to make sure that speed does not come at the cost of reliability. Instead of a world where machines confidently guess, the project imagines a world where machines must demonstrate correctness.

The real value lies not in technology alone but in psychological comfort. When decisions depend on digital information, confidence becomes essential. A verified response does more than provide data. It removes hesitation.

In a time when truth often feels uncertain and information spreads faster than understanding, a system dedicated to checking knowledge before presenting it may become one of the most important layers of future technology.

Intelligence changes how we live.

Trust changes how we feel about living with it.