AI infrastructure has expanded rapidly, yet reliability remains a stubborn bottleneck. Models still hallucinate, biases creep in, and teams with high-value use cases hesitate to deploy without safeguards. Mira steps in with a blockchain-backed verification layer that routes outputs through multiple independent models for consensus-based checks. In the AI x Blockchain vertical, where oracles once became the trust layer for DeFi, Mira aims to do something similar for intelligence itself. The project isn't chasing bigger models; it's building the plumbing to make existing ones dependable. That's where things get interesting: as AI agents proliferate, a neutral, decentralized verifier could become essential infrastructure rather than a nice-to-have.

What is Mira?

Mira Network is a decentralized protocol for verifying AI outputs, aimed at hallucinations and general unreliability. It breaks generated content into discrete, verifiable claims, distributes them across a network of independent verifier nodes (often running different LLMs), and aggregates the results via consensus. Once agreement reaches a set threshold, the network issues a cryptographic certificate attesting that the output passed verification. Built on blockchain principles, it uses a hybrid PoW/PoS model in which nodes stake $MIRA to participate, face slashing for dishonesty, and earn rewards from verification fees. Mainnet launched in late 2025, and Mira targets domains like finance, healthcare, and legal, where single-model errors carry real costs. The system emphasizes collective intelligence over any one model's strength, producing auditable, tamper-proof results without centralized oversight.
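To make the claim-by-claim consensus step concrete, here is a minimal Python sketch. It is not Mira's implementation: the `Verdict` type, the `aggregate` and `certificate` functions, the two-thirds threshold, and the use of a SHA-256 digest as a stand-in for the certificate are all assumptions made for illustration. A real certificate would presumably involve node signatures and on-chain anchoring.

```python
import hashlib
import json
from collections import Counter
from dataclasses import dataclass
from fractions import Fraction


@dataclass(frozen=True)
class Verdict:
    """One verifier node's vote on one claim (hypothetical structure)."""
    node_id: str
    claim: str
    label: str  # "valid" or "invalid"


def aggregate(claims, verdicts, threshold=Fraction(2, 3)):
    """Return the consensus label per claim, or "no_consensus".

    The threshold is an assumed parameter, not a documented Mira value.
    """
    results = {}
    for claim in claims:
        votes = Counter(v.label for v in verdicts if v.claim == claim)
        total = sum(votes.values())
        if total == 0:
            results[claim] = "no_consensus"
            continue
        label, count = votes.most_common(1)[0]
        agreed = Fraction(count, total) >= threshold
        results[claim] = label if agreed else "no_consensus"
    return results


def certificate(results):
    """Illustrative stand-in: a SHA-256 digest over the sorted results."""
    payload = json.dumps(results, sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


claims = ["claim A", "claim B", "claim C"]
verdicts = [
    Verdict("node-1", "claim A", "valid"),
    Verdict("node-2", "claim A", "valid"),
    Verdict("node-3", "claim A", "valid"),
    Verdict("node-1", "claim B", "invalid"),
    Verdict("node-2", "claim B", "invalid"),
    Verdict("node-3", "claim B", "valid"),
    Verdict("node-1", "claim C", "valid"),
    Verdict("node-2", "claim C", "invalid"),
]

results = aggregate(claims, verdicts)
cert = certificate(results)
```

In this toy run, claim A is accepted unanimously, claim B is rejected by two of three nodes, and claim C splits evenly and falls below the threshold. Whether a single unresolved claim should block the whole output is a design choice this sketch leaves open.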

Focus: Where Mira Fits in the Emerging AI Infrastructure Layer

The AI stack is layering up fast: base models at the bottom, inference providers and tooling in the middle, applications on top. Mira carves out space as the verification middleware; think of it as the "trust oracle" for intelligence. It doesn't train models or host compute. Instead, it plugs in post-generation, adding a consensus step that filters outputs before they reach users or downstream agents. Developers can integrate via API to get verified responses, paying in $MIRA, while node operators contribute model diversity and earn from the process. This positions Mira below agent frameworks and above raw LLMs, addressing the gap where raw model power meets real-world deployment risk. In practice, it aims to enable autonomous AI in regulated sectors by attaching cryptographic certificates of consensus to outputs, potentially unlocking value currently held back by trust concerns.

Why this matters now: With AI agents moving toward autonomy and blockchains enabling tokenized incentives, the lack of a reliable verification primitive risks fragmented, insecure ecosystems. Mira's timing aligns with rising demand for verifiable outputs amid regulatory scrutiny and enterprise caution. Without such a primitive, the infrastructure layer stays incomplete, limiting scale in high-stakes verticals.

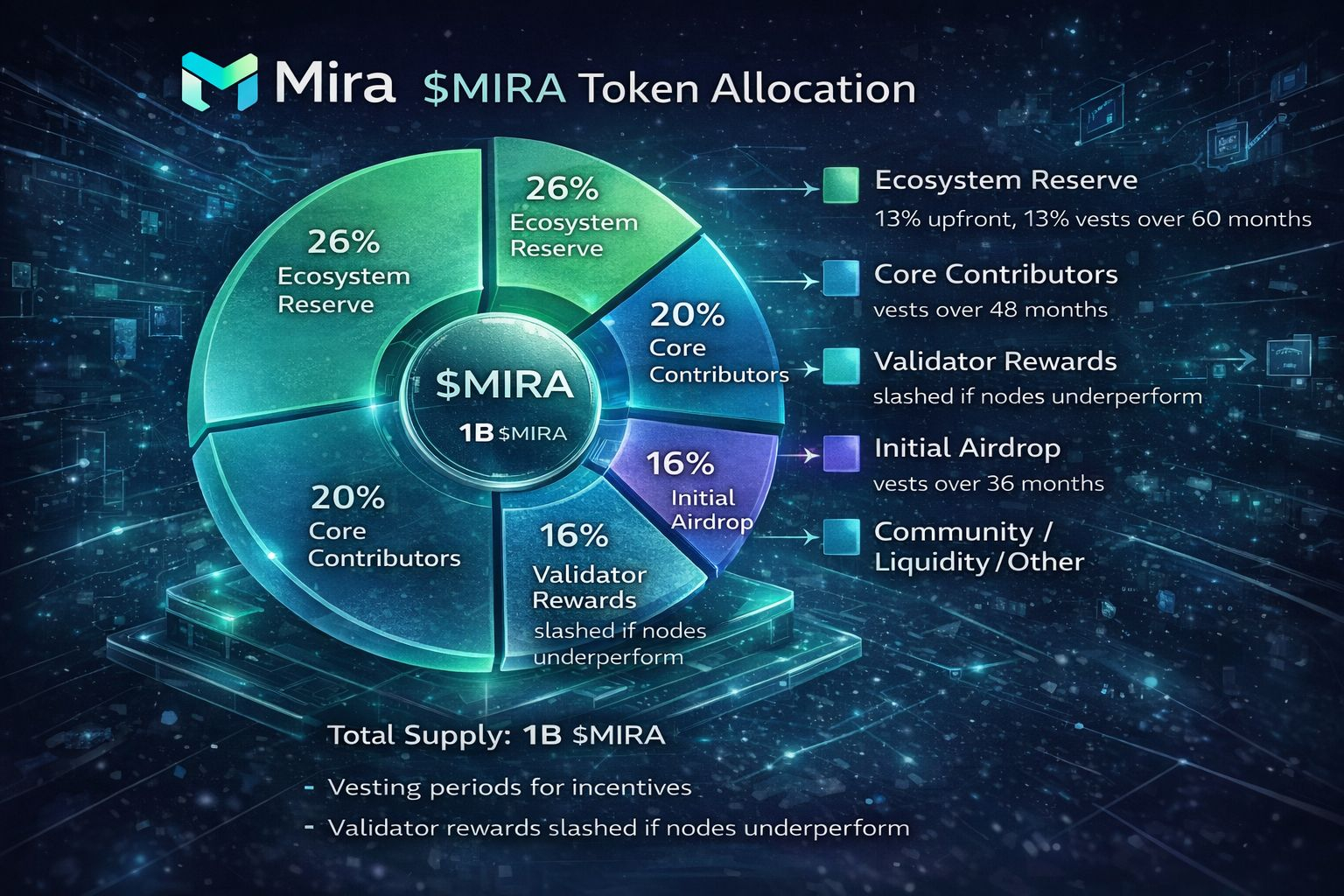

Tokenomics & Economic Design

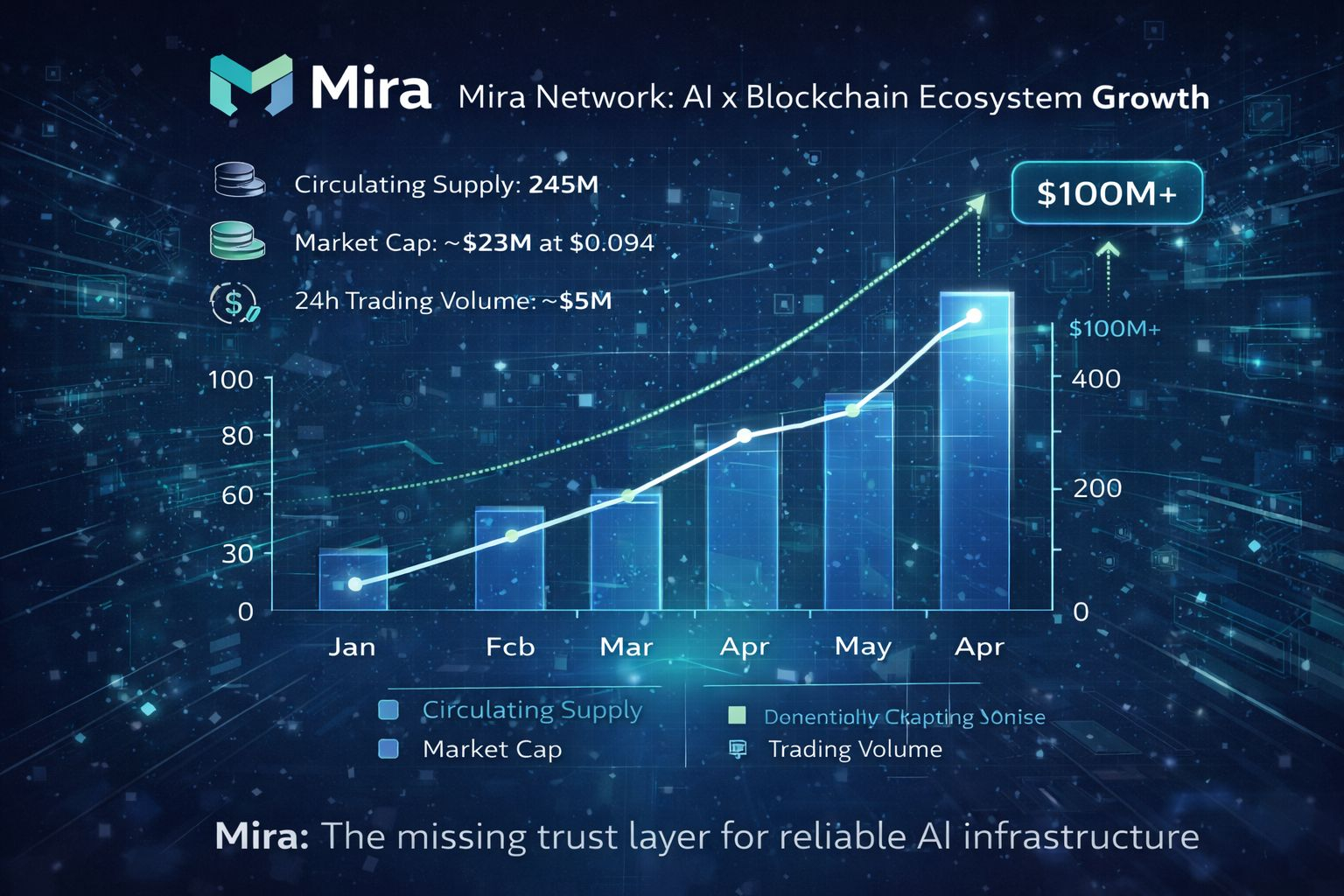

$MIRA has a fixed total supply of 1 billion tokens. Circulating supply sits around 245 million (roughly 24.5% of total, as of recent data), which at approximately $0.094 per token yields a market cap near $23 million. Allocation includes a 26% ecosystem reserve for grants and growth, 20% to core contributors (vested over years), 16% for future validator rewards, and a 6% initial airdrop, with the remaining 32% spread across community, liquidity, and other incentives.
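The figures above can be cross-checked with a few lines of Python. This is a sanity check on the numbers quoted in this section, not live market data; the fully diluted figure is derived here from the quoted price and total supply and does not come from an external source.

```python
# Figures quoted in this section (approximate, as of the data cited above).
TOTAL_SUPPLY = 1_000_000_000
CIRCULATING = 245_000_000
PRICE_USD = 0.094

# Named allocation buckets, in percent of total supply.
named_allocations = {
    "ecosystem reserve": 26,
    "core contributors": 20,
    "validator rewards": 16,
    "initial airdrop": 6,
}

circulating_share = CIRCULATING / TOTAL_SUPPLY
market_cap_usd = CIRCULATING * PRICE_USD
# Derived value: what the quoted price implies if all tokens circulated.
fully_diluted_usd = TOTAL_SUPPLY * PRICE_USD
# Share left for community, liquidity, and other incentives.
remaining_pct = 100 - sum(named_allocations.values())

print(f"Circulating share: {circulating_share:.1%}")
print(f"Market cap: ${market_cap_usd / 1e6:.2f}M")
print(f"Fully diluted: ${fully_diluted_usd / 1e6:.2f}M")
print(f"Unnamed allocation remainder: {remaining_pct}%")
```

The gap between market cap and the fully diluted figure is one quick way to see how much supply has yet to unlock.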

The token powers staking for node operation (required to participate in verification), pays for services like API verification, enables governance voting, and rewards honest verifiers through fee distribution. A back-of-the-envelope calculation: the 16% validator-reward allocation equals 160 million tokens. Assuming it is released linearly over a 48-month horizon post-TGE, roughly 3.3 million tokens would enter circulation each month from this bucket alone. That creates moderate but predictable supply pressure unless offset by growing fee capture. The 48-month linear schedule is an illustrative assumption applied to the public allocation figures.
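The emission arithmetic can be sketched as follows. The 48-month linear schedule is the same illustrative assumption used in the text, not a published release schedule, and `released_by` is a hypothetical helper name.

```python
TOTAL_SUPPLY = 1_000_000_000
CIRCULATING = 245_000_000      # approximate figure quoted above
VALIDATOR_SHARE_PCT = 16
MONTHS = 48                    # assumed linear release horizon

validator_bucket = TOTAL_SUPPLY * VALIDATOR_SHARE_PCT // 100
monthly_release = validator_bucket / MONTHS
# Monthly release relative to today's circulating supply.
monthly_vs_circulating = monthly_release / CIRCULATING


def released_by(month: int) -> float:
    """Cumulative tokens released after `month` months, capped at the bucket."""
    elapsed = max(0, min(month, MONTHS))
    return validator_bucket * elapsed / MONTHS


print(f"Validator bucket: {validator_bucket:,} tokens")
print(f"Monthly release: {monthly_release:,.0f} tokens")
print(f"Share of circulating supply per month: {monthly_vs_circulating:.2%}")
print(f"Released after 12 months: {released_by(12):,.0f} tokens")
```

Under these assumptions the bucket adds a little over 1% of current circulating supply per month, before counting unlocks from any other allocation.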

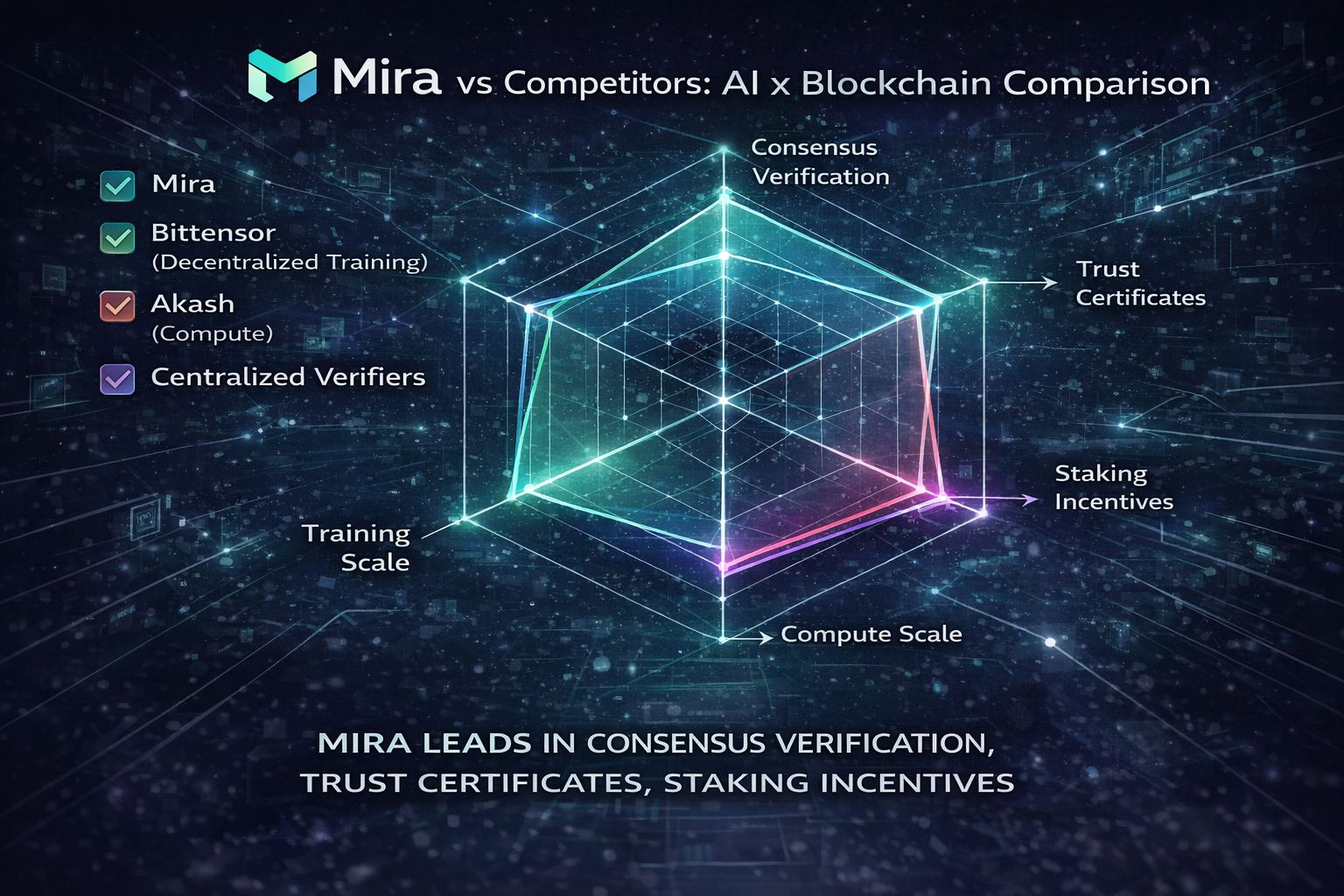

Competitive Landscape

Mira competes in a crowded AI x Blockchain field. Projects like Bittensor focus on decentralized training and inference markets, while Render and Akash handle compute. Closer peers include other verification-focused protocols, but few match Mira's consensus-of-diverse-models approach. Centralized alternatives (e.g., in-house fact-checking layers at providers such as OpenAI or Anthropic) offer speed but lack transparency. Mira differentiates with blockchain-secured certificates and economic alignment via staking and slashing, which may appeal to DeFi-adjacent apps that need auditable AI. Still, it trails in adoption compared to established players with billions in TVL or large compute networks.

Risks & Reality Check

Competition is intense: centralized providers iterate faster on latency, while other crypto projects scale compute incentives more aggressively. Token dilution remains a factor, since vesting unlocks from the contributor and ecosystem buckets could add steady sell pressure if verification fees lag. Execution risk looms large. Building diverse, performant verifier nodes at scale requires sustained incentives and model variety, and any consensus failure could erode trust. Market narratives also shift quickly. If reliability improves natively in base models, or if regulation favors closed systems, demand for decentralized verification might soften. Early node participation is promising but concentrated among incentivized early users.

Forward Outlook (6–12 Months)

Over the next 6–12 months, Mira could solidify its niche if verification volume grows with agent adoption. Partnerships in finance or healthcare might drive real usage, pushing transaction fees higher and rewarding stakers meaningfully. As mainnet matures, it should attract more diverse nodes, improving consensus robustness. Watch for integrations with popular LLM frameworks or agent platforms, where success could compound network effects. Challenges persist around latency and cost, but if Mira captures even a sliver of high-stakes AI queries, it is well positioned in an infrastructure layer hungry for trust primitives.

Conclusion

Mira offers a pragmatic take on one of AI's thorniest issues: making intelligence verifiable without reinventing the models themselves. By layering blockchain consensus atop collective AI, it carves a defensible spot in the infrastructure stack. Adoption will hinge on seamless integration and real fee generation, but the design feels aligned with where the vertical is heading.
