Let’s be honest: we’ve all seen AI "hallucinate." Whether it’s a chatbot making up facts or a model showing clear bias, "black box" AI has a massive trust problem. If we can’t trust its output, how can we ever let it run anything that matters?

Enter @Mira - Trust Layer of AI 🌐

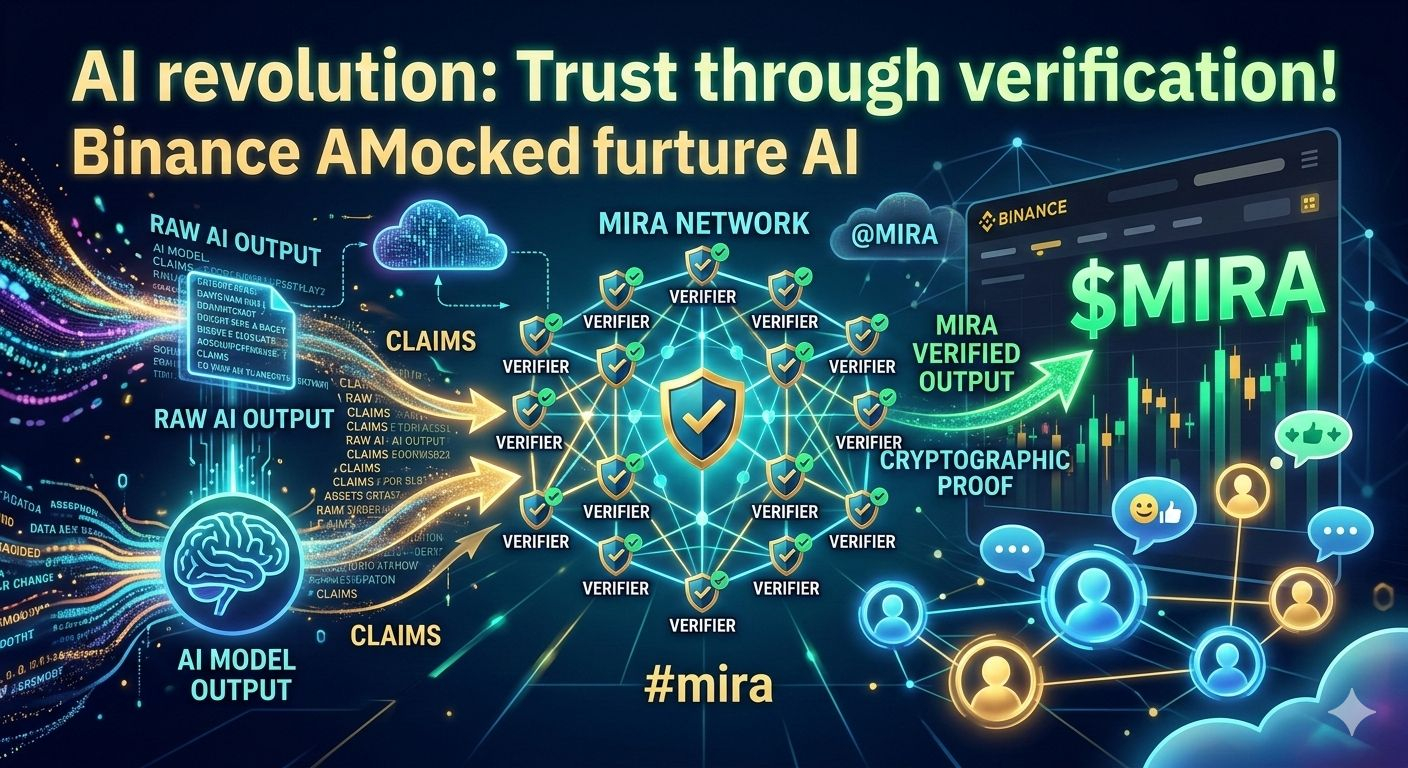

The Mira Network isn't just another AI project; it is the decentralized verification layer the world desperately needs. Instead of taking an AI's word for it, Mira breaks down complex outputs into verifiable claims. These are then cross-checked by a distributed network of independent models.
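Curious what "break it into claims, then cross-check" could look like? Here's a toy sketch in Python. It is not Mira's actual protocol: the sentence-based splitter, the `Verifier` type, the `make_verifier` helper, and the two-thirds threshold are all assumptions made up for illustration.

```python
from typing import Callable, Dict, List, Set

# Hypothetical verifier: takes one claim, returns True if it judges the claim correct.
Verifier = Callable[[str], bool]


def split_into_claims(output: str) -> List[str]:
    """Naive splitter (assumption): treat each sentence as one claim."""
    return [s.strip() for s in output.split(".") if s.strip()]


def verify_output(
    output: str, verifiers: List[Verifier], threshold: float = 2 / 3
) -> Dict[str, bool]:
    """Mark each claim as verified if enough independent verifiers agree."""
    if not verifiers:
        raise ValueError("at least one verifier is required")
    results: Dict[str, bool] = {}
    for claim in split_into_claims(output):
        votes = sum(1 for verify in verifiers if verify(claim))
        results[claim] = votes / len(verifiers) >= threshold
    return results


def make_verifier(known_true: Set[str]) -> Verifier:
    """Stand-in for an independent model: accepts only claims it 'knows'."""
    return lambda claim: claim in known_true


# Three stand-in verifiers; the second one has picked up a false belief.
verifiers = [
    make_verifier({"Paris is the capital of France"}),
    make_verifier({"Paris is the capital of France", "The Moon is made of cheese"}),
    make_verifier({"Paris is the capital of France"}),
]

report = verify_output(
    "Paris is the capital of France. The Moon is made of cheese.", verifiers
)
for claim, verified in report.items():
    print(f"{'VERIFIED' if verified else 'REJECTED'}: {claim}")
```

The point of the toy: one verifier with a wrong belief can't push a false claim through on its own, because the claim needs agreement from most of the group.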

🛡️ Why This Matters for You:

Truth via Consensus: An output counts as verified only when independent models agree on its claims, and Mira uses blockchain technology to record that result cryptographically.

Economic Incentives: Validators are rewarded for accuracy, so you don't have to trust any single party, and made-up facts and model errors are far more likely to get caught.

The Future of Autonomy: For AI to handle finance, healthcare, or legal tasks, its outputs need independent verification, and that is exactly what the Mira Network and $MIRA are built around.

We are moving away from centralized control and toward a future where AI is held accountable by the community. This is where tech meets transparency, and the potential is limitless!

🔥 Join the Conversation!

The AI narrative is shifting from "Generation" to "Verification." Don’t just watch the revolution. Be part of it.

Are you bullish on Decentralized AI?

What’s the craziest AI "hallucination" you’ve ever seen?

Drop your thoughts in the comments below! Let's discuss the future of the $MIRA ecosystem. 👇