Inshallah, this is a good coin. I got drawn into decentralized AI verification after reading about how different networks try to judge AI outputs without putting all the power in one person's hands. The whole idea of spreading out verification, letting a group rather than a single authority decide what counts, feels like a natural step as AI weaves itself deeper into digital life.

One big challenge: it's tough to check AI output transparently. Most old-school systems lean on centralized reviewers or scoring models that nobody really gets to see, and that breeds trust problems. If you can't see how something is judged, how do you know it's fair or even accurate? It's like grading tests when only one teacher ever sees the answers: no one else can double-check.

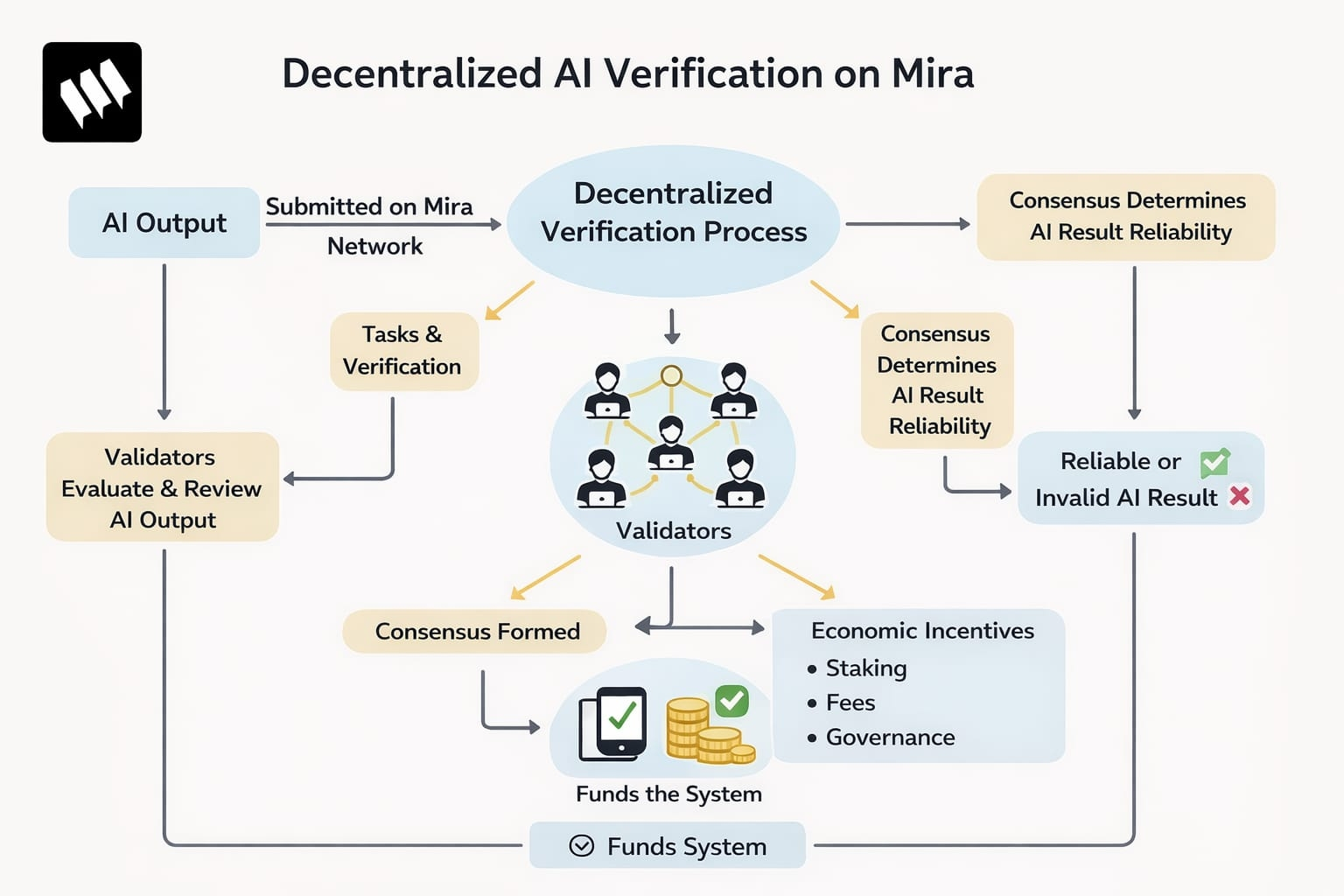

Mira flips that on its head. Here, verification happens out in the open, with a group of people (validators) each reviewing the AI's work. Instead of trusting one person's call, the network turns verification into a group effort. Reliability doesn't come from authority alone; it comes from many independent checks, all stitched together.

The process centers on validators, who work through a series of structured tasks. Each one examines the submitted outputs, and through back-and-forth checks they build consensus about whether a response is trustworthy. If enough validators agree, the response is considered reliable. This creates a new kind of trust: one based on broad agreement, not on someone's title or power.
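To make "if enough validators agree" a bit more concrete, here is a minimal sketch of a threshold-based consensus rule. It assumes each validator returns a simple yes/no verdict and that "enough" means a two-thirds supermajority; the `Verdict` type, the `reach_consensus` function, and the 2/3 threshold are all hypothetical illustrations, not Mira's actual protocol or API.

```python
from dataclasses import dataclass
from fractions import Fraction
from typing import List


@dataclass
class Verdict:
    # Hypothetical record: one validator's yes/no judgment on one AI output.
    validator_id: str
    is_valid: bool


def reach_consensus(verdicts: List[Verdict], threshold: Fraction = Fraction(2, 3)) -> str:
    """Classify an AI output from independent validator verdicts.

    Assumption: a simple supermajority rule. If the share of "valid" votes
    meets the threshold, the output is accepted; if the share of "invalid"
    votes meets it, the output is rejected; otherwise there is no consensus.
    """
    if not verdicts:
        return "no_consensus"
    total = len(verdicts)
    approvals = sum(1 for v in verdicts if v.is_valid)
    # Fractions keep the threshold comparison exact (no float rounding).
    if Fraction(approvals, total) >= threshold:
        return "reliable"
    if Fraction(total - approvals, total) >= threshold:
        return "unreliable"
    return "no_consensus"


votes = [Verdict("a", True), Verdict("b", True), Verdict("c", False)]
print(reach_consensus(votes))  # 2 of 3 approve, which meets the 2/3 threshold
```

Returning three outcomes instead of two is a deliberate choice in this sketch: a split vote stays "no consensus" rather than being forced into accept or reject.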

Mira's network also brings in economic incentives. Participants can stake assets to show they're serious, and fees keep the system running by compensating the people who do the verification work. Governance rules shape how verification evolves over time: who gets to decide, what counts as consensus, and so on.
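One common way staking and fees can fit together in networks like this is stake-weighted voting plus pro-rata fee payouts. The sketch below assumes both: each validator's vote counts in proportion to its stake, and the fee for a verification is split by stake among validators who voted with the final outcome. The function names, the 2/3 threshold, and the payout rule are hypothetical assumptions for illustration, not Mira's documented mechanism.

```python
from fractions import Fraction
from typing import Dict, Optional


def stake_weighted_outcome(
    votes: Dict[str, bool],
    stakes: Dict[str, int],
    threshold: Fraction = Fraction(2, 3),
) -> Optional[bool]:
    """Return True/False if stake-weighted votes reach the threshold, else None.

    Assumption (hypothetical): each vote counts in proportion to its stake.
    """
    total_stake = sum(stakes[v] for v in votes)
    if total_stake == 0:
        return None
    yes_stake = sum(stakes[v] for v, vote in votes.items() if vote)
    if Fraction(yes_stake, total_stake) >= threshold:
        return True
    if Fraction(total_stake - yes_stake, total_stake) >= threshold:
        return False
    return None  # no stake-weighted supermajority either way


def distribute_fee(
    fee: int,
    votes: Dict[str, bool],
    stakes: Dict[str, int],
    outcome: bool,
) -> Dict[str, Fraction]:
    """Split a verification fee among validators who voted with the outcome.

    Assumption (hypothetical): payouts are pro rata to stake, and validators
    who voted against the outcome receive nothing in this sketch.
    """
    winners = [v for v, vote in votes.items() if vote == outcome]
    winning_stake = sum(stakes[v] for v in winners)
    if winning_stake == 0:
        return {}
    return {v: Fraction(fee * stakes[v], winning_stake) for v in winners}


votes = {"a": True, "b": True, "c": False}
stakes = {"a": 50, "b": 30, "c": 20}
outcome = stake_weighted_outcome(votes, stakes)  # 80 of 100 staked units say "valid"
if outcome is not None:
    print(distribute_fee(100, votes, stakes, outcome))
```

In this example, "a" and "b" hold 80% of the stake and vote "valid", so the output is accepted and the 100-unit fee is split 62.5 / 37.5 between them. Exact fractions are used so the payouts always sum to the full fee.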

Still, decentralized AI verification is new ground. Nobody really knows yet how far these systems can scale as more data flows in and tasks grow more complex. The model is promising, but its limits are still unfolding.