I keep coming back to a simple question when I read about Fabric’s onchain attestations: if a robot does something in the world, how would anyone else know what really happened? My first instinct is not to think about tokens or blockchains at all. I think about receipts, and about the basic human need to be able to point to something solid after the fact. Fabric’s published materials describe a system in which robots have onchain identities, tasks are settled onchain, early deployments collect structured operational data, and later phases tie incentives to verified task execution and data submission. In that setup, an attestation is basically a signed and checkable claim attached to a robot’s work history: this machine was assigned this task, it reported this result, validators or other checks looked at it, and the network recorded the outcome in a form other people can inspect later. That is a more modest idea than saying the chain knows the truth, yet it is also more useful for the harder and more ordinary question of how people keep track of what happened.
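To make the "signed and checkable claim" idea concrete for myself, here is a minimal sketch of what such a record could look like. Everything in it is my own assumption rather than Fabric's actual schema: the `Attestation` fields, the function names, and especially the use of an HMAC with a shared key as a stand-in for a signature. A real network would presumably use an asymmetric signature tied to the robot's onchain identity, so that anyone could verify without holding a secret.

```python
import hashlib
import hmac
import json
from dataclasses import asdict, dataclass, replace


@dataclass(frozen=True)
class Attestation:
    """Hypothetical record shape; not taken from Fabric's materials."""
    robot_id: str
    task_id: str
    reported_result: str
    validator_verdict: str
    recorded_at: int  # Unix timestamp


def digest(att: Attestation) -> bytes:
    # Canonical JSON (sorted keys, fixed separators) so the same record
    # always hashes to the same bytes.
    payload = json.dumps(asdict(att), sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(payload.encode("utf-8")).digest()


def sign(att: Attestation, key: bytes) -> str:
    # Assumption: HMAC stands in for a real asymmetric signature.
    return hmac.new(key, digest(att), hashlib.sha256).hexdigest()


def verify(att: Attestation, signature: str, key: bytes) -> bool:
    # Constant-time comparison of the recomputed and claimed signatures.
    return hmac.compare_digest(sign(att, key), signature)


if __name__ == "__main__":
    key = b"demo-key-not-a-real-secret"
    att = Attestation("robot-7", "task-42", "delivered", "passed", 1_700_000_000)
    sig = sign(att, key)
    print(verify(att, sig, key))                                      # original record
    print(verify(replace(att, reported_result="failed"), sig, key))  # edited record
```

The point of the sketch is the second check: once the record is edited after the fact, the old signature no longer matches, which is the "receipt" property I care about. It says nothing about whether the reported result was true in the first place.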

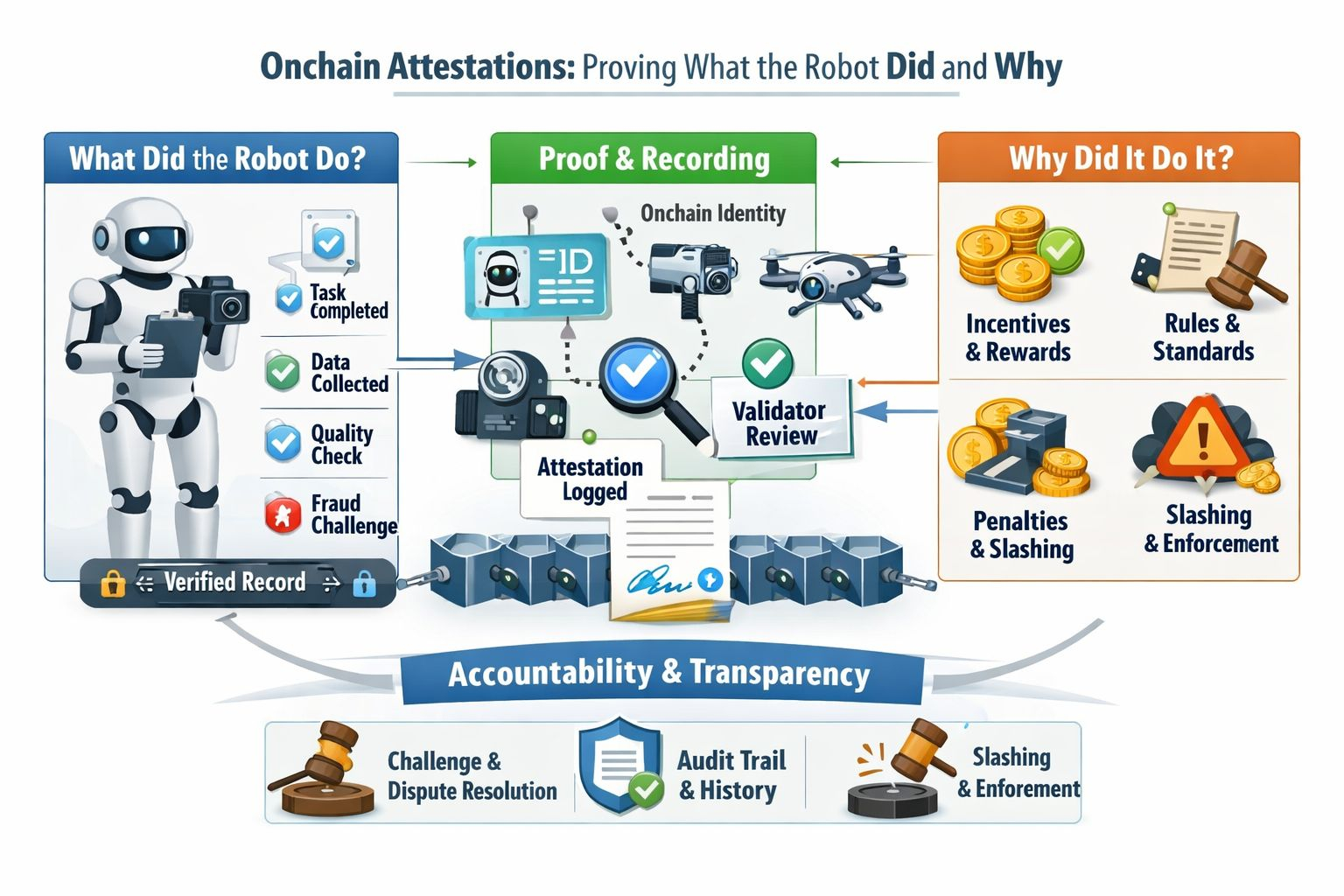

What I find helpful is to separate two questions that tend to get blurred together. They are related, but they are not the same. “What did the robot do?” is really about proof that something actually happened. Did it finish the task, stay online, provide the promised data, do the compute work, pass quality checks, or get flagged in a fraud challenge? “Why did it do it?” is a separate question. That takes us into the logic around the work itself, including what the robot was asked to do, how payment was tied to the outcome, what standards it was expected to meet, and what consequences it faced if it cut corners or came up short. Fabric’s whitepaper is interesting here because it tries to bind rewards to specific and verified contributions instead of vague claims of future value. It says contribution scores can come from completed robot tasks, verified training data, compute backed by cryptographic attestation, and quality attestations from validators, while quality scores can be adjusted by validation outcomes and user feedback. In other words, the record is meant to explain both the act itself and the incentive structure around it, so that the behavior does not float free from the reasons that produced it.
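A toy version of that scoring logic helps me see how the two questions connect. This is only a sketch under my own assumptions: the weights, the averaging rule for quality, and every name below are invented for illustration, and nothing here is the whitepaper's actual formula. It only mirrors the structure described above, with four kinds of verified contribution feeding one score and a quality factor adjusted by validation outcomes and user feedback.

```python
from dataclasses import dataclass

# Hypothetical weights, chosen only to make the arithmetic easy to follow.
WEIGHTS = {
    "completed_tasks": 1.0,
    "verified_data_batches": 0.5,
    "attested_compute_hours": 0.25,
    "validator_attestations": 0.5,
}


@dataclass(frozen=True)
class Contributions:
    completed_tasks: int
    verified_data_batches: int
    attested_compute_hours: float
    validator_attestations: int


def contribution_score(c: Contributions) -> float:
    # Weighted sum over the four contribution types named in the text.
    return (
        WEIGHTS["completed_tasks"] * c.completed_tasks
        + WEIGHTS["verified_data_batches"] * c.verified_data_batches
        + WEIGHTS["attested_compute_hours"] * c.attested_compute_hours
        + WEIGHTS["validator_attestations"] * c.validator_attestations
    )


def quality_score(validation_pass_rate: float, user_rating: float) -> float:
    # Assumption: both inputs are normalized to [0, 1] and simply averaged.
    for value in (validation_pass_rate, user_rating):
        if not 0.0 <= value <= 1.0:
            raise ValueError("quality inputs must be in [0, 1]")
    return (validation_pass_rate + user_rating) / 2


def reward_weight(c: Contributions, validation_pass_rate: float, user_rating: float) -> float:
    # Contribution scaled by quality: unverified or low-quality work earns less.
    return contribution_score(c) * quality_score(validation_pass_rate, user_rating)
```

With these made-up weights, a robot with 10 completed tasks, 4 verified data batches, 8 attested compute hours, and 2 validator attestations gets a contribution score of 10 + 2 + 2 + 1 = 15. If it passes every validation but users rate it 0.5, the quality factor is 0.75 and the reward weight drops to 11.25. The numbers are arbitrary; the shape is what matters, because the record of what was done and the reason it was worth doing end up in the same calculation.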

I do not think this idea would have hit the same way five years ago, because the context around it has changed. Accountability feels more immediate now and less like a distant policy question. Reuters noted in January that CES 2026 was full of what people are calling physical AI, with robotics, humanoids, and autonomous driving presented as the next phase after software AI. Around the same time, NIST published a concept paper focused on software and AI agent identity and authorization, and it explicitly called out logging, transparency, and provenance as practical needs for systems that can take actions with limited human supervision. A recent survey on autonomous agents and blockchains makes a similar point from another direction by arguing that, once agents move from observation to execution, auditability, policy enforcement, and recovery stop looking like optional extras and start looking like basic infrastructure. That is why Fabric’s idea gets attention now rather than earlier: the conversation is shifting away from whether a model can act at all and toward how we govern its actions once they touch real systems.

I also think the word “attestation” sounds heavier than it needs to. In plain language, it just means giving other people evidence they can verify instead of asking them to take your word for it. Google’s confidential computing documentation puts it neatly by describing attestation as a digital verification mechanism used to establish trust in an execution environment. Fabric is applying that general instinct to robots and robot work. The chain is not there because every sensor reading belongs on a public ledger, and I find it helpful to keep that distinction in view, because otherwise the whole thing starts to sound stranger than it is. It is there to anchor the parts that matter for accountability: identity, timing, settlement, challenge results, quality claims, and a durable history that is harder to quietly rewrite after the fact. I used to think that was mostly a technical flourish, but the more I look at it, the more it feels like an attempt to solve a social problem: how operators, users, and validators can share one version of events when no single company should get the last word.

Still, I would not pretend this makes the truth automatic. Fabric’s own whitepaper is fairly candid that verifying every task would be too expensive, which is why it proposes challenge-based verification, validator monitoring, dispute resolution, onchain heartbeats for availability, and slashing when fraud or poor performance is proven. That matters because it admits the hard part: a blockchain can preserve claims and rulings, but it cannot magically rescue bad sensors, weak incentives, or a flawed checking process. Garbage can still become permanent garbage, and that remains a real risk. So when I think about Fabric’s onchain attestations, I do not read them as a final answer, and I do not think that is their value anyway. I read them as an attempt to make robot behavior legible, contestable, and economically accountable at the moment when autonomy is moving out of demos and into systems that touch money, labor, and safety. To me, that is the real point. It is not about proving everything forever so much as making it much harder for the phrase “the robot did this” to mean “please just trust us.”
