When I first came across Mira Network while scrolling through the latest crypto-AI buzz, I have to admit, my expectations were pretty low. Another project promising to revolutionize AI? We've heard that before. But as I started reading up on it, peeling back the layers, I realized this one was approaching things from a refreshingly practical angle – not with grand promises, but with a system designed to actually solve the mess of unreliable AI outputs.

You know how AI can be a double-edged sword? It's incredibly powerful, producing answers faster than we can blink, but it's riddled with issues like hallucinations – those moments when it confidently spits out total nonsense – or biases that creep in from training data. In everyday stuff, that's annoying. But in serious areas like finance, healthcare, or even legal decisions, it's a disaster waiting to happen. You can't have an autonomous system making calls on your investments or giving medical advice if the only way to double-check its work is to trust some central authority. Crypto was supposed to fix centralization problems, yet AI verification has been stuck in the old world, relying on big tech gatekeepers.

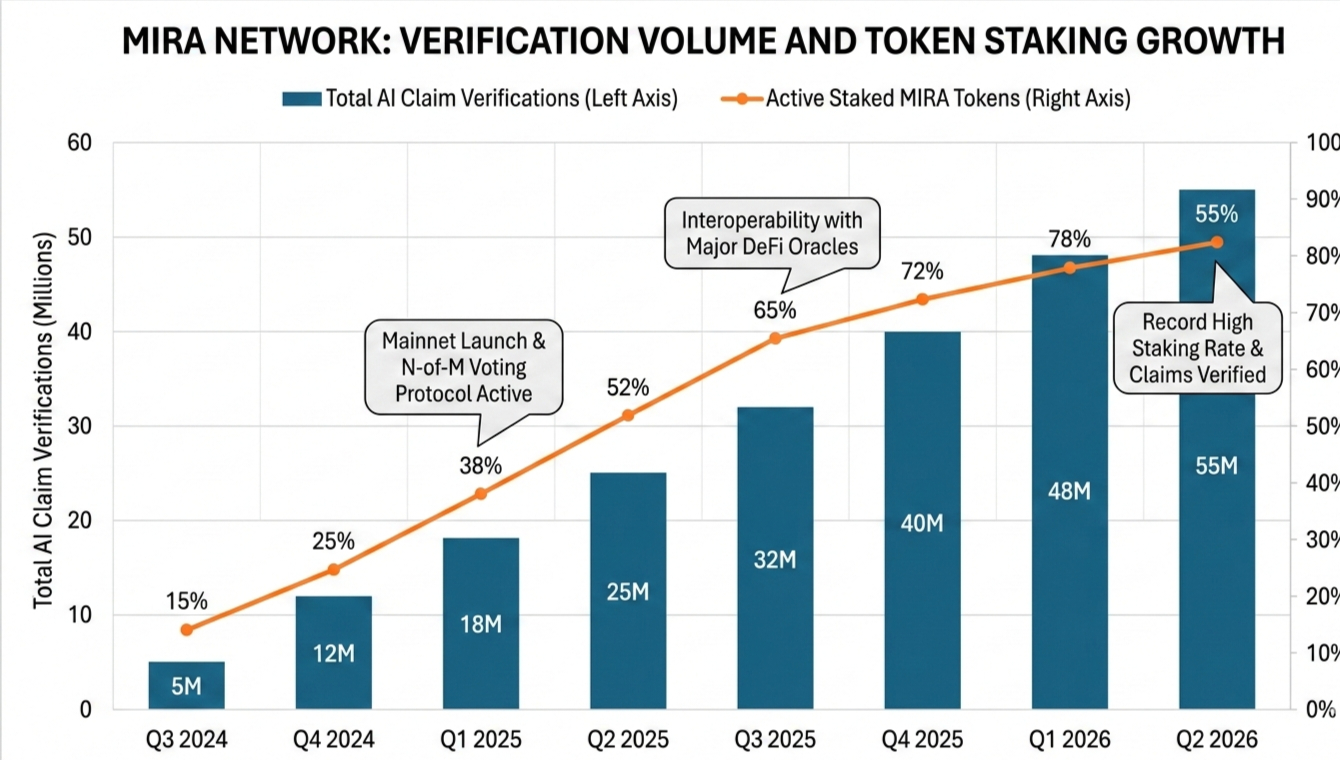

That's where Mira Network comes in, and it's what hooked me. It's a decentralized protocol built from the ground up to verify AI outputs using blockchain consensus. The idea is straightforward: take a complex AI response, break it down into bite-sized, verifiable claims – think individual facts, logic steps, or data points – and then farm those out to a bunch of independent AI models across a network. These verifiers stake their own MIRA tokens to join in, checking each claim for accuracy. Get it right, you earn rewards; mess up, you risk getting slashed. It's all tied together with cryptographic proofs and consensus mechanisms, so the final output isn't just an answer – it's a tamper-evident certificate you can audit anytime.
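To make the stake, reward, and slash loop concrete, here is a minimal Python sketch of how a single claim might be settled. Everything in it is my own illustration, not Mira's actual code: the `Verifier` class, the `settle_claim` function, the simple-majority rule, the reward of 1 token, and the 10% slash rate are all assumptions. The SHA-256 digest just stands in for the idea of an auditable certificate.

```python
import hashlib
import json
from dataclasses import dataclass


@dataclass
class Verifier:
    name: str
    stake: float  # hypothetical amount of MIRA this verifier has locked up


def settle_claim(claim, votes, verifiers, reward=1.0, slash_rate=0.1):
    """Settle one claim by strict majority, then reward or slash each voter.

    `votes` maps verifier name -> True (claim holds) or False (it does not).
    The reward and slash_rate values are illustrative assumptions.
    """
    yes_votes = sum(1 for vote in votes.values() if vote)
    accepted = yes_votes * 2 > len(votes)  # ties count as rejected

    for name, vote in votes.items():
        verifier = verifiers[name]
        if vote == accepted:
            verifier.stake += reward  # agreed with the outcome
        else:
            verifier.stake -= verifier.stake * slash_rate  # disagreed

    # Hash the settled record so later tampering would change the digest.
    record = {"claim": claim, "accepted": accepted, "votes": votes}
    payload = json.dumps(record, sort_keys=True).encode()
    return accepted, hashlib.sha256(payload).hexdigest()


verifiers = {name: Verifier(name, 100.0) for name in ("a", "b", "c")}
accepted, certificate = settle_claim(
    "Paris is the capital of France",
    {"a": True, "b": True, "c": False},
    verifiers,
)
print(accepted, certificate[:16], [v.stake for v in verifiers.values()])
```

In this toy run the two verifiers who side with the majority gain a token each, and the dissenter loses a tenth of their stake. A real protocol would need much more, such as signatures and protection against colluding verifiers.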

What really struck me as clever is how it sidesteps the usual pitfalls. Instead of depending on one dominant model like GPT, Mira spreads the work across competing models, so their cross-checks can catch biases that any single model would miss. Privacy holds up too – no single node sees the full response, just fragments. And the economic incentives? They're designed so that honest verification pays better than gaming the system. I've looked at other verification plays, but Mira's focus on scalable blockchain consensus, with options like N-of-M voting, makes it feel ready for prime time, not just a proof-of-concept.
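N-of-M voting is easy to picture in code: a claim passes when at least N of the M verifiers assigned to it agree. The sketch below is just my reading of that idea, with hypothetical function names (`n_of_m_verdict`, `verify_response`), and it assumes a response only counts as verified when every one of its claims passes. I don't know the exact rule Mira uses.

```python
def n_of_m_verdict(votes: list[bool], n: int) -> bool:
    """Return True when at least n of the m = len(votes) verifiers approve."""
    m = len(votes)
    if not 1 <= n <= m:
        raise ValueError(f"need 1 <= n <= m, got n={n}, m={m}")
    return sum(votes) >= n  # True counts as 1, False as 0


def verify_response(claim_votes: dict[str, list[bool]], n: int) -> bool:
    """Assumed rule: the whole response passes only if every claim passes."""
    return all(n_of_m_verdict(votes, n) for votes in claim_votes.values())


# 3-of-5 voting on two claims pulled from one AI response.
claim_votes = {
    "claim 1": [True, True, True, False, False],   # 3 approvals: passes
    "claim 2": [True, True, False, False, False],  # 2 approvals: fails
}
print(n_of_m_verdict(claim_votes["claim 1"], 3))  # True
print(verify_response(claim_votes, 3))            # False
```

Raising N makes the check stricter but also makes it easier for a few slow or dishonest verifiers to block a valid claim, which is the trade-off a configurable threshold lets each use case tune.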

Looking ahead, the potential here seems solid. AI is embedding itself everywhere in web3 – DeFi oracles, DAO decisions, even NFT authenticity checks – and all of it needs reliable verification to scale. If Mira nails adoption, especially with token staking driving network effects, it could become the go-to layer. Sure, there are hurdles: coordinating thousands of nodes without slowing things down, or getting enough diverse AI models onboard in a crowded field. Competition from faster centralized alternatives won't vanish overnight either. But from what I've seen in early updates, they're moving methodically, which gives me confidence.

Diving into Mira left me rethinking how we even measure trust in AI. It's not the flashiest project out there, but in a world drowning in hype, one that quietly builds reliability might be the smartest bet. I'm keeping an eye on it – who knows, this could be the foundation for how we finally make AI safe for the big leagues.
