As AI systems move from assistants to decision-makers, the real question is no longer capability — it is accountability. Autonomous agents can analyze markets, trigger transactions, manage compliance workflows, and even coordinate capital. But when these systems act independently, small inaccuracies are no longer harmless errors. They become operational risks.

Most AI architectures still treat model output as a finished product. If it sounds coherent, it is accepted. If it looks structured, it is deployed. That assumption creates a dangerous gap between fluency and factual reliability. Confidence scales faster than verification.

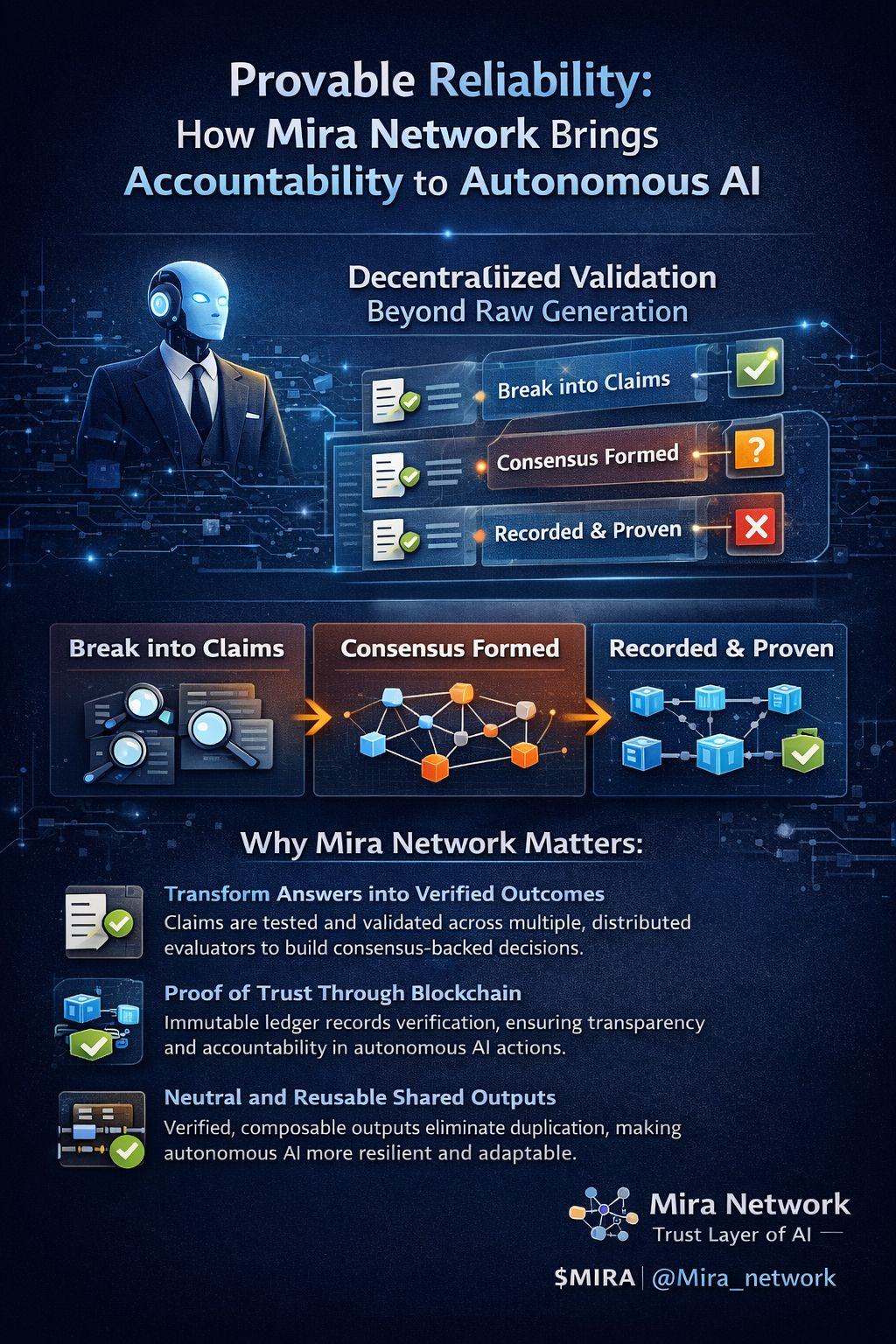

Mira Network approaches this differently. Instead of accepting an output as a single authoritative response, the protocol decomposes it into granular claims. Each claim becomes independently verifiable, challengeable, and measurable. Validation does not rely on one model’s authority — it emerges through decentralized consensus across multiple evaluators. What survives scrutiny is recorded. What fails is rejected.
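The decompose-then-vote idea can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not Mira's implementation. Claims are split naively on sentence boundaries. Evaluators are plain callables standing in for independent models. The two-thirds supermajority threshold is an arbitrary choice. The names `decompose`, `verify`, and `ClaimResult` are hypothetical.

```python
from dataclasses import dataclass
from typing import Callable, List

# Hypothetical evaluator type: receives one claim, returns True if it judges the claim valid.
Evaluator = Callable[[str], bool]


@dataclass
class ClaimResult:
    claim: str
    votes_for: int
    votes_total: int
    accepted: bool


def decompose(output: str) -> List[str]:
    """Naive decomposition (an assumption): treat each sentence as one claim."""
    return [s.strip() for s in output.split(".") if s.strip()]


def verify(output: str, evaluators: List[Evaluator], threshold: float = 2 / 3) -> List[ClaimResult]:
    """Ask every evaluator about every claim; accept a claim only if the share
    of approving votes meets the (assumed) supermajority threshold."""
    results = []
    for claim in decompose(output):
        votes = sum(1 for judge in evaluators if judge(claim))
        accepted = votes / len(evaluators) >= threshold
        results.append(ClaimResult(claim, votes, len(evaluators), accepted))
    return results


# Toy evaluators for illustration only: two consult a tiny fact set, one approves everything.
FACTS = {"Paris is the capital of France"}


def fact_checker_a(claim: str) -> bool:
    return claim in FACTS


def fact_checker_b(claim: str) -> bool:
    return claim in FACTS


def rubber_stamp(claim: str) -> bool:
    return True


results = verify(
    "Paris is the capital of France. The Moon is made of cheese.",
    [fact_checker_a, fact_checker_b, rubber_stamp],
)
for r in results:
    status = "accepted" if r.accepted else "rejected"
    print(f"{r.claim!r}: {r.votes_for}/{r.votes_total} -> {status}")
```

With these toy evaluators, the first claim is accepted with 3 of 3 votes and the second is rejected with 1 of 3. Requiring a supermajority means a single permissive evaluator cannot push a claim through on its own.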

This transforms AI from a prediction engine into a verifiable system of review. Decisions are no longer driven by isolated model confidence but by structured validation outcomes. For autonomous agents operating in finance, governance, analytics, or compliance, this shift is critical. Execution is grounded in consensus-backed verification rather than unchecked generation.

The blockchain layer functions as transparent proof of that validation process. It provides shared memory — not for branding, but for accountability. Incentives align around accuracy because verification is measurable and recorded. There is cost and latency involved, but reliability has always required friction. Removing all friction removes responsibility.
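To make "shared memory for accountability" concrete, here is a sketch of an append-only, hash-chained log of verification outcomes. It is a deliberate simplification. This is a single-process hash chain, not a blockchain: there is no distribution, consensus, or incentive layer. The record fields and the `VerificationLog` class are hypothetical. The sketch demonstrates one property: once an outcome is recorded, altering it later is detectable.

```python
import hashlib
import json
from typing import Dict, List

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def record_hash(record: Dict) -> str:
    # Canonical JSON (sorted keys) so identical records always hash identically.
    payload = json.dumps(record, sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


class VerificationLog:
    """Append-only, hash-chained log of verification outcomes
    (a simplified, hypothetical stand-in for an on-chain record)."""

    def __init__(self) -> None:
        self.entries: List[Dict] = []

    def append(self, claim: str, accepted: bool, votes_for: int, votes_total: int) -> str:
        prev = self.entries[-1]["hash"] if self.entries else GENESIS
        body = {
            "claim": claim,
            "accepted": accepted,
            "votes_for": votes_for,
            "votes_total": votes_total,
            "prev": prev,
        }
        entry = dict(body, hash=record_hash(body))
        self.entries.append(entry)
        return entry["hash"]

    def is_intact(self) -> bool:
        # Recompute every hash and check that each entry points at its predecessor.
        prev = GENESIS
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if body["prev"] != prev or record_hash(body) != entry["hash"]:
                return False
            prev = entry["hash"]
        return True


log = VerificationLog()
log.append("Paris is the capital of France", True, 3, 3)
log.append("The Moon is made of cheese", False, 1, 3)
print(log.is_intact())

log.entries[1]["accepted"] = True  # simulate tampering with a recorded verdict
print(log.is_intact())
```

Editing a recorded verdict invalidates that entry's stored hash. Recomputing the hash to hide the edit breaks the link to the next entry instead. This is what lets outsiders audit the history rather than simply trust it.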

Mira also promotes neutrality across AI providers, allowing verified outputs to remain composable and reusable. This reduces duplicated validation effort and improves efficiency across ecosystems. Instead of competing claims, the network builds shared certainty.
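In its simplest form, reusing verified outputs means caching verdicts so that the same claim is not verified twice. The sketch below assumes claims are keyed by a SHA-256 hash of lightly normalized text, with case folded and whitespace collapsed. `SharedVerdictCache`, `claim_key`, and the stand-in verifier are hypothetical names. A real shared store would likely also need provenance and expiry, and both are left out here.

```python
import hashlib
from typing import Callable, Dict


def claim_key(claim: str) -> str:
    # Assumed normalization: case-fold and collapse whitespace before hashing,
    # so trivially different spellings of the same text share one key.
    normalized = " ".join(claim.lower().split())
    return hashlib.sha256(normalized.encode("utf-8")).hexdigest()


class SharedVerdictCache:
    """Hypothetical shared store of verdicts, consulted before running verification."""

    def __init__(self) -> None:
        self._verdicts: Dict[str, bool] = {}
        self.verifications_run = 0  # counts how often the expensive path actually ran

    def check(self, claim: str, verify_fn: Callable[[str], bool]) -> bool:
        key = claim_key(claim)
        if key not in self._verdicts:
            self.verifications_run += 1
            self._verdicts[key] = verify_fn(claim)
        return self._verdicts[key]


def stand_in_verifier(claim: str) -> bool:
    # Placeholder for an expensive multi-evaluator verification round.
    return "paris" in claim.lower()


cache = SharedVerdictCache()
first = cache.check("Paris is the capital of France", stand_in_verifier)   # e.g. agent A
second = cache.check("paris is the  capital of France", stand_in_verifier)  # e.g. agent B
print(first, second, cache.verifications_run)
```

The second agent gets the stored verdict without triggering another verification round. This is the duplication saving the paragraph describes, at the cost of trusting whatever normalization decides that two claims are "the same."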

As AI autonomy expands, the debate must evolve from “Can it think?” to “Can it be trusted to act?” Mira Network reframes the conversation by embedding verification directly into the AI lifecycle. It does not attempt to eliminate risk through scale. It manages risk through provable accountability.

In a world where autonomous systems increasingly influence real-world outcomes, verified intelligence is not optional infrastructure — it is foundational.