I’ve started to think that robot security gets explained way too narrowly. Most people hear the word “security” and jump straight to hacks, wallet drains, or broken smart contracts.

I don’t think that’s all there is to it.

When a machine is out there doing tasks, operating in the real world, and getting paid for the result, the real issue feels more basic to me.

Who checks whether it actually did the job right?

Who steps in if something looks wrong?

And what happens when it messes up?

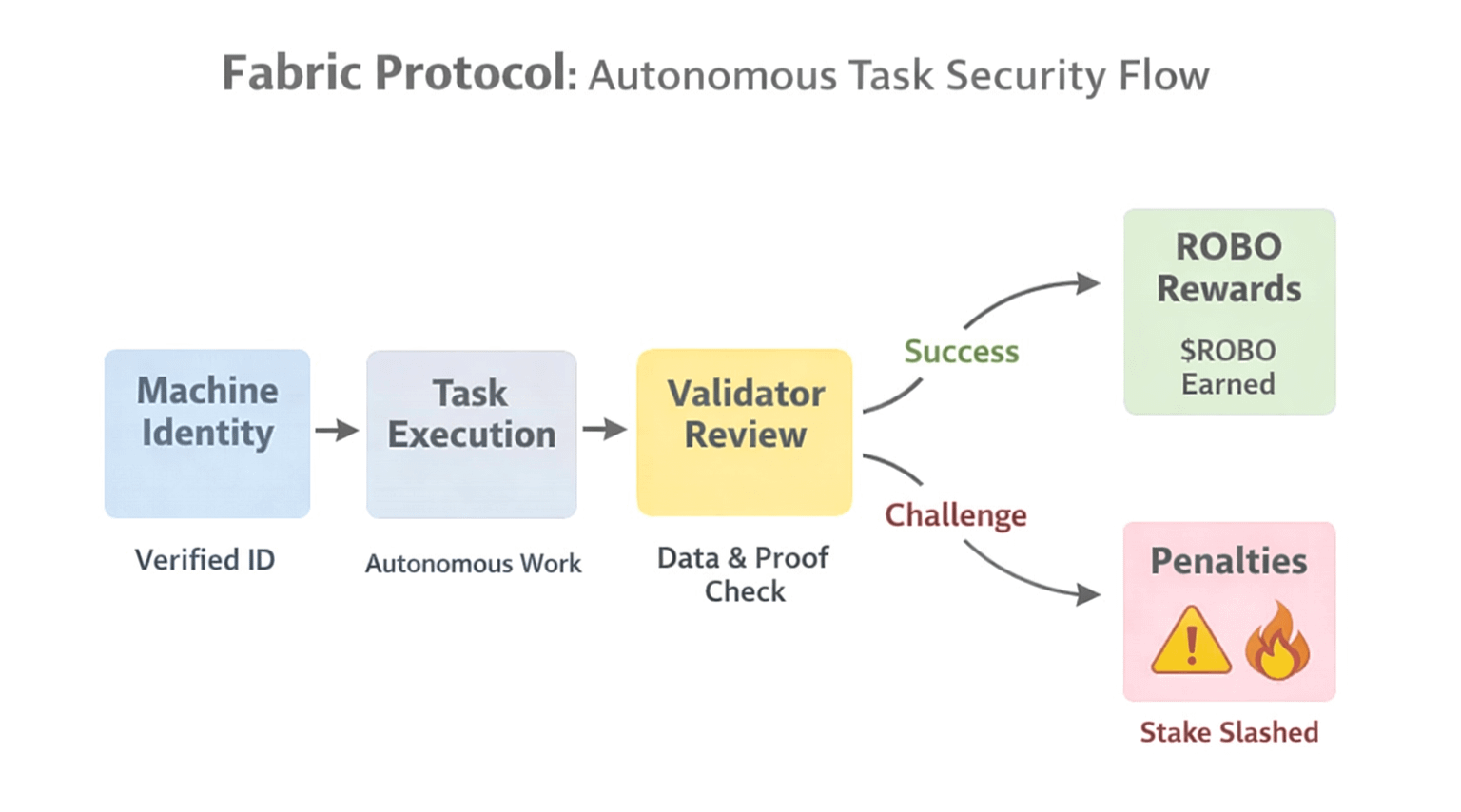

That is why Fabric Protocol feels interesting to me. The project is not framing security as a single technical shield. It is framing security as a coordination system for humans and machines working together under visible rules.

Fabric’s own materials make that pretty clear.

The Foundation says it is building governance, economic, and coordination infrastructure so humans and intelligent machines can work together safely and productively.

On the infrastructure side, it specifically points to machine and human identity, decentralized task allocation and accountability, location-gated and human-gated payments, and machine-to-machine communication and data conduits.

To me, that already changes the conversation. Security here is not just “protect the robot.” It is “make the robot observable, attributable, and governable inside a live economic network.”

What caught my eye next is how $ROBO fits into that design.

In Fabric’s official post from February 24, 2026, $ROBO is described as the core utility and governance asset, used for network fees tied to payments, identity, and verification.

Fabric also says the network will initially deploy on Base. Builders and businesses that want access to the robot network are expected to buy and stake $ROBO, and rewards are described as being paid for verified work such as skill development, task completion, data contributions, compute, and validation.

I like that emphasis because it moves the system away from passive, abstract staking logic and closer to accountable participation.

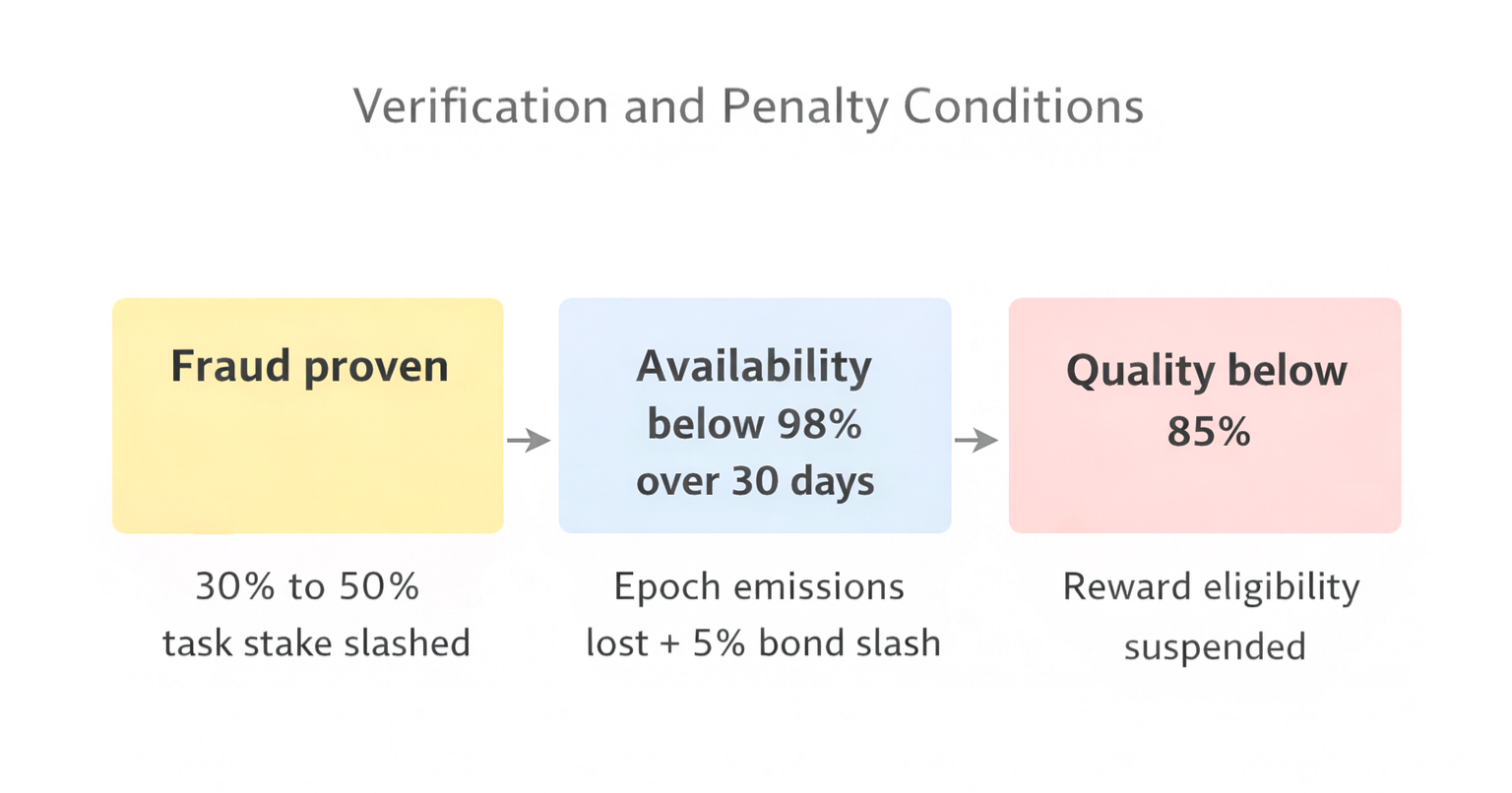

The strongest part of the security model, at least on paper, is the penalty structure.

Fabric’s whitepaper says proven fraud can slash 30% to 50% of the earmarked task stake. Part of that goes to a successful challenger as a truth bounty, and part is burned.

If a robot’s availability falls below 98% over a 30-day epoch, it forfeits that epoch’s emission rewards and takes a 5% bond slash.

If its aggregated quality score drops below 85%, it loses reward eligibility until the issue is fixed.
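To make those three rules concrete for myself, I wrote a small Python sketch. The percentages come from the whitepaper figures quoted above, but everything else is my own assumption and not Fabric’s actual implementation: the names (`EpochReport`, `settle_epoch`, `slash_for_fraud`), the use of basis points and integer token units, the `bounty_bps` split between challenger and burn (the whitepaper only says "part" goes each way), and the choice to pay zero emissions while a robot is ineligible.

```python
from dataclasses import dataclass

# Thresholds quoted from the whitepaper, expressed in basis points (1% = 100 bps).
MIN_AVAILABILITY_BPS = 9_800          # 98% availability over a 30-day epoch
AVAILABILITY_BOND_SLASH_BPS = 500     # 5% bond slash
MIN_QUALITY_BPS = 8_500               # 85% aggregated quality score
FRAUD_SLASH_RANGE_BPS = (3_000, 5_000)  # 30% to 50% of the earmarked task stake
BPS = 10_000


@dataclass
class EpochReport:
    """Hypothetical per-robot summary for one epoch (all names are assumptions)."""
    availability_bps: int
    quality_bps: int
    bond: int               # bonded amount, in integer token units
    pending_emissions: int  # emission rewards accrued this epoch


@dataclass
class EpochOutcome:
    emissions_paid: int
    bond_slashed: int
    reward_eligible: bool


def settle_epoch(report: EpochReport) -> EpochOutcome:
    """Apply the availability and quality rules to one epoch."""
    emissions = report.pending_emissions
    slashed = 0
    eligible = True

    if report.availability_bps < MIN_AVAILABILITY_BPS:
        # Forfeit this epoch's emissions and take the 5% bond slash.
        emissions = 0
        slashed = report.bond * AVAILABILITY_BOND_SLASH_BPS // BPS

    if report.quality_bps < MIN_QUALITY_BPS:
        # Assumption: "loses reward eligibility" means nothing is paid
        # until the score recovers.
        eligible = False
        emissions = 0

    return EpochOutcome(emissions, slashed, eligible)


def slash_for_fraud(task_stake: int, slash_bps: int, bounty_bps: int):
    """Split a proven-fraud slash into a challenger bounty and a burn.

    bounty_bps is a hypothetical parameter: the share of the slashed
    amount paid to the challenger. The remainder is burned.
    """
    low, high = FRAUD_SLASH_RANGE_BPS
    if not low <= slash_bps <= high:
        raise ValueError("slash must be between 30% and 50% of the task stake")
    if not 0 <= bounty_bps <= BPS:
        raise ValueError("bounty share must be between 0% and 100%")

    slashed = task_stake * slash_bps // BPS
    bounty = slashed * bounty_bps // BPS
    burned = slashed - bounty
    return slashed, bounty, burned
```

Under these assumptions, a robot with 97% availability and a 1,000-token bond would lose 50 tokens of bond plus that epoch’s emissions, even if its quality score is fine. A 40% fraud slash on a 1,000-token task stake with a made-up 25% bounty share would send 100 tokens to the challenger and burn 300.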

I keep coming back to that because it shows Fabric is not treating bad behavior, downtime, and low quality as separate side issues. They are all part of protocol security.

There is also a governance layer here, and that matters.

The whitepaper says holders can escrow $ROBO into veROBO for onchain voting and signaling on limited protocol parameters and improvement proposals, including quality threshold changes, verification and slashing rules, and network upgrades. That means Fabric is not pretending its first security settings will be perfect forever. It is leaving room for the network to tune how autonomous coordination should be checked and enforced over time.

I’m also paying attention to the roadmap because it connects the theory to actual deployment steps.

The 2026 roadmap starts in Q1 with early components for robot identity, task settlement, and structured data collection. Q2 adds contribution-based incentives tied to verified task execution and data submission, Q3 brings support for more complex tasks and multi-robot workflows, and Q4 focuses on reliability, throughput, and operational stability.

That sequence makes sense to me.

Fabric seems to be saying that secure autonomy is not one feature. It is identity first, then settlement, then validation, then scale.

Honestly, that feels like a much more serious security paradigm than just calling something “AI plus blockchain” and hoping people fill in the blanks themselves.