I’ve noticed that whenever a new protocol appears in the AI and blockchain space, the first thing people talk about is the promise. Faster systems, smarter networks, better incentives. But after watching several waves of innovation come and go, I’ve learned that the real story usually sits beneath the excitement.

It’s rarely about the headline features. It’s about the infrastructure decisions that determine whether a system actually works in messy, real-world environments. That perspective is what led me to look more closely at Fabric Protocol and the concept it calls PoRW, or Proof of Real Work.

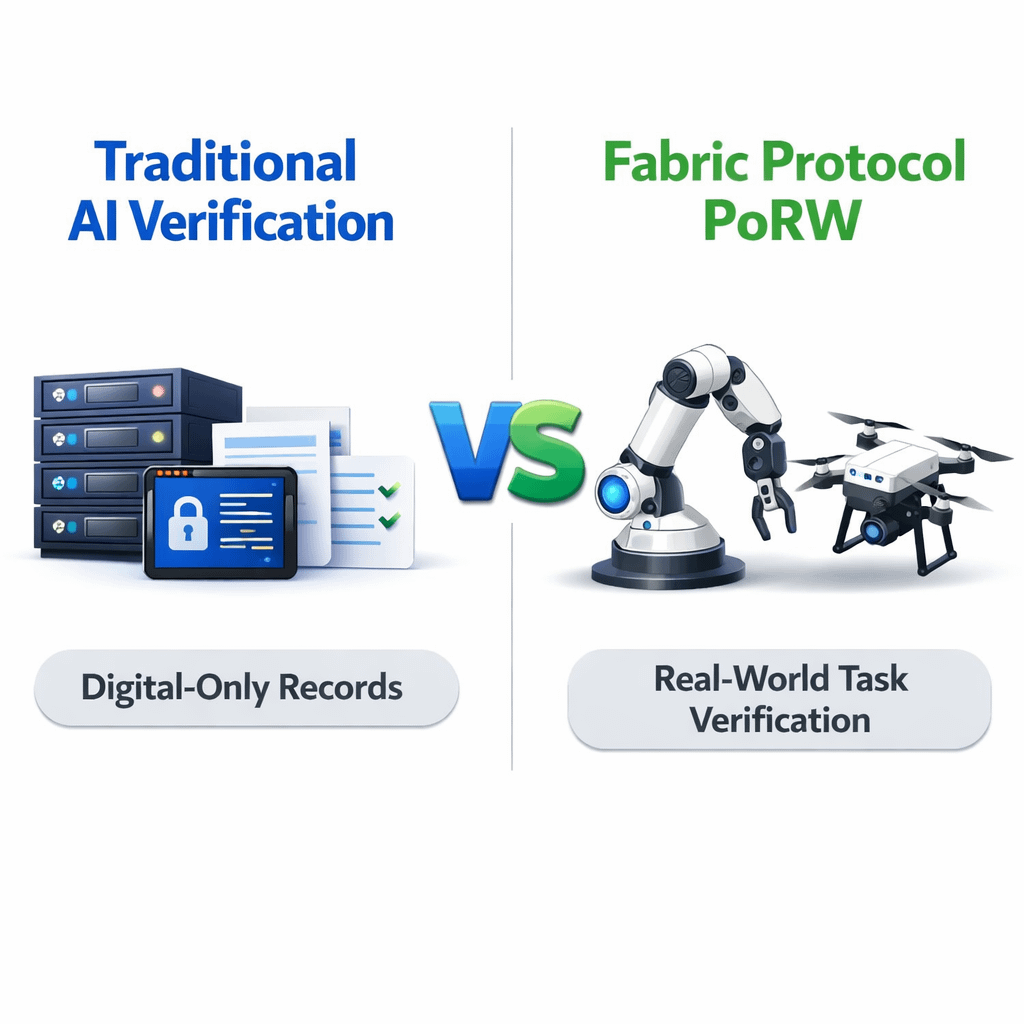

Most verification models in AI today are surprisingly centralized. When an AI system performs a task, the organization running it records the results internally. Logs track what happened, engineers analyze outputs, and internal monitoring tools confirm whether the system behaved as expected.

This structure works reasonably well in controlled environments where one company owns the entire system.

But the moment AI systems begin interacting across multiple organizations, things become more complicated. Imagine autonomous machines working across logistics networks, infrastructure monitoring systems, or robotics platforms operated by different companies. Each system produces its own records. Each organization has its own monitoring tools.

And each participant ultimately trusts its own data more than anyone else’s. In those environments, verification becomes less about technical accuracy and more about shared trust.

This is where Fabric Protocol’s concept of Proof of Real Work (PoRW) enters the conversation. The idea seems simple at first glance: instead of verifying purely digital transactions or computational activity, the network attempts to verify real-world tasks performed by machines.

Robots, drones, or autonomous systems complete physical work, and the network focuses on validating that the work actually happened.

What caught my attention is that this shifts the meaning of verification itself. Traditional AI verification models focus mostly on evaluating outputs. Engineers measure whether a model produced the correct prediction or whether a system performed well on benchmark datasets.

These methods are useful for improving models, but they do not necessarily prove that a specific task happened in the real world.

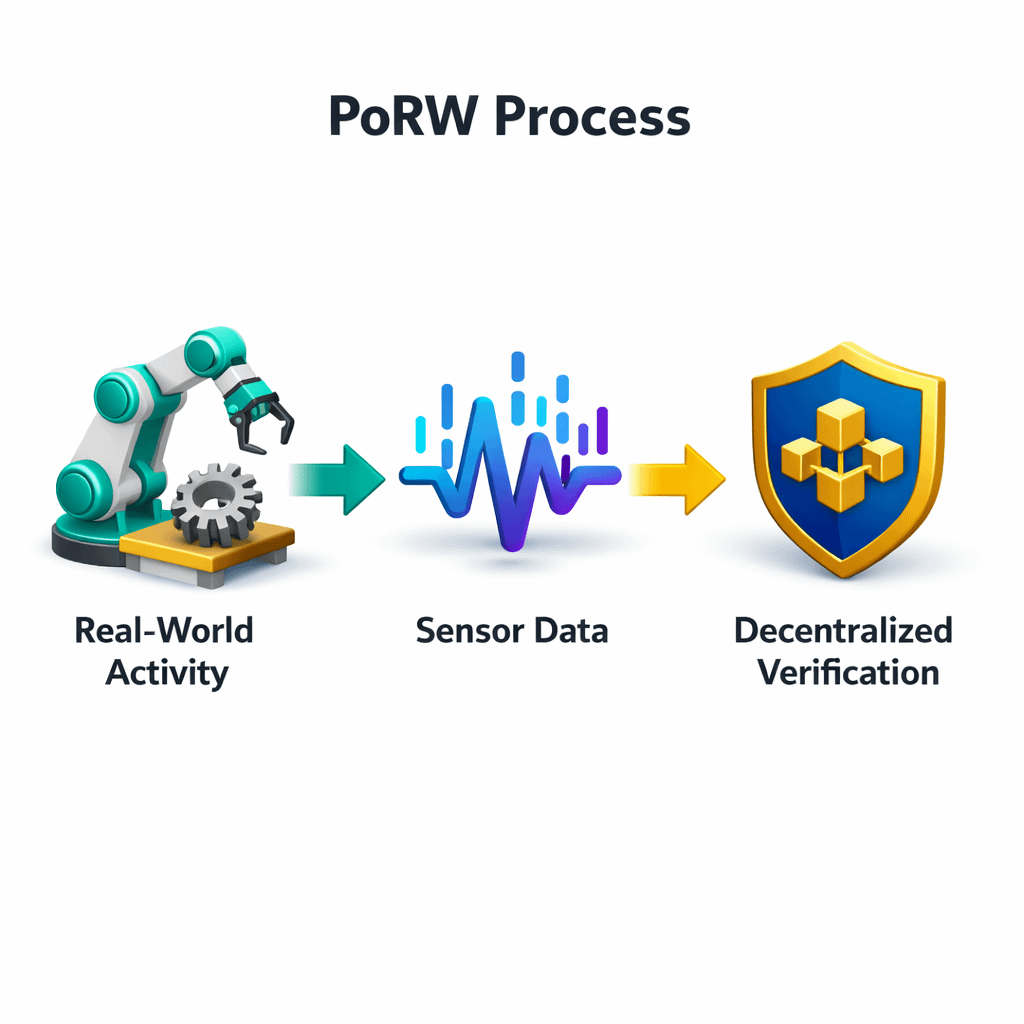

PoRW approaches the problem differently. Instead of asking whether an AI produced the best answer, the network attempts to confirm that a machine performed a real task under specific conditions. If a robot inspects infrastructure, if a drone scans a location, or if an autonomous system completes a job, the network records and verifies that activity.

From my perspective, this moves the conversation away from intelligence alone and toward verifiable machine activity.
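To make "records and verifies that activity" a little more concrete, here is a minimal sketch of a signed task record. It is not Fabric Protocol's actual format or API, which I have not seen specified at this level. The field names, the `sign_task_record` and `verify_task_record` helpers, and the shared demo key are all my own assumptions, and I use a symmetric HMAC only because it ships with Python's standard library. A network spanning several organizations would more plausibly use asymmetric signatures.

```python
import hashlib
import hmac
import json


def sign_task_record(record: dict, device_key: bytes) -> str:
    """Return a hex HMAC-SHA256 tag over a canonical JSON encoding of the record."""
    # Sorting keys makes the encoding independent of dict insertion order.
    payload = json.dumps(record, sort_keys=True, separators=(",", ":")).encode("utf-8")
    return hmac.new(device_key, payload, hashlib.sha256).hexdigest()


def verify_task_record(record: dict, tag: str, device_key: bytes) -> bool:
    """Recompute the tag and compare it in constant time."""
    expected = sign_task_record(record, device_key)
    return hmac.compare_digest(expected, tag)


# Hypothetical record for illustration only; none of these values come from Fabric.
device_key = b"demo-key-not-for-production"
record = {
    "device_id": "drone-17",
    "task": "bridge-inspection",
    "started_at": "2025-01-01T10:00:00Z",
    "finished_at": "2025-01-01T10:42:00Z",
    "location": {"lat": 52.52, "lon": 13.405},
}

tag = sign_task_record(record, device_key)
print(verify_task_record(record, tag, device_key))    # untouched record

tampered = dict(record, task="bridge-repair")
print(verify_task_record(tampered, tag, device_key))  # altered record
```

Note what this does and does not show. A valid tag tells a verifier that the record was produced by whoever holds the key and has not been changed since. It says nothing about whether the drone actually flew the inspection, which is exactly the gap a scheme like PoRW would have to close with additional evidence.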

Still, turning that idea into reliable infrastructure raises several questions. Physical environments are unpredictable. Sensors fail. Data streams can be incomplete. Machines behave differently depending on weather, terrain, or unexpected obstacles.

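Verifying real-world activity is far more complicated than verifying a digital transaction on a blockchain. A transaction can be checked deterministically against the ledger’s own state, while a physical event has to be inferred from whatever evidence the sensors managed to capture.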

Traditional AI verification models avoid many of these issues because they operate inside controlled digital environments. Every action can be logged precisely and replayed during audits. PoRW does not have that luxury. Instead, it must interpret signals from machines operating in the physical world.

That means verification systems must deal with imperfect data and uncertain conditions. Designing a decentralized system that can handle those realities without becoming unreliable is not trivial.
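One common way to cope with imperfect data is to require corroboration from several independent sources instead of trusting any single reading. The sketch below illustrates that general idea and is not a description of how PoRW works. The `corroborated` function, the tolerance, and the sample numbers are hypothetical.

```python
from statistics import median
from typing import Optional, Sequence


def corroborated(
    readings: Sequence[Optional[float]],
    tolerance: float,
    min_agreeing: int,
) -> bool:
    """Accept a claim only if enough available readings sit close to their median.

    A reading of None stands for a sensor that failed or dropped out.
    """
    available = [r for r in readings if r is not None]
    if len(available) < min_agreeing:
        return False  # too little evidence to decide in favor of the claim
    center = median(available)
    agreeing = sum(1 for r in available if abs(r - center) <= tolerance)
    return agreeing >= min_agreeing


# Hypothetical distance estimates (meters) for one task from five sources:
# one sensor dropped out (None) and one is badly off (45.0).
readings = [120.4, 119.8, None, 121.1, 45.0]
print(corroborated(readings, tolerance=2.0, min_agreeing=3))

# With most sensors missing, the same check refuses to confirm the claim.
sparse = [120.4, None, None, 45.0]
print(corroborated(sparse, tolerance=2.0, min_agreeing=3))
```

The median is used here because a single faulty sensor cannot drag it far, unlike a mean. The thresholds are the hard part in practice: set them too loosely and false claims pass, too strictly and honest machines in bad weather get rejected.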

This is where I start to see both the potential and the difficulty of Fabric Protocol’s approach. If the network can verify machine-generated events in a reliable way, it could create a new coordination layer for robotics ecosystems.

Machines operating across different organizations could produce records of their activity that multiple participants recognize as trustworthy.
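A simple building block for records that several parties can check independently is a hash-chained log, where each entry commits to the one before it. The sketch below is my own illustration of that generic technique, not Fabric Protocol's data model. The entry layout and the `append` and `verify_log` helpers are assumptions.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def entry_hash(prev_hash: str, record: dict) -> str:
    """Hash the record together with the hash of the previous entry."""
    payload = json.dumps({"prev": prev_hash, "record": record}, sort_keys=True)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def append(log: list, record: dict) -> None:
    """Add a record to the log, linking it to the current last entry."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    log.append({"record": record, "prev": prev_hash, "hash": entry_hash(prev_hash, record)})


def verify_log(log: list) -> bool:
    """Recompute every link; any edited or reordered entry breaks the chain."""
    prev_hash = GENESIS
    for entry in log:
        if entry["prev"] != prev_hash:
            return False
        if entry["hash"] != entry_hash(prev_hash, entry["record"]):
            return False
        prev_hash = entry["hash"]
    return True


# Hypothetical activity records from two machines run by different operators.
log = []
append(log, {"device_id": "robot-3", "task": "pallet-move", "status": "done"})
append(log, {"device_id": "drone-17", "task": "roof-scan", "status": "done"})
print(verify_log(log))   # chain intact

log[0]["record"]["status"] = "failed"
print(verify_log(log))   # an edited entry no longer matches its hash
```

Any participant holding a copy of the log can run `verify_log` without trusting the others' tooling. A chain like this only makes tampering detectable after the fact. Deciding which records get appended in the first place is the consensus problem a decentralized network still has to solve.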

But building that layer requires more than clever design. It requires infrastructure that can handle inconsistent sensor data, complex machine behavior, and large volumes of activity. That is why I try to look at PoRW less as a finished solution and more as an experiment.

The concept itself acknowledges something important. As robotics and AI systems expand into the physical world, verification cannot remain purely digital. Systems will need ways to confirm that machines actually performed the tasks they claim.

Fabric Protocol’s PoRW model explores how decentralized networks might handle that challenge.

Whether it becomes a practical framework for coordinating machine activity will likely become clear only slowly. Real deployments will reveal how well the idea holds up once machines start interacting across industries and environments that rarely behave as neatly as software systems.

And if those experiments succeed, the infrastructure verifying real-world machine work may eventually become just as important as the machines themselves.