I’ve spent a lot of time watching how artificial intelligence systems are deployed across different industries. The models themselves have improved dramatically over the past few years. They process more data, generate more sophisticated outputs, and operate at scales that would have seemed unrealistic not long ago. But the more capable these systems become, the more I notice another issue quietly emerging in the background. The problem is not always intelligence. It is verification. That realization is what led me to look more closely at Mira Network and the idea that decentralized verification might offer an advantage over traditional centralized AI infrastructure.

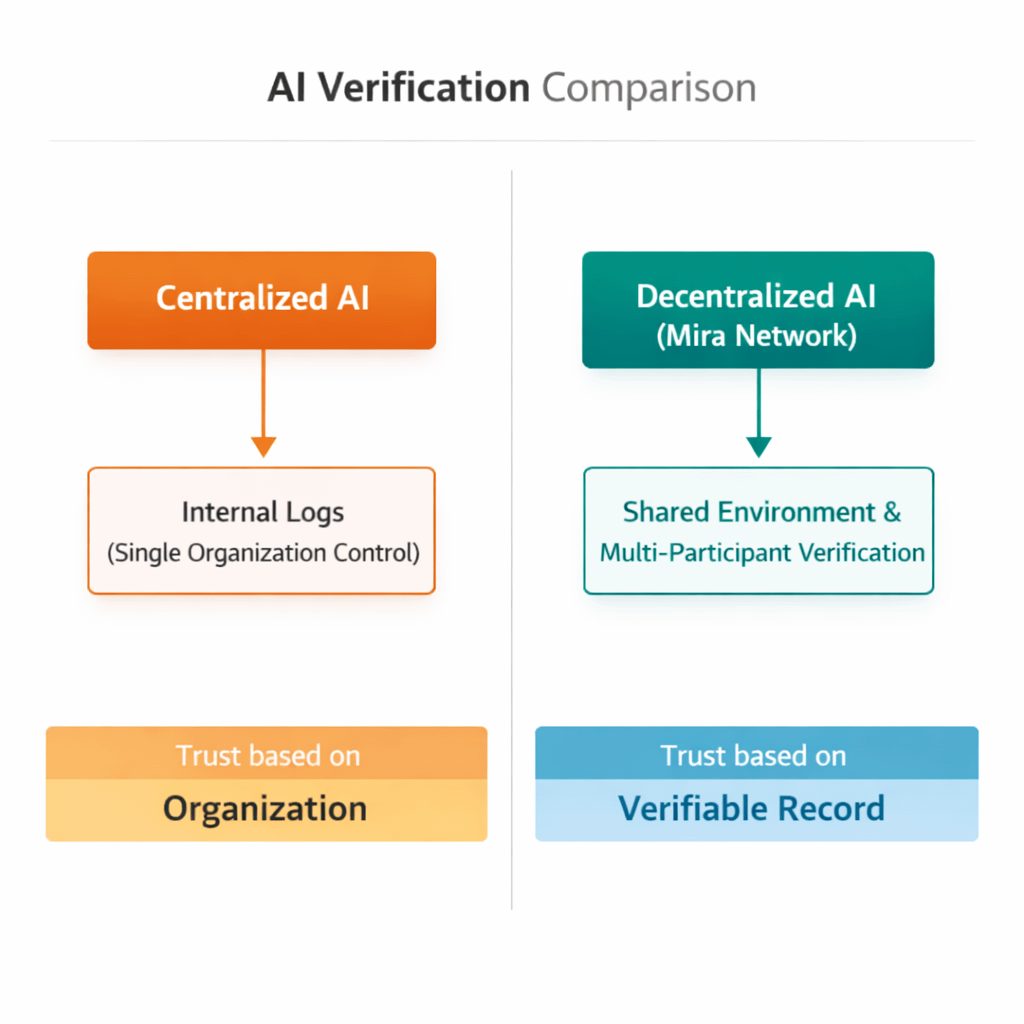

Most AI systems today operate inside controlled environments. A company trains a model, deploys it on its own servers, and records the system’s behavior internally. If something unexpected happens, engineers review internal logs and attempt to reconstruct what occurred. For many applications that approach works reasonably well. But it also means that the record of what an AI system did remains under the control of the same organization responsible for running it.

That arrangement creates a tension: the party being audited is also the party holding the audit trail.

When AI systems begin influencing financial decisions, infrastructure management, or automated services used by multiple organizations, the question of trust becomes more complicated. If a model produces a result that affects several parties, those participants may want to understand how that result was generated. Internal logs can provide answers, but those records still depend on trusting the organization that operates the system.

This is where Mira’s approach begins to look different to me.

Instead of focusing on building larger or more powerful models, the network attempts to verify the behavior of AI systems through decentralized infrastructure. Inputs, execution conditions, and outputs can be recorded in a shared environment where multiple participants can observe the record of events. The idea is not necessarily to explain every internal detail of the model, but to confirm that it operated under the conditions it claimed.
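To make the idea of a shared record more concrete, here is a minimal sketch of a tamper-evident log of AI executions. It is not Mira’s actual API or data format, which I have not seen specified at this level; the function names (`digest`, `make_record`, `verify_chain`), the record fields, and the example model name are all hypothetical. The sketch only shows the general technique of hashing inputs, conditions, and outputs and chaining each record to the previous one, so that any later edit is detectable by anyone holding a copy.

```python
import hashlib
import json

GENESIS = "0" * 64  # hypothetical starting value for the chain


def digest(payload: dict) -> str:
    """SHA-256 hex digest of a dict, using canonical JSON so key order does not matter."""
    canonical = json.dumps(payload, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def make_record(prev_hash: str, inputs: dict, conditions: dict, outputs: dict) -> dict:
    """Build one log entry that commits to the inputs, conditions, and outputs."""
    body = {
        "prev_hash": prev_hash,
        "inputs_hash": digest(inputs),
        "conditions": conditions,
        "outputs_hash": digest(outputs),
    }
    # The record hash covers every field above, including the link to the previous record.
    return {**body, "record_hash": digest(body)}


def verify_chain(records: list[dict], genesis: str = GENESIS) -> bool:
    """Recompute every hash and check that each record links to the one before it."""
    prev = genesis
    for record in records:
        body = {k: v for k, v in record.items() if k != "record_hash"}
        if record["prev_hash"] != prev or digest(body) != record["record_hash"]:
            return False
        prev = record["record_hash"]
    return True


# Illustrative data only; the model name and settings are made up.
first = make_record(GENESIS, {"prompt": "approve loan?"},
                    {"model": "demo-v1", "temperature": 0}, {"answer": "no"})
second = make_record(first["record_hash"], {"prompt": "approve refund?"},
                     {"model": "demo-v1", "temperature": 0}, {"answer": "yes"})
log = [first, second]

print(verify_chain(log))  # the untouched log verifies
log[0]["conditions"]["temperature"] = 1  # someone quietly edits a recorded condition
print(verify_chain(log))  # the edit no longer matches the stored hash
```

Note what this does and does not show: the log proves that a record was not altered after the fact, not that the model’s answer was right.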

From my perspective, this creates a different form of accountability.

Centralized AI systems often rely on internal auditing processes to validate results. Mira’s architecture attempts to move that verification process into a decentralized network where no single participant controls the record of activity. If an AI system produces an output, the network can confirm that the execution followed the specified rules or constraints.
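One way to picture verification that no single participant controls is a simple quorum: several independent validators each check a record against the declared constraints, and a verdict only counts if enough of them agree. The sketch below is my own illustration under that assumption, not a description of Mira’s consensus mechanism. The two-thirds threshold, the `quorum_verdict` function, the validator functions, and the constraint values are all hypothetical.

```python
from collections import Counter
from fractions import Fraction
from typing import Callable, Optional

Validator = Callable[[dict], str]  # takes a record, returns "valid" or "invalid"


def check_constraints(record: dict) -> str:
    """An honest validator: the run must use the declared model and temperature."""
    conditions = record["conditions"]
    ok = conditions.get("model") == "demo-v1" and conditions.get("temperature") == 0
    return "valid" if ok else "invalid"


def always_valid(record: dict) -> str:
    """A faulty or careless validator that approves everything."""
    return "valid"


def quorum_verdict(record: dict, validators: list[Validator],
                   threshold: Fraction = Fraction(2, 3)) -> Optional[str]:
    """Return the verdict backed by at least `threshold` of validators, else None."""
    if not validators:
        return None
    votes = Counter(validator(record) for validator in validators)
    verdict, count = votes.most_common(1)[0]
    # Fraction keeps the comparison exact, avoiding floating-point edge cases.
    return verdict if count >= threshold * len(validators) else None


# A run that broke the declared constraint (temperature should be 0).
record = {"conditions": {"model": "demo-v1", "temperature": 0.7}}

print(quorum_verdict(record, [check_constraints, check_constraints, always_valid]))
print(quorum_verdict(record, [check_constraints, always_valid]))
```

With two honest validators out of three, the bad run is rejected; with one honest and one faulty validator, no verdict reaches the threshold and the function returns `None`. That second case is a reminder that the guarantee is only as strong as the share of validators behaving correctly.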

I find that distinction important because it shifts the conversation about accuracy.

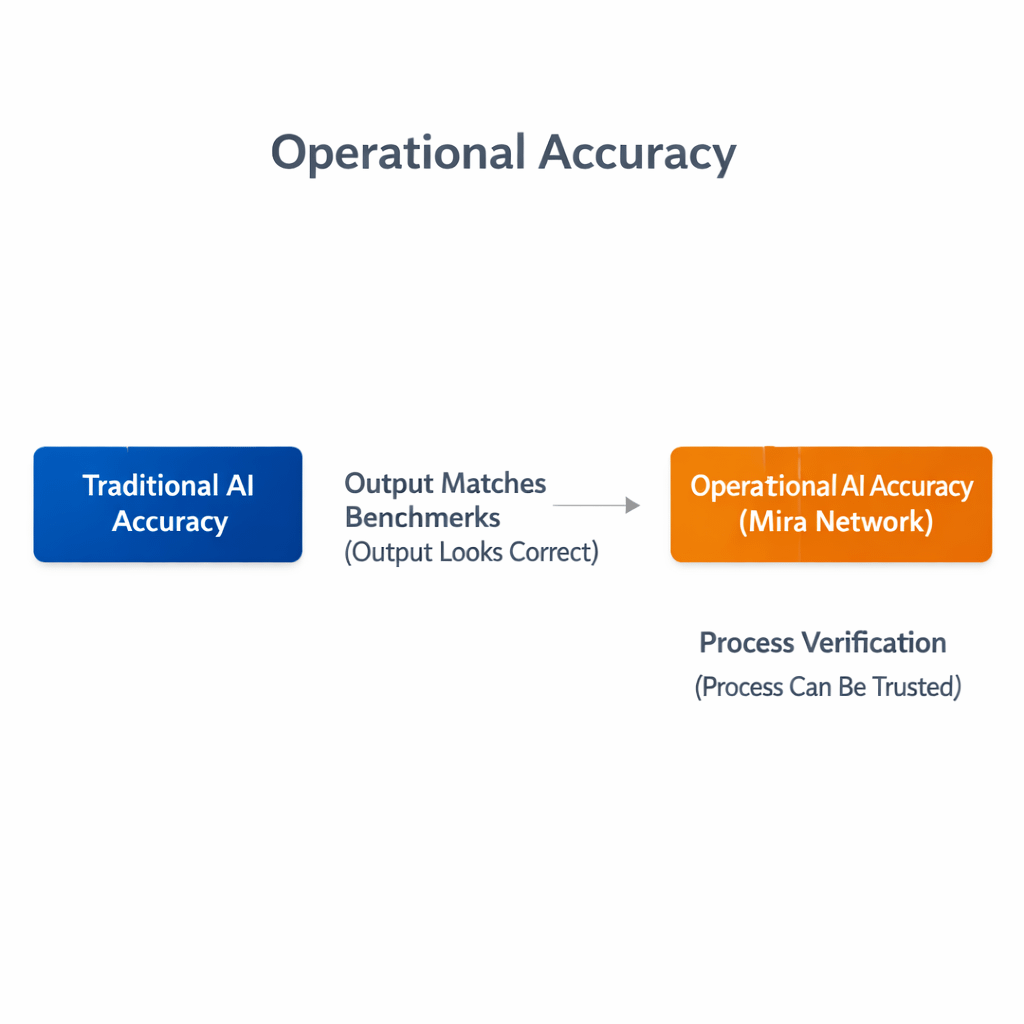

Accuracy in AI is usually measured through benchmarks or performance metrics. A model is considered accurate if its predictions match expected results within certain thresholds. Operational accuracy is a different question. It asks whether the system actually ran under the conditions it claims to have run under, such as the stated model version, inputs, and constraints.

In other words, accuracy is not only about whether the output looks correct. It is also about whether the process that produced that output can be trusted.
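A small sketch may help separate the two notions. The first function measures accuracy the usual way, as the share of predictions that match expected results. The second compares the conditions a system declared with the conditions that were actually recorded. Both function names and all the values are hypothetical, and the check is deliberately simplistic.

```python
def benchmark_accuracy(predictions: list, expected: list) -> float:
    """Fraction of predictions that match the expected results."""
    if not expected or len(predictions) != len(expected):
        raise ValueError("predictions and expected must be non-empty and equal in length")
    matches = sum(p == e for p, e in zip(predictions, expected))
    return matches / len(expected)


def condition_mismatches(declared: dict, recorded: dict) -> list[str]:
    """Names of conditions whose declared and recorded values differ (or are missing)."""
    missing = object()  # sentinel so a missing key never equals a real value
    keys = declared.keys() | recorded.keys()
    return sorted(k for k in keys
                  if declared.get(k, missing) != recorded.get(k, missing))


# Made-up example: every output looks correct...
predictions = ["approve", "deny", "approve", "deny"]
expected = ["approve", "deny", "approve", "deny"]
print(benchmark_accuracy(predictions, expected))

# ...but the run did not use the model version that was declared.
declared = {"model": "demo-v1", "temperature": 0}
recorded = {"model": "demo-v2", "temperature": 0}
print(condition_mismatches(declared, recorded))
```

In this example the outputs score perfectly on the benchmark while the process check still flags a mismatch on `model`, which is exactly the gap between an output that looks correct and a process that can be trusted.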

Still, I try to approach these ideas carefully.

Decentralized verification introduces its own challenges. Networks require validators, consensus mechanisms, and incentive structures that must operate reliably. If the verification layer becomes inconsistent or slow, the credibility of the entire system could suffer. Trust does not automatically emerge from decentralization. It must be maintained through careful design and continuous operation.

Another factor I keep thinking about is integration. Developers already rely on monitoring tools and internal logging systems to track AI behavior. For a decentralized verification network to become useful, it must fit naturally into those existing workflows rather than replacing them entirely.

At the same time, the direction AI systems are moving makes verification increasingly important.

Autonomous agents are beginning to interact with financial platforms, logistics networks, and automated services without constant human oversight. As those systems coordinate with each other, the consequences of their decisions expand beyond the organizations that built them.

In those environments, internal logs may no longer be enough.

What Mira attempts to provide is a shared infrastructure where records of AI activity can be validated in a way that multiple participants recognize. Whether that approach ultimately outperforms centralized verification systems will depend on how reliably the network operates and how widely it is adopted.

For now, I see Mira’s verification advantage less as a guaranteed replacement for centralized AI infrastructure and more as an alternative architecture for building trust around increasingly autonomous systems. If AI continues expanding into areas where decisions carry real economic or operational consequences, the ability to confirm what those systems actually did may become just as important as the intelligence inside the models themselves.