I’ve noticed that when people talk about blockchain projects connected to artificial intelligence, the conversation often gravitates toward hype very quickly. Grand predictions appear about revolutionary models, decentralized AI markets, or entirely new digital economies. Over time, I’ve learned that those narratives can be interesting, but they rarely tell the full story. What usually matters more is the underlying infrastructure and the problem the system is actually trying to solve. That’s the perspective I try to keep when examining Mira Network and its long-term value proposition.

The core idea behind Mira seems relatively simple when stripped of marketing language. Instead of competing in the race to build the most powerful AI models, the network focuses on verifying the behavior of those systems. Inputs, execution conditions, and outputs can be recorded and validated through a decentralized infrastructure. The goal appears to be creating a shared system where AI activity can be confirmed rather than simply reported.

From my perspective, that approach targets a problem that may become more important as AI systems grow more autonomous.

Today, most AI deployments still operate inside centralized environments. A company trains the model, runs it on its own servers, logs its activity, and investigates problems internally. In many situations, that arrangement works efficiently. But it also means that the evidence of what an AI system actually did is controlled by the same entity responsible for operating it.

As long as an AI system stays inside a single organization, that structure is usually acceptable. But once automated systems begin interacting across institutions, it becomes harder to justify: each party holds only its own logs, and none of them is a neutral record the others have reason to trust.

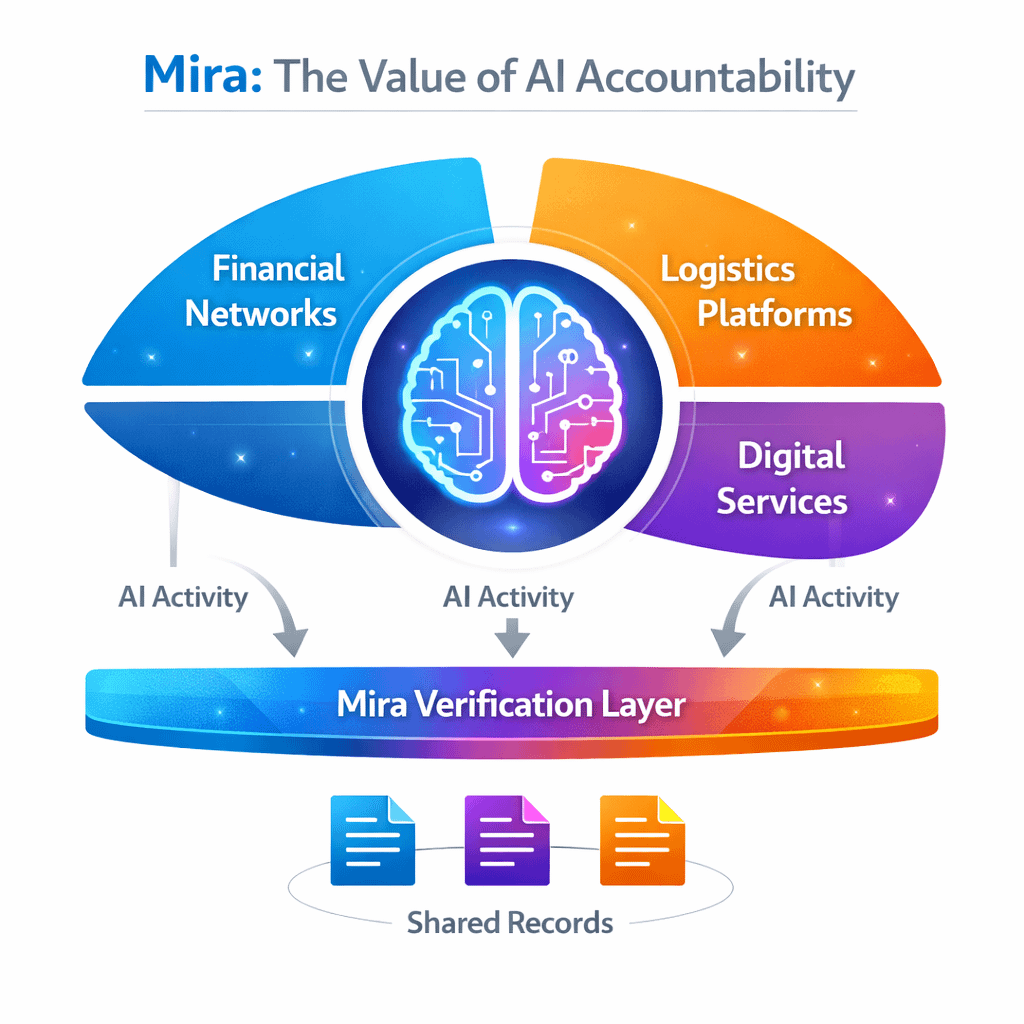

I keep thinking about scenarios where AI agents operate across financial networks, logistics platforms, or automated digital services. In those environments, multiple participants depend on the actions of machines they do not directly control. The question of verification becomes more important because decisions made by those systems can affect several stakeholders simultaneously.

This is where Mira’s infrastructure begins to make sense to me.

Instead of focusing on intelligence itself, the network attempts to build a verification layer around AI activity. When a model produces an output or executes a process, the relevant information can be anchored in a decentralized record. That record does not necessarily reveal every detail about the system, but it provides a shared reference point for confirming what happened.
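To make that concrete for myself, here is a minimal sketch of how a record can confirm what happened without revealing the details. It is not Mira's actual protocol, which I have not seen specified at this level. It is a plain salted hash commitment, and the function names (`commit`, `matches`) and field names are my own hypothetical choices.

```python
import hashlib
import hmac
import json


def commit(inputs, conditions, output, salt):
    """Return a SHA-256 digest that commits to one AI run.

    Hypothetical record format: only this digest would be published
    to the shared record; the run details stay with the operator.
    """
    payload = json.dumps(
        {"inputs": inputs, "conditions": conditions,
         "output": output, "salt": salt},
        sort_keys=True,            # stable key order so the digest is reproducible
        separators=(",", ":"),
    )
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def matches(published_digest, inputs, conditions, output, salt):
    """Check disclosed run details against a previously published digest."""
    recomputed = commit(inputs, conditions, output, salt)
    return hmac.compare_digest(published_digest, recomputed)


# The operator publishes only the digest at the time of the run.
digest = commit("route shipment 17", {"model": "v1"}, "carrier A", "random-salt")

# Later, the operator discloses the details to a counterparty, who checks them.
print(matches(digest, "route shipment 17", {"model": "v1"}, "carrier A", "random-salt"))
print(matches(digest, "route shipment 17", {"model": "v1"}, "carrier B", "random-salt"))
```

The first check succeeds and the second fails, because changing any field changes the digest. The salt is there so that someone who only sees the digest cannot guess a short output by brute force. What a scheme like this cannot do on its own is prove that the disclosed details reflect what the model really computed; it only proves they were not altered after the digest was published.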

I find that idea interesting because it shifts the focus of value creation.

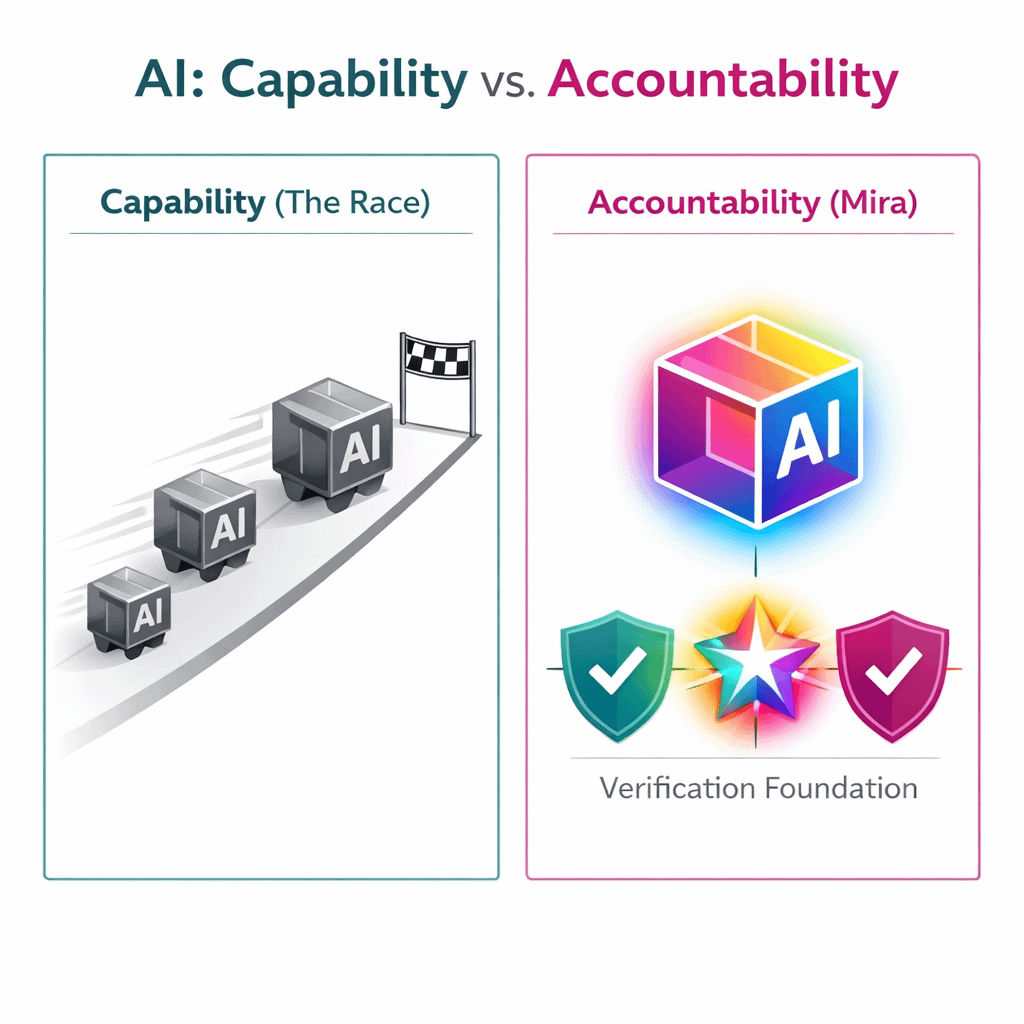

Most AI companies compete by improving capability. They build larger models, train on more data, and attempt to outperform competitors on benchmarks. Mira’s value proposition seems to revolve around accountability rather than capability. If AI systems become deeply integrated into financial, industrial, and digital infrastructure, the ability to verify their behavior may become increasingly valuable.

Still, I try to remain careful about assuming that this automatically translates into long-term value.

Specialized infrastructure networks face several challenges. Adoption depends on whether developers and institutions actually integrate the system into their workflows. If verification mechanisms add too much complexity or cost, organizations may prefer to rely on internal auditing systems rather than decentralized alternatives.

Another factor I think about is competition. The broader AI ecosystem is evolving quickly, and other verification or auditing solutions could emerge from both centralized and decentralized platforms. Mira’s long-term relevance will likely depend on whether its infrastructure becomes widely recognized as reliable and practical.

Scalability also remains an important question. If AI systems continue expanding across industries, the number of verification events could increase significantly. Networks responsible for recording those events must maintain performance while preserving the integrity of the verification process.

These are not trivial technical challenges.

Despite these uncertainties, the underlying problem Mira addresses feels increasingly real to me. As AI systems become more autonomous and interconnected, the need for reliable records of their behavior will likely grow. Institutions tend to rely on verifiable infrastructure rather than informal trust when automated systems begin influencing important outcomes.

For now, I see Mira less as a definitive solution and more as an experiment in how decentralized systems might support accountability in AI. Its long-term value proposition depends not only on technology but also on whether the broader ecosystem begins to view verification as a necessary layer of infrastructure.

If that shift happens, networks focused on verifying AI behavior could become more important than they initially appear. But, as with most infrastructure projects, the true value will probably reveal itself gradually through real adoption rather than through predictions about the future.