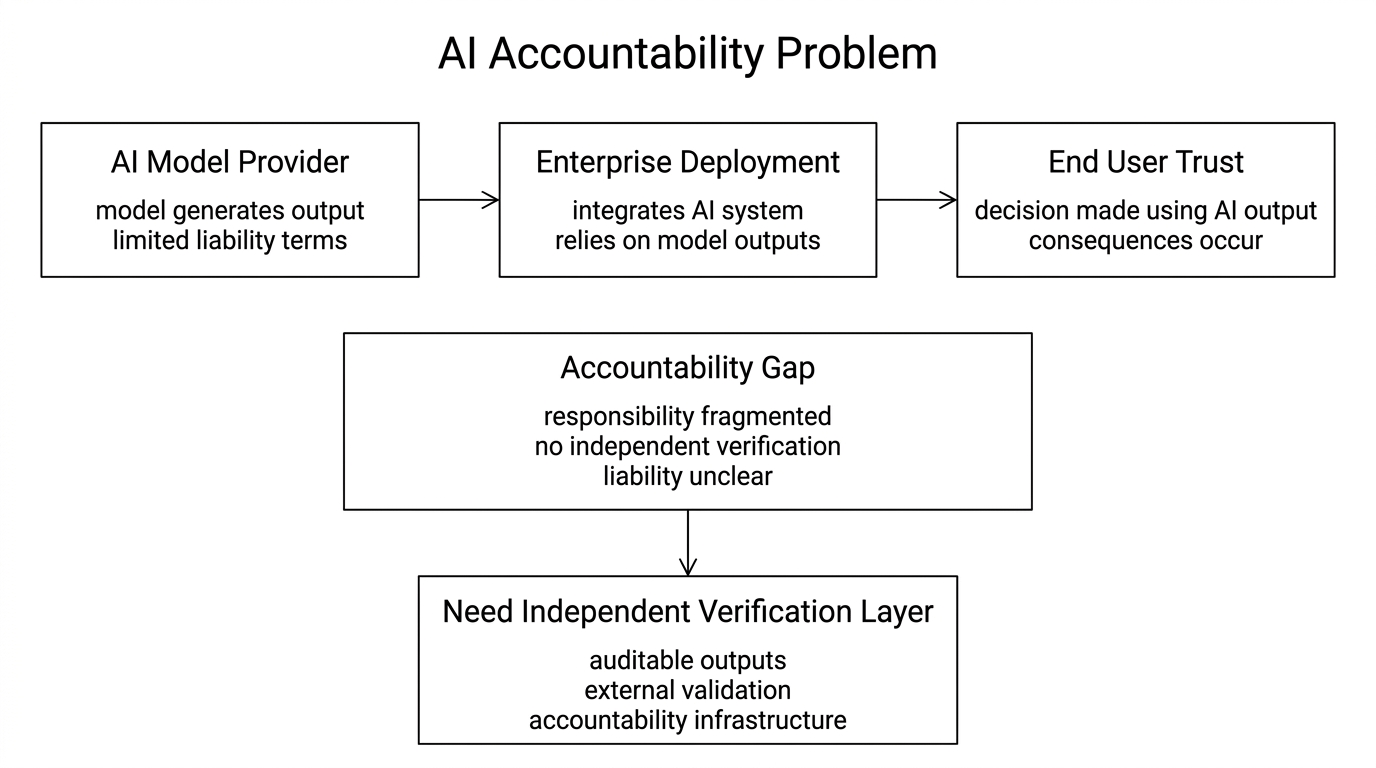

Something I keep noticing in conversations about enterprise AI deployment is that the accountability question always comes last. Teams spend months evaluating model performance, latency, cost per token, integration complexity. Then someone in legal or compliance asks the question that should have come first: if this system produces a wrong output that causes harm, who is responsible? The room gets quiet. There is no clean answer. The model provider has terms of service limiting liability. The enterprise deploying it made the integration decision. The end user trusted the output. Accountability is distributed so thinly across that chain that it effectively disappears. That is not a legal edge case. It is the central unresolved problem of deploying AI in any context where being wrong has real consequences. And it is the problem Mira Network is building infrastructure to address.

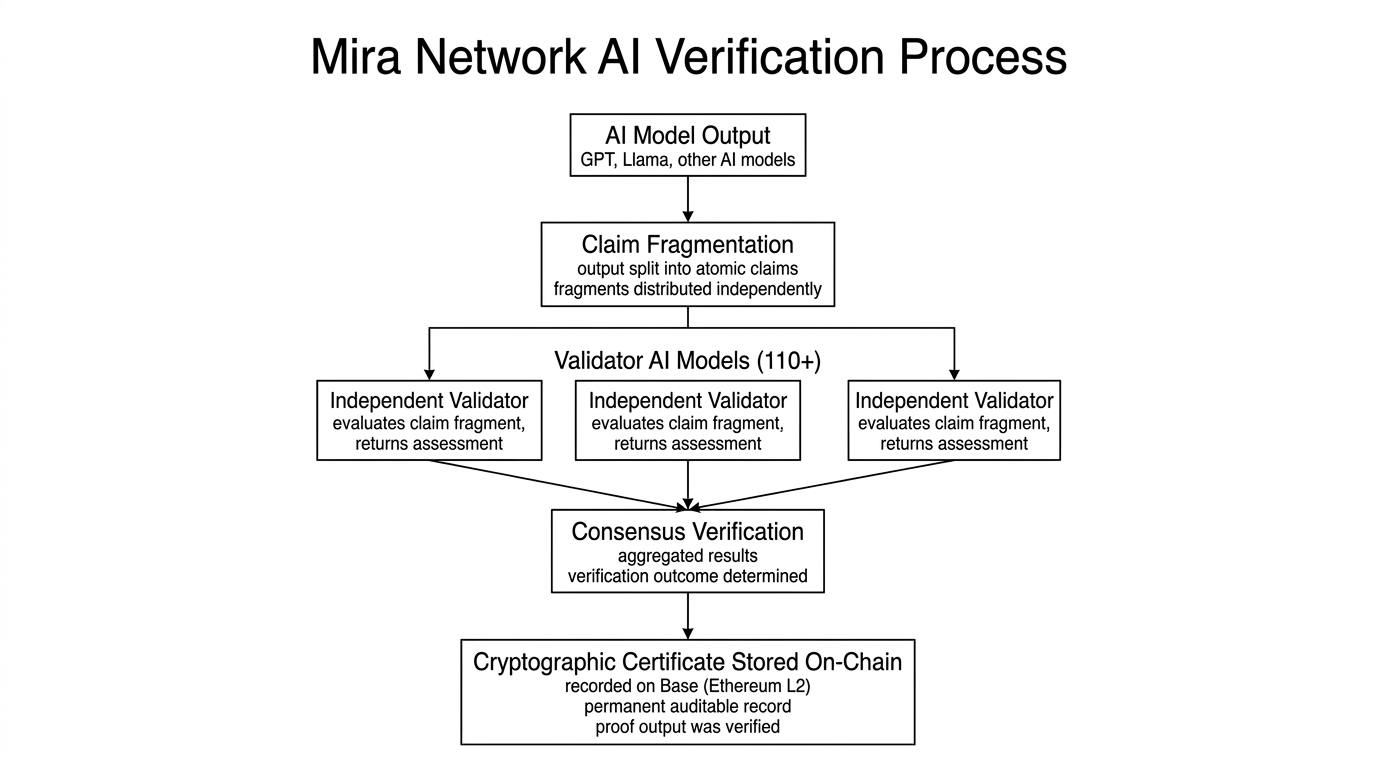

Mira is not trying to build a better AI model. That distinction is worth sitting with. The project is building a verification layer that sits above existing AI models entirely. It is source-agnostic, meaning it works regardless of which model produced the output. The protocol takes an AI response, breaks it into individual verifiable claims, and routes those fragments across a network of over 110 independent AI models that assess them separately without coordination. Consensus across those assessments produces a cryptographic certificate recorded permanently on Base, an Ethereum Layer 2 network. That certificate is the receipt the accountability chain currently lacks. It does not prove the output is correct with absolute certainty. It proves the output was independently checked by a decentralized network and that the result is auditable by anyone, forever. In regulated environments, that distinction between probably right and verifiably checked matters enormously.
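To make that flow concrete, here is a minimal sketch of the split, vote, and certify steps. Everything in it is an assumption for illustration: the function names, the sentence-based claim splitter, the two-thirds threshold, and the SHA-256 digest standing in for a certificate are hypothetical and are not taken from Mira's actual protocol or API.

```python
import hashlib
import json
from typing import Callable

# A validator is modeled as any function that judges one claim true or false.
Validator = Callable[[str], bool]


def split_into_claims(response: str) -> list[str]:
    """Naive stand-in for claim decomposition: one sentence per claim."""
    return [part.strip() for part in response.split(".") if part.strip()]


def reach_consensus(votes: list[bool], threshold: float = 2 / 3) -> bool:
    """A claim passes when the share of 'true' votes meets the threshold."""
    return sum(votes) / len(votes) >= threshold


def build_certificate(claims: list[str], verdicts: list[bool]) -> dict:
    """Hash the claims and verdicts so that later tampering is detectable."""
    payload = json.dumps({"claims": claims, "verdicts": verdicts}, sort_keys=True)
    digest = hashlib.sha256(payload.encode("utf-8")).hexdigest()
    return {"claims": claims, "verdicts": verdicts, "digest": digest}


def verify_response(response: str, validators: list[Validator]) -> dict:
    """Each validator assesses each claim independently, then votes are tallied."""
    claims = split_into_claims(response)
    verdicts = [reach_consensus([judge(c) for judge in validators]) for c in claims]
    return build_certificate(claims, verdicts)


# Toy validators: two check for a known fact, one approves everything.
toy_validators = [
    lambda claim: "Paris" in claim,
    lambda claim: True,
    lambda claim: "Paris" in claim,
]
certificate = verify_response(
    "Paris is the capital of France. Berlin is the capital of Spain.",
    toy_validators,
)
```

In this toy run the first claim passes and the second fails, and the digest changes if anyone edits a claim or a verdict afterwards. Recording that digest on a chain is the step that would make it publicly auditable, and it is not modeled here.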

What changed my thinking about whether this is serious infrastructure was understanding the fragmentation design more carefully. Each AI output is decomposed into atomic claim fragments before distribution. No single validator node ever receives the complete original content, only fragments. That architectural choice means a manipulation attack requires compromising enough independent nodes simultaneously to shift consensus, which is costly to coordinate and statistically detectable. The network is not relying on node operators to be honest because they agreed to terms of service. It is making dishonesty structurally difficult and economically irrational at the same time. A promise of honesty and a structure that punishes dishonesty are different kinds of security guarantees, and the structural one is considerably stronger.
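A rough way to see why simultaneous compromise is hard is to model it as sampling without replacement. The sketch below assumes each fragment goes to a random subset of validators and that an attacker needs a fixed number of votes inside that subset. Both the sampling model and every parameter value are assumptions for illustration, not documented Mira behavior.

```python
from math import comb


def capture_probability(
    total_nodes: int, malicious_nodes: int, sample_size: int, votes_needed: int
) -> float:
    """Hypergeometric tail: chance that a random sample of validators
    contains at least `votes_needed` attacker-controlled nodes."""
    honest_nodes = total_nodes - malicious_nodes
    favorable = sum(
        comb(malicious_nodes, m) * comb(honest_nodes, sample_size - m)
        for m in range(votes_needed, min(sample_size, malicious_nodes) + 1)
    )
    return favorable / comb(total_nodes, sample_size)


def capture_all_fragments(per_fragment: float, fragment_count: int) -> float:
    """Chance of capturing every fragment when samples are drawn independently."""
    return per_fragment ** fragment_count


# Hypothetical numbers: 110 nodes, 7 validators per fragment, 5 votes to flip it.
small_attacker = capture_probability(110, 10, 7, 5)
large_attacker = capture_probability(110, 40, 7, 5)
whole_output = capture_all_fragments(large_attacker, 6)
```

Under these assumptions the per-fragment odds rise with the attacker's share of nodes, but an attacker who must flip several fragments of the same output faces the product of those odds, which shrinks quickly.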

The MIRA token sits inside this security model in a way that is load-bearing rather than decorative. Validators stake MIRA to operate nodes. Honest verification earns protocol rewards. Incorrect assessments trigger slashing — automatic, code-enforced capital loss, not a governance discussion or a warning. Delegators backing misbehaving validators face the same exposure. That design is trying to solve the mercenary capital problem that plagues most infrastructure networks: participants who show up for yield and leave when it compresses, leaving the network with degraded security and thin participation exactly when stability matters most. Whether slashing achieves that filtering in practice, or whether it sits mostly dormant as theoretical deterrence while real bad actors slip through, remains the most important operational question about Mira that outside observers cannot yet answer.
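The stake-and-slash mechanics can be sketched as a small ledger. The class name, the proportional penalty, and the 10% slash fraction below are hypothetical choices for illustration. The source says only that slashing is automatic and that delegators share the exposure, not how the penalty is sized.

```python
from dataclasses import dataclass, field


@dataclass
class ValidatorStake:
    """Hypothetical ledger entry: a validator's own stake plus delegated stake."""

    own_stake: float
    delegations: dict[str, float] = field(default_factory=dict)

    def total_stake(self) -> float:
        return self.own_stake + sum(self.delegations.values())

    def slash(self, fraction: float) -> float:
        """Cut the validator and every delegator by the same fraction.
        Returns the total amount removed."""
        if not 0.0 <= fraction <= 1.0:
            raise ValueError("fraction must be between 0 and 1")
        removed = self.total_stake() * fraction
        self.own_stake *= 1.0 - fraction
        self.delegations = {
            name: amount * (1.0 - fraction)
            for name, amount in self.delegations.items()
        }
        return removed


# A validator with 1,000 MIRA of its own and 500 MIRA delegated by "alice".
node = ValidatorStake(own_stake=1000.0, delegations={"alice": 500.0})
removed = node.slash(0.10)
```

After the 10% slash the validator holds about 900, the delegator about 450, and roughly 150 is removed in total. The point of the sketch is that delegators lose capital alongside the validator they backed, which is what gives them a reason to choose validators carefully.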

Now let us talk about the friction problem because it is real and deserves honest treatment. Decentralized verification adds latency and cost relative to a direct model query. Mira's own documentation acknowledges this. For the majority of AI use cases — drafting, summarizing, customer service, content generation — that tradeoff makes no commercial sense. Nobody is going to pay a verification premium on a product description or a marketing email. The buyers who will pay are concentrated in a specific vertical: regulated industries where unverified AI outputs carry legal liability, compliance exposure, or patient safety risk. Healthcare systems, legal services, financial audit, government procurement. That is a narrower market than the total AI space, but it is a market where buyers have budget, urgency, and no viable alternative. The adoption question for Mira is really a question about how quickly those enterprise buyers move from awareness to production integration — and enterprise sales cycles are long, slow, and expensive to run.

The market picture today is uncomplicated. MIRA is trading at $0.0822, down 0.96% on the session, and sits below all three moving averages: EMA20 at $0.0834, EMA50 at $0.0857, and EMA200 at $0.0932. RSI14 is 41.95, which is weak but not yet oversold. MACD is showing a marginal positive divergence at 0.0003, which is worth noting without overstating. 24-hour volume reached 9.62 million MIRA, above recent averages, while an active Binance Square campaign is distributing 250,000 MIRA. BaseScan shows approximately 13,000 holders on Base against a 1 billion hard cap with roughly 22.5% circulating. The next scheduled unlock is March 26, when 10.48 million MIRA will be released across ecosystem reserve, foundation, and node reward allocations. That supply event is not large enough to be alarming in isolation, but it lands in a period of compressed price momentum and needs watching in context.
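The figures above are easier to weigh as ratios. This short calculation uses only the numbers quoted in the paragraph; the variable names are mine, and the circulating supply is derived from the stated 22.5% of the 1 billion cap rather than read from an on-chain source.

```python
# Figures quoted in the paragraph above.
MAX_SUPPLY = 1_000_000_000
CIRCULATING_SHARE = 0.225
UNLOCK_AMOUNT = 10_480_000
VOLUME_24H = 9_620_000
PRICE = 0.0822
EMAS = {"EMA20": 0.0834, "EMA50": 0.0857, "EMA200": 0.0932}


def pct(part: float, whole: float) -> float:
    """Express `part` as a percentage of `whole`."""
    return 100.0 * part / whole


# Circulating supply implied by the stated share of the hard cap.
circulating = MAX_SUPPLY * CIRCULATING_SHARE

# Size of the unlock and of daily volume relative to circulating supply.
unlock_pct = pct(UNLOCK_AMOUNT, circulating)
volume_pct = pct(VOLUME_24H, circulating)

# How far the price sits below each moving average (negative = below).
ema_gaps = {name: pct(PRICE - level, level) for name, level in EMAS.items()}

for name, gap in ema_gaps.items():
    print(f"{name}: {gap:.2f}%")
print(f"unlock vs circulating: {unlock_pct:.2f}%")
print(f"24h volume vs circulating: {volume_pct:.2f}%")
```

On these numbers the unlock is roughly 4.7% of the implied 225 million circulating supply, about the same size as one day of traded volume, and the price sits about 1.4%, 4.1%, and 11.8% below the 20, 50, and 200 period averages respectively.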

Where this gets interesting is the retention question. Mira's published network figures — 4.5 million users, billions of tokens processed daily — represent activity. But infrastructure retention is a different measurement. Retention means developers are paying for Verify API access in successive billing cycles because the integration is improving real outputs in production, not because they received a grant or an incentive to try it. It means node operators are maintaining stake and running verification infrastructure during quiet markets, not just during high-reward periods. The Irys partnership for permanent on-chain storage of verification certificates matters here precisely because it extends the useful life of those certificates into regulatory and legal contexts where a document needs to be auditable a decade from now, not just verifiable today. If that integration delivers and a serious enterprise client uses it for a compliance workflow, the project's trajectory changes in a way that a price chart cannot capture in advance.
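The retention measure described here can be written down precisely. The sketch below assumes a hypothetical record of which accounts paid in each billing cycle and which were subsidized by grants or incentives. Neither dataset is published by Mira, so the account names are placeholders.

```python
def organic_retention(
    paying_by_cycle: list[set[str]], subsidized: frozenset[str] = frozenset()
) -> list[float]:
    """For each consecutive pair of billing cycles, return the share of
    unsubsidized payers in the earlier cycle who paid again in the later one."""
    rates = []
    for previous, current in zip(paying_by_cycle, paying_by_cycle[1:]):
        organic_previous = previous - subsidized
        if not organic_previous:
            rates.append(0.0)  # no organic payers to retain in this cycle
            continue
        retained = organic_previous & current
        rates.append(len(retained) / len(organic_previous))
    return rates


# Placeholder accounts across three billing cycles.
cycles = [{"a", "b", "c", "d"}, {"a", "b", "e"}, {"a", "e"}]
raw_rates = organic_retention(cycles)
organic_rates = organic_retention(cycles, subsidized=frozenset({"b"}))
```

Separating subsidized accounts matters because a headline user count cannot distinguish a developer who stays for the product from one who stays for the incentive.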

The accountability gap that opened up when AI started making consequential decisions is not going to close because the technology gets more confident. Confidence and accuracy are not the same thing and never have been. What closes the gap is a mechanism for independent, verifiable, auditable proof that an output was checked before it was trusted. Mira is building that mechanism. Whether it ships fast enough, scales cleanly enough, and reaches enterprise buyers before the window narrows is genuinely uncertain. But the problem being solved is real, growing, and unlikely to become less urgent as AI moves further into systems where the cost of being wrong falls on people who never touched the model.
