I still remember the moment it clicked. A friend, a software engineer, was testing an AI model for a finance client. Simple request. Predict the next quarter’s market movement. The model spat out a number. She double-checked it. Then she tried to verify the computation itself. That’s when her jaw dropped. The cost of verification, of literally checking whether the model did what it claimed, was higher than the cost of running the model in the first place. That’s not just odd. That’s terrifying. Because if this is the future of AI, who’s paying for trust? And who gets to decide what is true?

Enter Fabric Protocol, with its token $ROBO. Think of it less like a flashy crypto stunt and more like a quiet experiment in making AI accountable without giving all the power to a single corporation. The premise is deceptively simple: distribute compute and verification tasks across a network of nodes. Sounds like blockchain 101 applied to AI. But the devil, as always, is in the execution. Running AI is expensive. Verifying it? Usually more so. Fabric wants to flip that equation.

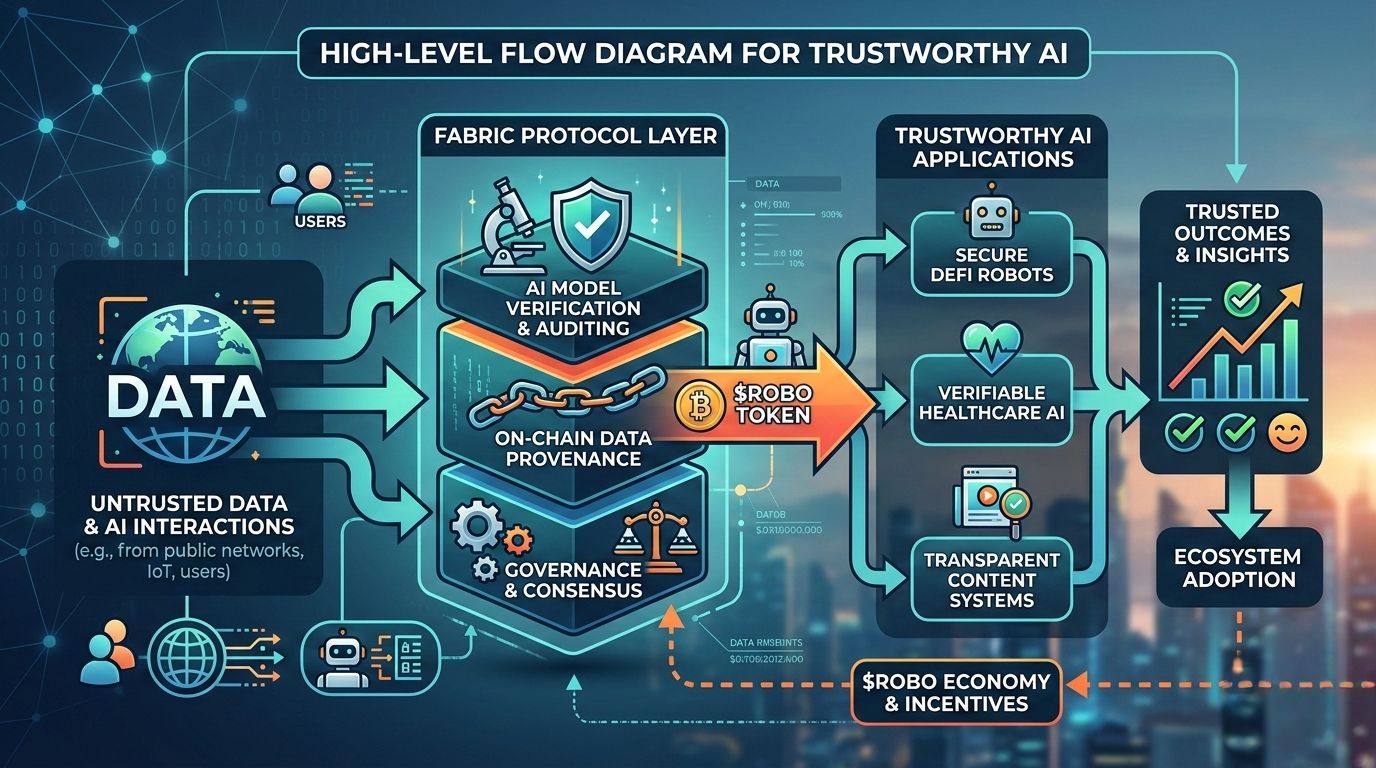

Here’s how it works. A user asks for an AI output: a market report, a climate simulation, a scientific prediction. That request enters the network and gets assigned to a node. The node runs the computation, and other nodes check that the math, the model, and the inputs were all correct. Only then does the result reach the user. ROBO enters here as the grease: rewarding nodes, incentivizing validators, making the entire system tick. The logic is elegant, but elegant rarely means easy.
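
To make that flow concrete, here is a minimal Python sketch of the request, compute, verify, deliver loop. It is an illustration under loud assumptions, not Fabric’s actual protocol: the names `Node`, `serve_request`, `toy_model`, and `quorum` are all hypothetical, the "model" is a deterministic weighted sum, and verification is done by naive re-execution plus hash comparison, which is exactly the expensive approach the article worries about.

```python
import hashlib
import json
from dataclasses import dataclass
from typing import Callable


def digest(model_id: str, inputs: dict, output: float) -> str:
    """Fingerprint the model name, inputs, and output so results can be compared."""
    payload = json.dumps(
        {"model": model_id, "inputs": inputs, "output": output}, sort_keys=True
    )
    return hashlib.sha256(payload.encode()).hexdigest()


@dataclass
class Node:
    name: str
    run: Callable[[dict], float]  # the computation this node actually performs


def toy_model(inputs: dict) -> float:
    # Hypothetical stand-in for an AI model: a deterministic weighted sum.
    return round(0.7 * inputs["x"] + 0.3 * inputs["y"], 6)


def faulty_model(inputs: dict) -> float:
    # A node that returns a wrong answer, by mistake or on purpose.
    return toy_model(inputs) + 1.0


def serve_request(inputs, worker, validators, model_id="toy-v1", quorum=2):
    """Run the job on one node, then release it only if enough validators agree."""
    output = worker.run(inputs)
    claimed = digest(model_id, inputs, output)
    # Assumption: each validator re-runs the job and compares fingerprints.
    approvals = sum(
        1 for v in validators if digest(model_id, inputs, v.run(inputs)) == claimed
    )
    if approvals < quorum:
        raise ValueError(f"only {approvals} of {len(validators)} validators agreed")
    return output


if __name__ == "__main__":
    validators = [Node("val-1", toy_model), Node("val-2", toy_model)]
    request = {"x": 1.0, "y": 2.0}
    print(serve_request(request, Node("node-a", toy_model), validators))
    try:
        serve_request(request, Node("node-b", faulty_model), validators)
    except ValueError as err:
        print("rejected:", err)
```

Note what this toy version costs: every validator repeats the full computation, so two validators mean roughly three times the compute of a single run. Any real system would need something cheaper than blind re-execution to flip the equation.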

Consider the hurdles. Verification at scale is brutal. Large models generate outputs so complex that repeating computations for every node verification could choke even the most robust infrastructure. Then there’s latency. A network spread across thousands of nodes is not exactly a speed demon. Add to that the challenge of keeping token incentives aligned — if the rewards aren’t balanced, nodes could game the system, fake computations, or sit idle. And don’t forget security: in a decentralized AI world, a few malicious actors could potentially throw off results or manipulate outputs.

Yet there’s something compelling here. Imagine AI outputs you can actually trust because the computation can be verified independently. Picture a scientist running a climate model or a biotech researcher simulating protein folding, knowing the result didn’t come from a black box, but a distributed, verifiable system. Or think of financial institutions using AI predictions that carry audit trails baked into the network. Fabric is attempting to build not just infrastructure, but a layer of accountability.

The economic angle is fascinating, too. ROBO isn’t just a token to hype investors; it’s the glue. Nodes earn ROBO for contributing compute. Validators earn it for verification. Users pay it to access AI services. There’s a self-reinforcing loop here: if the tokenomics are designed well, the network could run on autopilot, with incentives naturally steering participants toward honest behavior. But if mismanaged, the whole thing collapses like a house of cards. Token economics in practice is always messier than theory.
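
The loop is easier to see with numbers. The sketch below is a toy ledger, not Fabric’s real tokenomics: the function name `settle`, the 60/40 split between the compute node and the validators, and the integer token units are all assumptions made up for illustration.

```python
def settle(balances: dict, user: str, worker: str, validators: list,
           fee: int, worker_pct: int = 60) -> dict:
    """Move one request's fee from the user to the worker and its validators.

    Assumption: the worker keeps worker_pct percent of the fee and the
    validators split the rest evenly. Amounts are whole token units.
    """
    if not validators:
        raise ValueError("at least one validator is required")
    if balances.get(user, 0) < fee:
        raise ValueError("user cannot cover the fee")

    updated = dict(balances)  # leave the caller's ledger untouched
    updated[user] -= fee

    worker_cut = fee * worker_pct // 100
    per_validator, leftover = divmod(fee - worker_cut, len(validators))

    # Any indivisible remainder goes to the worker so no tokens vanish.
    updated[worker] = updated.get(worker, 0) + worker_cut + leftover
    for v in validators:
        updated[v] = updated.get(v, 0) + per_validator
    return updated


if __name__ == "__main__":
    ledger = {"user": 100}
    print(settle(ledger, "user", "node-a", ["val-1", "val-2"], fee=10))
```

Even in this toy version the delicate part is visible: the split is a single parameter, and setting it too low for validators or too high for workers changes who bothers to show up.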

And yet, the promise is undeniable. Traditional AI is centralized. That centralization has perks: speed, uniformity, simplicity. But it also carries enormous risks. A single flawed model, a rogue update, or biased training data can have cascading consequences. Decentralized AI attempts to spread risk, distribute control, and provide verifiable outputs. It’s a hedge against blind trust.

But let’s be real. This is early-stage work. Fabric is building on ideas that are technically complex and economically delicate. The verification methods, the distributed computation protocols, the incentive mechanisms: all of it is untested at the scale the world might demand. Yet if even part of this vision works, it could redefine how we consume AI, how businesses trust it, and how global compute resources are used.

The “so what?” is tangible. For end users, it could mean AI you can audit. For developers, it’s a chance to tap global compute without owning a datacenter. For businesses, it’s potential accountability baked into AI outputs. For investors and observers, it’s a peek into a system that treats AI not just as a tool, but as infrastructure, and infrastructure needs trust as much as it needs power.

In the end, Fabric Protocol and $ROBO are asking questions we’ve mostly ignored: Who owns AI? Who verifies it? Who decides what’s right? The answers aren’t simple. The system isn’t perfect. But the pursuit is worth watching. Because the future of AI isn’t just about intelligence; it’s about credibility, verifiability, and whether we can trust what these increasingly autonomous systems tell us. That’s the story Fabric is trying to write, and we’re just at the first chapter.