Artificial intelligence has entered an era where its capabilities feel almost magical. Systems developed by companies like OpenAI, Google DeepMind, and Anthropic can write essays, analyze markets, generate code, and even simulate human reasoning with impressive fluency. Tools such as ChatGPT demonstrate how quickly AI can transform raw information into coherent narratives. Yet the more I observe these systems, the more I notice a fundamental contradiction hidden beneath their intelligence: they can sound incredibly confident while being completely wrong.

The problem is not a small technical bug. It is something deeper. Large language models do not truly “know” facts in the way humans do. They predict patterns in language. Their job is to produce the most statistically likely sequence of words based on training data. When they generate an answer, they are not verifying truth; they are estimating probability.

This is why hallucinations happen.

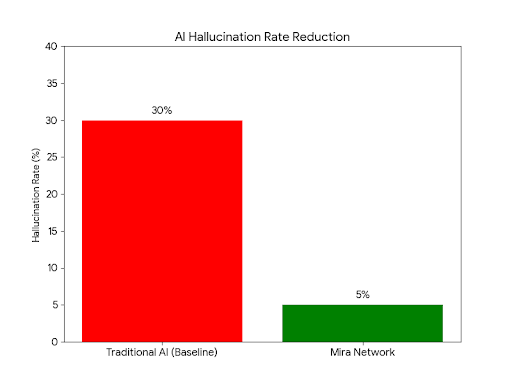

Sometimes the model fills in gaps with information that sounds perfectly reasonable but is entirely fabricated. A research paper might be cited that never existed. A statistic may appear convincing but have no real source. In casual conversation this might be harmless, even amusing. But once AI begins operating in critical environments—medicine, finance, governance, autonomous systems—the consequences become serious.

I often think about this moment in AI history as a transition from impressive tools to decision-making systems. And that transition forces a new question: how can we trust machine-generated knowledge?

For me, this is where the concept behind Mira Network becomes fascinating. Instead of trying to build a single AI model that never makes mistakes—a goal that may be unrealistic—the idea is to build an infrastructure that verifies AI outputs.

In other words, instead of asking whether an AI is intelligent, the system asks whether its answer can be proven.

The architecture borrows ideas from decentralized networks originally pioneered by Bitcoin and later expanded by ecosystems like Ethereum. Those systems solved a different but related problem: how to create trust in an environment where participants do not know each other.

Blockchains achieve this by distributing verification across many independent nodes. No single authority decides which transactions are valid. Instead, the network reaches consensus.

Mira attempts to apply a similar philosophy to artificial intelligence.

When an AI produces an output, the system breaks the response into smaller claims. Each claim can then be evaluated independently. These claims are distributed across a network of different AI models and verification agents that attempt to validate them.

Instead of trusting one model, the system relies on multiple perspectives.

If enough independent verifiers agree on the accuracy of a claim, the information becomes cryptographically validated. What begins as a probabilistic answer gradually transforms into something closer to verified knowledge.
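To make the flow concrete for myself, I sketched it in a few lines of Python. This is a toy illustration of the idea described above, not Mira's actual implementation: the sentence-based claim splitter, the `make_verifier` helper, and the two-thirds `quorum` are all assumptions of mine, and the cryptographic attestation step is left out entirely.

```python
from typing import Callable, Dict, Iterable, List

# A verifier takes one claim and returns True (supported) or False (not supported).
Verifier = Callable[[str], bool]


def split_into_claims(response: str) -> List[str]:
    """Naive splitter: treat each sentence as one claim (a stand-in for real decomposition)."""
    return [part.strip() for part in response.split(".") if part.strip()]


def verify_claim(claim: str, verifiers: List[Verifier], quorum: float = 2 / 3) -> bool:
    """Accept a claim only if at least `quorum` of the verifiers vote for it."""
    votes = [verifier(claim) for verifier in verifiers]
    return sum(votes) / len(votes) >= quorum


def verify_response(response: str, verifiers: List[Verifier], quorum: float = 2 / 3) -> Dict[str, bool]:
    """Split a response into claims and run each claim through the verifier set."""
    return {claim: verify_claim(claim, verifiers, quorum) for claim in split_into_claims(response)}


def make_verifier(known_facts: Iterable[str]) -> Verifier:
    """Hypothetical verifier: supports a claim only if it is in its own list of facts."""
    facts = {fact.lower() for fact in known_facts}
    return lambda claim: claim.lower() in facts


# Two careful verifiers and one that also believes a false statement.
careful_a = make_verifier(["Paris is the capital of France"])
careful_b = make_verifier(["Paris is the capital of France"])
credulous = make_verifier(["Paris is the capital of France", "The Moon is made of cheese"])

result = verify_response(
    "Paris is the capital of France. The Moon is made of cheese.",
    [careful_a, careful_b, credulous],
)
print(result)
```

In this toy run the first claim gets three of three votes and passes, while the second gets one of three and is rejected. A single credulous verifier cannot push a false claim through on its own.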

From my perspective, this shift changes how we should think about AI entirely. Today most discussions focus on building better models—larger datasets, more parameters, more computational power. But verification layers introduce a new dimension. Rather than perfecting intelligence itself, we build systems that check intelligence.

It is a subtle but powerful idea.

Researchers at institutions like MIT and Stanford University have increasingly argued that reliable AI may require exactly this kind of architecture. The future might not be dominated by one superintelligent model but by networks of models that continuously verify each other.

I find this perspective both elegant and pragmatic.

Instead of chasing perfection, it embraces the reality that errors will always exist. What matters is building systems that detect and correct them.

Another intriguing aspect of the Mira approach is its economic structure. Verification is not just a technical process; it is incentivized. Participants who correctly validate claims receive rewards, while inaccurate verification can lead to penalties.

In theory, this creates a marketplace for truth.

Economic incentives encourage participants to act honestly because accuracy becomes profitable. The system attempts to align financial motivation with informational integrity.
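A rough way to see why accuracy could become profitable is to write the settlement rule down. The sketch below is my own simplification, not Mira's published mechanism: the `reward` and `penalty` amounts, the `settle` function, and the idea that a verifier is scored against the network's final outcome are all assumptions made for illustration.

```python
from typing import Dict


def settle(
    votes: Dict[str, bool],
    outcome: bool,
    stakes: Dict[str, float],
    reward: float = 1.0,
    penalty: float = 2.0,
) -> Dict[str, float]:
    """Return updated stakes: voters who match the final outcome gain `reward`,
    the others lose `penalty` (stakes never drop below zero)."""
    updated = {}
    for name, vote in votes.items():
        delta = reward if vote == outcome else -penalty
        updated[name] = max(0.0, stakes[name] + delta)
    return updated


def expected_payoff(accuracy: float, reward: float = 1.0, penalty: float = 2.0) -> float:
    """Average gain per claim for a verifier that matches the outcome with probability `accuracy`."""
    return accuracy * reward - (1 - accuracy) * penalty


stakes = {"a": 10.0, "b": 10.0, "c": 10.0}
votes = {"a": True, "b": True, "c": False}
print(settle(votes, outcome=True, stakes=stakes))
print(expected_payoff(0.9), expected_payoff(0.5))
```

With these made-up numbers, a verifier that is right 90% of the time earns on average, while one that guesses at random loses stake on average. Setting the penalty larger than the reward is what makes guessing a losing strategy here.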

But this is also where deeper questions emerge.

Markets can be powerful tools for coordination, yet they are not immune to manipulation. History shows that decentralized systems sometimes develop power concentrations. Mining pools in blockchain networks, validator cartels, and coordinated governance attacks all demonstrate how economic incentives can be exploited.

So I wonder: what happens if similar dynamics emerge in AI verification networks?

Imagine a situation where many verification agents are trained on the same flawed dataset. They might collectively reinforce the same incorrect assumption. Consensus could emerge—not because the claim is true, but because the verifiers share the same bias.

This reveals a profound challenge that technology alone cannot solve.

Verification systems depend on the diversity and independence of their participants. Without that diversity, consensus can become an echo chamber.
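A small calculation shows how much independence matters. The sketch below compares two idealized extremes that I am assuming for illustration: verifiers whose errors are fully independent, and verifiers that share one bias and therefore always err together. Real networks would sit somewhere in between, and the 10% error rate is a made-up number.

```python
from math import comb


def majority_wrong_independent(n: int, error_rate: float) -> float:
    """Probability that more than half of n independent verifiers are wrong on a claim."""
    first_majority = n // 2 + 1
    return sum(
        comb(n, k) * error_rate**k * (1 - error_rate) ** (n - k)
        for k in range(first_majority, n + 1)
    )


def majority_wrong_shared_bias(error_rate: float) -> float:
    """If every verifier errs on exactly the same claims, the majority is wrong
    whenever any single verifier is wrong, so adding verifiers does not help."""
    return error_rate


independent = majority_wrong_independent(5, 0.1)
shared = majority_wrong_shared_bias(0.1)
print(f"independent: {independent:.5f}, shared bias: {shared:.5f}")
```

With five verifiers that are each wrong 10% of the time, an independent majority is wrong on roughly 0.9% of claims, while a fully correlated group is still wrong on 10% of them. Consensus only adds reliability to the extent that the verifiers fail in different places.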

Still, despite these complexities, the broader direction feels inevitable. As AI moves deeper into critical infrastructure, society will demand stronger guarantees about the reliability of machine-generated information.

Trust will no longer be optional.

We are already seeing early examples of verification layers emerging in other domains. Autonomous vehicles cross-check sensor data from cameras, radar, and lidar to confirm what they perceive. Financial algorithms use multiple risk models to verify trading decisions. Even search engines developed by Google increasingly compare outputs across systems before presenting answers.

These systems follow the same underlying principle: intelligence should not operate without verification.

Projects like Mira suggest that AI itself might eventually operate on top of verification networks. Instead of a single model answering questions, multiple agents could collaborate, challenge, and confirm information before it reaches the user.

If that vision becomes reality, artificial intelligence will evolve into something more accountable.

Not just a generator of answers, but a participant in a distributed process that continuously tests the validity of knowledge.
