We are living through an uncomfortable moment in the evolution of artificial intelligence. Generative models like GPT and Llama stun us with their fluency, yet they also introduce a profound ethical problem: their outputs come with no verifiable accountability. As AI systems begin making decisions with real-world consequences, from legal summaries to medical advice, we face a terrifying reality: we are often just taking a machine’s word for it.

The problem isn't just that a model "hallucinates." It's that today's language models are trained to produce plausible, fluent text, not to check whether that text is true. A probabilistic model can state a falsehood in the same assured tone as a fact, and because it sounds fluent, we are conditioned to believe it. This is where the @mira_network integration becomes less about technical API calls and more about fundamental human safety.

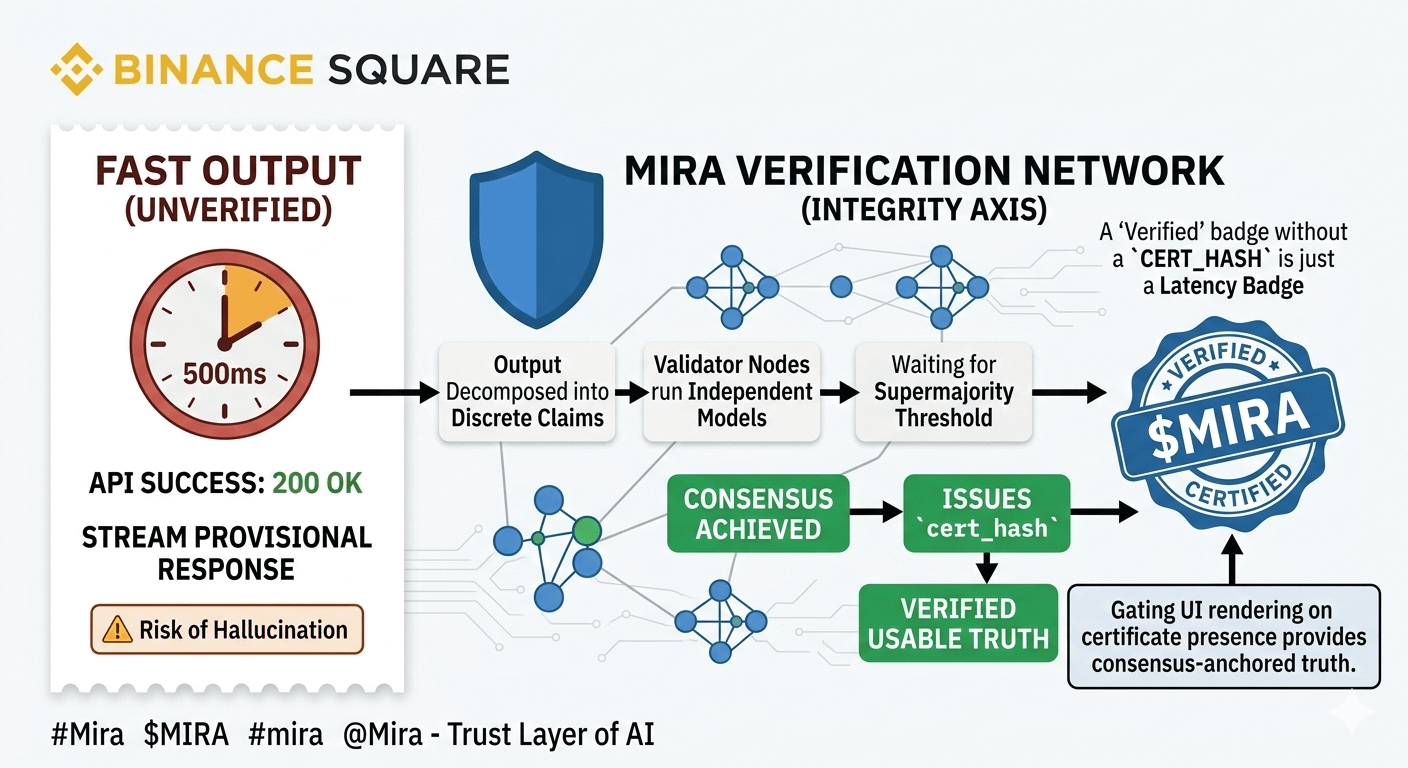

Mira's decentralized verification layer supplies the moral compass these models lack. When a critical output is generated, it shouldn't just be rendered and consumed. It needs a cryptographic anchor, the $MIRA cert_hash, that proves it has passed distributed consensus. Without this verification, we are simply amplifying unproven claims. The industry must move beyond "just fast" AI and embrace "accountable AI."
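To make the "don't just render and consume" idea concrete, here is a minimal Python sketch of a client-side gate that refuses to display an output unless it carries a cert_hash. Everything in it is an assumption for illustration: the `VerifiedResponse` shape, the field names, and the 64-character hex format are hypothetical, not Mira's documented API. A well-formed hash is also not proof on its own; a real integration would still have to check the certificate against the network.

```python
import re
from dataclasses import dataclass
from typing import Optional


@dataclass
class VerifiedResponse:
    """Hypothetical response shape; field names are assumptions, not a real API."""
    status: int
    text: str
    cert_hash: Optional[str] = None


class UnverifiedOutputError(Exception):
    """Raised when an output lacks a usable verification certificate."""


# Assumption: the certificate hash is a 64-character lowercase hex digest.
_HEX64 = re.compile(r"^[0-9a-f]{64}$")


def release_output(resp: VerifiedResponse) -> str:
    """Return the text only if the response carries a well-formed cert_hash."""
    # A success status alone says the request completed, not that the content was verified.
    if resp.status != 200:
        raise UnverifiedOutputError(f"request failed with status {resp.status}")
    if not resp.cert_hash or not _HEX64.match(resp.cert_hash):
        raise UnverifiedOutputError("no valid cert_hash; refusing to display output")
    # This checks format only. Validating the certificate itself is out of scope here.
    return resp.text
```

The design choice is to fail closed: an output without a certificate raises an error instead of being shown with a warning, so unverified text never reaches the reader by default.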

A system is only as trustworthy as its verification layer. If we fail to establish that layer now, the ethical rot in AI will become systemic, and public trust will collapse. #Mira isn’t just building technology; it is building the necessary infrastructure for ethical AI usage, and we must all pay close attention.

"The Gap Between 'Fast' and 'Right'. Most AI today is built for speed, but speed without verification is just a fast way to spread misinformation. As shown in the chart above, @mira_network introduces a mandatory 'Integrity Axis.' We shouldn't settle for an API that just says '200 OK.' We need the $MIRA cert_hash to prove that the data has survived scrutiny from a distributed network of validators. In the world of #Mira, truth isn't probabilistic—it's anchored."