Verifying Machine Work Before Trusting Machine Power

What stands out to me about Fabric is that it is not mainly trying to put AI on a blockchain for branding purposes. It is trying to use a ledger as a public verification and coordination layer for robot identity, task execution, payments, and human oversight, with ROBO positioned as the token that ties those functions together.

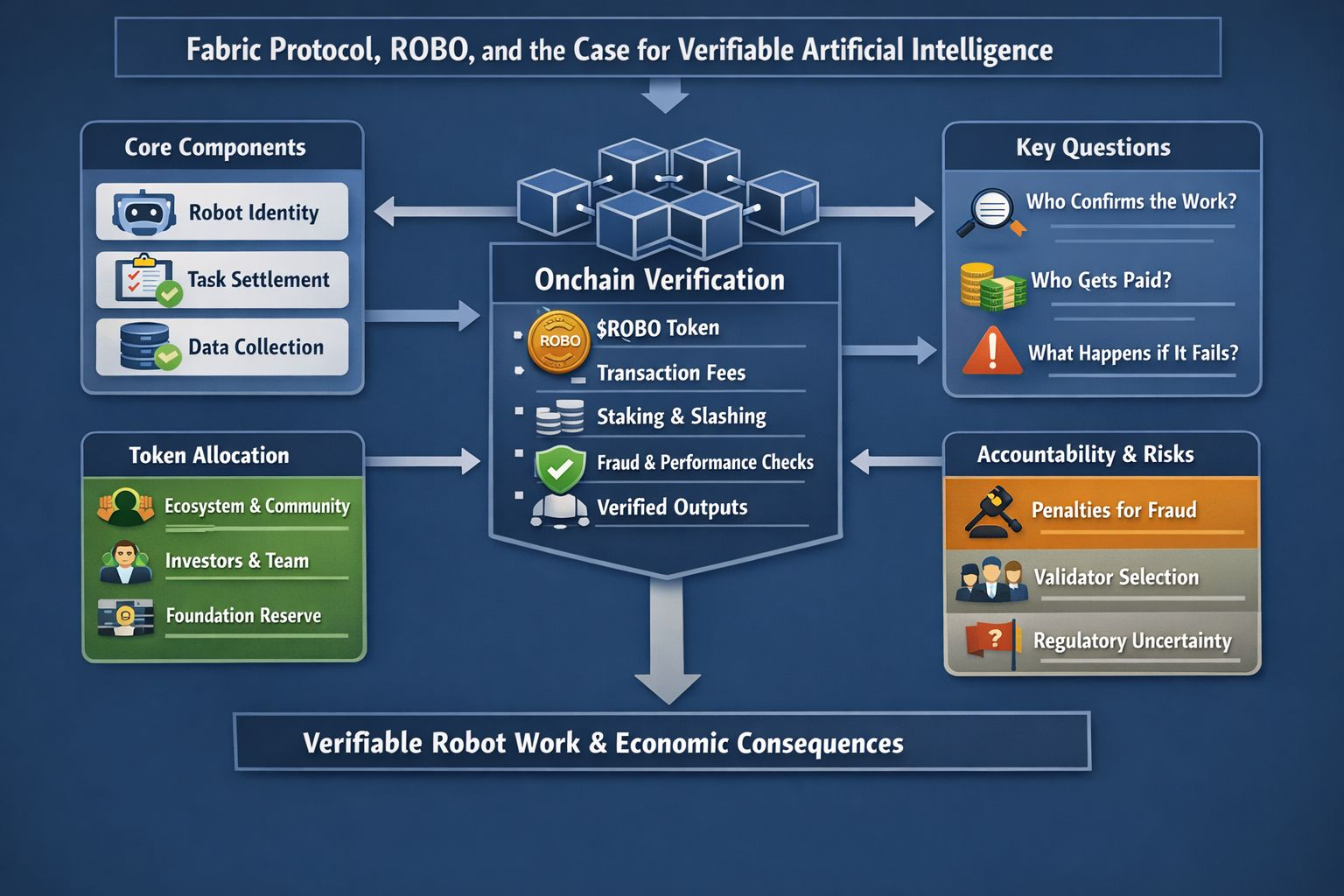

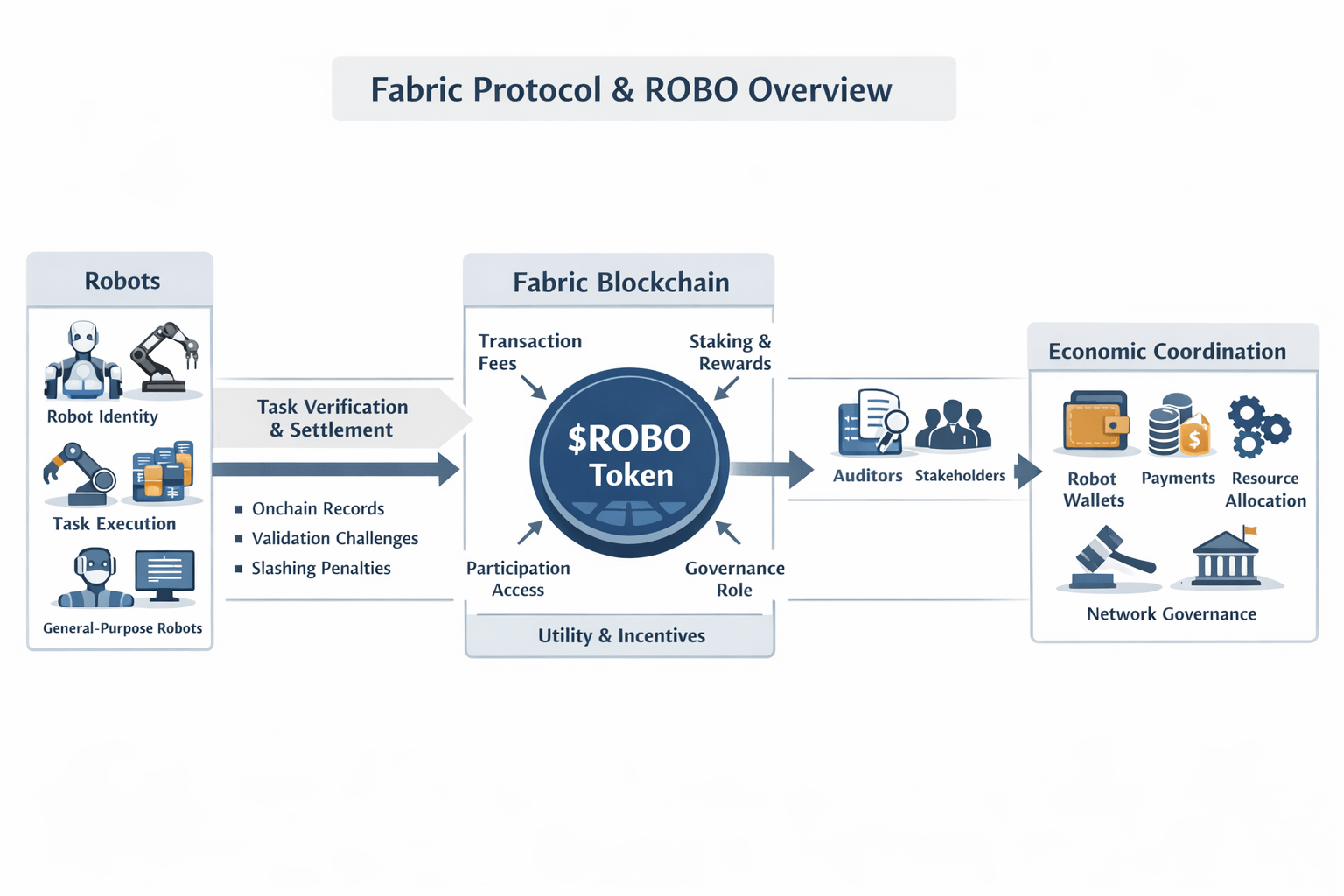

I read the project less as a robotics startup and more as an attempt to build rules for machine participation in economic life. Fabric’s own framing is unusually direct. The protocol is meant to build, govern, own, and evolve general-purpose robots through public ledgers, while the Foundation describes its broader mission as creating infrastructure for open and verifiable human-to-machine alignment. That matters because it shifts the focus from AI capability alone to practical questions: who confirms the work, who gets paid for it, and what people can do if the system breaks.

The mechanism is fairly plain once I strip away the grand language. Robots need persistent identity, wallets, and a way to prove that a task was completed under agreed conditions. Fabric says those functions should live onchain, with $ROBO used for transaction fees, identity-related activity, verification, participation staking, and eventually governance over operational policies such as fees. In that design, the blockchain does not replace the robot’s intelligence. It supplies a shared record and settlement layer around the robot’s behavior.
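The mechanism above can be sketched as a toy in-memory ledger. Everything here is hypothetical: the `RobotIdentity` and `Ledger` classes, the `settle_task` function, the fee sink name, and the choice to store hashes of the agreed conditions and evidence are illustrative assumptions, not Fabric’s actual contracts or API.

```python
import hashlib
import json
from dataclasses import dataclass, field


def digest(record: dict) -> str:
    """Deterministic SHA-256 of a JSON-serialisable dict (keys sorted)."""
    return hashlib.sha256(json.dumps(record, sort_keys=True).encode()).hexdigest()


@dataclass(frozen=True)
class RobotIdentity:
    robot_id: str  # hypothetical persistent identifier
    wallet: str    # hypothetical address that receives ROBO


@dataclass
class Ledger:
    balances: dict = field(default_factory=dict)  # wallet -> ROBO, integer units
    receipts: list = field(default_factory=list)  # append-only settlement records
    fee_sink: str = "protocol-fees"               # hypothetical fee destination

    def _move(self, src: str, dst: str, amount: int) -> None:
        self.balances[src] = self.balances.get(src, 0) - amount
        self.balances[dst] = self.balances.get(dst, 0) + amount

    def settle_task(self, payer: str, robot: RobotIdentity, task_id: str,
                    conditions: dict, evidence: dict, price: int, fee: int) -> dict:
        # Check the full debit up front so a failed settlement changes nothing.
        if self.balances.get(payer, 0) < price + fee:
            raise ValueError(f"insufficient ROBO in {payer}")
        self._move(payer, robot.wallet, price)
        self._move(payer, self.fee_sink, fee)
        # Store hashes, not raw data: anyone holding the originals can
        # later check them against this public record.
        receipt = {
            "robot_id": robot.robot_id,
            "task_id": task_id,
            "conditions_hash": digest(conditions),
            "evidence_hash": digest(evidence),
            "price": price,
            "fee": fee,
        }
        self.receipts.append(receipt)
        return receipt


ledger = Ledger(balances={"customer": 1_000})
bot = RobotIdentity("robot-001", "wallet-robot-001")
ledger.settle_task("customer", bot, "task-42",
                   conditions={"deliver_to": "dock 3", "deadline_min": 30},
                   evidence={"photo_sha256": "hypothetical"},
                   price=100, fee=2)
print(ledger.balances)  # {'customer': 898, 'wallet-robot-001': 100, 'protocol-fees': 2}
```

Note what the record does and does not establish: it shows who paid whom under which committed conditions, but whether the evidence actually proves the work still depends on a separate verification and challenge layer.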

That is where the title’s real meaning sits for me. Fabric is not claiming that a ledger verifies intelligence in the abstract. It is trying to verify bounded outputs of machine systems inside an economic process. The whitepaper repeatedly centers task settlement, structured data collection, fraud challenges, slashing, and contribution tracking. So the operative question is not whether the chain can prove a robot is generally “smart.” It is whether the network can make narrower claims legible: this robot did the work, this data was submitted, this contribution improved the system, this payment should settle, this failure should be penalized.
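One way to make those narrow claims inspectable is an optimistic lifecycle: a claim stands unless someone challenges it within a fixed window. This is an assumption for illustration. The claim kinds mirror the list above, but the `Claim` class, the window length, and the resolution rule are hypothetical, not Fabric’s specified process.

```python
from dataclasses import dataclass
from enum import Enum


class Kind(Enum):
    # The narrow claim types from the paragraph above.
    WORK_DONE = "this robot did the work"
    DATA_SUBMITTED = "this data was submitted"
    CONTRIBUTION = "this contribution improved the system"
    PAYMENT_DUE = "this payment should settle"
    FAILURE = "this failure should be penalized"


class Status(Enum):
    PENDING = "pending"
    CHALLENGED = "challenged"
    ACCEPTED = "accepted"
    REJECTED = "rejected"


@dataclass
class Claim:
    kind: Kind
    subject: str        # e.g. a robot or task identifier (hypothetical)
    submitted_at: int   # block height, in arbitrary units
    window: int         # hypothetical challenge window length, same units
    status: Status = Status.PENDING

    def window_open(self, now: int) -> bool:
        return now < self.submitted_at + self.window

    def challenge(self, now: int) -> None:
        if self.status is not Status.PENDING or not self.window_open(now):
            raise ValueError("claim is not open to challenge")
        self.status = Status.CHALLENGED

    def resolve(self, now: int, challenge_upheld: bool = False) -> Status:
        if self.status is Status.CHALLENGED:
            # A challenged claim is decided on the evidence, not by timeout.
            self.status = Status.REJECTED if challenge_upheld else Status.ACCEPTED
        elif self.status is Status.PENDING and not self.window_open(now):
            # Nobody objected in time, so the claim stands.
            self.status = Status.ACCEPTED
        return self.status


claim = Claim(Kind.WORK_DONE, "robot-001/task-42", submitted_at=100, window=50)
print(claim.resolve(now=120))  # Status.PENDING (window still open)
print(claim.resolve(now=150))  # Status.ACCEPTED
```

The design choice here is that silence counts as acceptance, which keeps routine claims cheap and concentrates verification cost on the disputed ones.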

I think that distinction is important because it makes the project more serious than many AI-token pairings, but it also makes the job harder. Verifying outputs in the physical world is expensive and messy. Fabric’s answer is a mix of operational data, challenge mechanisms, staking, slashing, and what it calls verified work. The whitepaper describes penalties for proven fraud, availability failure, and quality degradation, including slashing ranges of 30% to 50% for fraudulent work and a 5% bond slash for availability failure in certain conditions. Those numbers show that the protocol is not designed as a loose reputation system. It is trying to impose enforceable economic consequences on robot operators and validators.
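The penalty figures are easy to turn into arithmetic. The 30% to 50% fraud range and the 5% availability slash are the numbers quoted above; how a specific rate inside the fraud range gets chosen is not described here, so the `severity` parameter and its linear interpolation are hypothetical, as is the `slash_amount` function itself.

```python
from fractions import Fraction

# Rates quoted in the text above.
FRAUD_MIN = Fraction(30, 100)
FRAUD_MAX = Fraction(50, 100)
AVAILABILITY_RATE = Fraction(5, 100)


def slash_amount(bond: int, offense: str, severity: Fraction = Fraction(0)) -> int:
    """ROBO slashed from `bond` (integer units, rounded down).

    `severity` in [0, 1] picks a point in the 30%-50% fraud range by linear
    interpolation; that rule is a hypothetical stand-in, not Fabric's.
    """
    if offense == "fraud":
        if not 0 <= severity <= 1:
            raise ValueError("severity must be between 0 and 1")
        rate = FRAUD_MIN + (FRAUD_MAX - FRAUD_MIN) * severity
    elif offense == "availability":
        # The text says this applies only "in certain conditions",
        # which this sketch does not model.
        rate = AVAILABILITY_RATE
    else:
        raise ValueError(f"unknown offense: {offense!r}")
    # Exact rational arithmetic, then floor division to whole token units.
    return bond * rate.numerator // rate.denominator


print(slash_amount(10_000, "fraud"))                  # 3000 (30%)
print(slash_amount(10_000, "fraud", Fraction(1, 2)))  # 4000 (40%)
print(slash_amount(10_000, "fraud", Fraction(1)))     # 5000 (50%)
print(slash_amount(10_000, "availability"))           # 500 (5%)
```

Using `Fraction` keeps the rate exact, so rounding happens once, at the final conversion to whole token units.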

ROBO matters inside that structure because Fabric is making the token do more than one job. According to the Foundation’s February 2026 material, all transaction fees are intended to be paid in $ROBO, participants coordinating robot genesis must stake it, developers and businesses entering the network are expected to buy and stake a fixed amount, and rewards are paid for verified work across skills, tasks, data, compute, and validation. The token, in other words, is being cast as the unit that prices access, secures participation, and routes incentives across the network. I read that as a deliberate attempt to bind token demand to actual protocol use rather than leave it floating as a narrative asset. Whether that linkage holds in practice is still unresolved.
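A toy model shows how one token can price access, secure participation, and route incentives at the same time. The fixed entry stake and the five reward categories come from the paragraph above; the function names, the equal weighting of categories, and the pro-rata split of a reward pool are hypothetical simplifications, not Fabric’s published reward formula.

```python
# Reward categories named in the Foundation's material, per the text above.
CATEGORIES = frozenset({"skills", "tasks", "data", "compute", "validation"})


def can_enter(staked: int, required_stake: int) -> bool:
    """Hypothetical access gate: hold at least the fixed entry stake."""
    return staked >= required_stake


def distribute_rewards(pool: int, verified_work: dict) -> dict:
    """Split `pool` ROBO pro rata by verified work units.

    `verified_work` maps participant -> {category: units}. Weighting every
    category equally is a hypothetical simplification. Floor division can
    leave a few units of dust undistributed.
    """
    totals = {}
    for who, work in verified_work.items():
        unknown = set(work) - CATEGORIES
        if unknown:
            raise ValueError(f"{who}: unknown categories {sorted(unknown)}")
        totals[who] = sum(work.values())
    grand_total = sum(totals.values())
    if grand_total == 0:
        return {who: 0 for who in totals}
    return {who: pool * units // grand_total for who, units in totals.items()}


print(can_enter(staked=5_000, required_stake=10_000))  # False
print(distribute_rewards(1_000, {
    "alice": {"tasks": 3, "data": 1},
    "bob": {"validation": 4},
    "carol": {"compute": 2},
}))  # {'alice': 400, 'bob': 400, 'carol': 200}
```

In this framing, demand for the token comes from two places that both require real usage: participants must hold the stake to get in, and rewards only flow against work that has been verified.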

The current data tells a useful story about priorities. The whitepaper caps total supply at 10 billion ROBO, allocated across community use, investors, the team, the Foundation’s reserve, free token distribution, market launch needs, and a small public sale.

The percentages matter because they show Fabric is not pretending this will bootstrap as a purely grassroots system. It is reserving a large share for managed ecosystem formation and long-duration coordination, while using vesting cliffs and multi-year linear release schedules to slow immediate supply release. That supports stability, but it also means power and discretion remain fairly concentrated in the early phase.
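A cliff followed by linear release is simple to compute, and doing so shows why it slows early supply. Only the 10 billion total comes from the text; the 10% allocation, 12-month cliff, 36-month linear period, and the `unlocked` function are placeholders, not Fabric’s actual vesting terms.

```python
TOTAL_SUPPLY = 10_000_000_000  # fixed ROBO supply stated in the whitepaper


def unlocked(allocation: int, month: int, cliff_months: int, linear_months: int) -> int:
    """Tokens released from `allocation` by `month` (months since launch).

    Assumed convention: nothing unlocks up to the cliff; after it, tokens
    release linearly over `linear_months`, rounded down to whole units.
    """
    if linear_months <= 0 or cliff_months < 0:
        raise ValueError("linear_months must be positive and cliff_months non-negative")
    if month <= cliff_months:
        return 0
    if month >= cliff_months + linear_months:
        return allocation
    return allocation * (month - cliff_months) // linear_months


# Placeholder terms: a 10% allocation, 12-month cliff, 36 months linear.
allocation = TOTAL_SUPPLY // 10
for month in (6, 12, 24, 48):
    print(month, unlocked(allocation, month, cliff_months=12, linear_months=36))
```

Under these placeholder terms, nothing from the allocation circulates in the first year, about a third has unlocked by month 24, and the full amount only at month 48.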

The timeline is also concrete enough to judge. According to the December 2025 whitepaper, Fabric’s 2026 plan begins in Q1 with the first tools for robot identity, task payments, and structured data collection. In Q2, the focus shifts to rewarding verified work and expanding developer participation. Q3 is aimed at more complex tasks, repeated usage, and selected multi-robot workflows, while the longer-term plan points toward a machine-native Layer 1 informed by real-world usage. I find that sequence more credible than projects that start with chain sovereignty and only later ask what exactly is being coordinated. Here, at least on paper, the chain grows out of operational data rather than the other way around.

There is also a broader pattern here that I do not think should be missed. Fabric’s website describes public-good infrastructure around machine and human identity, decentralized task allocation, location-gated and human-gated payments, and machine-to-machine communication. Its blog adds a simple but telling premise: robots cannot open bank accounts or hold passports, so they need wallets and verifiable onchain identities to operate as economic actors. That is a strong systems view. It treats AI not merely as a program that responds to prompts but as a participant in transactions, delivery networks, service tasks, and accountability tracking.

At the same time, the risks are real and significant. Fabric itself acknowledges open questions around validator selection and governance before mainnet deployment, including whether the initial validator set will be permissioned, permissionless, or hybrid. The project is also explicit that $ROBO does not confer equity, debt, profit share, or ownership of robot hardware, and the Foundation notes that regulatory treatment can vary by jurisdiction. To me, that creates a strange but honest tension: the protocol wants to coordinate real machine economies, yet its core token is intentionally framed as functional access rather than a claim on cash flows or assets. That may reduce some legal exposure, but it also means users have to believe network utility will become meaningful enough to sustain the system.

My own view is that Fabric becomes interesting precisely when I stop reading it as an AI thesis and start reading it as an accountability thesis. If it works, the project could make a narrow but important contribution: turning some parts of machine behavior into something inspectable, challengeable, and payable in public. If it fails, it will probably fail for ordinary reasons: weak deployment traction, governance concentration, poor real-world verification, or a token layer that never becomes as necessary as the design assumes. Those are real risks. They are also the right risks for a project attempting to verify machine work instead of merely describing machine intelligence.
