There is a version of the autonomous future that nobody is advertising. In that version, a robot makes a consequential mistake (mishandles a medical delivery, misjudges a warehouse interaction, executes a task in a way that causes real harm) and nobody can explain how the decision was made, who authorized it, or how to prevent it from happening again. The machine processed inputs, produced outputs, and the chain of accountability dissolved somewhere between the algorithm and the action. This is not a hypothetical failure mode. It is the default outcome when autonomous systems scale without governance infrastructure.

The question of who watches the machines is not a philosophical one. It is an engineering problem.

Fabric Protocol is one of the few projects treating it as such. The Global Robot Observatory embedded in the Fabric Protocol architecture is not a monitoring dashboard built for appearances. It is a mechanism through which human participants anywhere in the world can observe robot behavior in real time, flag anomalies, submit critiques, and have that feedback incorporated into how robots learn and operate. The loop between human observation and machine behavior is closed on-chain, verifiable, and ongoing.

What interests me about this design is that it inverts the usual assumption about who benefits from robot oversight. Most safety frameworks are built by the companies deploying the robots, for the regulators those companies need to satisfy. The incentive is to make oversight look thorough rather than be thorough. Fabric Protocol's model distributes that oversight function across a global network of participants who are compensated in $ROBO for genuine, verified contributions — not for rubber-stamping behavior that was already approved.

This is what programmable accountability looks like in practice. Not a compliance checkbox. A living system where the quality of human feedback is measured, rewarded, and fed back into machine behavior continuously.

ROBO is at $0.0404 today, with 270 million tokens traded in the last twenty-four hours. The price range has been tight; the market is consolidating around a level that reflects genuine uncertainty about near-term catalysts rather than any structural weakness in the project. What the volume tells me is that participation remains active while the network moves through the operational phase that matters most: the period between infrastructure deployment and scaled adoption.

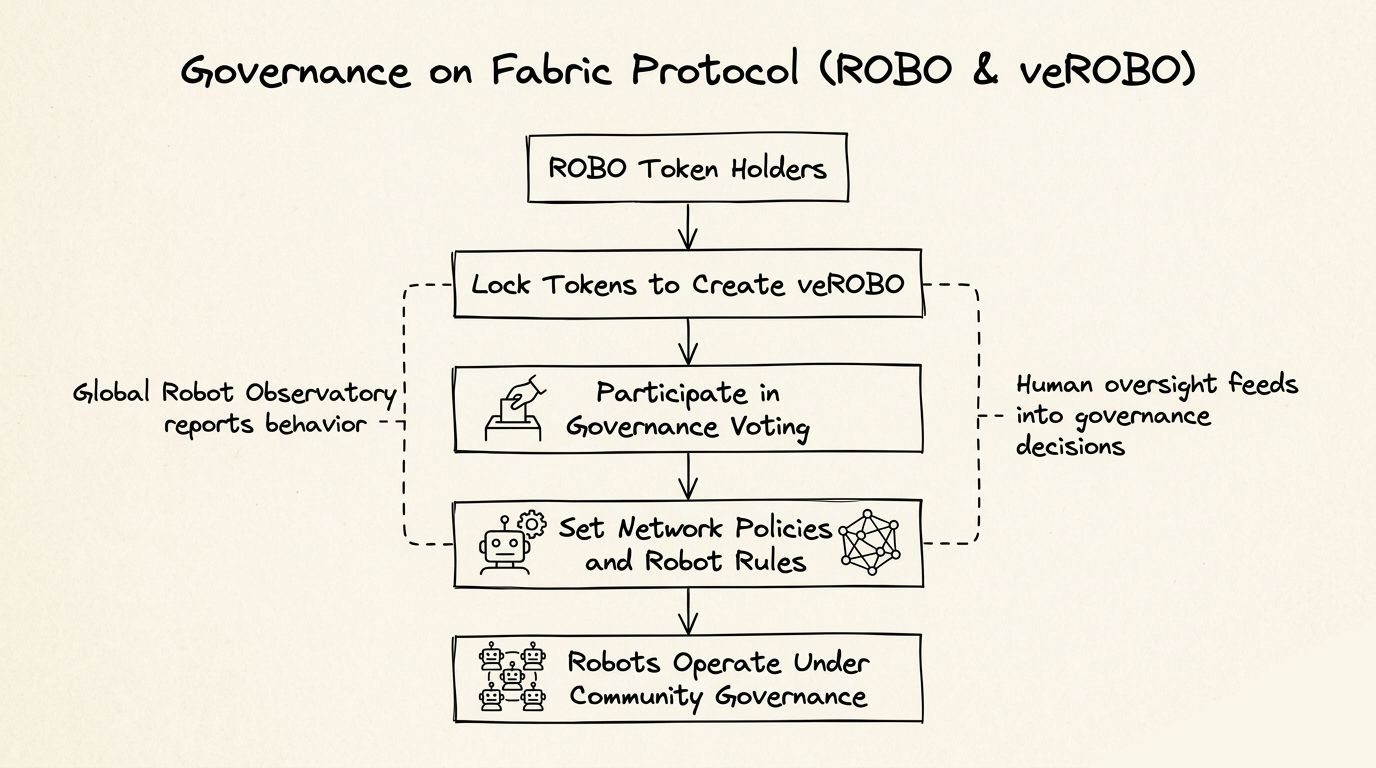

The governance layer of Fabric Protocol extends beyond safety observation. Token holders participate in setting network fees, operational policies, and the parameters that determine how robots are onboarded, verified, and allocated tasks. The veROBO mechanism — where ROBO is locked to gain weighted governance influence — is not novel as a design pattern, but its application here carries more consequence than in most DeFi contexts. Governance decisions in Fabric Protocol shape how physical machines operate in the real world. The stakes are categorically different from voting on a treasury allocation.
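
To make the lock-for-influence idea concrete, here is a minimal sketch of how a vote-escrow weight is commonly computed. It assumes the linear time-weighting popularized by Curve's veCRV, where influence scales with both the amount locked and the time remaining on the lock. I do not know that veROBO uses this exact formula; `Lock`, `voting_weight`, and the four-year `MAX_LOCK_DAYS` are hypothetical names and values chosen for illustration.

```python
from dataclasses import dataclass

# Hypothetical maximum lock duration; not a documented Fabric Protocol parameter.
MAX_LOCK_DAYS = 4 * 365


@dataclass(frozen=True)
class Lock:
    amount: float        # tokens locked
    days_remaining: int  # days until the lock expires


def voting_weight(lock: Lock, max_lock_days: int = MAX_LOCK_DAYS) -> float:
    """Linear vote-escrow weight (assumed model, not confirmed for veROBO).

    Weight = amount * (remaining lock time / maximum lock time).
    """
    if lock.amount < 0:
        raise ValueError("locked amount cannot be negative")
    # Clamp the remaining time into [0, max_lock_days].
    days = min(max(lock.days_remaining, 0), max_lock_days)
    return lock.amount * days / max_lock_days
```

Under these assumptions, a position locked for the full period carries twice the weight of the same amount locked for half of it, and the weight decays to zero as the lock expires.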

That weight is either a strength or a liability depending on how seriously the participant community takes it. Governance systems built on token-weighted voting have a documented tendency toward plutocracy — the largest holders set the terms, smaller participants disengage, and the decentralization the system was designed to provide gradually hollows out. Whether Fabric Protocol's design avoids that trajectory is something I am genuinely uncertain about. The architecture acknowledges the problem. Acknowledging it and solving it are different things.
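
One way to watch for that hollowing-out is to track how few holders it takes to command a majority of voting weight. The sketch below is an illustrative metric of my own choosing, not something the project is known to publish; the function name and any weights passed to it are hypothetical.

```python
def min_holders_for_majority(weights: list[float], threshold: float = 0.5) -> int:
    """Smallest number of holders whose combined weight exceeds `threshold` of the total.

    A low result means governance power is concentrated in few hands.
    """
    total = sum(weights)
    if total <= 0:
        raise ValueError("total voting weight must be positive")
    running = 0.0
    # Add holders from largest to smallest until the threshold is crossed.
    for count, weight in enumerate(sorted(weights, reverse=True), start=1):
        running += weight
        if running > threshold * total:
            return count
    return len(weights)
```

A result of 1 means a single holder can outvote everyone else combined. A number that shrinks over time would be an early sign that smaller participants are disengaging.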

The deeper question sitting underneath all of this is one the industry has not answered cleanly for any project at meaningful scale: can a decentralized network maintain the coordination discipline required to govern physical infrastructure? Software protocols can recover from governance failures through forks and upgrades. A robot fleet operating under compromised governance parameters causes problems in the physical world that do not roll back.

ROBO is live. The network is operational. Mainnet is approaching the transition point where these governance mechanisms stop being theoretical and start being tested by real conditions. The design choices Fabric Protocol has made — distributed human oversight, on-chain verification, token-weighted governance with structured incentives — reflect a team that has thought carefully about what happens when things go wrong, not just when they go right.

Whether that careful thinking translates into a governance model that holds under pressure from real operators, real developers, and real machines making real mistakes is the question I keep returning to. It is also the only question that ultimately matters.

#ROBO @Fabric Foundation $ROBO