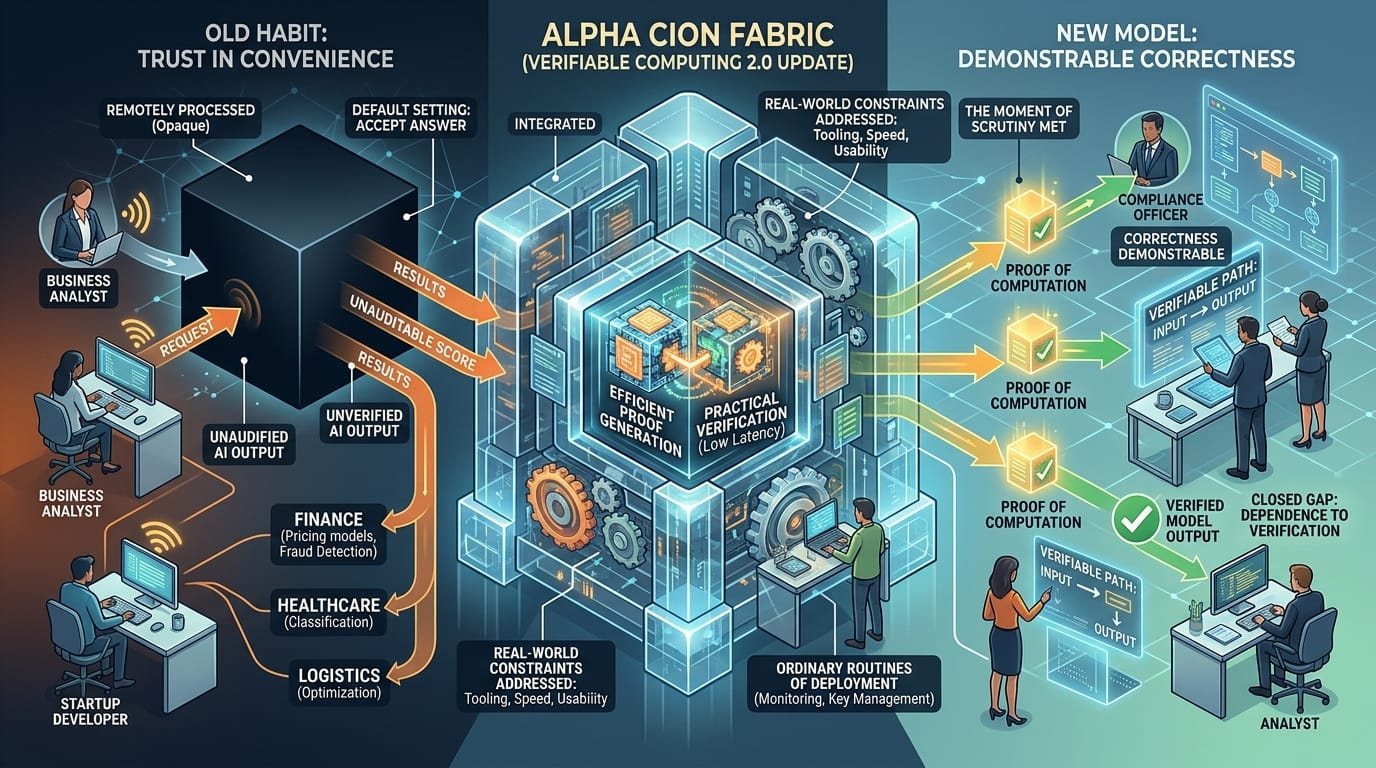

Most computing still runs on trust disguised as convenience. An image is generated. A machine learning model returns a score. A backend system settles a calculation you cannot inspect directly. The work happens somewhere else, inside infrastructure you do not control, and in most cases you accept the answer because there is no practical alternative. Verifiable computing begins where that habit starts to look inadequate.

What gives it renewed urgency now is the changing texture of digital systems. More of the world’s computation is happening remotely, opaquely, and at scale. AI inference is outsourced. Data pipelines stretch across vendors. Cloud services return outputs that may be expensive or impossible for the end user to reproduce independently. The more central these systems become, the less satisfying it is to treat trust as a default setting.

That is the backdrop for Alpha CION Fabric’s future update. Whatever branding sits around it, the underlying challenge is real. A verifiable system is not just one that produces a result. It is one that can produce evidence about how that result was obtained, in a form that another party can check without taking the entire workload back in-house. That changes the conversation from “trust me” to “verify this,” which sounds subtle until you think about where the friction lives. In finance, a model output can influence credit, pricing, or fraud detection. In healthcare, a remote system might process sensitive data and return a classification that affects treatment steps. In logistics, an optimization engine may assign routes, costs, or priorities across a network no single participant fully sees. In each case, the result matters. So does the ability to prove that the process was sound.

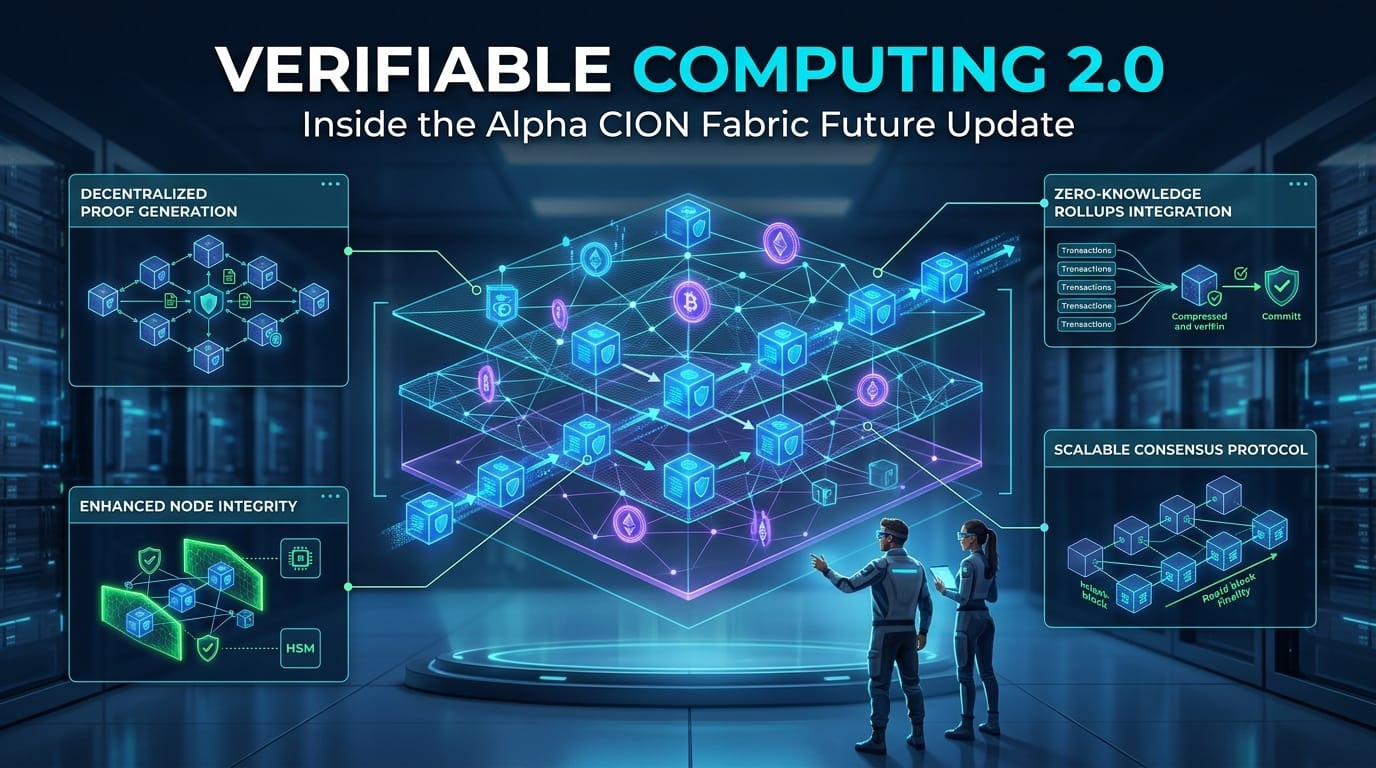

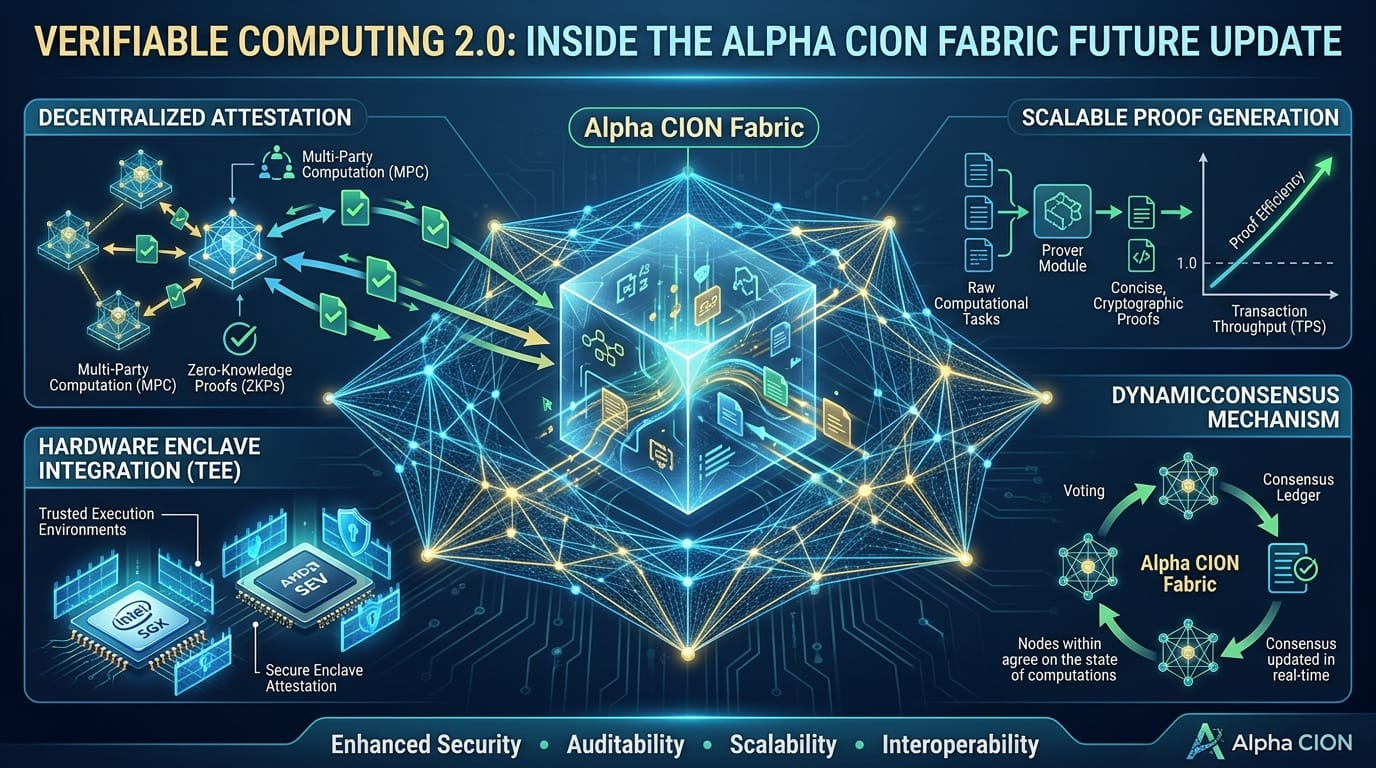

The promise of Verifiable Computing 2.0, if the phrase is to mean anything, is not that proof becomes magical. It is that proof becomes practical enough to use outside a narrow set of demonstrations. That is a much harder ambition. Verifiable computing has always had a speed problem, a tooling problem, and a usability problem. Proof generation can be computationally expensive. Verification may be cheaper than reproducing the original work, but it can still be heavy in contexts where low latency matters. Developers often face steep complexity just to integrate proof systems into ordinary software. And users, by and large, do not want to become amateur cryptographers just to trust a service they are already paying for.
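A long-standing illustration of that asymmetry is Freivalds' algorithm for checking a claimed matrix product C = A·B. It is offered here only as a sketch; nothing in this piece suggests Alpha CION Fabric uses it. Instead of redoing the O(n³) multiplication, the verifier multiplies by random 0/1 vectors, so each round costs O(n²). This is not a succinct proof system, and the function names are our own. It does show the basic shape, though: checking can be far cheaper than recomputing, and more rounds buy more confidence at proportionally more cost.

```python
import random
from typing import List, Optional

Matrix = List[List[int]]


def mat_vec(m: Matrix, v: List[int]) -> List[int]:
    """Multiply a square matrix by a vector in O(n^2) time."""
    return [sum(a * b for a, b in zip(row, v)) for row in m]


def freivalds_check(
    a: Matrix,
    b: Matrix,
    c: Matrix,
    rounds: int = 20,
    rng: Optional[random.Random] = None,
) -> bool:
    """Probabilistically check that a @ b == c without recomputing a @ b.

    If c is wrong, a single round accepts it with probability at most 1/2,
    so `rounds` independent rounds leave at most a 2**-rounds chance of
    accepting a wrong answer. A correct c always passes.
    """
    rng = rng or random.Random()
    n = len(c)
    for _ in range(rounds):
        r = [rng.randint(0, 1) for _ in range(n)]
        # Compare A(Br) with Cr: three matrix-vector products, O(n^2) total.
        if mat_vec(a, mat_vec(b, r)) != mat_vec(c, r):
            return False  # definite evidence that c is not a @ b
    return True  # c equals a @ b with high probability


if __name__ == "__main__":
    a = [[1, 2], [3, 4]]
    b = [[5, 6], [7, 8]]
    print(freivalds_check(a, b, [[19, 22], [43, 50]]))  # True: a correct product always passes
    print(freivalds_check(a, b, [[19, 22], [43, 51]]))  # False, except with probability at most 2**-20
```

The `rounds` parameter is a small instance of the tradeoff discussed below: each extra round tightens the guarantee and adds another O(n²) of work.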

So the value of Alpha CION Fabric’s update will depend on whether it narrows those gaps. Not in principle. In practice. Can proofs be generated with costs that make sense for live systems rather than lab exercises? Can verification be performed efficiently enough to fit into applications where time matters? Can developers work with it without rearranging their entire stack around specialized infrastructure?

You can see why this matters by looking at the current shape of trust online. A business analyst uploads data to a service and receives a score she cannot independently audit. A startup calls a remote AI model through an API and ships the output into customer workflows without any direct proof of what model version produced it or whether the environment was manipulated. A procurement team depends on software that claims to optimize decisions but cannot show a verifiable path from input to output. This is not necessarily fraud. Often it is just opacity. The systems work, until someone needs to know more than the interface is willing to tell them.

That demand for proof tends to arrive late. Not on launch day, when demos are smooth and confidence is high, but after an error, a dispute, an outage, or a compliance review. Then the missing record becomes obvious. Then someone wants to know exactly which computation ran, under what assumptions, on which data, and with what guarantees that the result was not altered. Verifiable computing is strongest when treated not as a futuristic add-on but as a response to that ordinary moment of scrutiny.
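The minimal sketch below shows what a record of "which computation ran, on which data" might look like. It binds a result to a computation label, a model version, and hashes of the input and output, then tags the whole record with an HMAC. Every field name here is hypothetical. The guarantee is also much weaker than verifiable computing: the tag shows only that a holder of the key issued the record and that nobody has edited it since. It says nothing about whether the computation itself was done correctly. Real deployments would more likely use public-key signatures, so that verifiers do not need the issuer's secret.

```python
import hashlib
import hmac
import json


def sha256_hex(data: bytes) -> str:
    return hashlib.sha256(data).hexdigest()


def _canonical(body: dict) -> bytes:
    # Sorted keys and fixed separators so issuer and verifier hash identical bytes.
    return json.dumps(body, sort_keys=True, separators=(",", ":")).encode()


def make_record(key: bytes, computation: str, model_version: str,
                input_data: bytes, output_data: bytes) -> dict:
    """Issue a tamper-evident record (hypothetical format) for one computation."""
    body = {
        "computation": computation,
        "model_version": model_version,
        "input_sha256": sha256_hex(input_data),
        "output_sha256": sha256_hex(output_data),
    }
    tag = hmac.new(key, _canonical(body), hashlib.sha256).hexdigest()
    return {**body, "tag": tag}


def verify_record(key: bytes, record: dict,
                  input_data: bytes, output_data: bytes) -> bool:
    """Check the tag, then check that the record matches the given input and output."""
    body = {k: v for k, v in record.items() if k != "tag"}
    expected = hmac.new(key, _canonical(body), hashlib.sha256).hexdigest()
    return (
        hmac.compare_digest(expected, record.get("tag", ""))  # constant-time comparison
        and body.get("input_sha256") == sha256_hex(input_data)
        and body.get("output_sha256") == sha256_hex(output_data)
    )


if __name__ == "__main__":
    key = b"demo-key"  # hypothetical shared secret, for illustration only
    rec = make_record(key, "credit-score", "model-v1", b"applicant data", b"score=0.82")
    print(verify_record(key, rec, b"applicant data", b"score=0.82"))  # True
    print(verify_record(key, rec, b"applicant data", b"score=0.95"))  # False: output altered
```

A record like this answers the late-arriving questions about provenance. Verifiable computing aims at something stronger: evidence that the computation was carried out correctly at all.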

The challenge, of course, is that digital systems are full of tradeoffs. Stronger guarantees often mean more overhead. More proof means more computation, more complexity, more decisions about what gets attested and how. If Alpha CION Fabric wants to move verifiable computing forward, it has to deal honestly with those constraints. A system that generates beautiful proofs but slows operations to a crawl will not last outside niche use cases. A framework that offers airtight correctness but requires developers to become specialists in unfamiliar cryptographic workflows will narrow its own audience. There is no escaping these tensions. The only serious approach is to work through them.

That is why the most interesting part of any future update in this field is rarely the headline feature. It is the engineering judgment underneath. What has been simplified? What has been pushed closer to the developer instead of buried in theory?

There is also a deeper cultural shift here. For a long time, the bargain has been that users accept remote results on trust in exchange for convenience. Verifiable computing pushes against that bargain. It suggests that correctness should be demonstrable, not merely asserted, especially when computation is becoming more consequential and less visible to the people affected by it. That does not mean every workflow needs a proof attached to it. It means the old assumption, that remote computation can remain a black box as long as it is useful enough, looks less stable than it once did.

Alpha CION Fabric’s future update sits inside that transition. Whether it succeeds will depend on whether it makes verifiability feel less like a specialist’s discipline and more like a workable layer in everyday systems. That is a demanding standard. But it is the right one. Computing does not become more trustworthy because we describe it better. It becomes more trustworthy when a result can withstand inspection after the convenience wears off. If this update gets closer to that condition, even by degrees, it will be doing something more important than adding features. It will be helping close the gap between computation we depend on and computation we can actually verify.