I keep thinking about how often people talk about robotic work as if it’s simple: a task is either done or it failed. That sounds tidy on paper, but in reality work rarely goes that way.

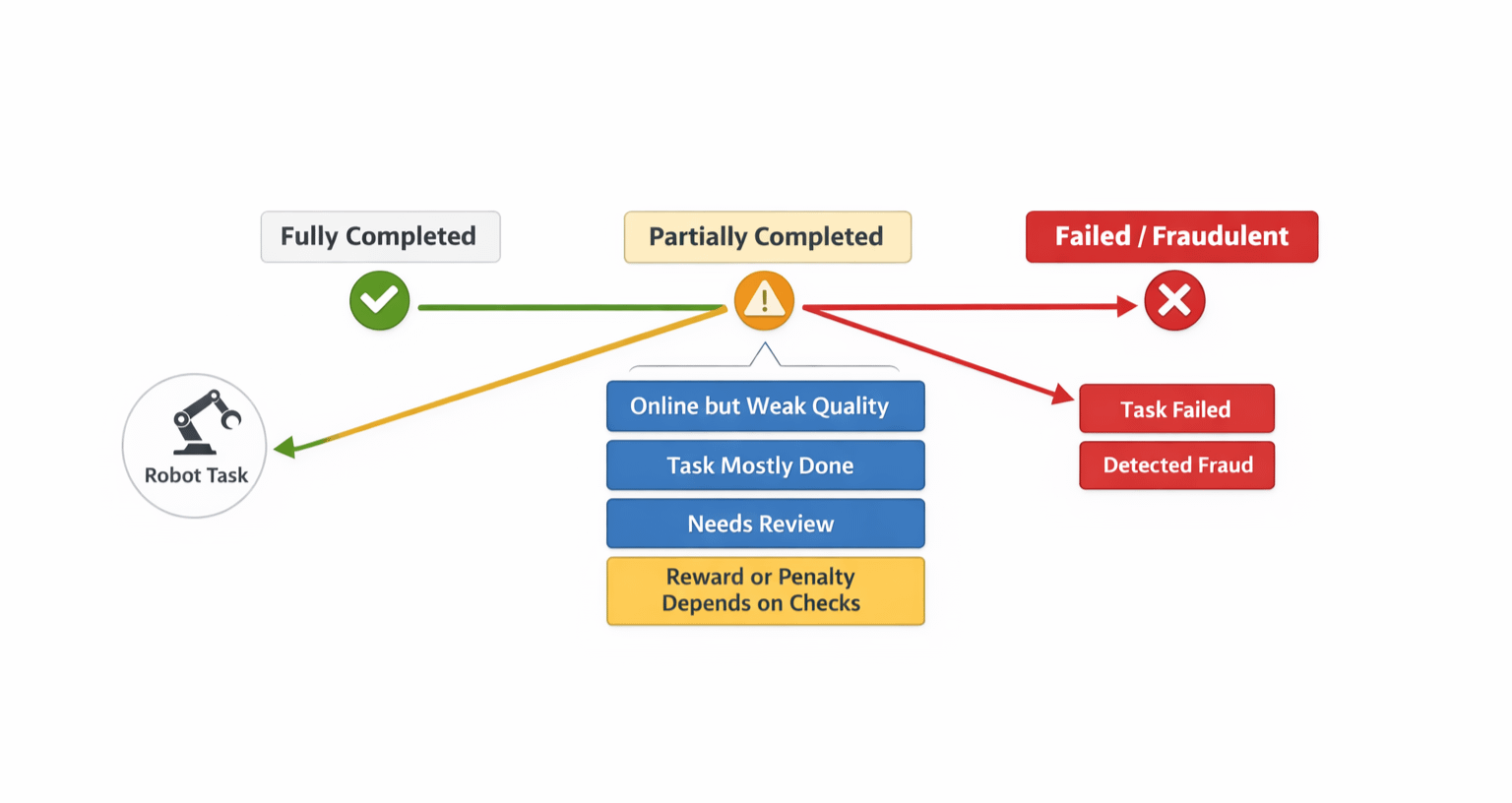

Sometimes a robot can do most of the job but not all of it. It gets to its destination but somehow misses the final handoff. It stays online, but the performance is weak. It logs the task as completed, but the actual result still leaves a problem behind. That middle area is messy, and honestly, it matters a lot more than people like to admit.

That’s why this part of Fabric Protocol caught my attention.

What I find interesting is that Fabric’s own whitepaper doesn’t try to oversimplify this. It doesn’t act like every robotic task can be verified in a perfect, clean way. In fact, it says full universal verification would be too expensive. So instead of forcing everything into a strict yes-or-no model, Fabric leans on a challenge-based system, validator checks, user feedback, and economic penalties.

To me, that feels more grounded in how robot work actually looks in the real world.

And once you look a bit closer, the design starts to make more sense.

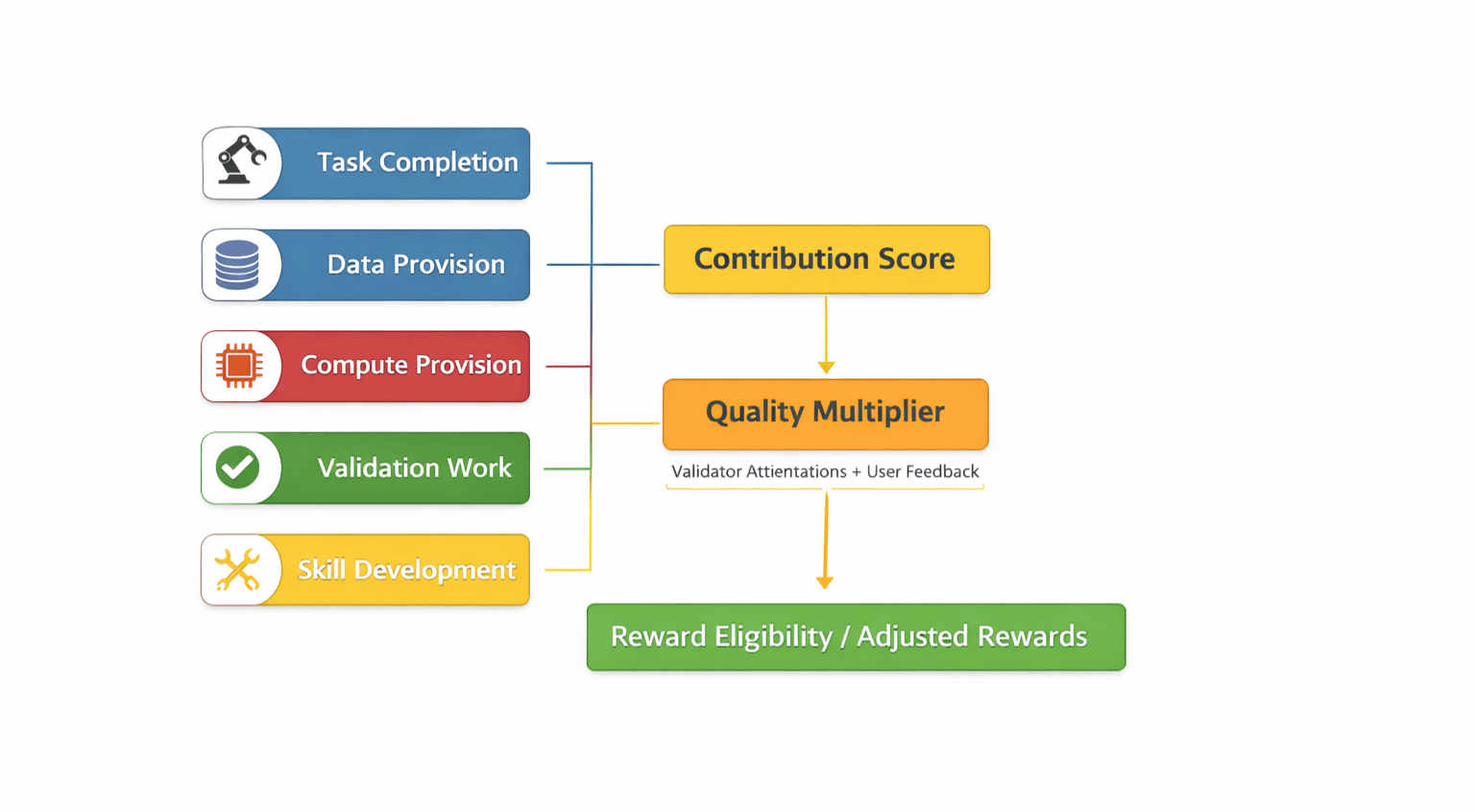

Fabric doesn’t reduce contribution to one generic score. It breaks it into different kinds of verified work: task completion, data provision, compute provision, validation work, and skill development. That already tells me the protocol is trying to look at robotic activity in a more detailed way.

Then there’s the quality layer. Rewards are not just based on whether something happened. Fabric also adds a quality multiplier, built from validator attestations and user feedback, with a target range around 0.85 to 0.95. So the system is not only asking whether work was performed. It is also asking whether that work was actually good enough to trust.

I think that’s the real point here.

Complete fraud is easy to understand. A robot lies, gets caught, and gets punished. Most people get that part right away. But partial execution is harder. It sits in that gray area where the task is not fully fake, but not really reliable either. A robot might look active while doing poor work. It might stay available but keep slipping in quality. It might do enough to avoid looking broken, while still making the network worse over time.

That kind of half-done work can slowly damage a system if the protocol has no way to notice it.

Fabric seems to be built with that in mind. If availability falls below 98 percent over a 30-day epoch, the robot loses emission rewards for that period and takes a 5 percent bond slash. If the quality score drops below 85 percent, it loses reward eligibility until the score recovers. Proven fraud gets punished harder, with 30 to 50 percent of the earmarked task stake slashed.
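To make those rules concrete for myself, I wrote a minimal Python sketch of how they might fit together. The thresholds are the ones quoted above. Everything else is my own assumption and not Fabric's actual implementation: the names (`EpochReport`, `Outcome`, `assess`), the choice to evaluate the rules independently, and the choice to report the fraud slash as a range rather than picking a number.

```python
from dataclasses import dataclass
from typing import Optional, Tuple

# Thresholds as quoted in this post. All names below are hypothetical.
AVAILABILITY_FLOOR = 0.98          # measured over a 30-day epoch
QUALITY_FLOOR = 0.85               # below this, no reward eligibility
AVAILABILITY_BOND_SLASH = 0.05     # fraction of the bond slashed
FRAUD_STAKE_SLASH_RANGE = (0.30, 0.50)  # fraction of earmarked task stake


@dataclass
class EpochReport:
    availability: float        # 0.0 to 1.0
    quality: float             # 0.0 to 1.0
    proven_fraud: bool = False


@dataclass
class Outcome:
    earns_emissions: bool
    bond_slash: float
    fraud_stake_slash_range: Optional[Tuple[float, float]]


def assess(report: EpochReport) -> Outcome:
    """Apply the three rules independently (an assumption of this sketch)."""
    earns_emissions = True
    bond_slash = 0.0
    fraud_range = None

    # Rule 1: low availability costs the epoch's emissions plus a bond slash.
    if report.availability < AVAILABILITY_FLOOR:
        earns_emissions = False
        bond_slash = AVAILABILITY_BOND_SLASH

    # Rule 2: low quality removes reward eligibility, with no slash.
    if report.quality < QUALITY_FLOOR:
        earns_emissions = False

    # Rule 3: proven fraud slashes the earmarked task stake. The exact
    # figure inside the 30-50% range is not modeled here.
    if report.proven_fraud:
        fraud_range = FRAUD_STAKE_SLASH_RANGE

    return Outcome(earns_emissions, bond_slash, fraud_range)
```

Calling `assess(EpochReport(availability=0.97, quality=0.90))` is the partial-execution case I care about: nothing was faked and the quality is fine, yet the robot still loses its emissions for the epoch and 5 percent of its bond.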

That matters because it shows Fabric is not only designed for obvious failure. It is also trying to deal with inconsistency, weak execution, and unreliable performance.

Robo fits into this in a practical way too. It is tied to work bonds, network settlement, delegation, and governance signaling. Operators have to post bonds to provide services, so the conversation around robotic work is connected to actual responsibility inside the network. That makes partial execution more than a technical detail. It becomes an economic issue inside the protocol itself.

I also don’t think this should be framed as if Fabric has everything solved already. The whitepaper leaves open questions. It suggests the early validator set may start as permissioned or hybrid, and it leaves room for community input on sub-economy design and incentive rules. Even the roadmap feels like a gradual buildout. Q1 2026 focuses on robot identity, task settlement, and structured data collection. Then later phases move toward verified task incentives and more complex multi-robot workflows.

So for me, that’s the honest takeaway.

Fabric gets more interesting when I stop thinking about robots in terms of success or failure, and start thinking about the messy reality in between. Partial execution is not some small edge case. It might be the main case. And if a protocol wants to coordinate robotic work seriously, that middle state can’t be ignored.