Artificial intelligence is rapidly becoming part of everyday digital systems. From financial analysis to automated research tools, AI models are increasingly used to process large amounts of information and generate useful insights. While these systems are powerful, one challenge still limits their full potential: trust in the information they produce.

AI models often generate answers that sound confident and logical, yet parts of the response may still contain incorrect or misleading details. This phenomenon is commonly referred to as AI hallucination. Even advanced models can occasionally produce information that appears credible but is not backed by any reliable source.

This is where Mira Network introduces a different perspective. Instead of assuming that AI responses are correct, the network treats each response as a collection of claims that should be verified before being trusted.

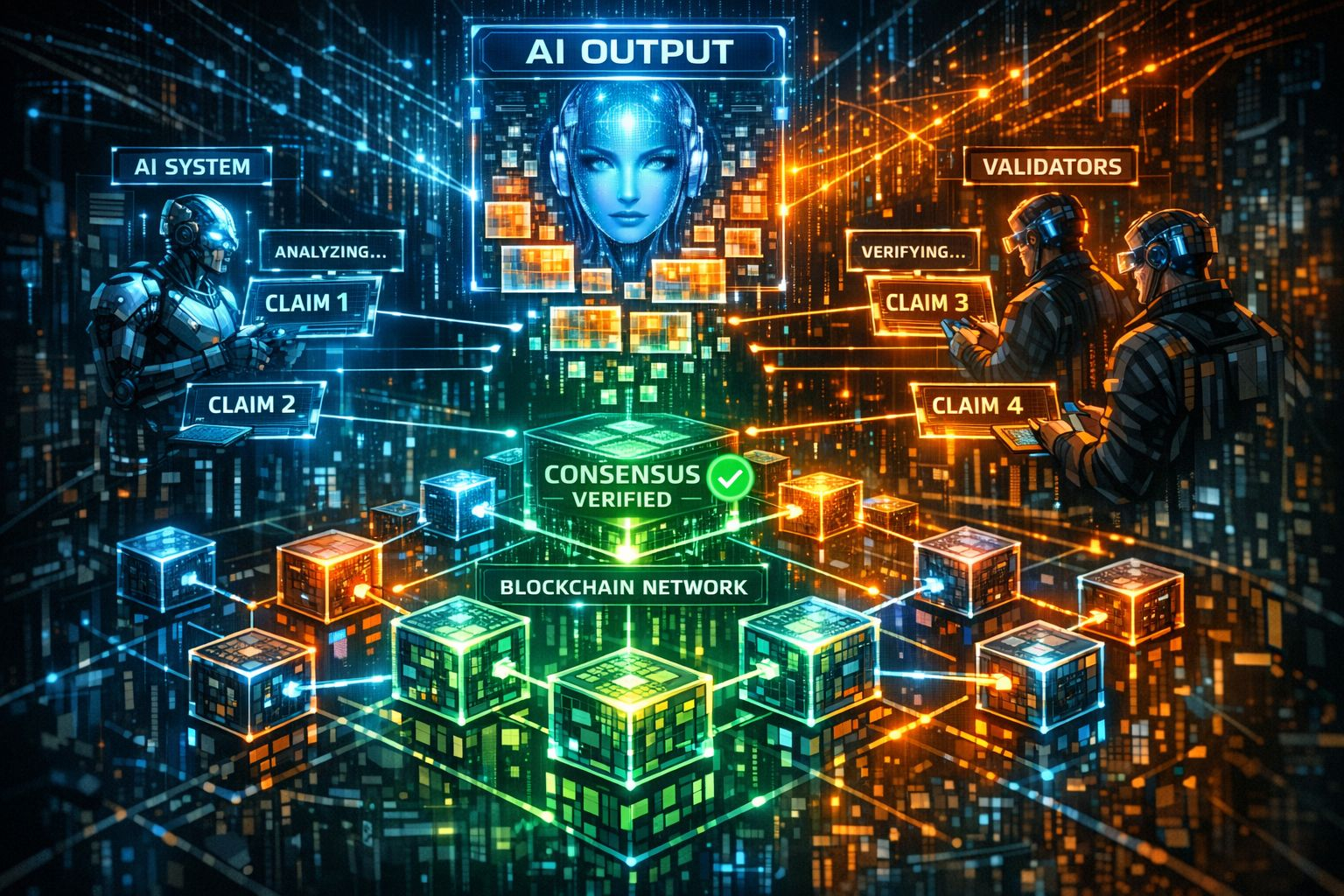

Within the system, complex AI outputs are divided into smaller statements. These individual claims are then evaluated by multiple AI systems and validators across a decentralized network. Each participant analyzes the information independently, helping the network reach a broader consensus regarding the accuracy of the response.

By relying on collective verification rather than a single model, this approach reduces the risk of biased or inaccurate outputs. If a majority of validators agree on a claim, it becomes part of the verified result. If there are disagreements or uncertainty among validators, the system can flag the information for further review.
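The majority rule described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not Mira Network's actual implementation: the names `ClaimResult`, `aggregate_votes`, and `verify_response`, the boolean vote format, and the 50% threshold are all hypothetical. The article also does not say how a claim that most validators reject is handled, so this sketch simply flags anything without a strict majority.

```python
from dataclasses import dataclass


@dataclass
class ClaimResult:
    claim: str
    approvals: int   # validators that judged the claim accurate
    total: int       # validators that voted on the claim
    status: str      # "verified" or "flagged"


def aggregate_votes(claim: str, votes: list[bool], threshold: float = 0.5) -> ClaimResult:
    """Combine independent validator votes (True = claim judged accurate).

    Assumption: a claim is verified only when the share of approving
    validators is strictly greater than `threshold`; anything else,
    including a tie or no votes at all, is flagged for further review.
    """
    approvals = sum(votes)
    total = len(votes)
    verified = total > 0 and approvals / total > threshold
    return ClaimResult(claim, approvals, total, "verified" if verified else "flagged")


def verify_response(votes_by_claim: dict[str, list[bool]]) -> list[ClaimResult]:
    """Aggregate votes for every claim extracted from one AI response."""
    return [aggregate_votes(claim, votes) for claim, votes in votes_by_claim.items()]
```

For example, `aggregate_votes("The Eiffel Tower is in Paris.", [True, True, False])` yields a status of `"verified"` because two of three validators agree, while an even split such as `[True, False]` is flagged.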

Another important element of the infrastructure is the integration of blockchain technology. Recording verification activity on-chain allows the process to remain transparent and traceable. Users and developers can better understand how specific conclusions were reached rather than treating AI systems as unexplained “black boxes.”
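To make the traceability idea concrete, here is a minimal sketch of a tamper-evident verification log. It uses a plain in-memory hash chain as a stand-in for a real blockchain, and it is not based on Mira Network's actual on-chain format: the record fields and the helper names `record_hash`, `append_record`, and `is_intact` are assumptions made for illustration.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first record


def record_hash(body: dict) -> str:
    """Hash a record body deterministically (keys sorted before encoding)."""
    payload = json.dumps(body, sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


def append_record(log: list[dict], claim: str, status: str) -> dict:
    """Append a verification outcome, linking it to the previous record's hash."""
    prev_hash = log[-1]["hash"] if log else GENESIS_HASH
    body = {"claim": claim, "status": status, "prev_hash": prev_hash}
    record = dict(body, hash=record_hash(body))
    log.append(record)
    return record


def is_intact(log: list[dict]) -> bool:
    """Recompute every hash and link; any edited record breaks the chain."""
    prev_hash = GENESIS_HASH
    for record in log:
        body = {key: record[key] for key in ("claim", "status", "prev_hash")}
        if record["prev_hash"] != prev_hash or record["hash"] != record_hash(body):
            return False
        prev_hash = record["hash"]
    return True
```

Because each record stores the hash of the one before it, changing an earlier verification outcome after the fact causes `is_intact` to return `False`. A real blockchain adds distributed consensus on top of this linking, which the sketch leaves out.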

Of course, building such a network also introduces several challenges. Maintaining fairness among validators, preventing manipulation, and designing effective incentive structures are all critical factors in ensuring that the verification system operates reliably.

Despite these challenges, the broader idea behind decentralized verification is significant. As AI continues to expand into areas such as research, finance, and automation, the demand for trustworthy outputs will likely grow.

Projects like Mira Network highlight an emerging direction in AI development: focusing not only on making AI systems smarter, but also on making their results more reliable and verifiable.