Imagine you’re using an AI doctor to diagnose a rare condition. It gives you a recommendation, but you have no way of knowing if the data it used was biased, if the model was tampered with by a hacker, or if it’s just hallucinating. In a world where AI-generated content is exploding, we are facing a "trust deficit."

This is where Verifiable AI Infrastructure steps in. It’s not just "AI on the blockchain"—it’s a fundamental shift toward an internet where every AI response comes with a cryptographic receipt.

The Architecture of Trust: How It Works

Traditional AI is a "black box." You send a prompt, and a server in a giant data center sends back an answer. You have to trust the company running that server. Verifiable AI replaces "Trust Us" with "Verify This."

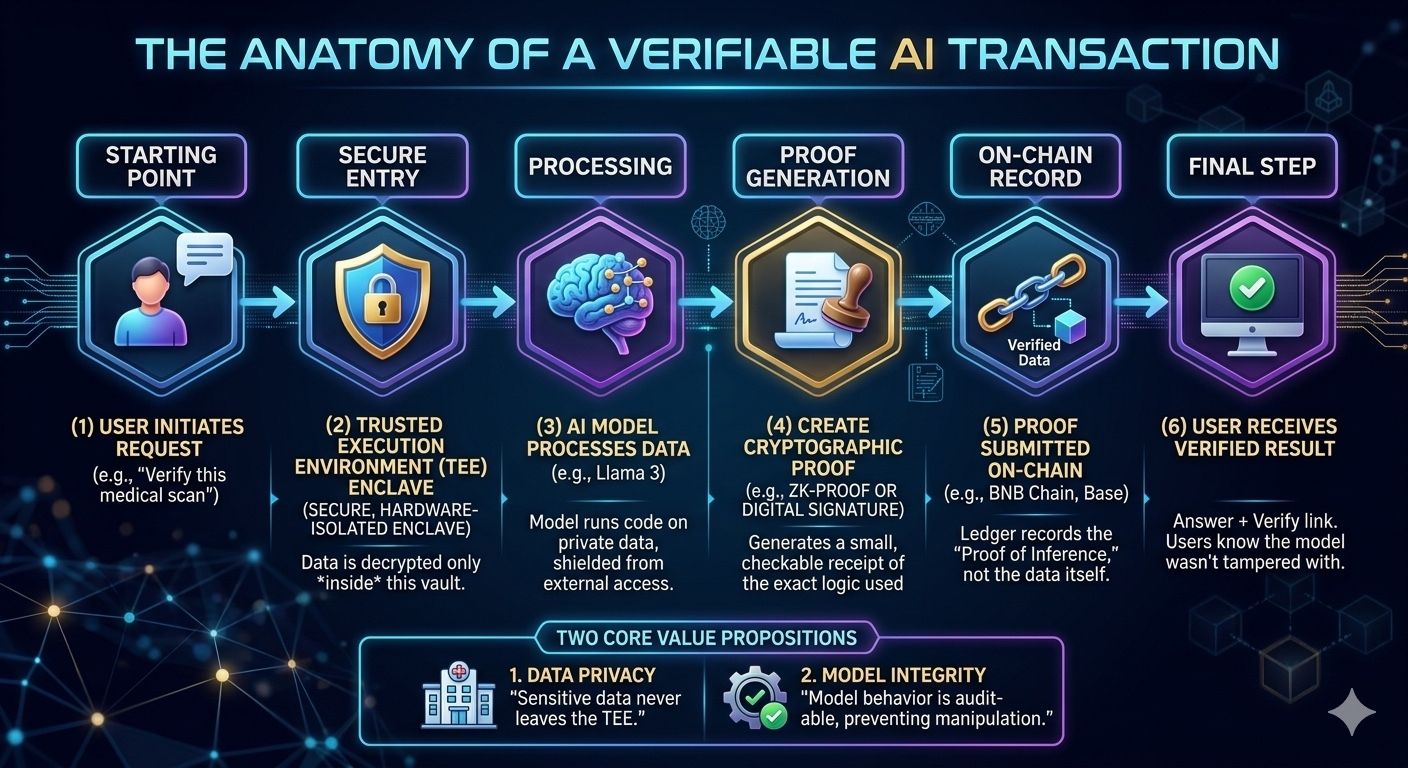

The Flow of a Verifiable AI Call:

1. Request: You send a prompt to a decentralized network.

2. Isolated Execution: The task is processed inside a Trusted Execution Environment (TEE), essentially a "secure vault" in the hardware (such as Intel TDX, or the confidential computing mode on NVIDIA H100 GPUs) that is designed so even the machine’s owner can’t peek inside.

3. Cryptographic Proof: Alongside the answer, the system produces evidence that the computation ran as claimed: either a Zero-Knowledge Proof (ZKP) or a digital signature from the secure hardware (an attestation).

4. Verification: The blockchain acts as a public ledger where that evidence is recorded and checked, so anyone can confirm: "Yes, this specific model processed this specific prompt, and nobody altered the result along the way."
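To make the "cryptographic receipt" idea concrete, here is a minimal Python sketch of steps 3 and 4. It is an illustration under loud assumptions, not how any particular network does it: the names `make_receipt`, `verify_receipt`, and `ENCLAVE_KEY` are hypothetical, and an HMAC with a shared key stands in for the hardware-backed signature or ZKP a real system would use.

```python
import hashlib
import hmac
import json

# Assumption: a real TEE would sign with a hardware-bound private key and the
# verifier would check a public-key signature. HMAC is only a stdlib stand-in.
ENCLAVE_KEY = b"demo-key-not-for-production"


def sha256_hex(data: bytes) -> str:
    """Return the SHA-256 digest of data as a hex string."""
    return hashlib.sha256(data).hexdigest()


def make_receipt(model_weights: bytes, prompt: str, output: str) -> dict:
    """Bind a model, a prompt, and an output together in one signed record."""
    claims = {
        "model_hash": sha256_hex(model_weights),
        "prompt_hash": sha256_hex(prompt.encode("utf-8")),
        "output_hash": sha256_hex(output.encode("utf-8")),
    }
    # Serialize with sorted keys so signer and verifier hash identical bytes.
    payload = json.dumps(claims, sort_keys=True).encode("utf-8")
    tag = hmac.new(ENCLAVE_KEY, payload, hashlib.sha256).hexdigest()
    return {"claims": claims, "tag": tag}


def verify_receipt(receipt: dict, expected_model_hash: str,
                   prompt: str, output: str) -> bool:
    """Check the tag, then check the claims match what the user actually saw."""
    claims = receipt["claims"]
    payload = json.dumps(claims, sort_keys=True).encode("utf-8")
    expected_tag = hmac.new(ENCLAVE_KEY, payload, hashlib.sha256).hexdigest()
    return (
        hmac.compare_digest(expected_tag, receipt["tag"])
        and claims["model_hash"] == expected_model_hash
        and claims["prompt_hash"] == sha256_hex(prompt.encode("utf-8"))
        and claims["output_hash"] == sha256_hex(output.encode("utf-8"))
    )
```

The point of the design is that the receipt commits to all three things at once. Swap the model, edit the prompt, or change a single character of the answer, and verification fails.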

Real-World Scenarios: From Agents to Healthcare

Verifiable AI isn't just a technical flex; it’s a necessity for the "Agentic Economy" of 2026.

• The Autonomous CFO: In 2026, AI agents are already managing corporate treasuries. If an agent executes a $50,000 swap on a DEX, the stakeholders need a verifiable audit trail proving the agent followed its programmed logic and wasn't "hijacked" by a malicious actor.

• Privacy-Preserving Medical Research: Imagine a network where hospitals jointly train a cancer-detection model on encrypted patient data. Through verifiable infrastructure, the model learns from the data without the raw records ever being "seen" by a human or leaving the hospital that holds them.

• Deepfake Defense: As deepfakes become indistinguishable from reality, verifiable AI allows creators to "sign" their content at the moment of generation. If a video doesn't have an on-chain "proof of origin," it’s treated as suspicious.
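The "verifiable audit trail" from the Autonomous CFO scenario can be sketched as a hash chain, the same basic trick a blockchain uses. This is a simplified illustration, not a production design: the function names and the shape of the action records are hypothetical, and a real deployment would also sign each entry and anchor the latest hash on-chain.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def entry_hash(prev_hash: str, action: dict) -> str:
    """Hash an action together with the hash of the entry before it."""
    body = json.dumps({"prev": prev_hash, "action": action}, sort_keys=True)
    return hashlib.sha256(body.encode("utf-8")).hexdigest()


def append_action(log: list, action: dict) -> None:
    """Append an agent action, linking it to the previous entry."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    log.append({
        "prev": prev_hash,
        "action": action,
        "hash": entry_hash(prev_hash, action),
    })


def verify_log(log: list) -> bool:
    """Recompute every link; any edited or reordered entry breaks the chain."""
    prev_hash = GENESIS
    for entry in log:
        if entry["prev"] != prev_hash:
            return False
        if entry["hash"] != entry_hash(prev_hash, entry["action"]):
            return False
        prev_hash = entry["hash"]
    return True
```

Because each entry commits to the one before it, quietly rewriting an old $50,000 swap means recomputing every later hash, which no longer matches whatever was published earlier.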

Why This Matters for Your Portfolio (The 2026 Shift)

We are moving away from the "GPP era" (General Purpose Platforms) toward specialized DeAI (Decentralized AI) stacks.

• Compute Powerhouses: Networks like Akash and 0G (Zero Gravity) are providing the raw, decentralized GPU power needed to run these models without the "Big Tech" tax.

• Incentive Layers: Projects like Bittensor are turning AI development into a global competition, where models are constantly ranked and rewarded based on their actual performance, not marketing hype.

• Verifiable Inference: This is the new frontier. It’s the difference between an AI that "claims" to be smart and one that "proves" it.
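To show what "rewarded based on actual performance" can mean in its simplest form, here is a toy Python sketch that splits a reward pool in proportion to each model's score. This is an assumption for illustration only and is not Bittensor's actual mechanism, which is considerably more involved. The function name and the non-negative scores are hypothetical.

```python
def split_rewards(scores: dict, pool: float) -> dict:
    """Split a reward pool in proportion to non-negative performance scores."""
    total = sum(scores.values())
    if total <= 0:
        # Nobody scored, so nobody is paid.
        return {name: 0.0 for name in scores}
    return {name: pool * score / total for name, score in scores.items()}
```

For example, with scores of 3.0 and 1.0 and a pool of 100.0, the first model would receive 75.0 and the second 25.0.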

The Future: Agents that "Own" and "Verify"

The real breakthrough happens when AI agents can hold their own keys and execute transactions. We are seeing the rise of ERC-8004, the proposed "Trustless Agents" standard, on chains like BNB Chain. These agents don't just talk; they act. And if their infrastructure is verifiable, we can finally give them the keys to the digital economy without losing sleep.

The transition from "AI as a tool" to "AI as a verifiable entity" is the biggest narrative of the year. It bridges the gap between the chaotic innovation of Web3 and the massive utility of Artificial Intelligence.

If you could delegate one financial task to a fully autonomous, verifiable AI agent today, what would it be? Let’s talk about the risks and rewards in the comments.

@Mira - Trust Layer of AI #Mira #mira $MIRA