Robots are often discussed as if they were finished products, but the more closely we examine them, the more they resemble ongoing negotiations with the world. Every action a robot takes results from a continuous debate between what it senses, what it predicts, what it is allowed to do, and what it can safely execute. In that context, Fabric Protocol presents itself as a practical way to keep those internal “arguments” from turning into confusion, especially when multiple autonomous systems must cooperate without blindly trusting one another.

Accountability That Can Be Verified

One of the most compelling aspects of Fabric Protocol is its emphasis on verifiable computing as the default language of accountability. This is not the kind of accountability that exists only as a promise on a website. Instead, it is accountability that persists across systems, teams, and environments.

When a robot claims it checked a safety boundary, followed a rule, or relied on a particular data input, there should be a way to verify that claim independently. No private logs. No appeals to authority. Just evidence that can be validated by others.
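To make "evidence that can be validated by others" concrete, here is a minimal sketch. It assumes a robot publishes a claim containing a SHA-256 digest of the inputs it used, and a verifier who later receives those inputs recomputes the digest. The function names, field names, and the example rule `safety.min_distance` are hypothetical illustrations, not Fabric Protocol's actual interface.

```python
import hashlib
import json


def digest(payload: dict) -> str:
    """Return a SHA-256 hex digest of a canonical JSON encoding of payload."""
    encoded = json.dumps(payload, sort_keys=True, separators=(",", ":")).encode("utf-8")
    return hashlib.sha256(encoded).hexdigest()


def make_claim(rule_id: str, inputs: dict, passed: bool) -> dict:
    """Hypothetical claim record: which rule was checked, on which inputs, with what result."""
    return {"rule_id": rule_id, "inputs_digest": digest(inputs), "passed": passed}


def verify_claim(claim: dict, disclosed_inputs: dict) -> bool:
    """Check that the disclosed inputs are the ones the claim committed to."""
    return claim["inputs_digest"] == digest(disclosed_inputs)


# Assumed example values, for illustration only.
inputs = {"distance_to_human_m": 1.8, "min_allowed_m": 1.5}
claim = make_claim("safety.min_distance", inputs, passed=True)

print(verify_claim(claim, inputs))    # True: inputs match the commitment
tampered = dict(inputs, distance_to_human_m=0.4)
print(verify_claim(claim, tampered))  # False: inputs were altered after the fact
```

A digest like this only shows that the disclosed inputs match what was committed to. It does not prove the check itself was executed correctly, which is the harder problem that verifiable computing aims to address.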

Support from @FabricFoundation suggests a broader effort to anchor robotic progress in shared standards of proof rather than in a race to release technology first and explain it later.

Why a Public Ledger Matters

Disagreements between systems are inevitable. One module may interpret a scene differently from another. A safety mechanism might flag a risk that a planning system overlooked. Regulators, developers, and collaborators all need a reliable way to determine which rules were applied and why.

This is where a public coordination layer becomes essential.

A public ledger allows different systems to validate decisions using shared evidence. Instead of saying “trust us,” developers can say “check this.” In a world of general-purpose robots—where tasks, environments, and toolchains constantly evolve—such a neutral infrastructure moves from optional feature to fundamental necessity.

Built for Autonomous Agents

Fabric’s “agent-native” philosophy carries an important implication: the system is designed around how autonomous machines actually operate.

Robots rarely execute a single program and stop. Instead, they run continuous loops involving data ingestion, computation, permission checks, and policy enforcement. For these loops to remain safe and reliable, they need a memory system that is:

Difficult to falsify

Easy to audit

Compatible with different developers and hardware systems

Fabric attempts to provide that memory layer.
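One common way to get a record that is difficult to falsify and easy to audit is a hash-chained, append-only log, where each entry commits to the one before it. The sketch below shows that general technique under simple assumptions. It is not a description of how Fabric actually stores data, and the names `append`, `audit`, and the record fields are hypothetical.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder hash that precedes the first entry


def entry_hash(prev_hash: str, record: dict) -> str:
    """Hash a record together with the hash of the previous entry."""
    body = json.dumps(record, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256((prev_hash + body).encode("utf-8")).hexdigest()


def append(log: list, record: dict) -> None:
    """Append a record, linking it to the current tail of the log."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    log.append({"record": record, "prev": prev_hash, "hash": entry_hash(prev_hash, record)})


def audit(log: list) -> bool:
    """Recompute every link; any edited or reordered entry breaks the chain."""
    prev_hash = GENESIS
    for entry in log:
        if entry["prev"] != prev_hash:
            return False
        if entry["hash"] != entry_hash(prev_hash, entry["record"]):
            return False
        prev_hash = entry["hash"]
    return True


log = []
append(log, {"module": "planner", "action": "move_arm"})
append(log, {"module": "safety", "action": "check_boundary", "passed": True})
print(audit(log))  # True

log[0]["record"]["action"] = "idle"  # simulate someone rewriting history
print(audit(log))  # False
```

Because the audit needs only the log itself and a standard hash function, any developer or hardware vendor can run it without special access.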

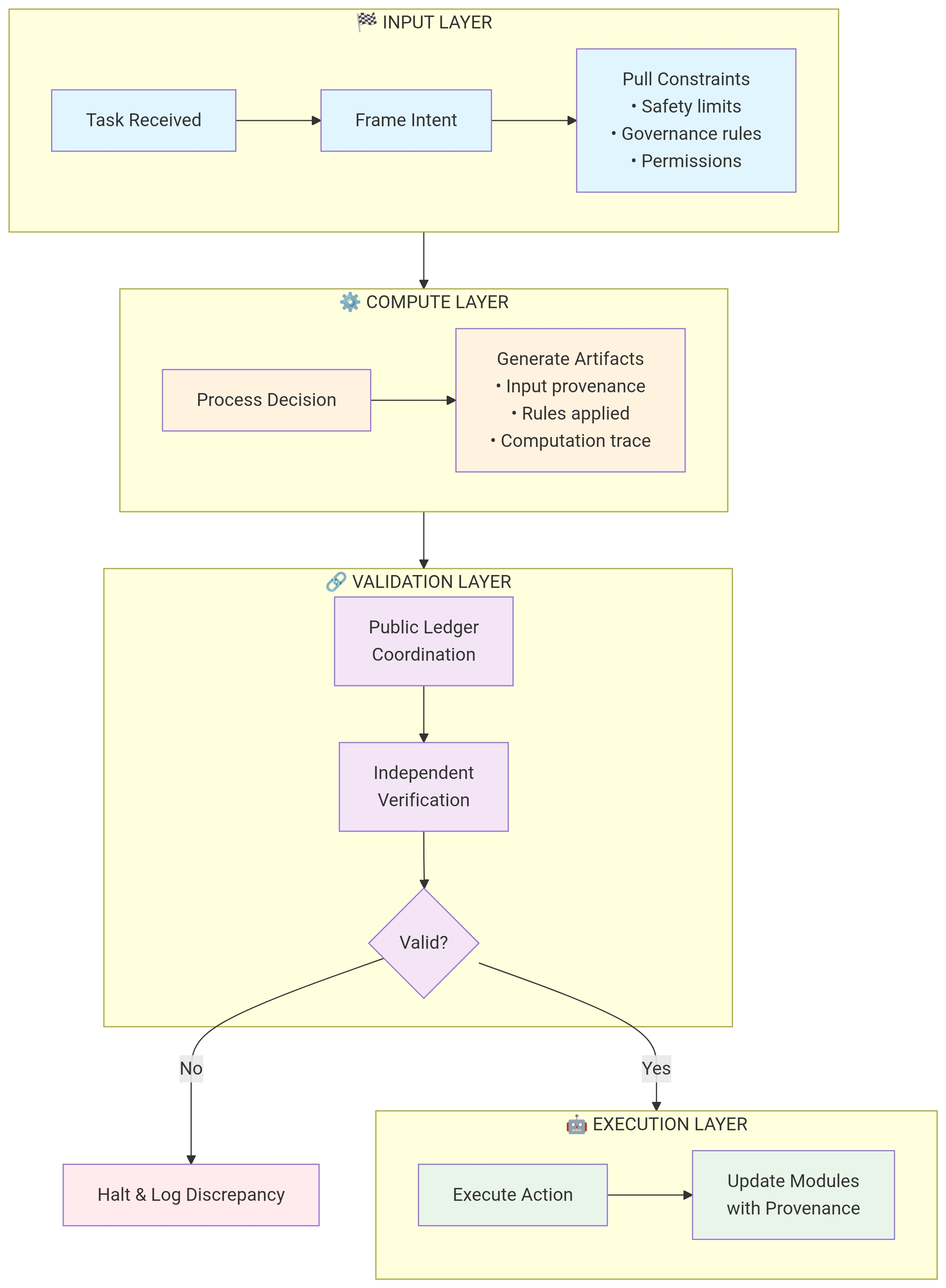

The Operational Flow

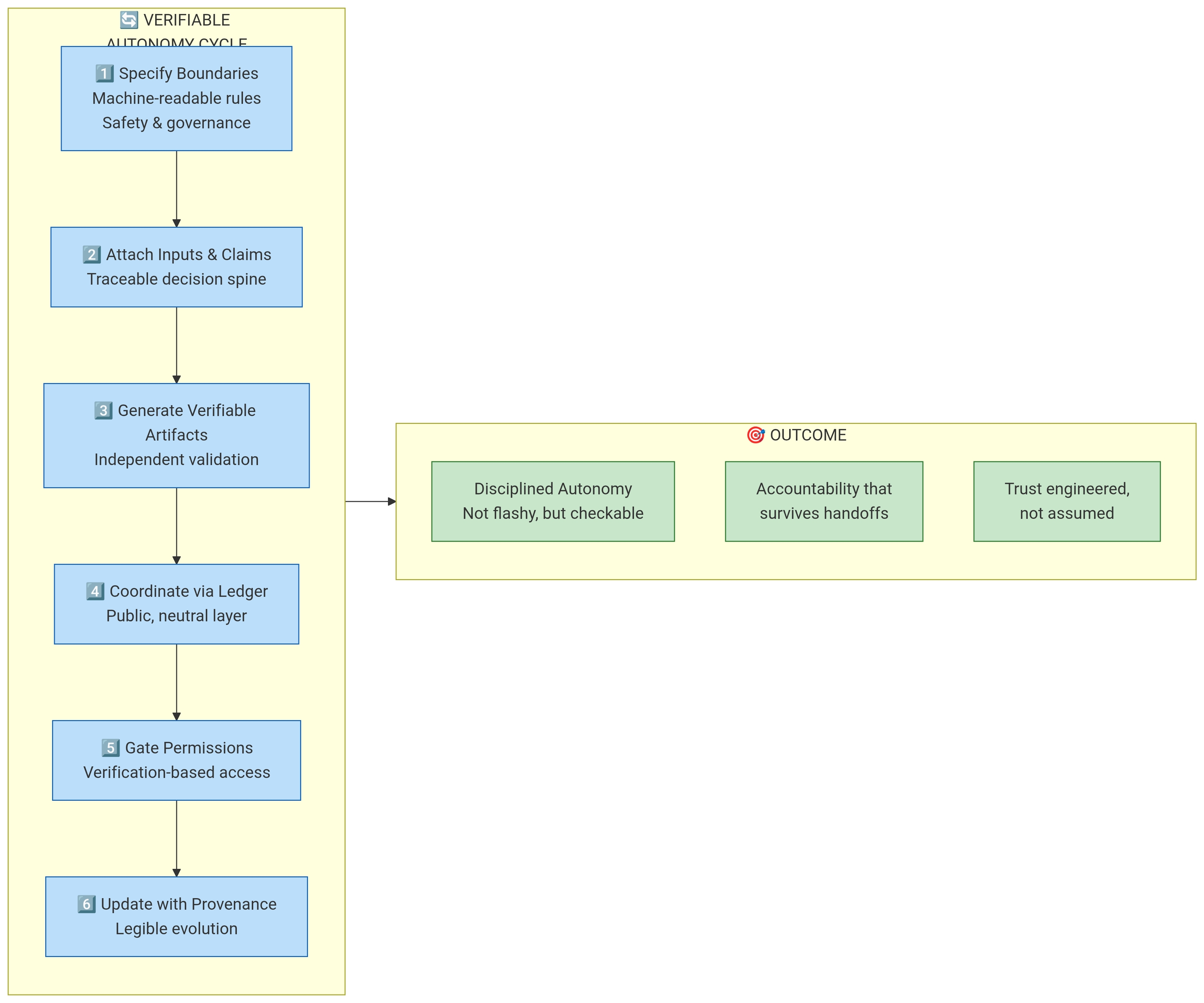

The real story is not the concept itself but the process it enables. A robot’s decision cycle could follow a verifiable sequence like this:

Define boundaries using machine-readable safety and governance rules.

Attach data and computation claims to every decision.

Generate verifiable artifacts proving what inputs and checks were used.

Coordinate validation through a shared ledger accessible to other systems.

Gate permissions based on verified behavior, expanding capabilities only when trust is earned.

Update modules with provenance, ensuring every improvement remains transparent and traceable.

This flow transforms robot decision-making from an opaque process into something measurable and auditable.
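The first five steps of that sequence can be sketched in a few lines. This is a toy model under stated assumptions: the rule `max_speed_mps`, the `Agent` class, the permission names, and the threshold of three verified decisions are all invented for illustration. The shared ledger is reduced to a direct call to `validate`, and the module-update step is omitted.

```python
import hashlib
import json
from dataclasses import dataclass, field

# Step 1: a hypothetical machine-readable rule (a speed limit).
RULES = {"max_speed_mps": 1.0}


def fingerprint(claim: dict) -> str:
    """Hash a claim so that later changes to it can be detected."""
    return hashlib.sha256(json.dumps(claim, sort_keys=True).encode("utf-8")).hexdigest()


@dataclass
class Agent:
    name: str
    verified_decisions: int = 0
    permissions: set = field(default_factory=lambda: {"move_slow"})


def decide(agent: Agent, reading: float):
    """Steps 2-3: attach a claim to the decision and emit an artifact for it."""
    limit = RULES["max_speed_mps"]
    claim = {"agent": agent.name, "rule": "max_speed_mps",
             "limit": limit, "reading": reading, "passed": reading <= limit}
    return claim, fingerprint(claim)


def validate(claim: dict, artifact: str) -> bool:
    """Step 4: a second party recomputes the artifact and re-applies the rule."""
    consistent = claim["passed"] == (claim["reading"] <= claim["limit"])
    return fingerprint(claim) == artifact and consistent


def gate(agent: Agent, ok: bool, threshold: int = 3) -> None:
    """Step 5: widen permissions after enough verified passes, tighten on any failure."""
    agent.verified_decisions = agent.verified_decisions + 1 if ok else 0
    if agent.verified_decisions >= threshold:
        agent.permissions.add("move_fast")
    else:
        agent.permissions.discard("move_fast")


robot = Agent("arm-01")
for speed in [0.4, 0.7, 0.9]:
    claim, artifact = decide(robot, speed)
    gate(robot, validate(claim, artifact) and claim["passed"])

print(sorted(robot.permissions))  # ['move_fast', 'move_slow']
```

Resetting the counter on any failed or unverifiable decision is one possible policy. A real deployment would likely need something more graduated.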

Disciplined Autonomy

Fabric Protocol ultimately promotes disciplined autonomy rather than flashy autonomy.

It does not assume that AI models will be perfect. Instead, it assumes mistakes will happen—and focuses on making those mistakes measurable, attributable, and correctable. Systems can tighten constraints and evolve without hiding failures or halting progress.

Where $ROBO Fits In

The role of $ROBO becomes clearer in this ecosystem. Rather than simply acting as another token, it can serve as a coordination signal that rewards the foundational work behind trustworthy robotics:

Building verification infrastructure

Funding open tooling

Incentivizing transparent collaboration

If the future involves a global, open network of robots that build, govern, and evolve together, then incentives must encourage proof over promises.

At its best, #Robo represents the idea that cooperation can scale because trust is engineered, verified, and shared rather than assumed.