If a chain begins to store “meaning,” who is responsible when meaning changes?

When I look at most Layer 1s, even the ambitious ones, they still feel like the same old deal: keep a ledger, execute deterministic logic, replicate state, and let the world above them fight about interpretation. The base layer is supposed to be boring on purpose. Not because boring is trendy—but because determinism is the one kind of truth blockchains can actually defend in court, in audits, and in ugly real-world disputes.

Vanar tries to push against that boundary. It doesn’t merely say “we can host apps.” It hints at something stronger: that data can be compressed, logic stored, and truth verified on the chain itself, and that it’s built “for AI workloads,” including ideas like vector storage and similarity search baked into the infrastructure. On paper, this is a power move: the chain is no longer just rails. It becomes a place where AI-flavored operations can live closer to consensus.

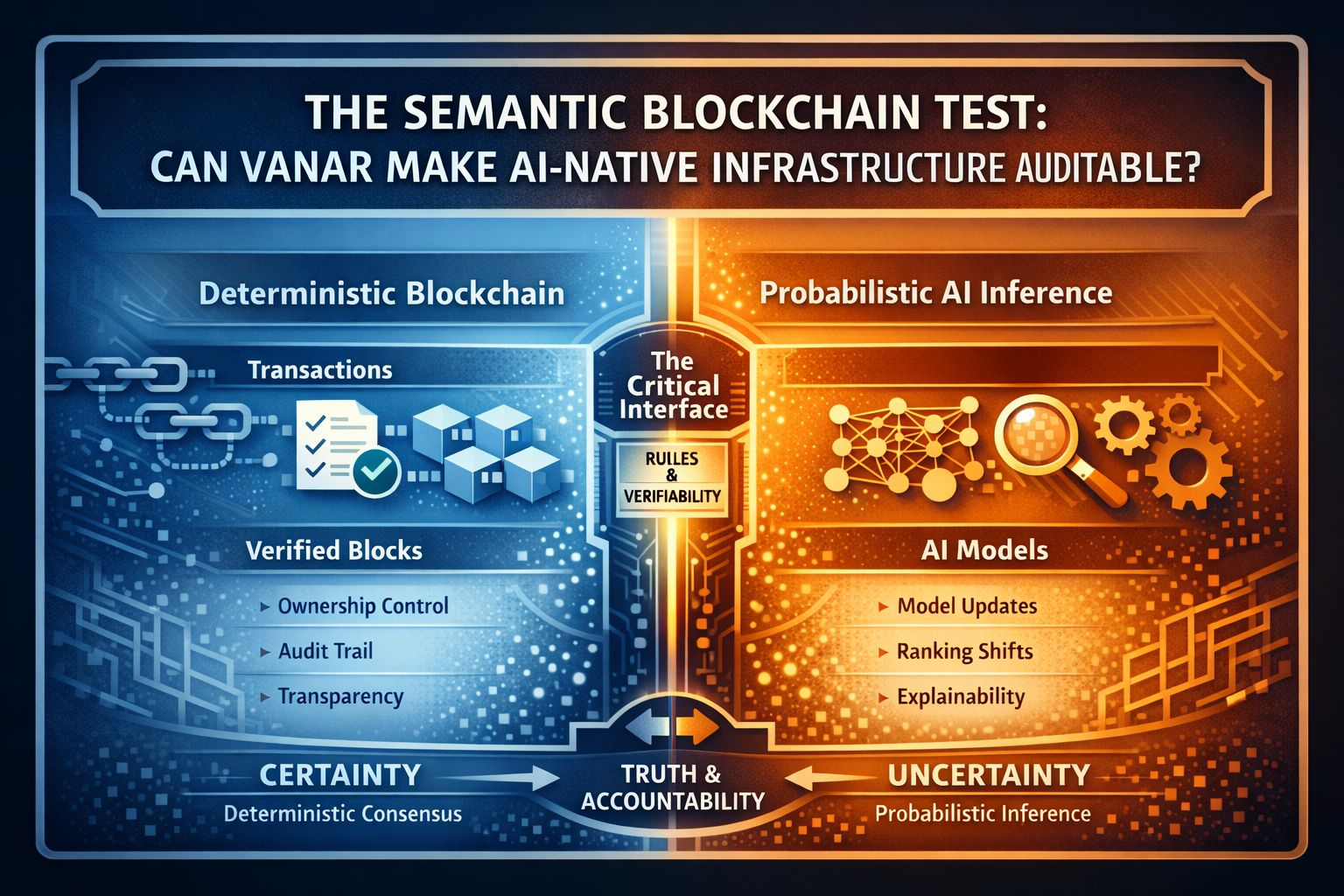

But the moment you invite “AI semantics” into the core story, you inherit a new kind of problem: blockchains are designed to be reproducible; AI systems are designed to be adaptive. Those two instincts don’t naturally coexist.

A deterministic contract gives the same output for the same input and the same prior state. That’s the whole point. AI systems, especially those involving embeddings, ranking, similarity, and “best match,” operate in a world where outputs can shift even when inputs appear unchanged, because the representation changes. The model updates, the embedding space drifts, the corpus expands, the thresholds get tuned. Even if Vanar’s goal is to make Web3 “intelligent by default,” intelligence is not a fixed property. It’s a moving target.
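To make the drift concrete, here is a minimal sketch in Python. Nothing in it comes from Vanar: the two embedding tables are invented numbers standing in for "the same query and the same two documents, embedded by two versions of a model." The query text and the corpus never change, yet the "best match" flips.

```python
import math

def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

def best_match(embeddings):
    """Return the document id whose vector is closest to the query vector."""
    query = embeddings["query"]
    docs = {k: v for k, v in embeddings.items() if k != "query"}
    return max(docs, key=lambda doc_id: cosine(query, docs[doc_id]))

# Hypothetical vectors: same query text and same documents,
# embedded by two different (made-up) model versions.
EMBEDDINGS_V1 = {"query": [1.0, 0.2], "doc_a": [0.9, 0.1], "doc_b": [0.2, 0.9]}
EMBEDDINGS_V2 = {"query": [0.4, 0.9], "doc_a": [0.8, 0.3], "doc_b": [0.3, 0.8]}

winner_v1 = best_match(EMBEDDINGS_V1)
winner_v2 = best_match(EMBEDDINGS_V2)
print(winner_v1)  # doc_a
print(winner_v2)  # doc_b
```

Each run is perfectly deterministic on its own. What moved is the representation, which is exactly the part a ledger does not usually record.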

So the real question becomes: what does “auditability” mean in a semantic chain?

If Vanar’s infrastructure includes semantic operations (vector search, similarity), then “correctness” can’t always be proven the way we prove correctness in math. You can prove a signature is valid. You can prove a hash matches. You can verify a Merkle proof. But how do you prove that a similarity result is the “right” one, especially if the embedding model or indexing method evolves?
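For contrast, this is what the provable side looks like. The sketch below verifies a Merkle inclusion proof over a hand-built four-leaf tree. It assumes a simplified scheme (plain SHA-256, no domain separation, siblings tagged left or right) and is not the encoding of Vanar or any specific chain; the function and variable names are mine.

```python
import hashlib

def sha256(data: bytes) -> bytes:
    return hashlib.sha256(data).digest()

def verify_merkle_proof(leaf: bytes, proof, root: bytes) -> bool:
    """Check that `leaf` is included under `root`.

    `proof` is a list of (sibling_hash, sibling_is_left) pairs,
    ordered from the leaf level up to the root.
    """
    node = sha256(leaf)
    for sibling, sibling_is_left in proof:
        node = sha256(sibling + node) if sibling_is_left else sha256(node + sibling)
    return node == root

# Build a tiny four-leaf tree by hand.
leaves = [b"tx0", b"tx1", b"tx2", b"tx3"]
h = [sha256(x) for x in leaves]
h01 = sha256(h[0] + h[1])
h23 = sha256(h[2] + h[3])
root = sha256(h01 + h23)

# Proof for tx2: its sibling h[3] sits on the right, then h01 on the left.
proof_tx2 = [(h[3], False), (h01, True)]

valid = verify_merkle_proof(b"tx2", proof_tx2, root)
forged = verify_merkle_proof(b"txX", proof_tx2, root)
print(valid, forged)
```

Anyone, anywhere, gets the same yes or no from this check. There is no equivalent function that takes a ranked list and returns "yes, this was the right ranking."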

In normal AI systems, this is handled socially and operationally: versioning, offline evaluation, rollback plans, monitoring, and human accountability. In blockchains, we tend to pretend we can replace human accountability with protocol guarantees. Vanar’s bet seems to be that you can bring more of the AI stack into the chain itself. But the moment you do that, you also bring the most inconvenient parts of AI into the “shared truth” layer: model governance, dataset governance, evaluation standards, and liability when the system’s output harms someone.
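One way to picture what "versioning" would have to mean in an auditable setting is a record that pins everything a semantic result depended on, then commits to it with a hash. The sketch below is purely illustrative: `SemanticQueryRecord` and its fields are hypothetical names of my own, not anything Vanar documents.

```python
import hashlib
import json
from dataclasses import dataclass, asdict

@dataclass(frozen=True)
class SemanticQueryRecord:
    """Hypothetical audit record for one similarity query."""
    model_version: str        # which embedding model produced the vectors
    index_snapshot_hash: str  # commitment to the corpus/index state
    query_hash: str           # hash of the raw query input
    threshold: float          # tuned cutoff in force at query time
    result_ids: tuple         # ordered results that were returned

    def commitment(self) -> str:
        # Canonical JSON (sorted keys, fixed separators) so the same
        # record always hashes to the same value.
        payload = json.dumps(asdict(self), sort_keys=True, separators=(",", ":"))
        return hashlib.sha256(payload.encode("utf-8")).hexdigest()

before = SemanticQueryRecord("embed-v1", "idx-aaa", "q-123", 0.8, ("doc_a", "doc_b"))
after = SemanticQueryRecord("embed-v2", "idx-aaa", "q-123", 0.8, ("doc_b", "doc_a"))

print(before.commitment() == before.commitment())  # same record, same commitment
print(before.commitment() == after.commitment())   # model changed, commitment changed
```

A record like this does not prove the ranking was right. It only makes it possible to say which model, index, and threshold produced it, which is the minimum a dispute process would need.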

This is where I think Vanar’s most interesting risk sits—not in throughput wars, not in “EVM compatibility,” not even in the token design. It’s in decision rights.

Vanar is EVM-compatible, which means it wants to feel familiar to existing Ethereum developers and tooling. That familiarity is useful. But EVM familiarity doesn’t solve the semantic governance problem; it just makes it easier to deploy the old patterns on top of a new promise. And the new promise is: “the chain can help intelligent applications learn, adapt, and improve.”

Here’s the uncomfortable part: if an AI-native chain is real, then upgrades aren’t just “bug fixes.” Upgrades become epistemic changes—changes to how the system interprets the world.

Vanar’s own documentation says that $VANRY is used for gas and for staking via a delegated proof-of-stake mechanism. That suggests a standard governance/security surface: validators, staking incentives, and protocol upgrades. But if the chain’s differentiator includes AI-specific primitives, then validator governance is no longer only about block production and consensus parameters. It can quietly become governance over what counts as a “good match,” a “relevant result,” or a “verified truth.”

And that’s a very different kind of power.

In a normal chain, if someone complains, you can often point to deterministic rules: “the contract did what it did.” In a semantic chain, the dispute may sound like: “the system ranked me unfairly,” “the agent selected the wrong result,” “the chain’s similarity search buried my content,” “the verification logic flagged me incorrectly,” or “the model update changed outcomes overnight.” Those are disputes about interpretation and bias, not just computation.

So if Vanar succeeds at making semantic operations cheap and native, it will eventually face a question that most blockchains avoid: will “fairness” be a protocol matter or an application matter? Because once the base layer provides meaning-tools, the base layer can no longer pretend it’s neutral about meaning.

This is also where “enterprise” or regulated adoption becomes tricky. Many regulated systems don’t fear transparency alone; they fear unexplainable decisions. If an onchain system participates in decisions (ranking, matching, verification signals), then explainability becomes part of infrastructure, not just UI. Vanar’s messaging about “verifying truth” inside the chain is bold—because in the real world, “truth” requires a threat model, a definition, and a dispute process.

To be clear: none of this is automatically bad. It might be exactly what the market needs if the goal is to support AI agents and intelligent apps without rebuilding the same off-chain pipelines over and over again. There is a real “assembly tax” in Web3: projects stitching together storage, compute, indexing, identity, and distribution. Reducing that tax can matter. But the cost you pay is that the chain’s responsibility expands from “state integrity” to “decision integrity.”

And decision integrity is a harder promise to keep.

If I had to summarize the unique test Vanar faces (without selling it, without dismissing it), it’s this: Can a blockchain host semantic primitives while staying credible as an auditable system?

Because the moment outcomes depend on “similarity,” “relevance,” or “learning,” you are no longer only competing with other chains. You are competing with the operational maturity of modern AI platforms—versioning discipline, evaluation rigor, rollback culture, incident response, and the humility to admit when the system’s outputs are not “truth,” just an answer produced under assumptions.

If Vanar gets that layer right—if it treats semantic power as something to govern carefully, not just something to advertise—then the project isn’t just another L1 story. It becomes a story about whether decentralization can handle meaning without turning meaning into politics by default.