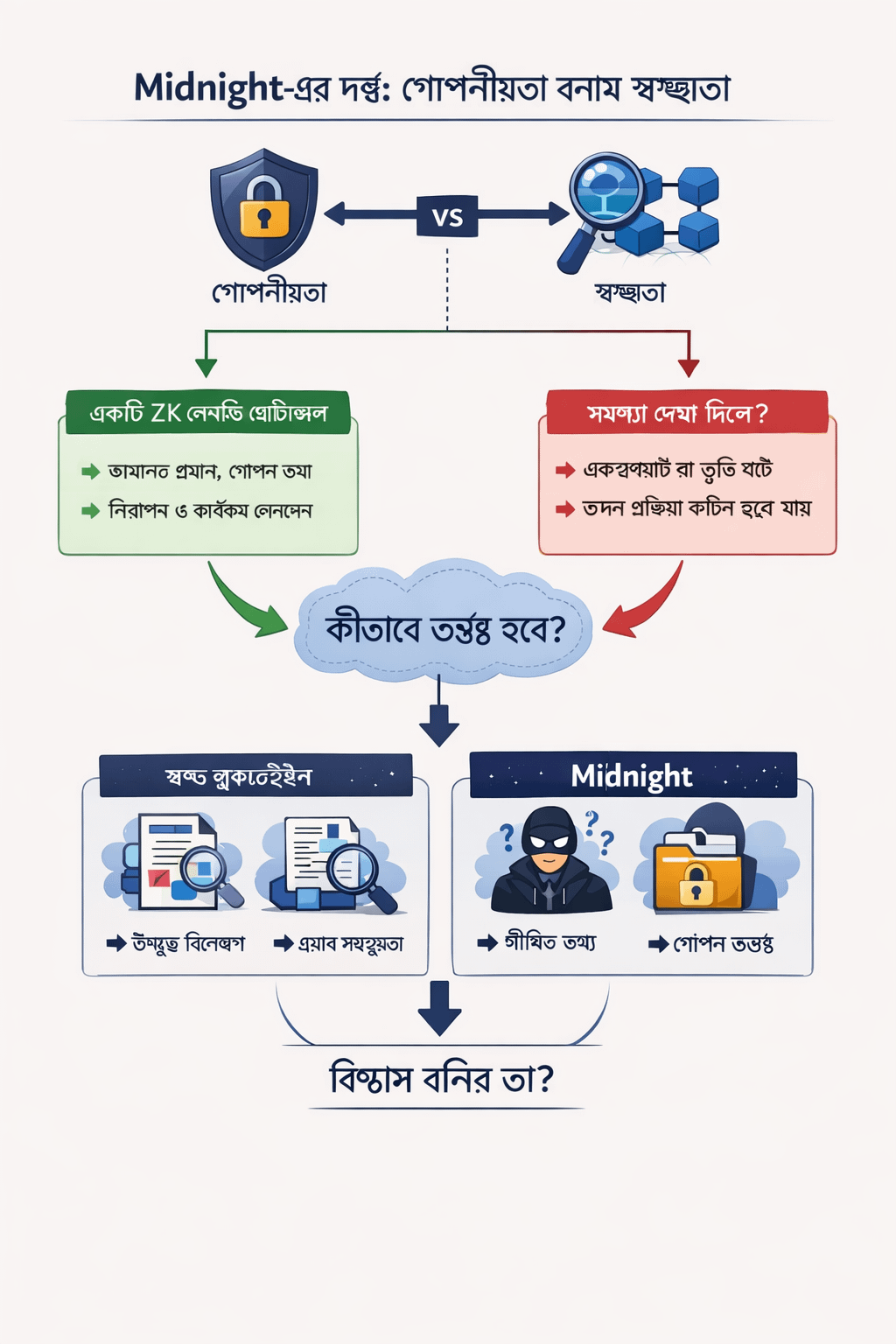

I really want to believe in what Midnight is building. The problem it addresses is genuine. Anyone who has seriously considered where blockchain infrastructure needs to evolve knows that transparency-only models eventually hit a wall.

Public ledgers are excellent for trustless verification, but they fall apart when sensitive commercial data, personal information, or regulated institutions enter the picture. Midnight steps into that gap with a compelling offer: zero-knowledge proofs baked into a programmable smart contract environment, a familiar language for developers, and privacy treated as a foundational layer rather than an afterthought. On paper, it makes sense.

But underneath that vision sits a tension the project hasn’t fully addressed.

Privacy and verifiability aren’t just technical opposites. They’re social opposites. How Midnight handles this balance matters more than any cryptographic detail.

Here’s what I mean.

Imagine a lending protocol built on Midnight. A borrower proves they meet the collateral requirements without revealing their full financial position. The lender gets the assurance it needs, and the borrower exposes nothing beyond that. The ZK proof does its job. Clean and efficient.

Now imagine that same protocol gets exploited.

Maybe the proof logic contains an edge case the developers didn’t foresee. Maybe the Compact contract (Compact is Midnight’s smart contract language) has a subtle flaw that lets someone manipulate the collateral check under the right conditions. Funds move. Something clearly broke. And now the community needs to investigate what happened, inside a system intentionally designed to obscure the details of what happened.

This isn’t a contrived scenario. It’s the central design dilemma of privacy-first financial infrastructure.

Traditional blockchains are ugly when they fail, but they’re transparent about why. Every transaction, every interaction, every state change lives on a public record that independent analysts can examine. Exploits are reconstructed. Postmortems are written. The community learns because the evidence is visible.

Midnight’s confidentiality features intentionally limit that visibility. The same mechanism that protects users in normal operation becomes a barrier to accountability when something breaks.

The project might respond that ZK proofs themselves provide a verification layer—that the network can confirm correctness without exposing data. But that answer sidesteps the harder question.

Proofs verify what they’re programmed to verify. They don’t catch what they weren’t designed to check. When a contract behaves unpredictably, the real question isn’t whether the proof verified correctly. It’s whether the contract logic was ever sound to begin with. Auditing logic you can’t fully inspect from the outside is a fundamentally harder problem than auditing a transparent contract.
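A toy illustration of that point follows. It is written in plain Python rather than Compact and involves no actual zero-knowledge machinery. It models only the yes/no statement that a hypothetical collateral proof might encode. The names `Asset`, `collateral_statement`, and `collateral_statement_fixed`, and the duplicate-asset flaw itself, are invented for illustration. The idea is that a prover who satisfies the flawed statement would get a proof that verifies. The proof attests to the statement as written, not to the statement the developers meant.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class Asset:
    asset_id: str  # hypothetical identifier for a collateral position
    value: int     # value in the smallest currency unit


def collateral_statement(assets: list[Asset], required: int) -> bool:
    """Hypothetical predicate a collateral circuit might encode.

    The verifier would learn only True/False, never the asset list.
    Flaw: nothing checks that asset_ids are unique, so the same asset
    can be counted more than once.
    """
    return sum(a.value for a in assets) >= required


def collateral_statement_fixed(assets: list[Asset], required: int) -> bool:
    """Same statement with the missing constraints added."""
    ids = [a.asset_id for a in assets]
    if len(ids) != len(set(ids)):
        return False  # reject assets listed more than once
    if any(a.value < 0 for a in assets):
        return False  # reject negative values
    return sum(a.value for a in assets) >= required


honest = [Asset("vault-1", 600)]
padded = [Asset("vault-1", 600), Asset("vault-1", 600)]

print(collateral_statement(honest, 1000))        # False
print(collateral_statement(padded, 1000))        # True: flawed statement satisfied
print(collateral_statement_fixed(padded, 1000))  # False
```

The verification step does not change between the two versions. Only the predicate changes. Spotting the missing uniqueness check means reading and reasoning about the contract logic itself. A valid proof says nothing about whether that logic was right.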

Compact genuinely lowers the barrier for developers, and that is a real improvement. But a lower barrier also means more developers, with varying levels of cryptographic experience, writing contracts that users will rely on for privacy. The combination of accessible tooling and opaque execution isn’t obviously safe.

Midnight talks about "rational privacy" as a solution. But rational privacy requires rational implementation. And rational implementation requires accountability mechanisms that don’t conflict with the privacy model itself.

The question I keep circling back to is simple.

When a Midnight-based application fails in a way that hurts users, what does the investigation actually look like? And if the answer depends heavily on developer cooperation rather than public auditability—has the network quietly reintroduced the trust assumptions it was supposed to remove?