I remember driving my car, kind of zoned out and thinking about a lot of things. Since the future will involve AI and robots, I started wondering how humans will learn to trust them.

@Fabric Foundation $ROBO #ROBO

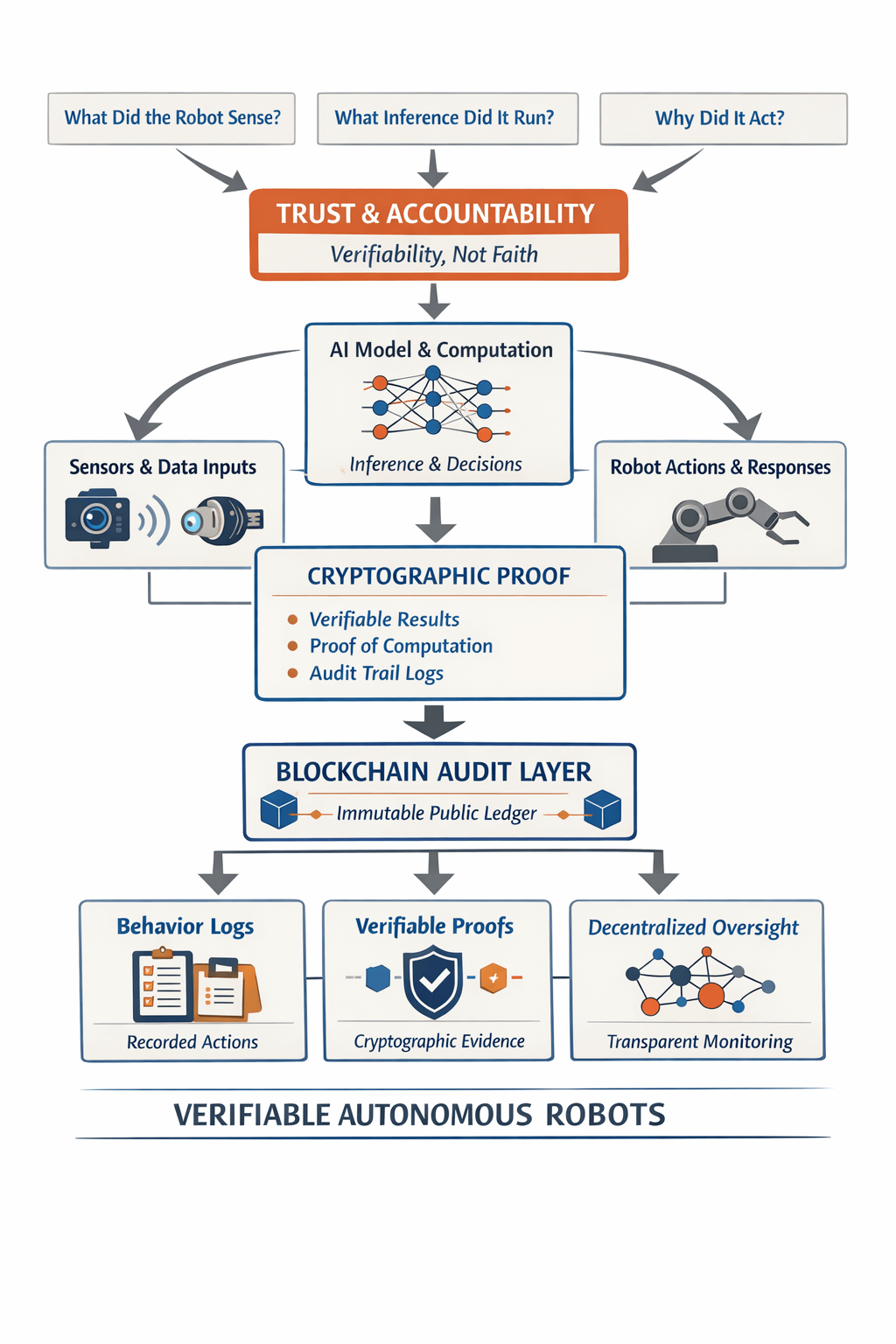

I write as a researcher who cares about both code and consequences. Trust is not a single technical property; it is a bundle of practices. Accountability means knowing what a robot sensed, what inference it ran, and why it acted. For autonomous systems that operate among people, we need verifiability, not faith.

Verifiable computing makes it possible to attach a cryptographic proof to a particular computation. That proof shows that a model ran on specific inputs and produced the claimed outputs. A blockchain can serve as an audit layer that anchors those proofs in an immutable record. Agent-native infrastructure can make each robot behave like a verifiable actor in a larger network. When behavior logs and evidence are coordinated via a public ledger, oversight becomes possible at scale.
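To make the anchoring idea concrete, here is a minimal sketch in Python. It is a deliberately simplified stand-in: a plain SHA-256 commitment only binds a model identifier to its inputs and outputs, and does not prove the model was actually run the way a real verifiable-computing proof would. The `AuditLog` class is a toy hash chain in memory, not a blockchain, and every name here (`commit`, `AuditLog`, `planner-v1`) is hypothetical rather than part of Fabric Protocol.

```python
import hashlib
import json


def commit(model_id, inputs, outputs):
    """Return a SHA-256 digest binding a model identifier to its inputs and outputs."""
    # sort_keys gives a stable serialization, so equal data yields an equal digest.
    payload = json.dumps(
        {"model": model_id, "inputs": inputs, "outputs": outputs},
        sort_keys=True,
    )
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


class AuditLog:
    """Toy append-only log: each entry includes the hash of the previous entry."""

    GENESIS = "0" * 64

    def __init__(self):
        self.entries = []

    def anchor(self, commitment):
        """Append a commitment, chaining it to the previous entry's hash."""
        prev = self.entries[-1]["entry_hash"] if self.entries else self.GENESIS
        entry_hash = hashlib.sha256((prev + commitment).encode("utf-8")).hexdigest()
        entry = {"prev": prev, "commitment": commitment, "entry_hash": entry_hash}
        self.entries.append(entry)
        return entry

    def verify(self):
        """Recompute the chain and report whether every link is intact."""
        prev = self.GENESIS
        for entry in self.entries:
            expected = hashlib.sha256(
                (prev + entry["commitment"]).encode("utf-8")
            ).hexdigest()
            if entry["prev"] != prev or entry["entry_hash"] != expected:
                return False
            prev = entry["entry_hash"]
        return True


# Hypothetical example: two decisions from an imaginary planner model.
log = AuditLog()
log.anchor(commit("planner-v1", {"obstacle_m": 2.5}, {"action": "stop"}))
log.anchor(commit("planner-v1", {"obstacle_m": 9.0}, {"action": "go"}))
print(log.verify())
```

If anyone later rewrites an anchored commitment, `verify()` no longer succeeds, which is the tamper-evidence property an audit layer depends on. A real ledger adds what this sketch lacks: replication across independent parties, so no single operator can quietly rebuild the whole chain.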

This is where @Fabric Foundation and Fabric Protocol come into view for me. Fabric provides modular building blocks for coordinating data, computation, and governance across fleets. The $ROBO token can play a practical role in aligning incentives for validation and dispute resolution. Tokens can reward third-party verifiers who run proofs. Tokens can allocate governance weight to participants who contribute robust sensors or high-integrity compute. In short, tokens can help make the economics of verification consistent with safety goals.

There are real limitations to face. Proof latency can make real-time control difficult. Privacy concerns arise when sensor logs contain personal information. Scalability is not trivial when each robot produces large volumes of evidence. Incentive design is delicate because poorly designed rewards create perverse incentives for verifiers. Governance must be resilient against capture and manipulation. These are not abstract problems. They are engineering and social design problems that must be solved together.

Imagine a delivery robot that must choose between crossing a busy street and waiting. A verifiable trace could show sensor snapshots, model confidence, and the exact sequence of actions. That trace could be audited after an incident. With ledger-anchored proofs, communities can learn systematic failure modes and update governance rules. Yet access rules must protect personal privacy, and legal frameworks must tie proofs to remedies for harmed people.
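One way to picture such a trace is sketched below in Python. The trace stores only a digest of each sensor snapshot, so the raw frame can stay under access control and be checked later if it is disclosed to an auditor. All names (`TraceStep`, `record_step`, `snapshot_matches`) and the sample frames are hypothetical illustrations, not a Fabric Protocol format. A bare hash is also not a privacy guarantee on its own, since low-entropy data can be guessed and re-hashed.

```python
import hashlib
import json
from dataclasses import dataclass, asdict


def digest(data):
    """SHA-256 hex digest of raw bytes."""
    return hashlib.sha256(data).hexdigest()


@dataclass(frozen=True)
class TraceStep:
    step: int
    snapshot_digest: str  # hash of the raw sensor frame, not the frame itself
    confidence: float     # model confidence reported for this decision
    action: str           # e.g. "wait" or "cross"


def record_step(step, raw_snapshot, confidence, action):
    """Create one trace entry without storing the raw snapshot."""
    return TraceStep(step, digest(raw_snapshot), confidence, action)


def trace_digest(trace):
    """Single digest over the ordered trace, suitable for anchoring in a ledger."""
    payload = json.dumps([asdict(s) for s in trace], sort_keys=True)
    return digest(payload.encode("utf-8"))


def snapshot_matches(step, disclosed_snapshot):
    """Check that a snapshot disclosed to an auditor is the one the robot recorded."""
    return digest(disclosed_snapshot) == step.snapshot_digest


# Hypothetical incident: the robot waits for a truck, then crosses.
frames = [b"frame-0: truck approaching", b"frame-1: road clear"]
trace = [
    record_step(0, frames[0], 0.41, "wait"),
    record_step(1, frames[1], 0.93, "cross"),
]
anchored = trace_digest(trace)

# After the incident, an auditor with access to frame 0 checks it against the trace.
print(snapshot_matches(trace[0], frames[0]))
```

The design choice here is to separate what is published from what is protected: the ordered actions, confidences, and digests can be anchored publicly, while the frames themselves are released only under whatever access rules and legal process apply.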

I am cautiously optimistic. Technical tools like verifiable computing, blockchains, and agent-native layers can expand what we can audit and who can oversee. Social institutions will decide which proofs are meaningful and how rights are protected. Trust in machines is both a technical problem and a social one. The work by @Fabric Foundation and the coordination role of $ROBO warrant careful study as practical architectures for accountable autonomy.

@Fabric Foundation #ROBO