The moment that made me start thinking seriously about machine coordination was surprisingly ordinary. I was watching a short video of warehouse robots moving shelves across a floor. One robot paused, another rerouted, a third waited for a path to clear. It looked smooth. But what stayed with me was the quiet question underneath the movement. How do all these machines actually agree on what is happening?

That question gets more interesting once machines stop operating inside closed systems. Inside a single warehouse, one company controls the robots, the software, and the data. Trust is easy because the environment is controlled. But once machines start operating across open environments, coordination becomes something else entirely.

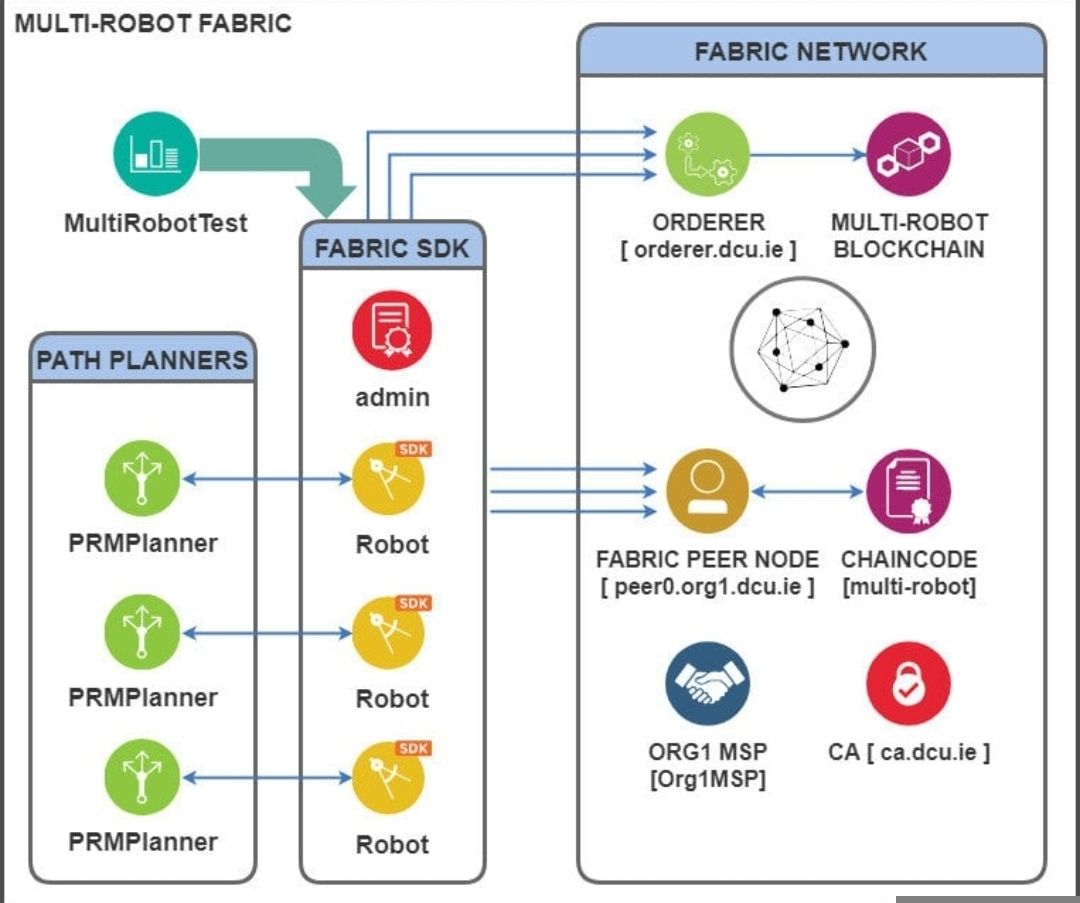

That is the problem the Fabric Foundation is trying to address. Not by building robots themselves, but by building a system that records and verifies machine activity.

When I first looked at this idea, it sounded abstract. A ledger for robots. But the logic becomes clearer once you think about how autonomous machines actually work.

Every machine produces data while it operates. Sensors track movement. GPS signals record location. Internal logs measure speed, temperature, battery usage. This stream of operational data is usually called telemetry. It is basically the machine’s diary of what it did.

On the surface, Fabric’s system records actions using something they call proof of action. The term simply means evidence that a machine completed a task. If a robot claims it cleaned a floor, inspected a bridge, or delivered a package, the system collects signals that support that claim.
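To make that concrete, here is a minimal Python sketch of what a proof-of-action claim might bundle together: the task a machine says it completed, the time window, and the telemetry offered as evidence. Fabric's actual data format isn't described here, so `TelemetrySample`, `ActionClaim`, and every field below are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class TelemetrySample:
    """One reading from a machine's telemetry stream (hypothetical fields)."""
    timestamp: float    # seconds since the start of the shift
    lat: float          # GPS latitude, degrees
    lon: float          # GPS longitude, degrees
    odometer_m: float   # cumulative wheel-odometry distance, metres
    battery_pct: float  # remaining battery, percent


@dataclass
class ActionClaim:
    """A machine's claim that it completed a task, plus supporting telemetry.

    Hypothetical structure for illustration only, not Fabric's real format.
    """
    machine_id: str
    task: str
    start: float
    end: float
    evidence: list[TelemetrySample] = field(default_factory=list)

    def evidence_covers_claim(self) -> bool:
        """Cheap pre-check: evidence exists, is time-ordered, and sits inside the claimed window."""
        if not self.evidence:
            return False
        times = [s.timestamp for s in self.evidence]
        in_order = all(a <= b for a, b in zip(times, times[1:]))
        return in_order and self.start <= times[0] and times[-1] <= self.end


claim = ActionClaim("robot-7", "deliver package", start=0.0, end=600.0, evidence=[
    TelemetrySample(10.0, 52.5200, 13.4050, 0.0, 90.0),
    TelemetrySample(590.0, 52.5290, 13.4050, 1000.0, 85.0),
])
print(claim.evidence_covers_claim())  # True
```

A check like this only confirms that the evidence is well-formed. Whether it tells a believable story is still the validators' job.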

Underneath that surface layer is the coordination system. Validators inside the network review those signals and determine whether the action is credible. In simple terms, validators check whether the machine’s data tells a believable story.

Understanding that layer helps explain why this idea exists at all.

The robotics industry is expanding quickly. Global spending on robots reached roughly 50 billion dollars in 2023. Meanwhile, more than 3.5 million industrial robots are already operating worldwide. Those machines mainly live inside factories, but service robots are starting to appear in logistics, retail, and city infrastructure.

Now imagine those machines interacting across shared spaces.

A delivery robot crosses a city block. A drone inspects infrastructure above it. A cleaning robot maintains the sidewalk area nearby. Each machine belongs to a different operator. Each reports its work to different systems.

Without coordination, nobody has a reliable record of what actually happened.

That gap matters more than it first appears. Autonomous machines do not just move objects. They perform tasks that eventually connect to payments, maintenance logs, insurance claims, and public accountability.

If a drone reports inspecting a bridge, someone needs evidence that the inspection actually occurred. Otherwise the entire automation system becomes difficult to trust.

Fabric’s network tries to solve that by turning machine actions into verifiable records. Validators review the evidence. If enough validators agree that the signals look credible, the action becomes part of the network’s ledger.
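The "enough validators agree" step can be pictured as a simple quorum vote. This is a minimal sketch, not Fabric's consensus rule: the two-thirds threshold, the minimum of three validators, and the names `reach_verdict` and `record_if_accepted` are all assumptions.

```python
from collections import Counter

# Hypothetical parameters, chosen only for illustration.
QUORUM = 2 / 3
MIN_VALIDATORS = 3

ledger: list[str] = []  # ids of actions accepted so far


def reach_verdict(votes: dict[str, bool]) -> str:
    """Return 'accepted', 'rejected', or 'pending' for one action claim.

    votes maps each validator id to True (signals look credible) or False.
    """
    if len(votes) < MIN_VALIDATORS:
        return "pending"
    tally = Counter(votes.values())
    if tally[True] / len(votes) >= QUORUM:
        return "accepted"
    if tally[False] / len(votes) >= QUORUM:
        return "rejected"
    return "pending"  # no supermajority either way


def record_if_accepted(action_id: str, votes: dict[str, bool]) -> str:
    """Append the action to the ledger only if the validators reach the quorum."""
    verdict = reach_verdict(votes)
    if verdict == "accepted":
        ledger.append(action_id)
    return verdict


print(record_if_accepted("delivery-42", {"v1": True, "v2": True, "v3": False}))  # accepted
print(ledger)  # ['delivery-42']
```

Requiring a supermajority in both directions leaves a "pending" zone, which is where split judgments about messy evidence would end up.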

In many ways the idea echoes early blockchain thinking. Bitcoin built its consensus on proof of work, where miners prove computational effort by solving cryptographic puzzles. Fabric explores something different. It tries to measure physical effort performed by machines.
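The asymmetry behind proof of work is easy to demonstrate. The sketch below is a simplified version in Python: it searches for a nonce whose SHA-256 hash starts with a given number of zero hex digits. Real Bitcoin hashes a block header twice with SHA-256 and compares the result against a numeric target, so treat `pow_hash`, `mine`, and `verify` as illustrative names, not Bitcoin's code.

```python
import hashlib


def pow_hash(block_data: bytes, nonce: int) -> str:
    """Hash the block data together with an 8-byte nonce."""
    return hashlib.sha256(block_data + nonce.to_bytes(8, "big")).hexdigest()


def mine(block_data: bytes, difficulty: int) -> int:
    """Brute-force search: try nonces until the hash starts with `difficulty` zero hex digits."""
    target = "0" * difficulty
    nonce = 0
    while not pow_hash(block_data, nonce).startswith(target):
        nonce += 1
    return nonce


def verify(block_data: bytes, nonce: int, difficulty: int) -> bool:
    """Verification is a single hash, no matter how long mining took."""
    return pow_hash(block_data, nonce).startswith("0" * difficulty)


nonce = mine(b"robot-ledger-block", 4)
print(verify(b"robot-ledger-block", nonce, 4))  # True
```

At difficulty 4, mining takes tens of thousands of hash attempts on average, while verifying takes exactly one. There is no equally cheap check for whether a robot really cleaned a floor.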

The difference is important.

Computational work is easy to verify. You can check the cryptographic result instantly. Physical work is far more complicated. Sensors drift. GPS readings sometimes shift by several meters. Machines occasionally report incomplete data.

That means verification becomes a judgment problem rather than a purely mathematical one.

For example, imagine a delivery robot reporting a two-kilometer route across a city. GPS data might confirm most of the path but show small location inconsistencies. Meanwhile, wheel sensors inside the robot might report consistent movement during the same time period.

Validators looking at this evidence must decide whether the signals together form a believable record. No single piece of data is perfect. Credibility emerges from multiple signals pointing in the same direction.
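One simple way to picture "multiple signals pointing in the same direction" is to compare the distance implied by the GPS fixes with the distance reported by wheel odometry. The sketch below assumes (lat, lon) fixes in degrees and an odometry total in metres; the 15% tolerance and the function names are hypothetical, and a real validator would weigh far more than two signals.

```python
import math

EARTH_RADIUS_M = 6_371_000.0  # mean Earth radius


def haversine_m(p: tuple[float, float], q: tuple[float, float]) -> float:
    """Great-circle distance in metres between two (lat, lon) points in degrees."""
    lat1, lon1 = map(math.radians, p)
    lat2, lon2 = map(math.radians, q)
    dlat, dlon = lat2 - lat1, lon2 - lon1
    a = math.sin(dlat / 2) ** 2 + math.cos(lat1) * math.cos(lat2) * math.sin(dlon / 2) ** 2
    return 2 * EARTH_RADIUS_M * math.asin(math.sqrt(a))


def gps_path_length_m(points: list[tuple[float, float]]) -> float:
    """Sum of straight-line hops between consecutive GPS fixes."""
    return sum(haversine_m(a, b) for a, b in zip(points, points[1:]))


def signals_agree(points: list[tuple[float, float]], odometry_m: float,
                  tolerance: float = 0.15) -> bool:
    """True if GPS path length and wheel odometry agree within a relative tolerance."""
    if odometry_m <= 0:
        return False
    gps_m = gps_path_length_m(points)
    return abs(gps_m - odometry_m) / odometry_m <= tolerance


route = [(52.000, 13.0), (52.009, 13.0), (52.018, 13.0)]  # roughly 2 km due north
print(signals_agree(route, odometry_m=2000.0))  # True
print(signals_agree(route, odometry_m=1000.0))  # False
```

Neither signal is trusted on its own; the claim only looks credible when two independent measurements tell roughly the same story.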

This layered verification creates the coordination structure Fabric is experimenting with.

On the surface, validators receive token rewards for confirming machine actions. Incentives exist because verification takes time and attention. Someone needs to evaluate the signals.

Underneath that incentive layer sits the real economic question. Can machine labor become measurable in a shared system?

If a robot cleans a public park, that activity could become a recorded event. If a drone inspects power lines, the inspection becomes verifiable data. Over time these records could form a ledger of machine work performed in the real world.

When I first thought about this structure, it reminded me of how digital markets evolved. Early internet systems focused on sharing information. Later platforms began measuring activity, tracking engagement, and assigning value to those actions.

Machines might be entering a similar phase.

Still, coordination networks like this carry risks.

Validators are motivated by rewards, but incentives sometimes create strange behavior. Crypto history offers plenty of examples. During the liquidity mining boom of 2020, some protocols attracted billions of dollars in deposits almost overnight. But many participants were chasing short-term rewards rather than supporting the system over the long term.

A similar dynamic could appear in machine verification networks.

If verification rewards become attractive enough, some validators might approve questionable data just to increase earnings. Others might reject legitimate actions if the signals look unfamiliar. Incentive design becomes extremely important.
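As one hypothetical illustration of that design space (not Fabric's published mechanism), a network could pay only the validators whose vote matched the final verdict and take a slice of stake from those who voted against it. The function name, the stake model, and the 10% penalty below are assumptions.

```python
def settle_rewards(votes: dict[str, bool], verdict: bool, reward_pool: float,
                   stakes: dict[str, float], penalty_rate: float = 0.1) -> dict[str, float]:
    """Return each validator's balance change for one settled claim.

    Hypothetical scheme: validators who matched the final verdict split the
    reward pool; those who did not lose penalty_rate of their stake.
    """
    winners = [v for v, vote in votes.items() if vote == verdict]
    share = reward_pool / len(winners) if winners else 0.0
    return {
        v: share if vote == verdict else -penalty_rate * stakes[v]
        for v, vote in votes.items()
    }


changes = settle_rewards(
    {"v1": True, "v2": True, "v3": False},
    verdict=True,
    reward_pool=30.0,
    stakes={"v1": 100.0, "v2": 100.0, "v3": 100.0},
)
print(changes)  # {'v1': 15.0, 'v2': 15.0, 'v3': -10.0}
```

Notice what this rewards: agreement with the majority, not truth. That discourages rubber-stamping questionable data, but it can also push validators to reject unfamiliar yet legitimate actions just to stay with the crowd.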

Another challenge sits in the quality of sensor data itself.

Machines operating in the real world produce messy information. Urban GPS signals bounce between buildings. Environmental sensors degrade over time. Even honest machines sometimes produce confusing data patterns.
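Some of that noise can be filtered before validators ever see it. A common trick is to discard GPS fixes that would imply physically impossible movement. The sketch assumes fixes already projected into a local planar frame in metres, `(timestamp_s, x_m, y_m)`, and a hypothetical 3 m/s speed limit for a sidewalk robot.

```python
import math


def filter_gps_jumps(samples: list[tuple[float, float, float]],
                     max_speed_mps: float = 3.0) -> list[tuple[float, float, float]]:
    """Drop GPS fixes that would imply the robot moved faster than max_speed_mps.

    Each fix is compared with the last *kept* fix, so one bad reading
    does not cause the next good reading to be rejected.
    """
    if not samples:
        return []
    kept = [samples[0]]
    for t, x, y in samples[1:]:
        t0, x0, y0 = kept[-1]
        dt = t - t0
        if dt <= 0:
            continue  # duplicate or out-of-order timestamp
        speed = math.hypot(x - x0, y - y0) / dt
        if speed <= max_speed_mps:
            kept.append((t, x, y))
    return kept


# The fix at t=2 jumps 79 m in one second, as a reflection off a building might.
print(filter_gps_jumps([(0, 0, 0), (1, 1, 0), (2, 80, 0), (3, 3, 0)]))
# [(0, 0, 0), (1, 1, 0), (3, 3, 0)]
```

This simple version trusts the first fix, so a bad starting reading would cause later good fixes to be dropped. Filtering narrows the uncertainty; it does not remove it.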

A ledger can organize information, but it cannot remove uncertainty from physical environments.

Meanwhile the broader market context makes this experiment interesting.

AI systems are spreading rapidly across logistics and automation. Warehouses are becoming increasingly autonomous. Inspection drones are already being deployed in infrastructure monitoring. Some forecasts suggest service robot markets could reach over 170 billion dollars by the early 2030s.

If even a small percentage of those machines interact through shared verification systems, coordination infrastructure becomes extremely valuable.

Early signs suggest the industry is starting to think about this layer more seriously. Companies deploying autonomous fleets are realizing that verifying machine activity across multiple organizations is not trivial. The problem is quiet, but fundamental.

Fabric’s approach tries to build that foundation early.

Whether the system scales remains uncertain. Robotics infrastructure is still evolving. Many machines today operate inside tightly controlled environments where external verification is unnecessary.

But the direction of travel seems clear. Machines are slowly moving into open environments where their actions intersect with public systems, markets, and regulations.

Once that happens, coordination becomes unavoidable.

When I step back and look at this idea, what stands out is how subtle the problem is. We spend a lot of time talking about how intelligent machines are becoming. We talk about AI models, autonomy levels, and performance benchmarks.

But intelligence alone does not create reliable systems.

At some point someone needs to answer a much simpler question.

Did the machine actually do the work it claimed to do?