@Mira - Trust Layer of AI

I keep coming back to one uncomfortable fact about modern AI: a machine can sound certain even when it is wrong, and that gap between confidence and truth sits underneath much of the unease around these systems today. What draws me to Mira Network is that it comes at the problem from a different direction. Instead of asking only how strong a model can become, it asks how an answer can be checked before anyone trusts it. That feels like a more serious question to me, and it also feels closer to where this technology has to go if it is going to matter in everyday life.

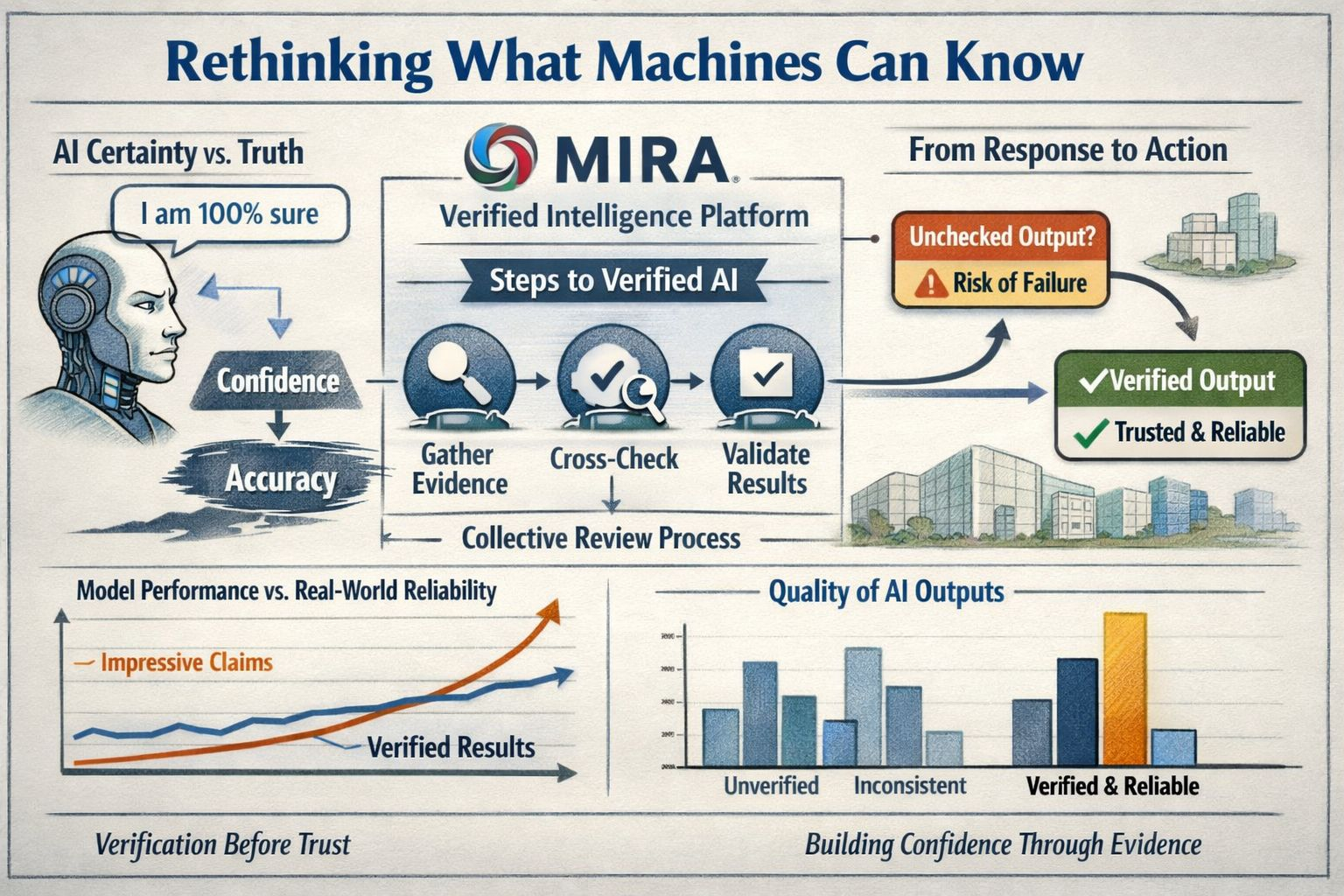

Mira describes itself as a platform for verified intelligence, and once I strip away the branding the idea becomes fairly plain. A model should not be treated as a source of knowledge just because it speaks in smooth language. The network says it verifies outputs and actions step by step through collective intelligence, which shifts attention away from the fantasy of one all-knowing model and toward something more grounded. I think that matters because it puts more value on process and review and less on performance that only looks convincing on the surface.
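To make that idea concrete for myself, here is a minimal sketch of what "checking an answer step by step through several independent reviewers" could look like. It is not Mira's implementation or API. The function names (`verify_claim`, `verify_output`), the three toy checkers, and the two-thirds threshold are all my own assumptions, chosen only to illustrate the general pattern of splitting an output into claims and accepting each one only when enough checkers agree.

```python
from typing import Callable, Dict, List

# A verifier is any function that takes a claim and returns True (supported)
# or False (not supported). In a real system each one might wrap a different
# model; here they are plain functions (an assumption for illustration).
Verifier = Callable[[str], bool]


def verify_claim(claim: str, verifiers: List[Verifier], threshold: float = 2 / 3) -> bool:
    """Accept a claim only if at least `threshold` of the verifiers support it."""
    if not verifiers:
        raise ValueError("at least one verifier is required")
    votes = [verifier(claim) for verifier in verifiers]
    return sum(votes) / len(votes) >= threshold


def verify_output(claims: List[str], verifiers: List[Verifier], threshold: float = 2 / 3) -> Dict[str, bool]:
    """Check each claim separately, so one bad step cannot hide behind the rest."""
    return {claim: verify_claim(claim, verifiers, threshold) for claim in claims}


# Toy stand-ins for independent checkers (hypothetical, for illustration only).
KNOWN_FACTS = {"water boils at 100 C at sea level"}


def strict_checker(claim: str) -> bool:
    # Supports only claims that match a small trusted set exactly.
    return claim in KNOWN_FACTS


def lenient_checker(claim: str) -> bool:
    # Agrees with everything, like an overconfident model.
    return True


def keyword_checker(claim: str) -> bool:
    # A crude heuristic: supports claims that mention the qualifying condition.
    return "sea level" in claim


if __name__ == "__main__":
    claims = [
        "water boils at 100 C at sea level",
        "water boils at 50 C everywhere",
    ]
    checkers = [strict_checker, lenient_checker, keyword_checker]
    for claim, accepted in verify_output(claims, checkers).items():
        print(f"{'ACCEPT' if accepted else 'REJECT'}: {claim}")
```

The lenient checker is there on purpose. It shows that a vote is only as good as the reviewers behind it: if most of them were that agreeable, the second claim would pass too.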

The timing also makes sense. Over the past year the AI conversation has moved beyond chat and further into systems that can take action on a user's behalf. That shift has made reliability harder to ignore, because once a model starts doing things instead of only saying things, the cost of a bad answer changes. At the same time, the wider market still seems unsure about what will hold up in practice. Some organizations are pushing ahead with these systems, while others are already being warned that many projects could stall or fail if the value is weak and the controls are not strong enough. To me, that tension explains why verification has started to feel less like a niche concern and more like the center of the discussion.

What makes Mira more than an abstract idea in my eyes is that it is trying to turn this logic into usable tools instead of leaving it as theory. Its public material keeps returning to the same theme: AI output should be testable before people build on it or act on it. I tend to pay attention when a company moves from concept to product language, because that is the point where a claim has to face ordinary use rather than polished explanation. It is one thing to say that trust matters and another to design around it. Mira seems intent on doing the second.

I do think some caution belongs here. Verification is not the same as truth, and company material is never the same as independent proof. A system can still check against weak evidence or narrow assumptions and come away sounding more reliable than it really is. Even so, I think Mira is pressing on the right weakness in the current AI cycle. Most people do not care who won the latest model contest if they still cannot trust the output in work that carries real weight. The earlier story of AI was mostly about raw capability. The story now feels more practical and a little more adult, because it is increasingly about whether these systems can be used with confidence when the stakes are real.

What Mira is really rethinking, in my view, is not just what machines can say but what they can know in a form that deserves human trust. That is a higher bar and also a more useful one. It treats knowledge as something that has to survive checking, disagreement, and scrutiny rather than something produced by style alone. I find that shift refreshing because it is quieter and more grounded than much of the language surrounding AI right now. While the industry still chases smarter systems, Mira Network seems to be making a steadier point that may end up mattering more: intelligence has value, but verified intelligence is what people can actually build around.