When you dive into the privacy architecture of decentralized verification, it is easy to get lost in dressed-up marketing language. However, looking closely at MIRA’s ($MIRA ) privacy sharding reveals an architecture that is genuinely sophisticated—yet arguably flawed in its earliest stages of data processing.

MIRA positions privacy sharding as a core confidentiality guarantee, enabling the trustless verification of sensitive enterprise data. But does the technology actually match the marketing? Here is a deep-dive analysis of what MIRA's sharding actually protects, and the critical vulnerabilities it quietly leaves exposed.

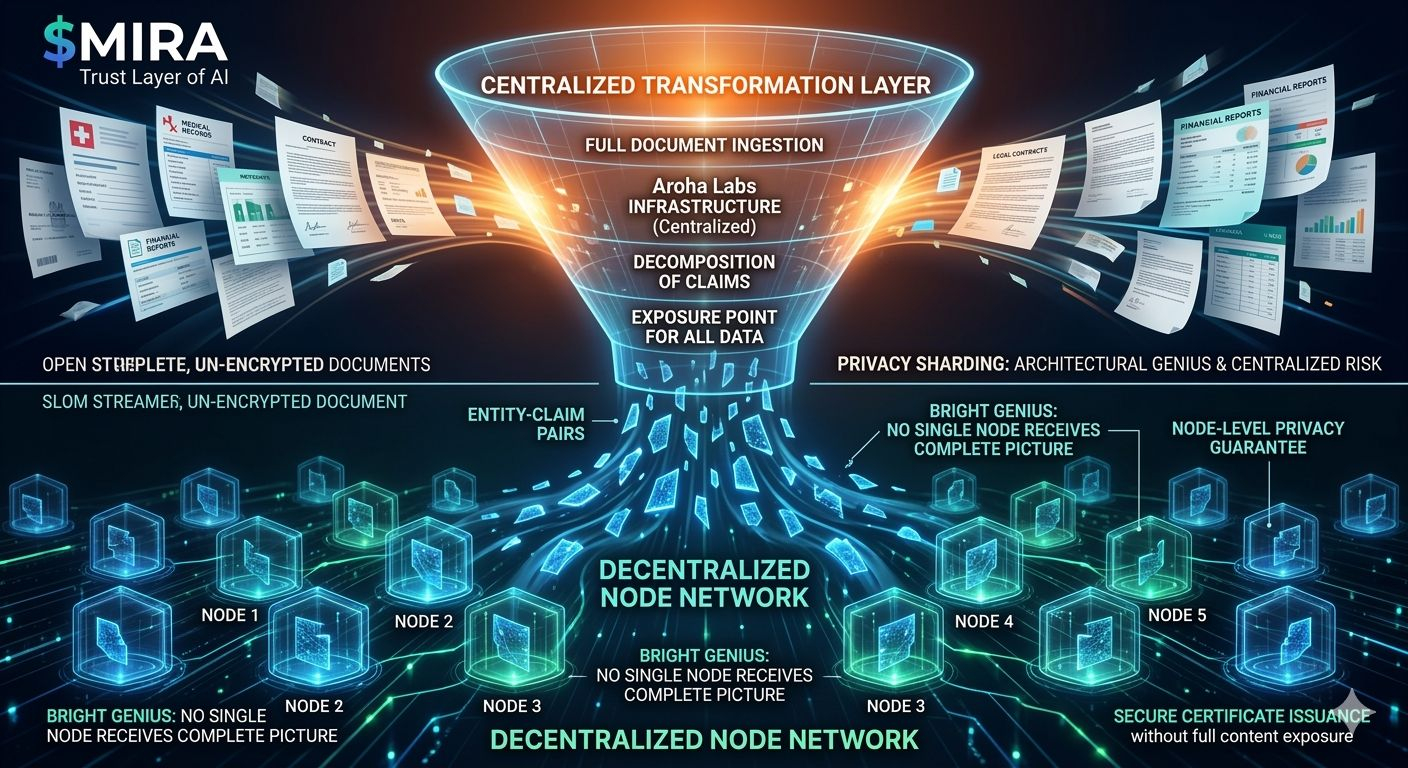

The Genius: Node-Level Privacy and Claim Decomposition

To understand MIRA’s value proposition, you have to look at how it handles the "exposure problem." Verification typically requires full visibility. MIRA bypasses this through a process called claim decomposition.

When a sensitive document—whether it is a medical record, a legal contract, or a proprietary financial report—is submitted for verification, MIRA does not broadcast the full document to the network. Instead, it shards the data into isolated "entity-claim pairs" and distributes them across multiple nodes.

Node A only verifies claims about Entity 1.

Node B only verifies claims about Entity 2.

Node C only verifies claims about Entity 3.

No single node ever receives the complete contextual picture of the submitted document. This is a monumental leap for decentralized verification. By ensuring that certificates can be issued without exposing the entire payload to any single network operator, MIRA opens the door for strict-compliance sectors (healthcare, legal, institutional finance) to utilize decentralized networks. The protection against a malicious node operator trying to harvest sensitive data is robust and elegantly designed.

The Catch: The Centralized Transformation Layer

This is where the architecture gets complicated, and where institutional compliance teams will likely raise red flags.

Privacy sharding effectively protects content from the node operators. However, it does not protect content from the transformation layer itself.

Before a document can be sharded into those neat, verifiable entity-claim pairs, it must be ingested, read, and decomposed. Currently, this transformation software relies heavily on centralized infrastructure (run by Aroha Labs).

The architectural reality is this:

The centralized transformation layer receives the full, unencrypted, original content.

The centralized software reads every sensitive word, confidential clause, and proprietary financial metric to break it apart.

Only after this centralized processing does the privacy sharding occur.

Therefore, the privacy guarantee that applies at the network level does not exist at the ingestion level. Customers submitting sensitive content are trusting the decentralized nodes to see only fragments, but they are still forced to trust a centralized entity to handle the entire unredacted document appropriately. The gap between marketed decentralization and actual centralization during the transformation phase is a significant trust bottleneck.

The Silent Vulnerability: Metadata Leakage

Even if you accept the risks of the transformation layer, the current architecture faces a secondary hurdle: Metadata Analysis.

Sharding obscures the payload content, but it does not inherently obfuscate the metadata surrounding the transaction. In sophisticated network environments, metadata is just as revealing as the data itself. Unprotected metadata signals include:

Submission timing and latency.

Total content size and shard volume.

Domain specifications and routing patterns.

Query frequency from specific institutional wallets or IP addresses.

Advanced heuristic analysis of these patterns can allow external observers to reverse-engineer the nature of the data being verified, even without seeing the sharded content. Until MIRA implements robust metadata obfuscation (such as mixnets or delayed batch processing), this remains an unaddressed vector.

The Verdict: Viable Enterprise Solution or Marketing Illusion?

What MIRA gets right is substantial. Node-level privacy through claim decomposition is a real differentiator from legacy centralized verification models. The ability to issue cryptographic proof of accuracy without exposing the core data to the open network is exactly what the AI and Web3 spaces need to scale into enterprise markets.

However, the centralization of the transformation layer means the system is currently a highly secure vault with a glass front door.

The Path Forward:

For MIRA to truly lock down its privacy guarantees and satisfy strict enterprise compliance (like GDPR, SOC2, or HIPAA), the transformation pipeline must evolve. This will likely require:

Decentralizing the Transformation Layer: Distributing the AI parsing process itself.

Trusted Execution Environments (TEEs): Ensuring the centralized processing happens in secure hardware enclaves where not even the server operators can see the data.

Zero-Knowledge Proofs (ZKPs): Allowing the transformation to occur client-side before it ever touches MIRA's infrastructure.

Until an independent privacy audit of the full pipeline—from ingestion to sharding—is published, the market is left with a burning question.

Is MIRA’s privacy sharding the definitive solution for sensitive content verification, or is it simply a brilliant decentralized protection layer sitting on top of a highly vulnerable, centralized exposure point?

What is your take on MIRA's architectural trade-offs? Let the community know below. 👇

#Mira @Mira - Trust Layer of AI