$MIRA proposes a verification layer for AI that turns model outputs into verifiable claims and then checks those claims across multiple independent verifiers. That framing helps me translate the problem from one of model improvement into one of verification and incentives. If you treat verification like fact-checking, then the question becomes how to reward honest validators and how to measure meaningful adoption.
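
To make that framing concrete for myself, here is a minimal Python sketch of the general idea: split an output into claims, then accept each claim only if a majority of independent verifiers agree. This is my own toy illustration, not Mira's actual protocol or API. Every name in it (`split_into_claims`, `is_verified`, `check_output`, `make_toy_verifier`) is hypothetical, the sentence splitting is deliberately naive, and the strict-majority rule is an assumption I picked for simplicity.

```python
from typing import Callable, Dict, List, Set

# Hypothetical type: a verifier takes a claim and returns True if it accepts it.
Verifier = Callable[[str], bool]


def split_into_claims(output: str) -> List[str]:
    """Naively split a model output into sentence-level claims."""
    return [part.strip() for part in output.split(".") if part.strip()]


def is_verified(claim: str, verifiers: List[Verifier]) -> bool:
    """Accept a claim only if a strict majority of verifiers accept it."""
    approvals = sum(1 for verify in verifiers if verify(claim))
    return approvals * 2 > len(verifiers)


def check_output(output: str, verifiers: List[Verifier]) -> Dict[str, bool]:
    """Map each claim in the output to its majority verdict."""
    return {
        claim: is_verified(claim, verifiers)
        for claim in split_into_claims(output)
    }


def make_toy_verifier(known_facts: Set[str]) -> Verifier:
    """Toy stand-in for an independent model: accepts only claims it 'knows'."""
    return lambda claim: claim in known_facts


# Three toy verifiers; the second one is wrong about the moon.
verifiers = [
    make_toy_verifier({"Paris is the capital of France"}),
    make_toy_verifier({"Paris is the capital of France", "The moon is made of cheese"}),
    make_toy_verifier({"Paris is the capital of France"}),
]

results = check_output(
    "Paris is the capital of France. The moon is made of cheese.",
    verifiers,
)
for claim, accepted in results.items():
    print(f"{claim!r}: {'verified' if accepted else 'rejected'}")
```

In this toy run a single mistaken verifier cannot push a false claim through on its own. The sketch leaves out everything that makes the real problem hard, including how verifiers are selected, how staking penalizes dishonest votes, and how claims are extracted reliably.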

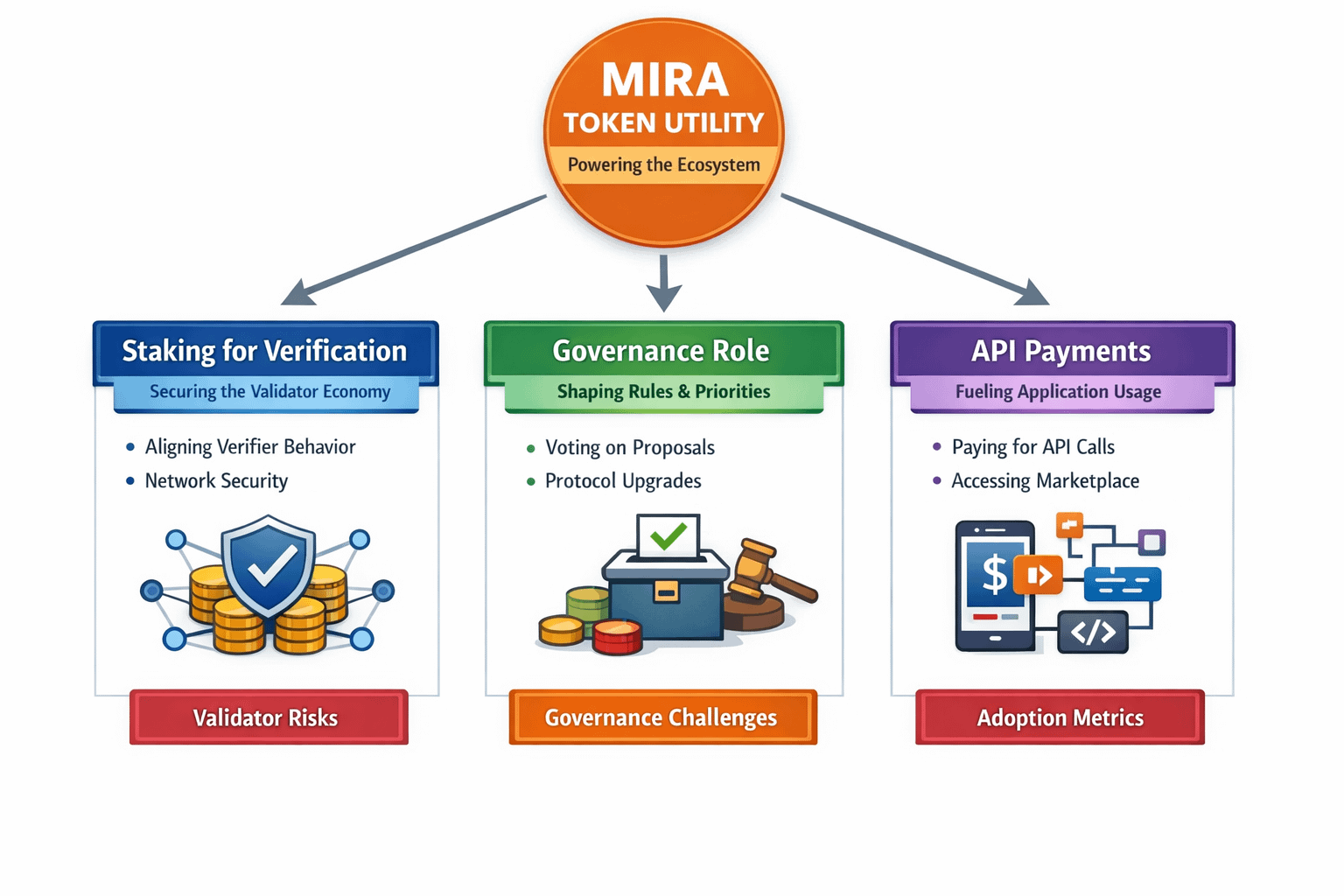

I have been looking at how @Mira - Trust Layer of AI positions $MIRA as a utility token that ties into staking, governance, and payments for API usage. The project documentation and research writeups make it clear that staking is meant to align verifier behavior and that APIs and a flows marketplace are meant to be the immediate surface where developers interact with the system. That makes intuitive sense to me as a potential pathway from research to real usage.

When I try to reason about token utility, I break it down into three practical buckets. Staking for verification secures the validator economy. Governance lets participants shape rules and priorities. API payments create a direct usage link between applications and the network. Each bucket has its own failure modes, and each will need measurable adoption to matter in practice.

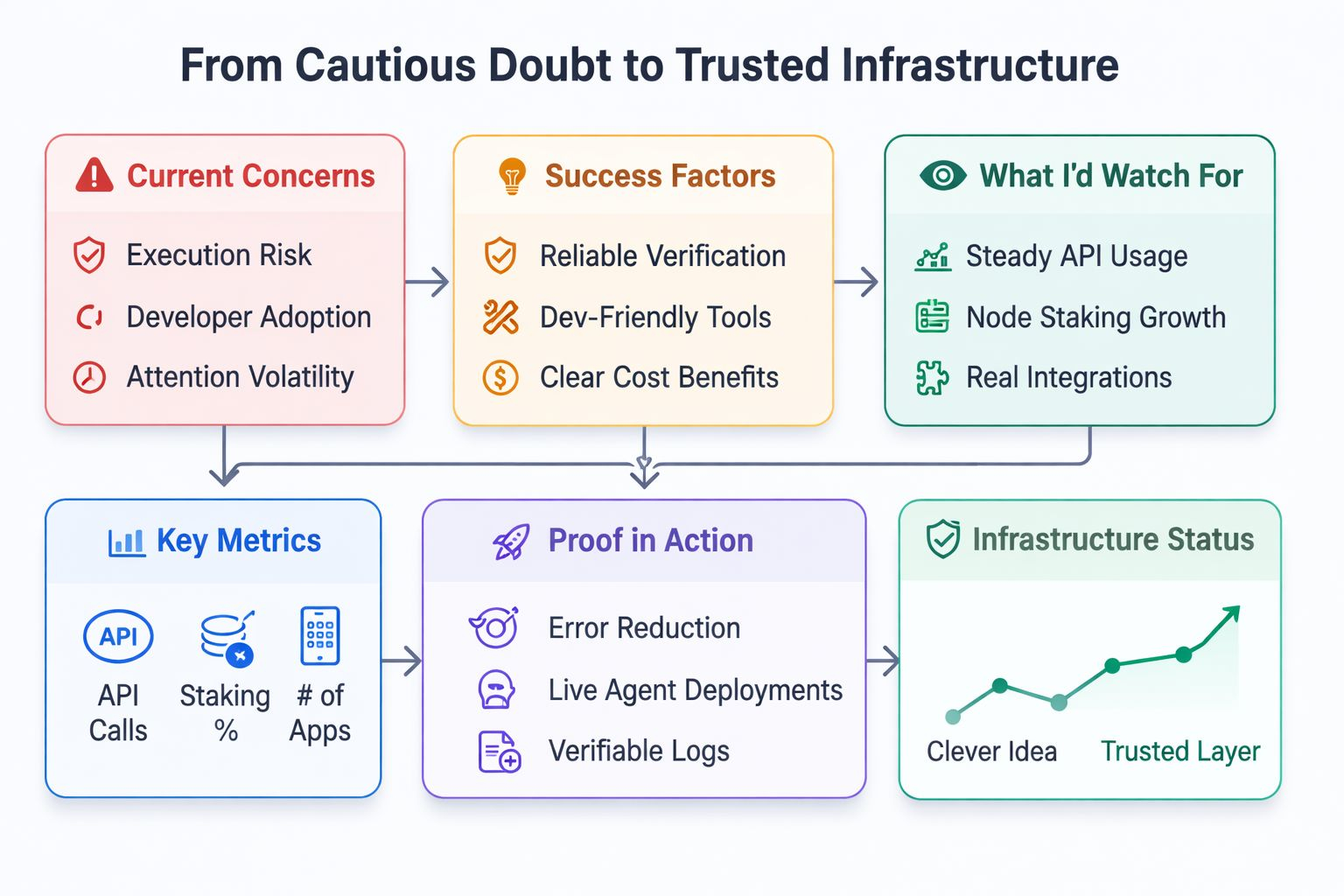

I am still cautious. Execution risk is real. Building secure and reliable verification across many models is hard. Convincing developers to integrate another layer will take clear developer ergonomics and cost benefits. Attention cycles in crypto and AI are fickle. A system that looks compelling in theory can stall if the developer story is weak or if integration costs are high.

So what would convince me this is working? I would look for steady API usage from independent apps. I would look for meaningful staking participation from node operators. I would look for verifiable integrations where the verification layer reduced real-world errors in deployed agents. Those metrics would move this from a clever idea to infrastructure.

In the end I remain curious but cautious. I am interested to see whether AI verification becomes as fundamental as identity oracles and whether projects like this can supply clear evidence that they reduce risk in real deployments. Until I see sustained metrics and developer-led integrations, I will treat $MIRA as an experimental infrastructure token that could matter if execution proves sound. #Mira