🖊️📌 A deeper explanation of my previous post from today.

🤔Take-home point: The future of AI should not only be about making machines smarter, but about making their outputs provably trustworthy.

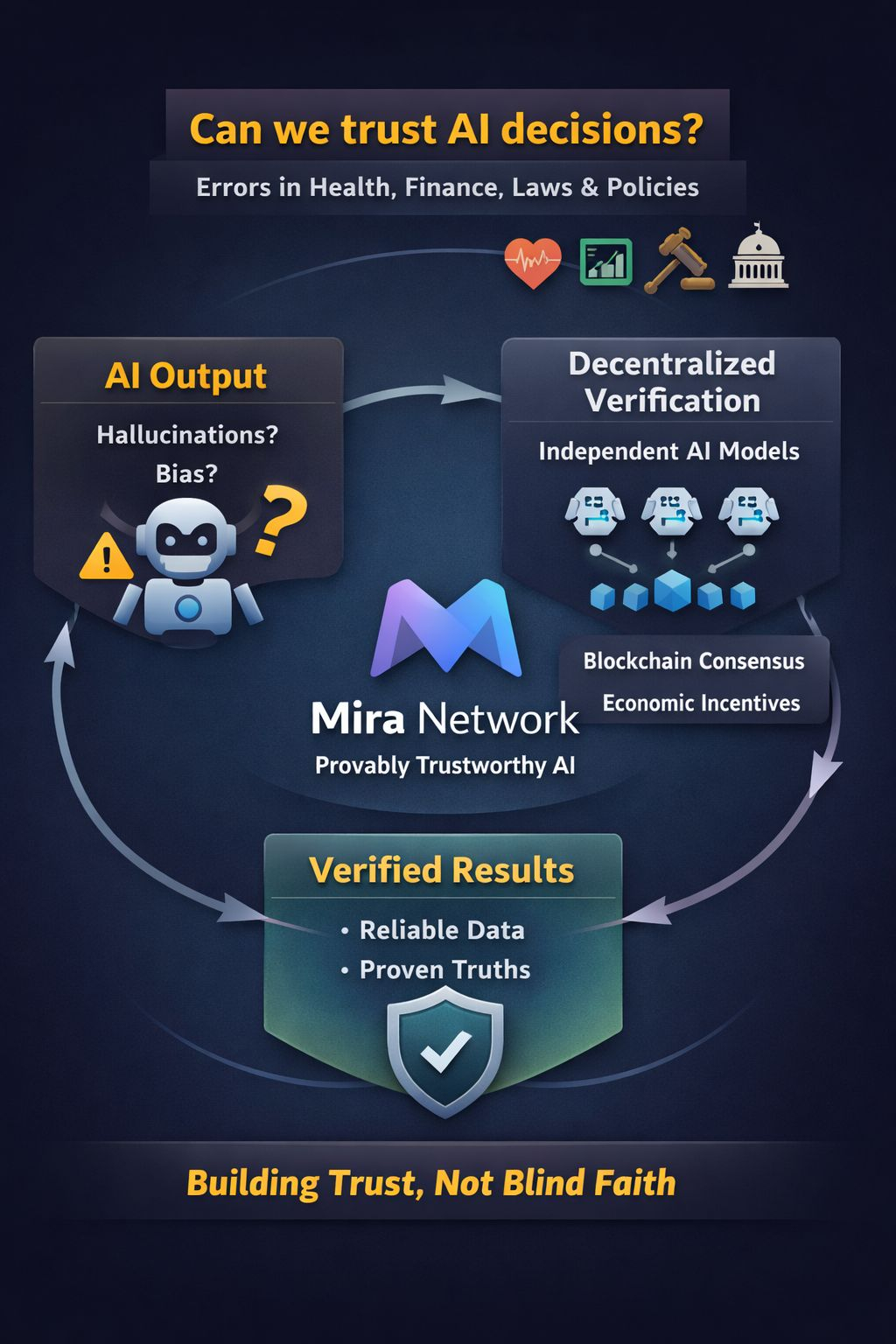

Artificial intelligence is quickly moving into areas that directly affect human lives. Today, AI can help diagnose diseases, analyze financial markets, and even inform government policy decisions. But there is a serious question we cannot ignore: what happens when AI is wrong?

Modern AI systems are powerful, but they still produce hallucinations, biased outputs, and incorrect conclusions. In entertainment or casual use, this may not be a big issue. But imagine an AI system making a mistake in a medical diagnosis, a legal recommendation, or a national economic policy. The consequences could be severe.

This raises an important debate. Should society fully trust AI systems that cannot prove the reliability of their answers? Many current systems operate as black boxes: users receive results with no clear way to verify them.

This is where the idea behind @Mira - Trust Layer of AI becomes interesting. Instead of asking users to blindly trust AI outputs, Mira introduces a decentralized verification protocol. Complex AI outputs are broken down into verifiable claims and checked across multiple independent AI models. Through blockchain consensus and economic incentives, the network transforms AI results into cryptographically verified information.
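To make the idea of "breaking outputs into claims and checking them across independent models" more concrete, here is a toy Python sketch of claim-level verification by supermajority vote. It is NOT Mira's actual protocol or API: the sentence-based claim splitting, the `verify_output` function, the 2/3 threshold, and the SHA-256 fingerprint are all hypothetical choices for illustration, and the blockchain consensus and economic incentives are left out entirely.

```python
import hashlib
import json
from dataclasses import dataclass
from fractions import Fraction
from typing import Callable, List

# A verifier is any function that judges a single claim true or false.
# In a real network these would be independent AI models run by different
# operators; here they are plain Python callables (hypothetical stand-ins).
Verifier = Callable[[str], bool]


@dataclass
class ClaimResult:
    claim: str
    votes: List[bool]
    verified: bool
    digest: str  # SHA-256 fingerprint of the claim and its votes


def split_into_claims(output: str) -> List[str]:
    """Naively split an AI answer into claims, one per sentence (assumption)."""
    return [s.strip() for s in output.split(".") if s.strip()]


def verify_output(output: str, verifiers: List[Verifier],
                  threshold: Fraction = Fraction(2, 3)) -> List[ClaimResult]:
    """Check every claim with every verifier and apply a supermajority rule."""
    if not verifiers:
        raise ValueError("at least one verifier is required")
    results = []
    for claim in split_into_claims(output):
        votes = [v(claim) for v in verifiers]
        # Exact fraction comparison avoids floating-point edge cases at the threshold.
        verified = Fraction(sum(votes), len(votes)) >= threshold
        # Hash a canonical record so anyone can later check it was not altered.
        record = json.dumps({"claim": claim, "votes": votes}, sort_keys=True)
        digest = hashlib.sha256(record.encode("utf-8")).hexdigest()
        results.append(ClaimResult(claim, votes, verified, digest))
    return results


if __name__ == "__main__":
    known_facts = {"Paris is the capital of France"}
    honest = lambda c: c in known_facts   # agrees only with known facts
    contrarian = lambda c: False          # rejects everything
    answer = "Paris is the capital of France. The Moon is made of cheese."
    for r in verify_output(answer, [honest, honest, contrarian]):
        print(r.verified, r.claim, r.digest[:12])
```

In this toy run, the first claim gets two of three votes and passes the 2/3 bar, while the second gets none and fails. The point of the design is that no single model decides: one faulty or dishonest verifier cannot flip a result on its own.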

The debate is not just about technology, but about trust in tomorrow's digital society. Should the truth produced by AI be controlled by a few centralized companies, or verified through decentralized consensus?

If AI is going to power healthcare, finance, science, and governance, reliability will become just as important as intelligence itself. That is why protocols like @Mira - Trust Layer of AI could become essential infrastructure in the AI era.

Do you agree? Let me know in the comments 🖊️