Alright, let’s start with something simple. It’s late at night somewhere on the planet. People are asleep. Lights off. Streets quiet. But the economy? Yeah, that thing never sleeps.

Warehouses are still moving orders. Supply chains are still pushing goods across oceans and highways. And inside some massive logistics buildings, hundreds of small robots are sliding across the floor like they’ve got somewhere important to be. They pick up shelves. Move them. Drop them somewhere else. Repeat. All night.

Hospitals are doing similar stuff. Little robotic carts rolling down hallways carrying medicine and equipment. Nobody really talks about those much, but they’re there. Agriculture too. Machines scanning crops, checking soil, collecting data farmers used to walk miles to gather.

Robots are everywhere now.

Not sci-fi anymore. Just… infrastructure.

And honestly, that shift brings up a question people don’t talk about enough. If all these machines are running around doing real work, who’s coordinating them? Who’s verifying what they’re doing? How do different machines — built by totally different companies — actually cooperate without turning everything into chaos?

That’s basically the problem Fabric Protocol is trying to solve.

Fabric Protocol is a global open network backed by the non-profit Fabric Foundation. The idea is pretty simple on the surface but kind of huge once you sit with it: build infrastructure where general-purpose robots and autonomous agents operate inside one shared system, with data, computation, and governance all connected through a public ledger.

In plain English? Robots can collaborate inside a system that people can actually verify and trust.

And yeah. That matters more than people admit.

Because right now robots are getting smarter. Way smarter. But the infrastructure around them… honestly, it’s kind of messy.

To understand why Fabric Protocol even exists, you have to rewind a bit.

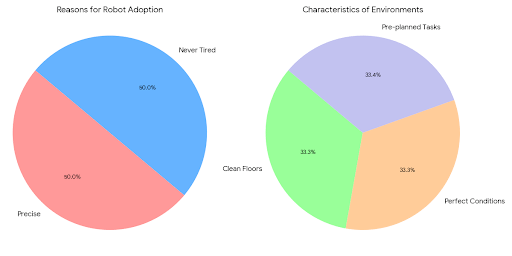

Robotics didn’t suddenly explode last year. The first industrial robots showed up in factories back in the 1960s. Mostly big robotic arms welding cars together or moving heavy parts around assembly lines. They were impressive machines, but let’s be real — they were basically programmable hammers. Same task. Same movement. Over and over.

No thinking. No adapting.

Factories loved them because they were precise and didn’t get tired. But those robots lived inside super controlled environments. Clean floors. Perfect conditions. Pre-planned tasks.

Outside those factories? They were useless.

Then tech started stacking up. Faster processors. Better sensors. Cameras paired with vision models that can actually recognize what they’re looking at. Machine learning models that can spot patterns and make decisions. That combination changed everything.

Suddenly robots could see.

They could map spaces. Avoid obstacles. Analyze environments. Learn from data.

And the internet made things even crazier. Because now machines could share information. A robot in one location could learn something, and that knowledge could reach machines somewhere else almost instantly.

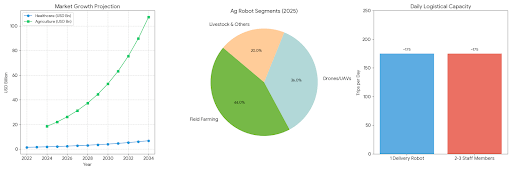

Now we’ve got autonomous drones inspecting infrastructure. Robots running warehouse logistics. Self-driving systems navigating roads. Agricultural machines monitoring crops while farmers watch dashboards miles away.

Pretty wild shift.

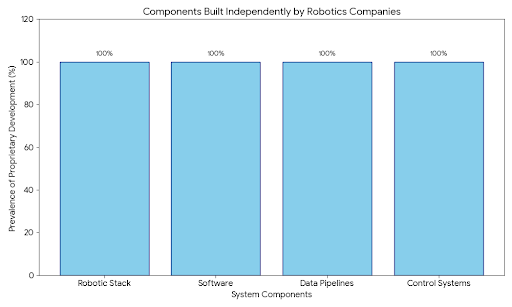

But here’s the messy part nobody likes admitting. All these systems are kind of… isolated.

Most companies build their own robotic stack. Their own software. Their own data pipelines. Their own control systems. Which means robots from different companies often can’t even talk to each other.

Not easily anyway.

Data gets trapped inside silos. Verification gets messy. Trust between organizations gets complicated.

And when robots start doing important stuff — inspecting bridges, delivering medical supplies, managing logistics — trust suddenly matters a lot.

Fabric Protocol steps right into that problem.

The core idea is a shared coordination layer. Fabric uses a public ledger to record interactions between machines, data flows, and computational outputs. That ledger works like a transparent record anyone on the network can verify.

Instead of trusting a single company’s system, participants trust the network itself.
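To make the "transparent record" idea a bit more concrete, here’s a minimal sketch of an append-only, hash-chained log. To be clear, this is not Fabric’s actual ledger format. `ToyLedger`, `entry_hash`, and the field names (`agent_id`, `event`, `prev_hash`) are all made up for illustration, and a real public ledger would add consensus, signatures, and replication on top. The only thing it shows is why anyone holding a copy can detect a quiet edit: each entry commits to the hash of the one before it.

```python
import hashlib
import json


def entry_hash(body: dict) -> str:
    # Canonical JSON (sorted keys, fixed separators) so equal bodies hash equally.
    encoded = json.dumps(body, sort_keys=True, separators=(",", ":")).encode("utf-8")
    return hashlib.sha256(encoded).hexdigest()


class ToyLedger:
    """Hypothetical append-only log; each entry commits to the previous one."""

    GENESIS = "0" * 64  # placeholder "previous hash" for the first entry

    def __init__(self):
        self.entries = []

    def append(self, agent_id: str, event: dict) -> dict:
        prev = self.entries[-1]["hash"] if self.entries else self.GENESIS
        body = {
            "index": len(self.entries),
            "agent_id": agent_id,
            "event": event,
            "prev_hash": prev,
        }
        entry = {**body, "hash": entry_hash(body)}
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        # Recompute every hash and check that each link points at its predecessor.
        prev = self.GENESIS
        for i, entry in enumerate(self.entries):
            body = {k: v for k, v in entry.items() if k != "hash"}
            if entry["index"] != i or entry["prev_hash"] != prev:
                return False
            if entry_hash(body) != entry["hash"]:
                return False
            prev = entry["hash"]
        return True


ledger = ToyLedger()
ledger.append("drone-7", {"type": "inspection", "target": "bridge-12"})
ledger.append("cart-3", {"type": "delivery", "ward": "4A"})
print(ledger.verify())
```

Change any field in an earlier entry and `verify()` starts returning `False`, because the stored hash no longer matches the recomputed one. That’s the whole trick, just without the hard distributed-systems parts.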

Now here’s the part I think people underestimate: verifiable computing.

This thing is huge.

In normal computing, when software gives you an answer you mostly just trust it. The system ran the calculation. It produced a result. End of story.

But autonomous machines operate in the real world. Decisions matter. Mistakes matter. So Fabric leans on verifiable computing: systems that generate mathematical proofs confirming a computation actually ran correctly.

Let’s say a robot analyzes environmental data. Or inspects infrastructure. Or calculates a delivery route. With verifiable computing, that robot can produce proof showing the algorithm executed correctly.

Not just “trust me bro.”

Actual proof.

That matters in situations where safety or accountability comes into play. Think infrastructure inspection drones. These machines scan bridges, pipelines, power grids — stuff that can’t fail quietly.

The drones capture images. Run analysis models. Flag potential structural problems.

Fabric Protocol allows the system to prove that the analysis ran correctly and that nobody messed with the data afterward. Engineers reviewing the results can actually trust what they’re looking at.
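Here’s a rough way to picture that check. Big caveat: real verifiable computing uses cryptographic proofs that a verifier can check without redoing the work, and the post doesn’t say which proof system Fabric uses. The sketch below swaps in something much simpler, a re-execution check with hash commitments. The robot publishes digests of its input and output, and a reviewer re-runs the same deterministic analysis to confirm they line up. `flag_cracks`, `make_receipt`, and `check_receipt` are hypothetical names, and the "analysis" is a toy threshold filter.

```python
import hashlib
import json


def digest(obj) -> str:
    # SHA-256 over canonical JSON; assumes obj is JSON-serializable.
    encoded = json.dumps(obj, sort_keys=True, separators=(",", ":")).encode("utf-8")
    return hashlib.sha256(encoded).hexdigest()


def flag_cracks(readings, threshold):
    # Hypothetical deterministic analysis: indices of readings above a threshold.
    return [i for i, value in enumerate(readings) if value > threshold]


def make_receipt(readings, threshold):
    # What the robot would publish alongside its result.
    result = flag_cracks(readings, threshold)
    receipt = {
        "input_digest": digest(readings),
        "params": {"threshold": threshold},
        "output_digest": digest(result),
    }
    return receipt, result


def check_receipt(receipt, readings, claimed_result) -> bool:
    # 1) The data under review is the data the robot committed to.
    if digest(readings) != receipt["input_digest"]:
        return False
    # 2) The result under review is the result the robot committed to.
    if digest(claimed_result) != receipt["output_digest"]:
        return False
    # 3) Re-running the analysis reproduces that result.
    return flag_cracks(readings, receipt["params"]["threshold"]) == claimed_result


readings = [0.1, 0.9, 0.4, 1.3]
receipt, result = make_receipt(readings, threshold=0.8)
print(check_receipt(receipt, readings, result))
```

The receipt only helps if it lives somewhere the robot’s operator can’t quietly rewrite it, which is the job the post gives to the public ledger. And unlike a real proof, this version makes the reviewer redo the computation, so it only works for cheap, deterministic analyses.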

And trust in automation? Yeah, that’s a big deal.

Another concept Fabric pushes is something called agent-native infrastructure.

Most current software systems treat robots like external devices. Plug them into platforms designed mainly for humans. That works… sort of. But it’s clunky.

Fabric flips that thinking.

The protocol treats autonomous agents — robots, AI systems, machines — as first-class participants inside the network. Robots can publish data directly. Request computation. Coordinate tasks. Interact with other machines through the protocol itself.

Machines talking to machines.

And honestly that’s where the world is heading whether people like it or not.

The architecture behind Fabric is modular. Different pieces handle different jobs. Robots generate data and publish it. Nodes in the network process computational workloads. The public ledger records interactions. Governance mechanisms let participants influence how the network evolves.
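If it helps to see those four pieces side by side, here’s a toy model. None of this is Fabric’s real API; `Network`, `publish`, `compute`, `vote`, and the `max_payload_items` parameter are invented purely to show how the roles could fit together. Robots publish data, a node runs a computation over a published record, a plain list stands in for the ledger, and a majority vote changes a network parameter.

```python
from dataclasses import dataclass, field


@dataclass
class Network:
    # A plain list stands in for the public ledger in this sketch.
    log: list = field(default_factory=list)
    # Hypothetical governed parameter: max items a robot may publish at once.
    params: dict = field(default_factory=lambda: {"max_payload_items": 4})

    def publish(self, agent_id, payload):
        # Robots publish data directly; the network enforces the governed limit.
        if len(payload) > self.params["max_payload_items"]:
            raise ValueError("payload exceeds governed limit")
        self.log.append(("publish", agent_id, tuple(payload)))
        return len(self.log) - 1  # record id = position in the log

    def compute(self, node_id, record_id, fn):
        # A node processes a previously published record and logs the result.
        record = self.log[record_id]
        if record[0] != "publish":
            raise ValueError("record is not published data")
        result = fn(record[2])
        self.log.append(("compute", node_id, record_id, result))
        return result

    def vote(self, key, value, votes):
        # Toy governance: a simple majority of True votes changes a parameter.
        approved = sum(votes) > len(votes) / 2
        if approved:
            self.params[key] = value
        self.log.append(("governance", key, value, approved))
        return approved


net = Network()
rid = net.publish("robot-1", [2.0, 4.0, 6.0])
mean = net.compute("node-a", rid, lambda p: sum(p) / len(p))
net.vote("max_payload_items", 2, [True, True, False])
print(mean, net.params, len(net.log))
```

The design point is that every action, including the governance change, lands in the same log. After the vote passes, a three-item publish gets rejected, so the rules robots operate under are themselves part of the shared record.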

Developers can build robotic applications on top of the system instead of reinventing infrastructure every time.

That matters because robotics is spreading everywhere right now.

Warehouses rely heavily on robot fleets coordinating logistics. Amazon alone runs massive facilities full of them. Agriculture uses automated monitoring and harvesting systems. Healthcare uses robotic logistics platforms to move supplies around hospitals.

Even cities are experimenting with delivery robots.

But every system today runs inside its own bubble. Different vendors. Different software. Different rules.

Fabric Protocol pushes toward a world where these machines can actually interact across ecosystems.

Imagine a city running inspection robots monitoring roads, bridges, and utilities. Those robots publish verified reports to a shared network engineers can access.

Or supply chain robots from different companies coordinating tasks inside distribution hubs.

Or autonomous agricultural machines sharing verified crop data across regional networks.

Those kinds of things become possible when machines operate on shared infrastructure.

The benefits could be huge. Interoperability gets easier. Transparency improves. Developers build faster. Innovation speeds up because teams don’t start from scratch every time.

But let’s not pretend everything is perfect here.

Building a global network for autonomous machines is incredibly complicated. Security has to be rock solid. A vulnerability in infrastructure like this could create real problems.

Regulators will definitely get involved too. Governments won’t ignore fleets of autonomous machines operating in public environments.

Then there’s data ownership. Robots generate tons of data — sensor feeds, images, operational logs, location info. Somebody has to decide who controls that data and how networks share it.

And honestly some companies won’t like open infrastructure at all. If they built expensive proprietary systems, they might not want to plug into a shared protocol.

So adoption could be slow.

Still… the trend is obvious.

Robotics keeps improving. AI keeps getting smarter. Hardware costs keep dropping. Autonomous systems are spreading across industries faster every year.

Which means coordination infrastructure becomes more important every year too.

Fabric Protocol offers one vision of how that infrastructure might look. A decentralized network where robots, data, and computation interact through verifiable systems designed specifically for autonomous agents.

Will Fabric become the standard? No one knows yet. Tech ecosystems rarely follow neat plans.

But the idea behind it — building coordination layers for machine networks — feels inevitable.

Technology usually evolves in stages.

First we build tools.

Then we connect those tools.

Then we build ecosystems where everything works together.

The internet followed that exact path. Robotics might be entering that third phase right now.

The machines exist. They’re capable. They’re spreading into real industries.

Now we need systems that let them cooperate safely and transparently.

And honestly? That’s a much bigger challenge than building the robots themselves.

If coordination layers like Fabric actually work, they could reshape how logistics, manufacturing, agriculture, and healthcare operate. Humans and machines will share environments more often. Exchange data constantly. Collaborate on complex tasks.

That relationship needs trust.

Fabric Protocol tries to build the infrastructure for that trust.

Because robots aren’t just tools anymore.

They’re becoming participants in the global digital ecosystem. And the systems we build today will decide how that future actually works.
