People often talk about how powerful modern AI systems have become. But if you use them regularly, you also notice something else: they can sound confident while giving incorrect information. Sometimes the answers shift slightly each time you ask the same question. Other times the reasoning contains hidden assumptions that are hard to detect.

This reliability gap has become an important open problem in the AI space.

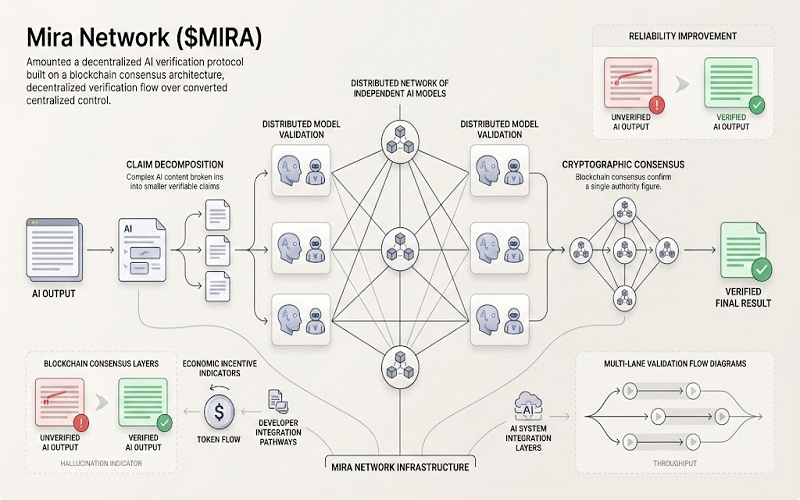

Mira Network is one attempt to approach it from a different direction. Instead of building another AI model, the project focuses on verification. The idea behind #MiraNetwork is fairly straightforward: check AI outputs before treating them as reliable information.

The process begins by separating an AI response into smaller factual claims. Rather than judging the full answer at once, each claim can be examined individually. Multiple independent AI models then review these claims and provide their own assessments.
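To make the claim-by-claim idea concrete, here is a minimal Python sketch. It makes three assumptions. A naive sentence splitter stands in for real claim extraction. Each "model" is a plain function that returns `"true"`, `"false"`, or `"uncertain"`. A verdict is accepted only when at least two thirds of verifiers agree. The names `split_into_claims` and `verify_claims` and the 2/3 threshold are hypothetical illustrations, not Mira's actual API or parameters.

```python
import re
from collections import Counter
from fractions import Fraction
from typing import Callable

# Hypothetical verifier type: takes one claim, returns "true", "false" or "uncertain".
# In a real network each verifier would be an independent AI model.
Verifier = Callable[[str], str]


def split_into_claims(response: str) -> list[str]:
    """Naive stand-in for claim extraction (an assumption): one claim per sentence."""
    sentences = re.split(r"(?<=[.!?])\s+", response.strip())
    return [s for s in sentences if s]


def verify_claims(
    response: str,
    verifiers: list[Verifier],
    threshold: Fraction = Fraction(2, 3),  # assumed supermajority, not a documented value
) -> dict[str, str]:
    """Label each claim with the majority vote if it reaches `threshold`, else 'no consensus'."""
    if not verifiers:
        raise ValueError("need at least one verifier")
    results = {}
    for claim in split_into_claims(response):
        votes = Counter(verify(claim) for verify in verifiers)
        label, count = votes.most_common(1)[0]
        # Exact fraction comparison avoids floating-point edge cases at the threshold.
        agreed = Fraction(count, len(verifiers)) >= threshold
        results[claim] = label if agreed else "no consensus"
    return results


# Toy verifiers standing in for independent models.
def model_a(claim: str) -> str:
    return "false" if "Sydney" in claim else "true"


def model_b(claim: str) -> str:
    return "false" if "Sydney" in claim else "true"


def model_c(claim: str) -> str:
    return "true"


answer = "Water boils at 100 C at sea level. The capital of Australia is Sydney."
print(verify_claims(answer, [model_a, model_b, model_c]))
# {'Water boils at 100 C at sea level.': 'true', 'The capital of Australia is Sydney.': 'false'}
```

Working per claim means one wrong sentence does not sink the whole answer. A split vote shows up as `"no consensus"` instead of being forced into true or false.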

That is where the blockchain layer enters the picture.

The network records these validation results using cryptographic proofs and distributed consensus. Instead of relying on one system or organization, users can rely on a verification process shared across participants. Conversations around @Mira - Trust Layer of AI often describe this as building a “trust layer” for AI reasoning.
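The recording step can be illustrated with a hash chain, which is a much simpler structure than a blockchain. Each verification record is hashed together with the hash of the record before it, so editing any past result breaks the chain. This Python sketch covers only that tamper-evidence property. It does not model distributed consensus, signatures, or Mira's actual proof format. `VerificationLog` and `record_hash` are hypothetical names.

```python
import hashlib
import json
from dataclasses import dataclass, field

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def record_hash(record: dict, prev_hash: str) -> str:
    """SHA-256 over the previous hash plus a canonical JSON encoding of the record."""
    payload = json.dumps(record, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()


@dataclass
class VerificationLog:
    """Hypothetical append-only log: each entry commits to the one before it."""
    entries: list[tuple[dict, str]] = field(default_factory=list)

    def append(self, record: dict) -> str:
        prev = self.entries[-1][1] if self.entries else GENESIS
        h = record_hash(record, prev)
        self.entries.append((record, h))
        return h

    def verify(self) -> bool:
        """Recompute every hash; an edited record no longer matches its stored hash."""
        prev = GENESIS
        for record, stored in self.entries:
            if record_hash(record, prev) != stored:
                return False
            prev = stored
        return True


log = VerificationLog()
log.append({"claim": "Water boils at 100 C at sea level.", "votes": {"true": 3}, "verdict": "true"})
log.append({"claim": "The capital of Australia is Sydney.", "votes": {"false": 2, "true": 1}, "verdict": "false"})
print(log.verify())  # True

# Quietly changing a past verdict is detectable.
log.entries[1][0]["verdict"] = "true"
print(log.verify())  # False
```

In a decentralized setting, many participants would hold copies of such a record. That makes a quiet edit by any single party stand out.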

The token $MIRA helps coordinate activity in the network. Participants who help validate claims can receive incentives, while the system maintains transparent records of how conclusions were reached.
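One way incentives could be tied to validation is to split a reward pool among validators whose vote matched the final verdict, in proportion to an assumed stake. The sketch below does exactly that. The stake weighting, the integer token units, and the function name `split_rewards` are all hypothetical. This is not how $MIRA rewards are actually calculated.

```python
def split_rewards(
    votes: dict[str, tuple[str, int]], verdict: str, pool: int
) -> dict[str, int]:
    """Split `pool` integer token units among validators that voted for `verdict`,
    in proportion to their (hypothetical) stake. Rounding dust stays undistributed."""
    agreeing = {v: stake for v, (vote, stake) in votes.items() if vote == verdict}
    total_stake = sum(agreeing.values())
    if total_stake == 0:
        return {}
    return {v: pool * stake // total_stake for v, stake in sorted(agreeing.items())}


votes = {
    "validator_a": ("false", 300),
    "validator_b": ("false", 100),
    "validator_c": ("true", 500),
}
print(split_rewards(votes, verdict="false", pool=1000))
# {'validator_a': 750, 'validator_b': 250}
```

Rewarding only agreement with the final verdict gives validators a reason to check claims carefully rather than vote at random. This sketch does not model penalties or collusion.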

Compared with centralized AI validation, this structure removes the need for a single authority to decide what counts as correct. Verification becomes a distributed process, which may reduce the risk of hidden control or unannounced changes to past results.

Of course, the approach is not without challenges. Running multiple models to verify information can be computationally expensive. Coordinating decentralized validators is also complex, especially while the ecosystem is still young.

Still, #Mira reflects a broader shift in thinking: generating answers is one step, but proving they can be trusted may become just as important.