What first caught my attention about Mira was not the token and not another AI-crypto sales narrative. That story has circulated through this market for years. New interfaces appear, new dashboards launch, and each project promises that this time the machine will be smarter, faster, and more reliable. But most of those systems still rely on the same weak foundation. They generate answers quickly but rarely explain how those answers were validated. The real limitation in modern AI systems is no longer intelligence or speed. It is verification. An output can look confident and still hide weak reasoning. A confidence score can make a result appear reliable while providing little proof that the reasoning behind it actually survived scrutiny.

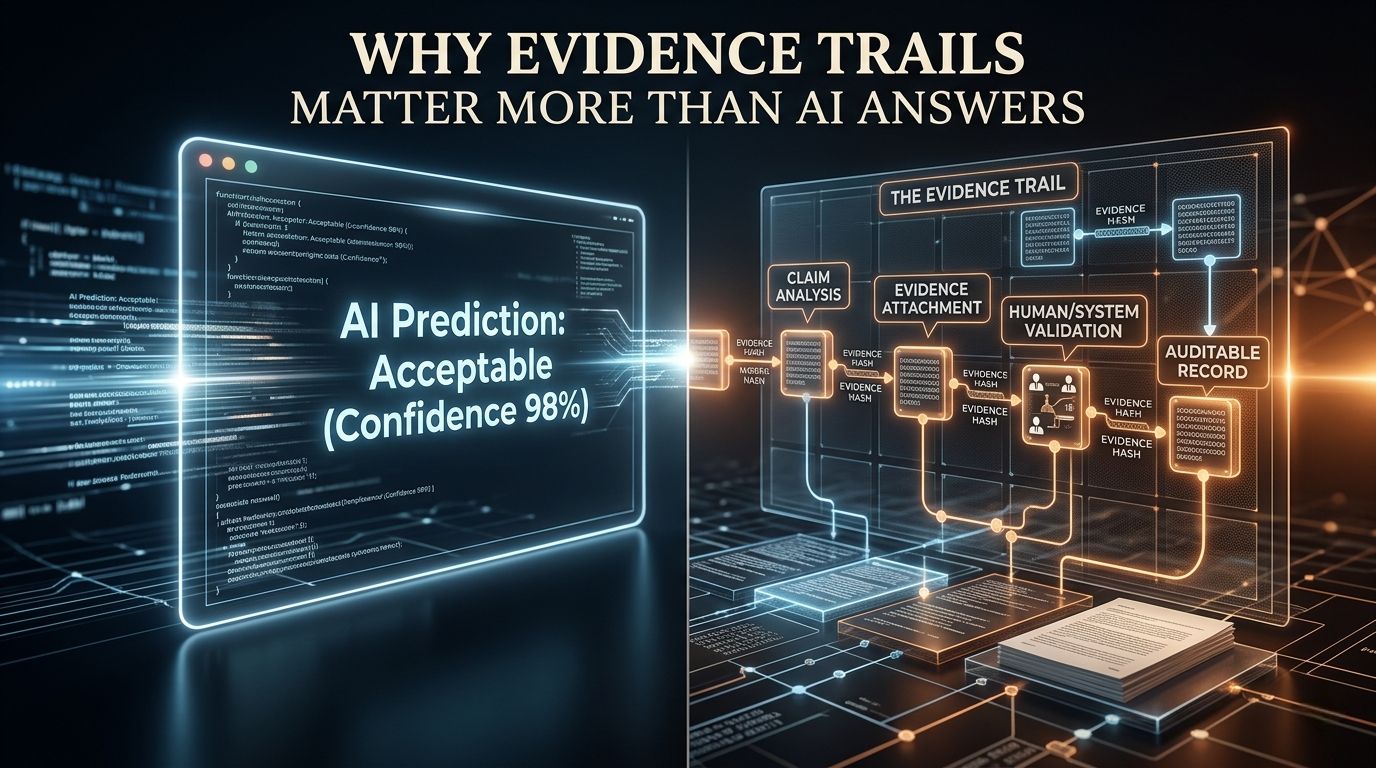

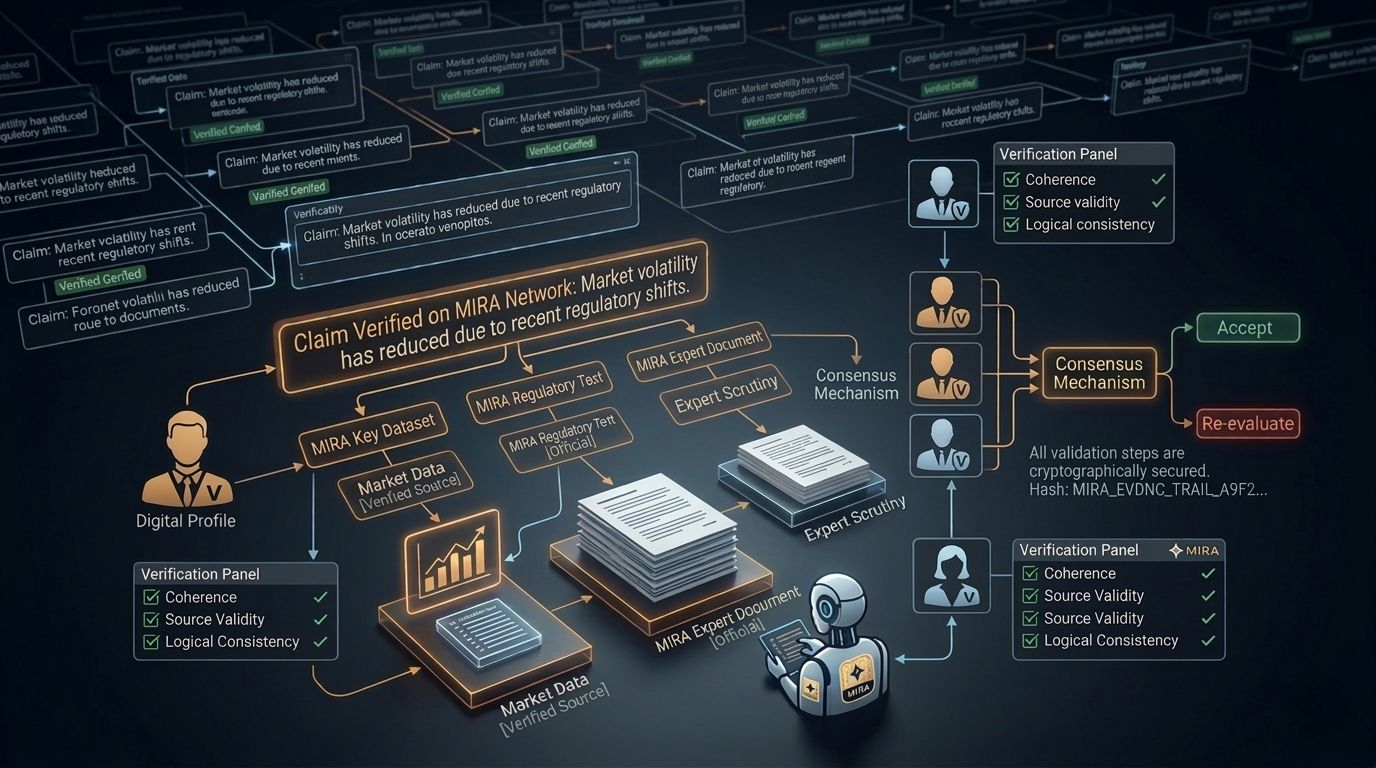

Most AI workflows follow a simple pattern. A model produces an output, the system attaches a score or ranking, and the result is presented as something that can be accepted immediately. The process ends where verification should actually begin. Mira approaches the problem from the opposite direction. Instead of treating the response as the final product, the response becomes the starting point of examination. The output can be separated into claims, evidence can be attached to those claims, and validators can examine whether the reasoning holds under review. Only after that process does agreement begin to matter. This structure shifts the focus away from presentation and toward defensibility. A response that looks convincing is not automatically treated as trustworthy. The question becomes whether the reasoning behind it can still hold up after deeper inspection.
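The flow described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not Mira's actual implementation: the `Claim` type, the sentence-based `split_into_claims` helper, the validator functions, and the two-thirds `quorum` are all hypothetical names and values chosen to show the ordering of steps (claims first, evidence second, review third, agreement last).

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Claim:
    """One checkable statement extracted from a model response (hypothetical type)."""
    text: str
    evidence: list[str] = field(default_factory=list)
    verdicts: list[bool] = field(default_factory=list)


# A validator is assumed to be any function that inspects a claim and votes.
Validator = Callable[[Claim], bool]


def split_into_claims(response: str) -> list[Claim]:
    # Naive stand-in: treat every sentence as a separate claim.
    return [Claim(s.strip()) for s in response.split(".") if s.strip()]


def review(claim: Claim, validators: list[Validator]) -> None:
    # Each validator examines the claim together with its attached evidence.
    claim.verdicts = [validate(claim) for validate in validators]


def is_accepted(claim: Claim, quorum: float = 2 / 3) -> bool:
    # Agreement only matters once review has produced verdicts.
    if not claim.verdicts:
        return False
    return sum(claim.verdicts) / len(claim.verdicts) >= quorum


def requires_evidence(claim: Claim) -> bool:
    # Example validator: refuse any claim that arrives without evidence.
    return bool(claim.evidence)
```

A claim that has never been reviewed is rejected by default in this sketch, which mirrors the point of the paragraph: a confident-looking response earns nothing until it has been examined.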

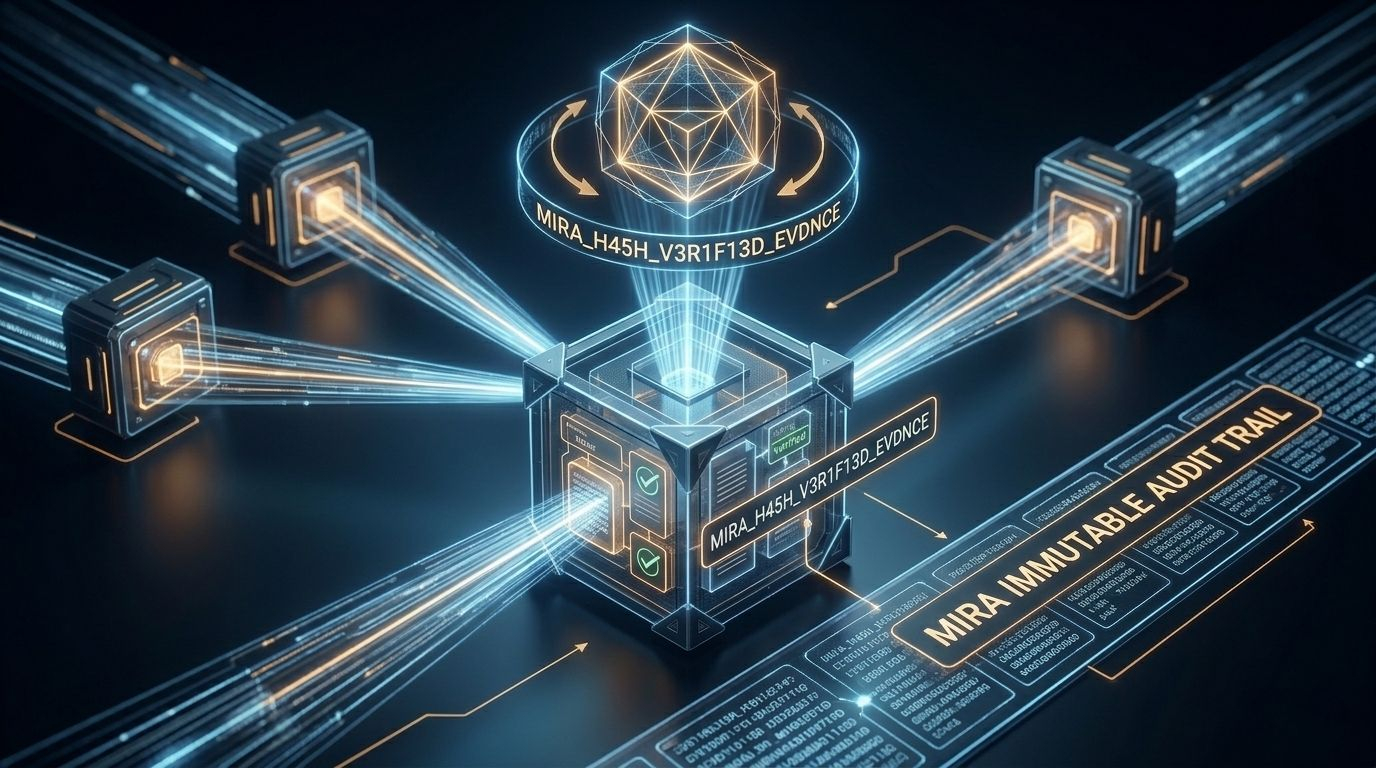

This is where the evidence hash concept becomes important. Rather than leaving behind only text, the system records proof that verification occurred. The output carries a reference to the process that examined it. In practical terms, this works like a receipt attached to the decision. Instead of asking users to trust that verification happened, the system creates a traceable record showing that it did. That small change alters the nature of an AI answer. It stops being only a statement and becomes something closer to an auditable result. The value lies less in the answer itself than in the evidence trail that survives after the answer is produced.
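To make the receipt idea concrete, here is a minimal sketch of how such a hash could be computed. It assumes the verification record is a plain JSON-serializable dictionary and uses SHA-256 from Python's standard library. The record layout and the function names `evidence_hash`, `attach_receipt`, and `matches_receipt` are hypothetical, and Mira's real format may differ.

```python
import hashlib
import json


def evidence_hash(record: dict) -> str:
    # Serialize with sorted keys and fixed separators so the same record
    # always produces the same bytes, and therefore the same hash.
    canonical = json.dumps(record, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def attach_receipt(output: str, record: dict) -> dict:
    # The answer travels with a reference to the process that examined it.
    return {"output": output, "evidence_hash": evidence_hash(record)}


def matches_receipt(receipt: dict, record: dict) -> bool:
    # Anyone holding the record can recompute the hash and compare it later.
    return receipt["evidence_hash"] == evidence_hash(record)
```

If any part of the record is altered after the fact, such as a verdict being flipped, the recomputed hash no longer matches the receipt. A hash alone shows that a record has not changed, not that the review behind it was thorough.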

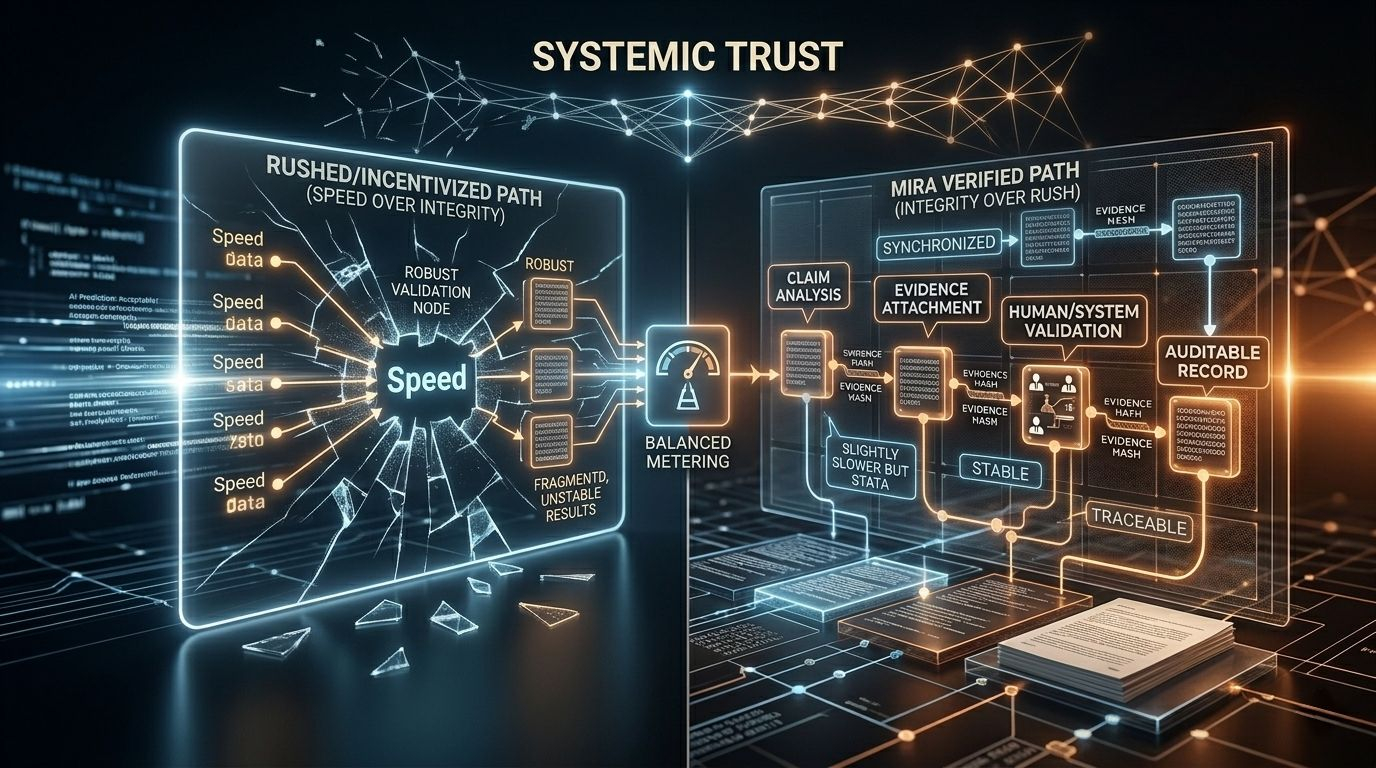

Of course, building a verification structure introduces its own challenges. Real outputs are messy. Evidence can conflict. Validators can disagree. Some conclusions require time before they become clear. This creates tension between speed and certainty. If a network moves too quickly, weak reasoning may pass through unnoticed. If it waits too long, the system becomes inefficient and loses practicality. Incentives also play a role. Participants often optimize for the easiest path through a mechanism rather than the most accurate one. If verification becomes superficial, proof becomes cosmetic and the system slowly loses integrity. For a verification network to remain meaningful, the path that produces real evidence must be more rewarding than the shortcuts.

What makes Mira interesting is that it focuses on this exact weakness. Instead of trying to make AI outputs look more impressive, it attempts to build infrastructure around verifiable reasoning. Machine decisions increasingly influence automated systems, digital infrastructure, and financial environments. In those situations, the question of trust becomes unavoidable. People will eventually ask why a machine decision should be trusted and where the evidence behind that decision can be found. Systems that cannot answer that question will struggle to maintain credibility. The concept behind Mira is not about producing better-looking answers. It is about ensuring that answers leave behind evidence strong enough to be examined later. That difference may sound subtle, but in complex systems small structural changes often determine whether trust can actually exist.