I often find myself returning to a simple worry about modern AI. It is not just that models sometimes say things that are wrong. It is that they can sound certain while being wrong.

Put plainly, this matters because many decisions rely on confident-sounding outputs. A developer might deploy an automation that looks correct at a glance and later discover a hidden failure. A reader might trust a summary that contains made-up facts. For people and organizations, the cost can be time, money, and reputation.

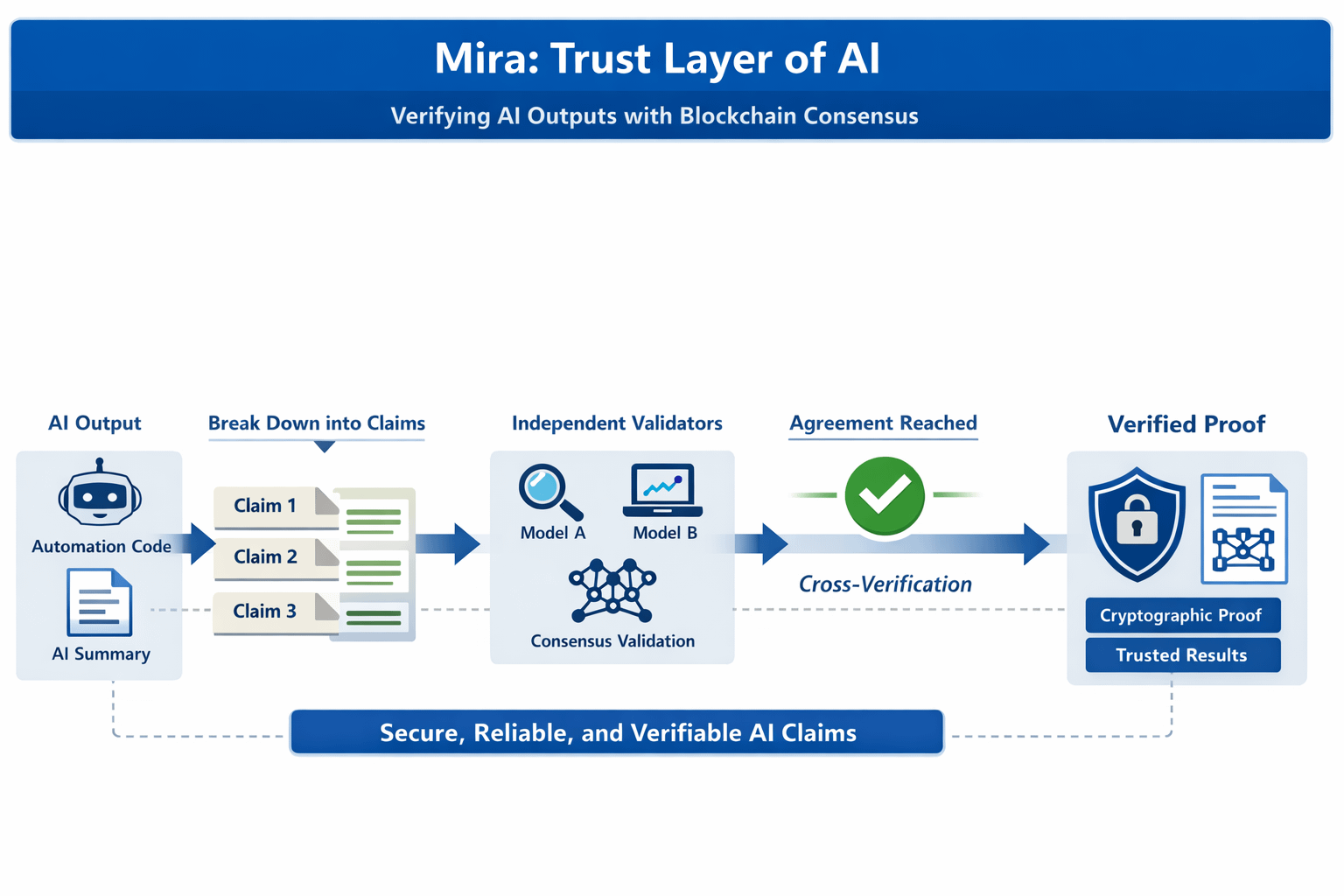

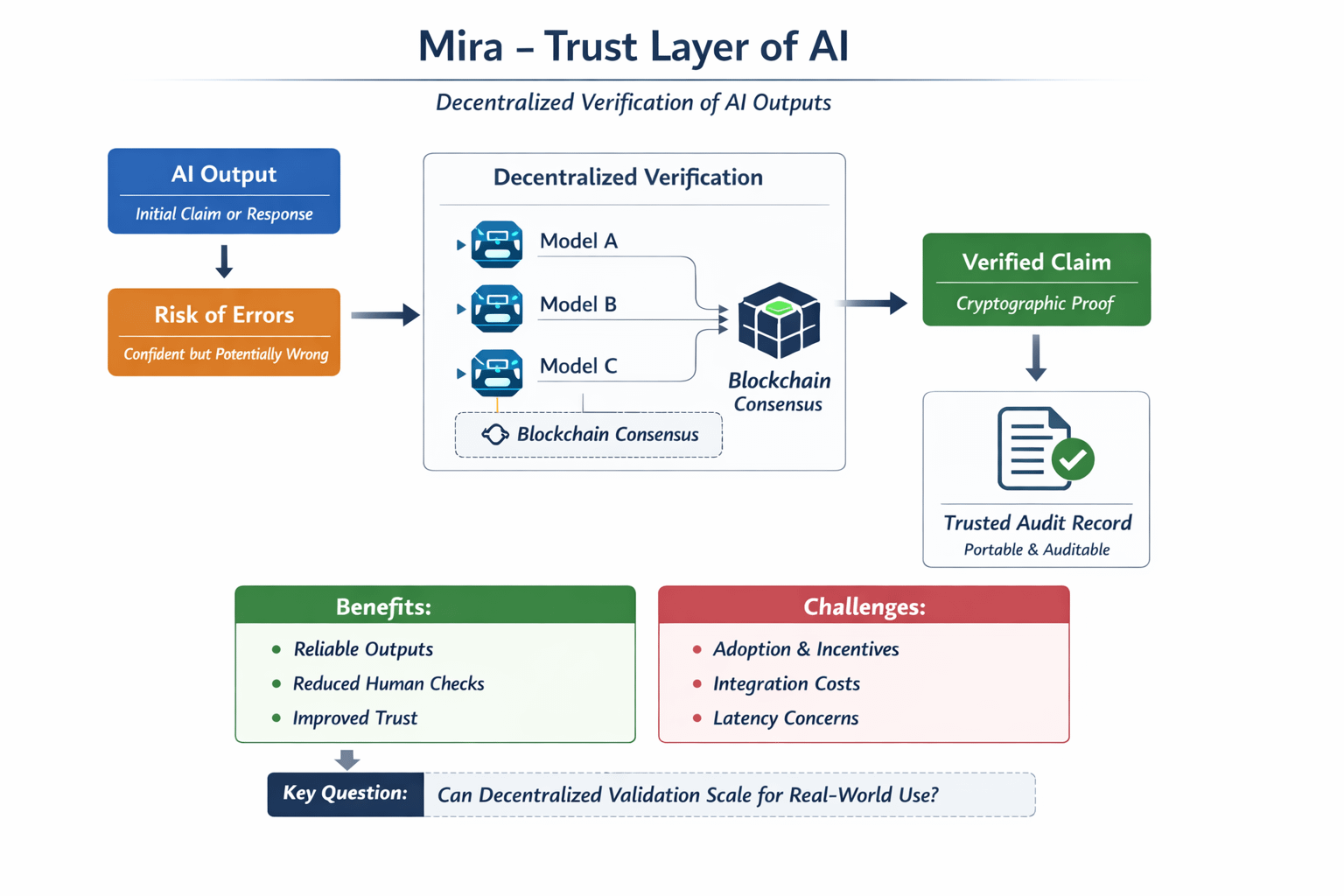

That is why I have been watching @Mira - Trust Layer of AI with interest. The idea, as I understand it, is to take AI outputs and turn them into verifiable claims that are validated by multiple independent models through a blockchain consensus mechanism. In other words, the network breaks complex content down into smaller claims and seeks agreement across independent validators before attaching a cryptographic proof.

A simple analogy helps me picture it. Imagine you had three independent experts review the same technical claim and sign a short memo if they all agree. The memo becomes the artifact you trust later. $MIRA aims to create that artifact in a decentralized way and make it portable and auditable.
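
To make the analogy concrete for myself, here is a minimal sketch in Python. It is not Mira's actual protocol or API. Every name in it (`split_into_claims`, `verify_claim`, the toy validators, the `quorum` parameter) is a hypothetical I made up for illustration. The "proof" is only a SHA-256 digest of the vote record, a stand-in for whatever cryptographic attestation a real network would attach, and the validators are simple functions rather than independent models.

```python
import hashlib
import json
from typing import Callable, Dict, List

# A validator is any function that takes a claim and returns True/False.
# In a real system these would be independent models; here they are toys.
Validator = Callable[[str], bool]


def split_into_claims(text: str) -> List[str]:
    """Naive claim splitter (assumption): treat each sentence as one claim."""
    return [s.strip() for s in text.split(".") if s.strip()]


def verify_claim(claim: str, validators: List[Validator], quorum: int) -> Dict:
    """Collect one vote per validator and accept the claim if at least
    `quorum` validators agree. The digest is a plain content hash of the
    record, used here as a placeholder for a real cryptographic proof."""
    votes = [v(claim) for v in validators]
    record = {"claim": claim, "votes": votes, "accepted": sum(votes) >= quorum}
    payload = json.dumps(record, sort_keys=True).encode("utf-8")
    record["digest"] = hashlib.sha256(payload).hexdigest()
    return record


def verify_output(text: str, validators: List[Validator], quorum: int) -> List[Dict]:
    """Break an output into claims and verify each one independently."""
    return [verify_claim(c, validators, quorum) for c in split_into_claims(text)]


# Three toy "experts" with slightly different behavior (all hypothetical).
KNOWN_FACTS = {"Paris is the capital of France"}


def strict(claim: str) -> bool:
    return claim in KNOWN_FACTS


def lenient(claim: str) -> bool:
    # Deliberately sloppy: accepts anything that mentions a capital.
    return claim in KNOWN_FACTS or "capital" in claim


def cautious(claim: str) -> bool:
    return claim in KNOWN_FACTS


results = verify_output(
    "Paris is the capital of France. Berlin is the capital of Spain.",
    [strict, lenient, cautious],
    quorum=3,  # unanimous agreement, as in the three-expert memo
)

for r in results:
    print(r["accepted"], r["votes"], r["claim"])
```

With a unanimous quorum, one sloppy validator cannot push a false claim through on its own. Lowering `quorum` to 1 would let it, which is a small reminder of how much the agreement rule matters.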

Thinking more analytically, there are reasons to be cautiously optimistic and reasons to be skeptical. On the optimistic side, decentralized verification addresses a real infrastructure gap, and it may reduce the need for human-in-the-loop checks in some use cases. On the skeptical side, adoption depends on developer demand, on clear economic incentives, and on the friction of integrating another verification call into existing stacks. Will data providers and model hosts pay for verification at scale, and will end users accept the extra latency and cost that verification brings? This is not obvious.

From a market perspective, $MIRA exists as a utility token, and the project has secured listings and partnerships that make it visible to traders and builders. That matters because token economics shape how validators and node operators behave. But market visibility does not guarantee long-term product-market fit, and it does not ensure sustained developer adoption.

I find the concept intellectually appealing and practically necessary if AI is to be trusted in high-stakes settings. At the same time, I am mindful that infrastructure often wins or loses on developer ergonomics and on the strength of real-world integrations. How much trust can a collective of models deliver in scenarios like regulated workflows or legal evidence, and what incentives will align for honest verification? These are the questions I would like to see the community debate more openly as we think about the future of reliable AI systems. #Mira @Mira - Trust Layer of AI $MIRA