@Fabric Foundation I was at my kitchen table before sunrise, with a cold mug and the tick of the radiator beside me, rereading Fabric material because one question felt urgent. When a robot does something consequential, I want to know whether it was actually allowed to do it.

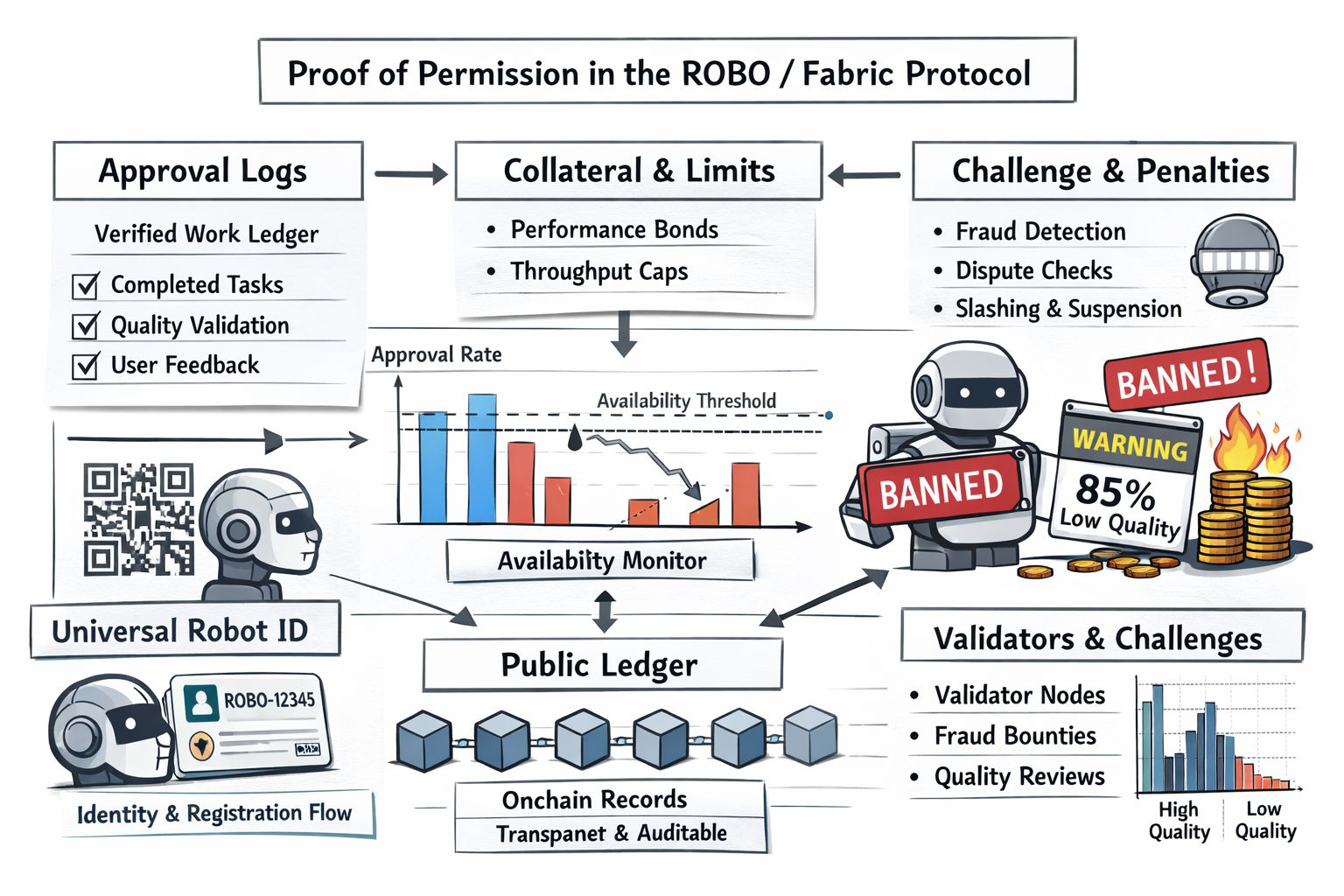

That is why proof of permission matters to me in the ROBO and Fabric conversation. I have not found an official Fabric section that uses that exact phrase, but I do see a set of mechanisms trying to answer the same question more strictly than most robotics projects do. Fabric describes public ledgers and onchain identity, along with verified work, validator checks, and penalty rules. In plain terms, I read that as an effort to leave evidence behind before, during, and after a machine acts, instead of asking me to trust a company dashboard later.

I think this topic is getting attention now because the project has moved from abstract ambition toward public rollout. OpenMind published the first beta release of its OM1 software stack in February and described it as open source, modular, hardware agnostic, and built for autonomous decision making. Around the same time, Fabric Foundation introduced the ROBO token and tied it directly to network fees for payments, identity, and verification. I also noticed developer docs showing a join flow for robots that includes a Universal Robot ID, which lets a machine enter Fabric’s coordination system. That is still early infrastructure, but it is real infrastructure, and that is usually when a concept stops sounding theoretical.

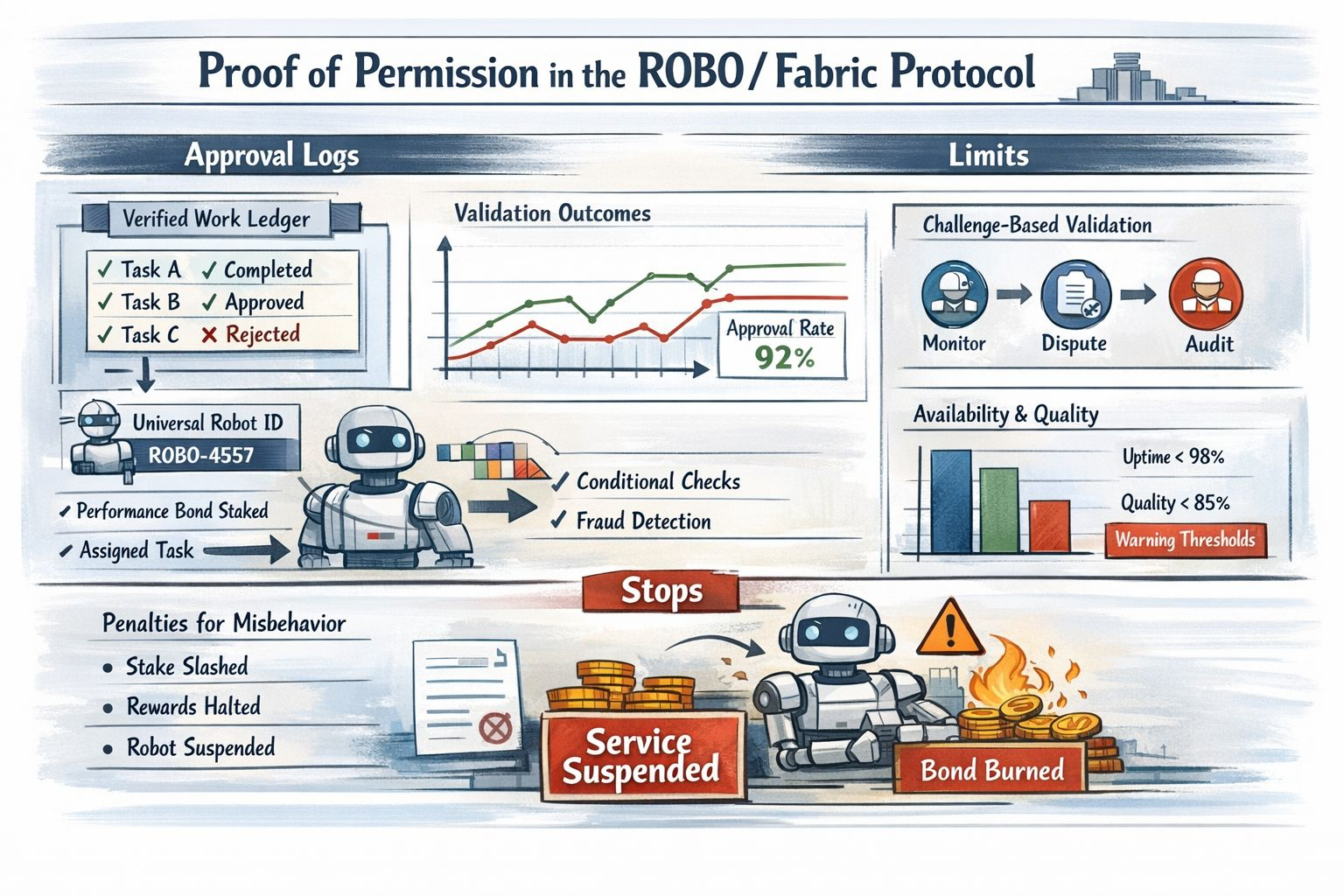

What I find useful is that Fabric does not treat permission as a single yes-or-no switch. It spreads authority across records and constraints. A robot operator posts a refundable performance bond to register hardware and provide services, and that bond scales with declared throughput. When a specific task is assigned, the protocol can earmark part of that reservoir as active collateral. The machine is not just waving a token badge around. It is putting something at risk before work happens, which makes permission feel tied to stake, identity, and the cost of misbehavior rather than to a vague promise of good conduct.
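
To make the bond-and-earmark idea concrete for myself, I wrote a small sketch. It is not Fabric's implementation: the linear scaling rule, the `BOND_PER_UNIT_THROUGHPUT` rate, and every class and method name here are my own assumptions, chosen only to show how a bond that scales with declared throughput can have part of it locked against a specific task.

```python
from dataclasses import dataclass, field

# Hypothetical rate: bond units required per unit of declared throughput.
# The source only says the bond "scales with declared throughput".
BOND_PER_UNIT_THROUGHPUT = 10.0


@dataclass
class OperatorBond:
    declared_throughput: float
    posted: float = 0.0
    earmarked: dict = field(default_factory=dict)  # task_id -> locked amount

    def required(self) -> float:
        # Assumed linear scaling; the real curve may differ.
        return self.declared_throughput * BOND_PER_UNIT_THROUGHPUT

    def post(self, amount: float) -> None:
        self.posted += amount

    def is_registered(self) -> bool:
        return self.posted >= self.required()

    def free(self) -> float:
        # Bond not currently locked against any task.
        return self.posted - sum(self.earmarked.values())

    def earmark(self, task_id: str, amount: float) -> None:
        # Lock part of the bond as active collateral for one task.
        if not self.is_registered():
            raise PermissionError("bond below required level")
        if amount > self.free():
            raise ValueError("not enough free bond to earmark")
        self.earmarked[task_id] = amount

    def release(self, task_id: str) -> float:
        # Return the collateral to the free pool once the task settles.
        return self.earmarked.pop(task_id, 0.0)
```

In this toy version, a robot with too little posted bond cannot take a task at all, which is the property I care about: permission is refused before the work happens, not questioned afterward.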

The approval log part is more interesting to me than it first sounds. Fabric says rewards come from completed and verified work rather than passive holding, and it links quality multipliers to validation outcomes and user feedback. I read that as a ledger of justified action rather than a ledger of mere activity. A robot can move, send messages, and submit tasks all day, but if the network cannot connect those actions to verified completion and acceptable quality, then the record should not count as approval. For me that is the core of permission in a robot economy, because the log is only meaningful when it reflects work that passed a check.
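
Here is how I picture the difference between an activity log and an approval log. This is a minimal sketch under my own assumptions: the `WorkRecord` fields, the `min_quality` parameter, and the choice to use the quality score itself as the reward multiplier are all hypothetical, not taken from Fabric's documentation.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class WorkRecord:
    task_id: str
    verified: bool   # did the work pass a validation check?
    quality: float   # assumed score in [0, 1] from validation and feedback


def counts_as_approval(record: WorkRecord, min_quality: float) -> bool:
    # Activity alone is not enough: the record must be verified
    # and meet the (assumed) quality floor.
    return record.verified and record.quality >= min_quality


def approval_log(records, min_quality: float):
    # Keep only records that reflect work that passed a check.
    return [r for r in records if counts_as_approval(r, min_quality)]


def reward(record: WorkRecord, base: float, min_quality: float) -> float:
    # Assumption: the quality multiplier is simply the quality score.
    if not counts_as_approval(record, min_quality):
        return 0.0
    return base * record.quality
```

Three submitted tasks can easily shrink to one approved entry here, and only that entry earns anything. That gap between what was done and what was justified is the whole point.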

The limits are where the design starts to feel more mature. Fabric’s whitepaper says universal verification would be prohibitively expensive, so it uses a challenge-based model instead. Validators monitor availability and quality, investigate disputes, and receive bounties when they prove fraud. That means permission is never absolute and never final. It is conditional, revisable, and open to challenge. I prefer that to the cleaner fantasy that every robotic action can be perfectly proven at all times, because physical systems do not work that way, and any serious protocol should admit that before it claims more certainty than it can deliver.
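
The "never final" part is easier for me to hold onto as a tiny state machine. Everything below is an illustration I made up: the `Status` names, the `Approval` class, and the rule that only a proven fraud is terminal are assumptions, not Fabric's actual dispute process.

```python
from enum import Enum


class Status(Enum):
    PROVISIONAL = "provisional"  # accepted without universal verification
    UPHELD = "upheld"            # survived a challenge, still challengeable
    REVOKED = "revoked"          # fraud proven by a validator


class Approval:
    def __init__(self, task_id: str):
        self.task_id = task_id
        self.status = Status.PROVISIONAL
        self.bounty_paid_to = None  # validator who proved fraud, if any

    def challenge(self, validator: str, fraud_proven: bool) -> Status:
        # A revoked approval cannot be challenged again in this sketch.
        if self.status is Status.REVOKED:
            raise ValueError("approval already revoked")
        if fraud_proven:
            self.status = Status.REVOKED
            self.bounty_paid_to = validator  # bounty goes to the prover
        else:
            self.status = Status.UPHELD
        return self.status
```

An upheld approval can still be challenged later, so nothing in this model ever becomes permanently unquestionable except a proven failure.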

The stops are even clearer. If a robot submits fraudulent work, a significant share of the earmarked task stake can be slashed and the robot is suspended until it re-bonds. If availability drops below ninety-eight percent over a thirty-day epoch, the robot loses emission rewards for that period and part of the bond is burned. If the aggregated quality score falls below eighty-five percent, reward eligibility is suspended until the operator fixes the problem. Those are not decorative warnings. They are actual stopping points built into the economic design, and they make the title of this topic feel justified to me, because stops are where permission becomes enforceable rather than symbolic.
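
Those three rules are simple enough to write down as a checklist. In this sketch the two thresholds come from the paragraph above, but the function name, the action labels, and the idea of evaluating everything once per epoch are my own assumptions. I have deliberately left out slash and burn amounts, since I only know them as "a significant share" and "part of the bond".

```python
AVAILABILITY_FLOOR = 0.98  # from the text: 98 percent over a 30-day epoch
QUALITY_FLOOR = 0.85       # from the text: 85 percent aggregated quality


def stops_for_epoch(availability: float, quality: float, fraud_proven: bool):
    """Return the stop actions triggered for one epoch (hypothetical labels)."""
    stops = []
    if fraud_proven:
        # Fraud: slash earmarked task stake, suspend until re-bonded.
        stops += ["slash_task_stake", "suspend_until_rebond"]
    if availability < AVAILABILITY_FLOOR:
        # Low availability: lose the epoch's emissions, burn part of the bond.
        stops += ["forfeit_epoch_emissions", "burn_part_of_bond"]
    if quality < QUALITY_FLOOR:
        # Low quality: no rewards until the operator fixes the problem.
        stops.append("suspend_reward_eligibility")
    return stops
```

A robot at 99 percent availability and 90 percent quality with no proven fraud triggers nothing. Drop availability to 97 percent and two stops fire at once, without anyone having to decide in the moment.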

I still see unfinished work here. Fabric itself says some governance choices remain open, including whether the initial validator set should be permissioned, permissionless, or hybrid. That honesty helps my trust more than polished certainty would. I do not need a robotics protocol to pretend it has solved everything. I need it to show me where authority comes from, where evidence lives, where risk is capped, and where intervention begins. Right now the most promising part of Fabric is not speed or spectacle. It is the attempt to make permission legible, and that feels unglamorous in the best possible way, because serious autonomy needs exactly that kind of restraint at launch. In robotics, that may be the difference between a machine that merely acts and one I can responsibly let act near me.

@Fabric Foundation $ROBO #ROBO #robo