I spent some time reading about Fabric Foundation recently, and the more I looked into it, the more interesting the idea started to feel.

I first came across Fabric Foundation while looking into projects that talk about AI and robotics inside crypto. At the beginning I honestly assumed it would be another typical narrative. In this space you see a lot of projects using words like autonomy, machine economies, and intelligent agents, but once you look closer there is often very little underneath the story. Usually it ends up being a token, a few big promises, and a vague idea that machines will somehow transact onchain in the future.

Fabric caught my attention because it seemed to start with a different kind of question.

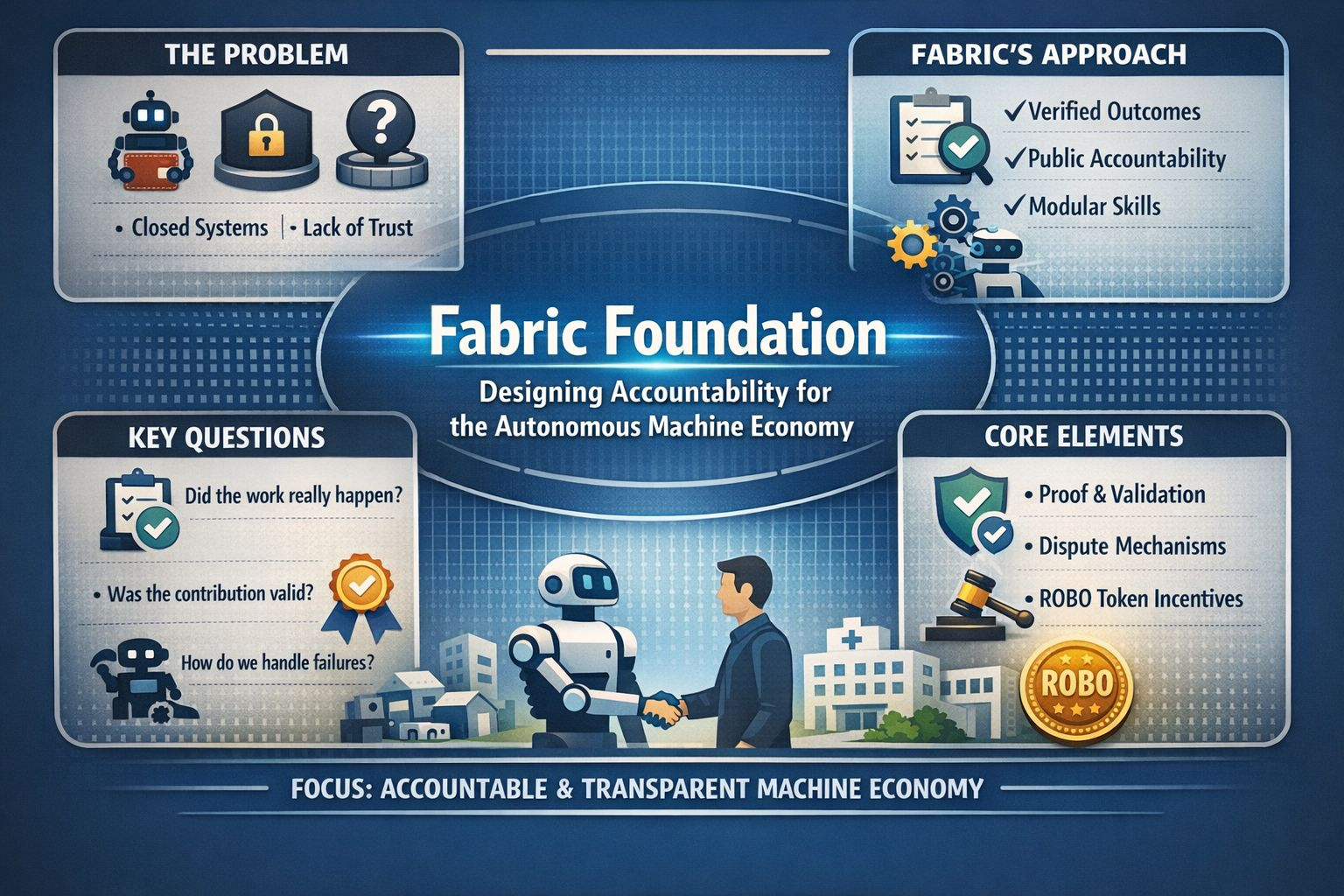

Instead of focusing only on how machines might transact or interact financially, the project seems to be asking something more uncomfortable. If autonomous systems actually begin doing meaningful work in the world, who checks them? Who challenges the results when something looks wrong? And who verifies that the value these systems claim to create is actually real?

That part is something many people skip over. It is easy to get excited about the idea of robots becoming more capable. It is much harder to think about what happens after that point. Once machines start operating in warehouses, hospitals, homes, schools, and factories, the real issue is no longer just whether they can perform tasks. The bigger question becomes whether anyone can trust the systems around them.

A robot might complete work, generate revenue, and interact with people in meaningful ways. But if all of that activity happens inside a closed platform controlled by a single company, the public has very little visibility into what is actually happening. In that situation, trust depends entirely on whoever owns the platform.

Fabric seems to be trying to approach this problem differently. The idea appears to be to create a layer where machine activity becomes accountable rather than merely impressive.

That is one of the reasons the project stood out to me. It does not focus only on making robots useful. It seems more interested in making their actions understandable. That might sound like a small difference at first, but it changes the whole discussion. Fabric is built around the idea that the future of autonomous systems should not be shaped entirely behind closed corporate doors. If machines are going to take on more responsibility, there needs to be some public structure where claims can be verified, behavior can be challenged, and real contributions can be recognized.

Without something like that, the whole system ends up depending heavily on private trust.

This is also where Fabric starts to feel more thoughtful than many of the crypto projects talking about autonomous agents. A lot of those narratives jump straight to payments. They imagine a future where robots or software agents simply hold wallets, spend tokens, and transact with each other.

But that part is not actually the difficult problem.

Giving a machine a wallet is relatively simple compared to proving that the work it did actually mattered. Fabric seems to recognize that difference. Instead of only asking how machines can transact, it seems to be asking what those transactions actually represent. If a robot gets paid, what proves the job really happened? If someone improves the system, how is that contribution measured? And if a machine performs poorly or behaves incorrectly, how does the network respond?

Those questions feel much more important than most of the flashy ideas people usually talk about.

Another part of Fabric that I found interesting is how it treats accountability as part of the infrastructure itself. The project is not suggesting that every piece of machine data should suddenly become public. That would not be realistic. Robots operating in private homes, industrial sites, or sensitive environments cannot simply upload everything they see onto a public ledger.

Instead, Fabric seems to be exploring a middle ground. The underlying data can remain private where necessary, while the claims, results, and economic outcomes can still be verified in ways the broader network can evaluate. That shift from private activity toward verifiable proof seems to sit at the center of the project.
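One generic technique for keeping data private while still making a claim checkable is a hash commitment: the robot publishes only a salted digest of its private log, and can later reveal the log to a verifier, who confirms it matches what was committed. The sketch below is a minimal illustration under that assumption, not Fabric's actual protocol; the function names `commit` and `verify` and the log fields are hypothetical.

```python
import hashlib
import json
import secrets


def commit(private_log: dict) -> tuple[str, bytes]:
    """Return (public digest, secret salt). Only the digest would go onchain;
    the log itself and the salt stay with the operator."""
    salt = secrets.token_bytes(16)  # random salt so short logs cannot be guessed by brute force
    payload = json.dumps(private_log, sort_keys=True).encode()  # canonical encoding
    digest = hashlib.sha256(salt + payload).hexdigest()
    return digest, salt


def verify(digest: str, salt: bytes, revealed_log: dict) -> bool:
    """Check that a log revealed later (e.g. during a dispute) matches the commitment."""
    payload = json.dumps(revealed_log, sort_keys=True).encode()
    return hashlib.sha256(salt + payload).hexdigest() == digest


# Hypothetical usage: a robot commits to a task log without publishing it.
log = {"robot_id": "unit-7", "task": "shelf_restock", "items_moved": 42}
digest, salt = commit(log)
print(verify(digest, salt, log))                             # original log matches
print(verify(digest, salt, {**log, "items_moved": 50}))      # altered log does not
```

A commitment only binds a claim so it cannot be quietly changed afterwards; it does not by itself prove the work happened. That part still needs validators, sensors, or the challenge process described next.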

To me that feels like a more serious approach. It is not chasing transparency in the lazy sense. It is trying to create accountability without forcing complete exposure. Solving that balance is much harder, but it is also much more useful.

In practice that means the network is designed around verifying contributions, validating results, allowing disputes, and penalizing dishonest behavior. The idea is to build a system where fake work becomes costly, challenging suspicious results becomes worthwhile, and real contributions are rewarded in a way that others can see.
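The claim that "fake work becomes costly" can be made concrete with a simple expected-value comparison. Assume, purely for illustration, that a result earns reward $R$, honest work costs $C$, a submitter posts a stake $S$ that is slashed if a fake result is caught, and a fake is caught with probability $p$. Faking is worse than honest work when $(1-p)R - pS < R - C$, which simplifies to $p(R + S) > C$. The class and the numbers below are hypothetical and are not taken from Fabric's documentation.

```python
from dataclasses import dataclass


@dataclass
class TaskEconomics:
    reward: float       # R: paid when a result is accepted
    honest_cost: float  # C: cost of actually doing the work
    stake: float        # S: bond slashed if a fake result is caught
    catch_prob: float   # p: chance a fake result is challenged and caught

    def honest_ev(self) -> float:
        # Honest submitters always get paid but bear the real cost of the work.
        return self.reward - self.honest_cost

    def fake_ev(self) -> float:
        # Assumes faking is free: paid if not caught, lose the stake if caught.
        return (1 - self.catch_prob) * self.reward - self.catch_prob * self.stake

    def fraud_deterred(self) -> bool:
        # Equivalent to catch_prob * (reward + stake) > honest_cost.
        return self.fake_ev() < self.honest_ev()


# Hypothetical numbers: a stake makes faking unprofitable even with only a 20% catch rate.
with_stake = TaskEconomics(reward=10, honest_cost=4, stake=50, catch_prob=0.2)
no_stake = TaskEconomics(reward=10, honest_cost=4, stake=0, catch_prob=0.2)
print(with_stake.fraud_deterred(), no_stake.fraud_deterred())
```

The comparison shows why challenges and penalties work together: without a stake, a low catch rate leaves cheating profitable, while a meaningful stake lets the network deter fraud without checking every single result.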

That mindset feels grounded.

It does not assume people will behave honestly just because the technology sounds exciting. It assumes incentives matter. It assumes fraud is possible. And it treats verification as something more important than marketing language.

Another reason the project feels more credible to me is the robotics context behind it. Fabric is connected to OpenMind and the OM1 robotics operating system. That gives the idea more weight than projects that exist purely as token narratives. When you read about Fabric, it does not only talk about chain mechanics. It also discusses modular robot skills, machine identity, teleoperation, interoperability, and systems designed to work across different hardware.

That does not guarantee the project will succeed, of course. But it suggests the people building it are thinking about the practical reality of machines operating in the physical world.

And robotics in the real world is messy.

Writing a clean whitepaper is one thing. Building systems that can survive unpredictable environments is something very different. Machines fail in strange ways. Verification can be complicated. Human environments are rarely neat or predictable.

Fabric’s design seems shaped by that reality. The emphasis on modularity, human oversight, and challenge mechanisms suggests the team understands that robotics will never be perfectly clean.

The modular side of the system is particularly interesting. Fabric describes a model where robot capabilities can be added or adjusted through individual skills. Instead of one massive intelligence system doing everything, the network can grow through separate functions developed over time.

That idea might sound technical, but it has a very practical implication. When a system is modular, it becomes easier to see where value actually comes from. A better navigation skill, a more reliable task behavior, or an improved reasoning module can be identified more clearly than if everything were hidden inside one opaque system.

Once value becomes visible, rewarding the people improving it becomes much easier.

This connects to another idea inside Fabric that I find compelling. The project appears to be trying to reward contribution rather than passive ownership.

That is important because crypto has struggled with this problem for years. Many networks claim to be decentralized, but most of the rewards still go to people who simply hold tokens rather than to those who contribute anything meaningful. Fabric seems to be trying to move away from that model. The intention is that rewards flow toward participants who provide measurable value, whether that means task completion, data, compute, validation, skill development, or system improvements.
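In its simplest form, "rewarding contribution rather than passive ownership" means an epoch's reward pool is split in proportion to verified contribution scores, and token holdings are simply not an input. The sketch below assumes those scores already exist; how to measure and verify them is the genuinely hard part and is not shown. The function name, roles, and numbers are hypothetical, not Fabric's reward formula.

```python
def split_rewards(pool: float, verified_scores: dict[str, float]) -> dict[str, float]:
    """Split a reward pool in proportion to verified contribution scores.

    Token balances are deliberately absent: a participant with a score of 0
    receives nothing regardless of how much they hold. Negative scores are
    treated as 0.
    """
    total = sum(max(score, 0.0) for score in verified_scores.values())
    if total == 0:
        # Nobody contributed verifiable work this epoch; pay out nothing.
        return {name: 0.0 for name in verified_scores}
    return {
        name: pool * max(score, 0.0) / total
        for name, score in verified_scores.items()
    }


# Hypothetical epoch: three contributors and one idle holder.
payouts = split_rewards(
    1000.0,
    {"task_operator": 50, "validator": 30, "skill_developer": 20, "idle_holder": 0},
)
print(payouts)
```

Proportional splitting is only one possible design; a real network would also have to decide how scores from very different kinds of work (compute, data, validation, skills) are made comparable.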

If Fabric can actually make that model work, it would be more significant than people might expect.

It would mean the network had found a better way to tie rewards to effort and output rather than to capital alone. That could matter a lot in a future where humans, machines, and software systems all contribute to the same economic layer.

Another thing I appreciate about Fabric is that it does not treat full autonomy as some final stage where humans disappear completely. In several parts of the design, human observation and feedback still play a role.

That feels realistic.

Machines can do many things, but there will always be situations where human judgment matters. A robot might technically complete a task and still do it in a way that feels unsafe, awkward, or socially inappropriate. Metrics alone do not always capture that nuance.

Fabric’s idea of a broader public feedback layer acknowledges that machine accountability cannot be purely mechanical. Sometimes people still need to evaluate what happened and decide whether it was acceptable.

The ROBO token also makes more sense when you view it within this larger structure.

On its own it would simply look like another token with governance and utility features. That alone is rarely enough to make people care. But inside the Fabric system the token is supposed to tie together participation, validation, governance, access, and economic coordination.

The token sits at the intersection of machine actions, human contributions, and protocol decisions.

Whether that system becomes strong enough for the token to matter beyond speculation is still an open question.

Fabric has an interesting conceptual design, but concepts do not automatically become durable systems. The real challenge will be whether the network can attract enough activity, enough contributors, and enough credible proof mechanisms to justify the architecture it describes.

This is also where skepticism still feels healthy.

The project is early, and the machine economy it is trying to support is also early. There is a large gap between designing a model for machine accountability and becoming the infrastructure people actually rely on in everyday life.

Verification in the physical world is difficult. Governance is hard to decentralize. Token incentives can be gamed if they are not carefully designed.

Fabric is not immune to any of those pressures.

Still, I think the project deserves attention because it is aiming at the right type of problem. It is not simply asking how machines can become more autonomous. It is asking what autonomy requires once it becomes economically meaningful.

That perspective feels deeper than most of the projects grouped into the same trend.

At its core Fabric is making a larger argument about the future of intelligent machines. If robots and autonomous systems are going to become economically important, the systems around them cannot remain entirely private and opaque.

There needs to be a public layer where accountability exists. There needs to be a way for contributors outside a single company to improve the system and be rewarded for doing so. And there needs to be a structure where machine outputs can be questioned instead of automatically trusted.

Whether Fabric ends up delivering that vision is something time will decide.

But even at this early stage, the project stands out because it is asking better questions than most.

And in the long run, the questions usually matter more than the hype.

@Fabric Foundation #ROBO $ROBO