@Fabric Foundation What makes machine autonomy feel real is not the motion. It is the moment someone asks a plain question afterward: who allowed this, under what limits, and what happens if it goes wrong? That is where a lot of automation talk starts to thin out. The demo looks polished, the model sounds smart, the task appears complete, but the record of permission is often scattered across private dashboards, internal policies, and logs nobody else can inspect. Fabric’s broader pitch is that robots, agents, and the people around them need a more public and verifiable coordination layer than that.

The whitepaper says Fabric is creating an open network for robots, where their development, coordination, and monitoring happen through shared public ledger systems. Its newer ROBO material frames the token around fees, identity, verification, and governance inside that network.

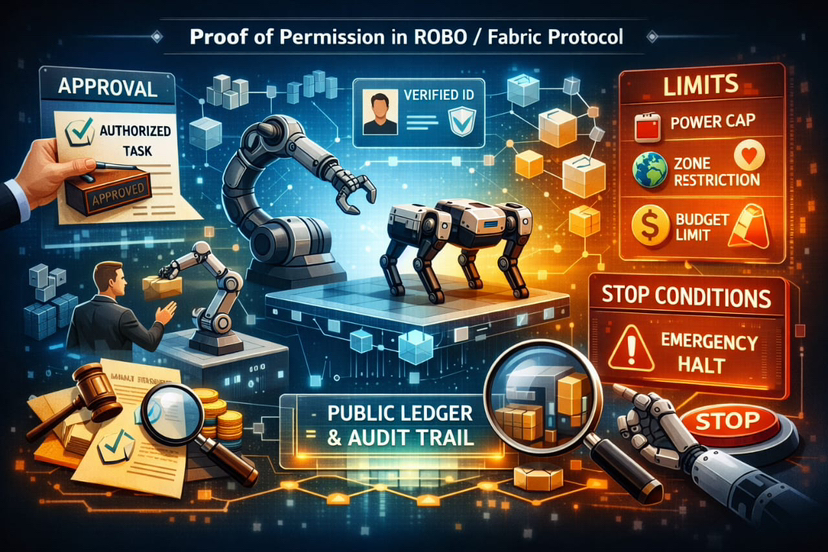

That is why I think “proof of permission” is a useful way to read the project, even if it is not the protocol’s formal label for one feature. In practice, proof of permission is not just a yes or no toggle. It is the chain of evidence behind machine action. Who issued the task. Which device or agent accepted it. What rules were attached. Whether any budget, safety boundary, or usage cap was part of the original instruction. And just as important, whether there was a credible stop condition when reality stopped matching the plan. Fabric does not present this as a cute UX problem. It treats trust as an infrastructure problem.

That matters because permission in robotics is never as simple as access control in software. A bot reading a file is one thing. A robot moving through a store, charging itself, using a model, handling tools, or completing a paid physical task is different.

The whitepaper’s message is that blockchains can act as a shared system of trust between humans and machines. They make records tamper-resistant and easier to audit, and they let actions follow preset rules. That means if machines become more independent, the record of what they were allowed to do and what they actually did should not be private.

So when people talk about approvals, limits, and stops inside a ROBO or Fabric-style system, I would read them less as isolated controls and more as one accountability loop. An approval is the starting signature. It says this task was authorized by a real party under defined conditions. A limit narrows that authority so it does not silently expand. It might be economic, temporal, geographic, or tied to how often a model or skill can be used. A stop is the final safeguard. It is what turns permission from a one-time grant into a revocable operating envelope. Without that last piece, “permission” can quietly become open-ended trust.
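To make the approval, limit, and stop loop concrete, here is a minimal Python sketch. It is my own illustration, not anything taken from Fabric's whitepaper or code: the `Permission` class, its field names, and the refusal reasons are all hypothetical. It only shows how a single grant can carry an approver, an economic limit, a temporal limit, and a revocation flag that is checked before every action.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import Tuple


@dataclass
class Permission:
    """Hypothetical permission record (not a Fabric data structure)."""
    approver: str          # who issued the task: the "starting signature"
    agent: str             # which device or agent accepted it
    budget: float          # economic limit
    expires_at: datetime   # temporal limit
    spent: float = 0.0
    revoked: bool = False  # the "stop"

    def revoke(self) -> None:
        """Turn the grant off; every later authorize() call is refused."""
        self.revoked = True

    def authorize(self, cost: float, now: datetime) -> Tuple[bool, str]:
        """Check stop first, then limits, and only then record the spend."""
        if self.revoked:
            return False, "stopped"
        if now >= self.expires_at:
            return False, "expired"
        if self.spent + cost > self.budget:
            return False, "over budget"
        self.spent += cost
        return True, "ok"


start = datetime(2025, 1, 1, tzinfo=timezone.utc)
grant = Permission("operator-a", "robot-1", budget=10.0,
                   expires_at=start + timedelta(hours=1))
print(grant.authorize(4.0, start))  # (True, 'ok')
grant.revoke()
print(grant.authorize(1.0, start))  # (False, 'stopped')
```

The ordering is the point: the stop is checked before any limit, so a revoked grant cannot be used even if budget and time remain.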

One detail in the whitepaper stood out to me because it makes this feel less abstract. Fabric discusses One- and N-time models being developed by OpenMind and Nethermind, using trusted execution environments to impose limits on where and how many times specific skill models can be used. That is not the whole permission system, of course, but it points in a serious direction. It suggests that limits are not only social promises or policy documents sitting outside the machine. They can be embedded into the technical conditions of use. In other words, the system can record not only that a capability exists, but that its use was bounded from the beginning.
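As a rough picture of what "where and how many times" could mean, here is a small Python sketch of an N-time license. This is an assumption-laden toy: `SkillLicense`, `allowed_sites`, and the site names are invented for illustration, and a plain in-memory counter stands in for enforcement that, per the whitepaper's description, would happen inside a trusted execution environment. Nothing here models attestation or tamper resistance.

```python
from dataclasses import dataclass
from typing import FrozenSet


@dataclass
class SkillLicense:
    """Hypothetical N-time license for one skill model (illustration only)."""
    skill: str
    allowed_sites: FrozenSet[str]  # "where" the skill may run
    remaining_uses: int            # "how many times" it may run

    def use(self, site: str) -> bool:
        """Consume one use if the site is allowed and uses remain."""
        if site not in self.allowed_sites or self.remaining_uses <= 0:
            return False
        self.remaining_uses -= 1
        return True


# A two-time license bound to a single (made-up) site.
lic = SkillLicense("shelf-restock", frozenset({"store-42"}), remaining_uses=2)
print(lic.use("store-42"))  # True
print(lic.use("store-7"))   # False: site not covered by the license
print(lic.use("store-42"))  # True
print(lic.use("store-42"))  # False: both uses consumed
```

A one-time model is just the case `remaining_uses=1`. A refused call at the wrong site does not consume a use in this sketch; whether a real system would count such attempts is a design choice the source does not specify.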

The same pattern shows up in Fabric’s economics. The network does not assume every task can be perfectly proven after the fact. In fact, the whitepaper says physical service completion can be attested but not cryptographically proven in general, which is one of the more honest lines in the document. Instead of pretending certainty, it leans on challenge-based verification and penalty economics. Validators stake bonds, perform routine monitoring, and investigate disputes. If fraud is proven, part of the task stake can be slashed, the robot can be suspended, and the successful challenger earns a truth bounty. Availability failures and quality degradation also trigger penalties or reward suspensions. That is not just incentive design. It is a way of giving “stop” teeth.
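The consequence side of that design can be sketched in a few lines of Python. The function below is hypothetical: `resolve_challenge`, `slash_fraction`, and `bounty_share` are names and default values I made up to show the shape of the mechanism, not parameters from the whitepaper. It also leaves out things the passage does not spell out, such as what happens to a challenger whose claim fails.

```python
from dataclasses import dataclass


@dataclass
class ChallengeOutcome:
    slashed: float         # amount removed from the task stake
    bounty: float          # "truth bounty" paid to the successful challenger
    robot_suspended: bool


def resolve_challenge(task_stake: float, fraud_proven: bool,
                      slash_fraction: float = 0.5,
                      bounty_share: float = 0.5) -> ChallengeOutcome:
    """Apply penalties only when fraud is proven.

    slash_fraction and bounty_share are illustrative placeholders,
    not values from Fabric's documentation.
    """
    if not fraud_proven:
        return ChallengeOutcome(slashed=0.0, bounty=0.0, robot_suspended=False)
    slashed = task_stake * slash_fraction
    return ChallengeOutcome(slashed=slashed,
                            bounty=slashed * bounty_share,
                            robot_suspended=True)


print(resolve_challenge(100.0, fraud_proven=True))
print(resolve_challenge(100.0, fraud_proven=False))
```

Even in toy form, the structure shows why this gives "stop" teeth: a proven breach changes balances and operating status in the same step, rather than only producing a log entry.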

I think this is where the idea becomes more interesting than a standard audit trail. A normal log says something happened. A stronger system says something happened under declared constraints, and those constraints had consequences when breached. Fabric’s proposed checks are not perfect, and the project itself more or less admits perfection is unrealistic in physical environments. Still, there is a difference between unverifiable optimism and bounded accountability. The latter is much more useful for operators, counterparties, maybe even insurers one day. If a machine exceeds its allowed conditions, the question is no longer purely moral or interpretive. There is a structured path for challenge, suspension, and loss.

There is also a quieter benefit here. Good permission logs protect the system from its own success. Once a network grows, memory becomes political. People remember approvals selectively. Teams reinterpret limits after the fact. Stops that were supposed to be obvious suddenly look ambiguous when money is involved. An immutable ledger does not solve every dispute, but it narrows the room for convenient storytelling. That may be even more important in robotics than in pure software, because the gap between what a machine was supposed to do and what it actually did can turn into cost, liability, or physical harm very quickly. Fabric’s material repeatedly frames public ledgers as a place for oversight evidence, not just payment settlement, and I think that is the correct instinct.
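To see why an append-only record narrows the room for after-the-fact reinterpretation, consider a minimal hash-chained log in Python. This is a generic technique, not Fabric's ledger: the event fields and function names are hypothetical, and a local hash chain is only tamper-evident. A public ledger adds replication and consensus on top, which this sketch does not attempt.

```python
import hashlib
import json


def entry_hash(prev_hash: str, event: dict) -> str:
    """Hash an event together with the previous entry's hash."""
    payload = json.dumps({"prev": prev_hash, "event": event}, sort_keys=True)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def append(log: list, event: dict) -> None:
    """Append an event, chaining it to the last entry (or a fixed start value)."""
    prev = log[-1]["hash"] if log else "genesis"
    log.append({"event": event, "hash": entry_hash(prev, event)})


def verify(log: list) -> bool:
    """Recompute every hash in order; any edited entry breaks the chain."""
    prev = "genesis"
    for entry in log:
        if entry["hash"] != entry_hash(prev, entry["event"]):
            return False
        prev = entry["hash"]
    return True


log = []
append(log, {"type": "approval", "by": "operator-a", "task": "restock"})
append(log, {"type": "limit", "budget": 10})
append(log, {"type": "stop", "by": "operator-a"})
print(verify(log))  # True

log[1]["event"]["budget"] = 500  # someone "reinterprets" the limit later
print(verify(log))  # False
```

Quietly raising the budget after the fact is detectable because the stored hash no longer matches the edited event.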

None of this means the hard part is finished. It is not. The project still has to show that these ideas can survive real deployments, messy operators, and low-grade adversarial behavior. Public logs are only as good as the identity binding behind them. Limits only matter if they are hard to bypass. Stops only matter if governance or validators can act quickly enough when a task type, a model, or an operator becomes a problem. Fabric’s own design hints at that tension: it wants openness, but it also relies on structured governance, monitoring, and punishment to keep the network sane.

Even so, I think the framing is useful. In systems like ROBO/Fabric, proof of permission should not be treated as paperwork around automation. It is part of the product itself. The machine economy will not be trusted because robots become more fluent or more autonomous. It will be trusted when approvals can be traced, limits can be shown, and stops can be enforced without begging a single company for the truth. Fabric is still early, but that is the standard I would use to judge it.

@Fabric Foundation #ROBO $ROBO