One of the strange habits the robotics industry has developed is how quickly it celebrates intelligence.

Every new breakthrough seems to trigger the same reaction: videos of machines navigating complex environments, sorting packages, interacting with humans. The demonstrations are impressive, and they make it easy to assume that intelligence is the defining feature of the next technological wave.

But once you have watched enough robotics deployments move from demos into real environments, that assumption starts to feel incomplete.

Because the moment robots leave controlled environments, intelligence stops being the most important trait.

Reliability takes its place.

A robot completing a difficult task once is impressive. A robot completing that task every day, without interruption, across thousands of deployments, is something entirely different.

And that second scenario is where the real economy begins.

This is the perspective that made me start reading Fabric differently.

At first glance the project looks like another attempt to connect robotics with blockchain infrastructure. Machines perform work, networks coordinate activity, tokens settle payments.

That story is easy to recognize because the industry has repeated versions of it many times.

But the deeper implication inside Fabric’s architecture might be less about machine labor and more about something the robotics industry rarely discusses directly.

Machine reliability.

The reason reliability matters is simple. Economic systems do not reward potential. They reward predictability.

Factories depend on machines that stay operational. Logistics networks depend on machines that complete routes consistently. Hospitals depend on systems that behave exactly as expected every time they are activated.

The moment reliability becomes uncertain, everything that depends on those machines is put at risk.

This is why most large automation systems are designed around strict verification and monitoring frameworks. Operators need to know whether machines performed the tasks they were assigned and whether those tasks were completed within acceptable parameters.

Until now, those verification systems have largely remained internal to the organizations deploying the robots.

A company manages its own machines, collects its own operational data, and evaluates reliability within its own infrastructure.

That model works when robotics deployments remain relatively contained.

But as automation expands across industries and environments, something else becomes necessary.

Shared verification.

Networks need to know what machines are doing, how they perform over time, and whether their activity can be trusted.

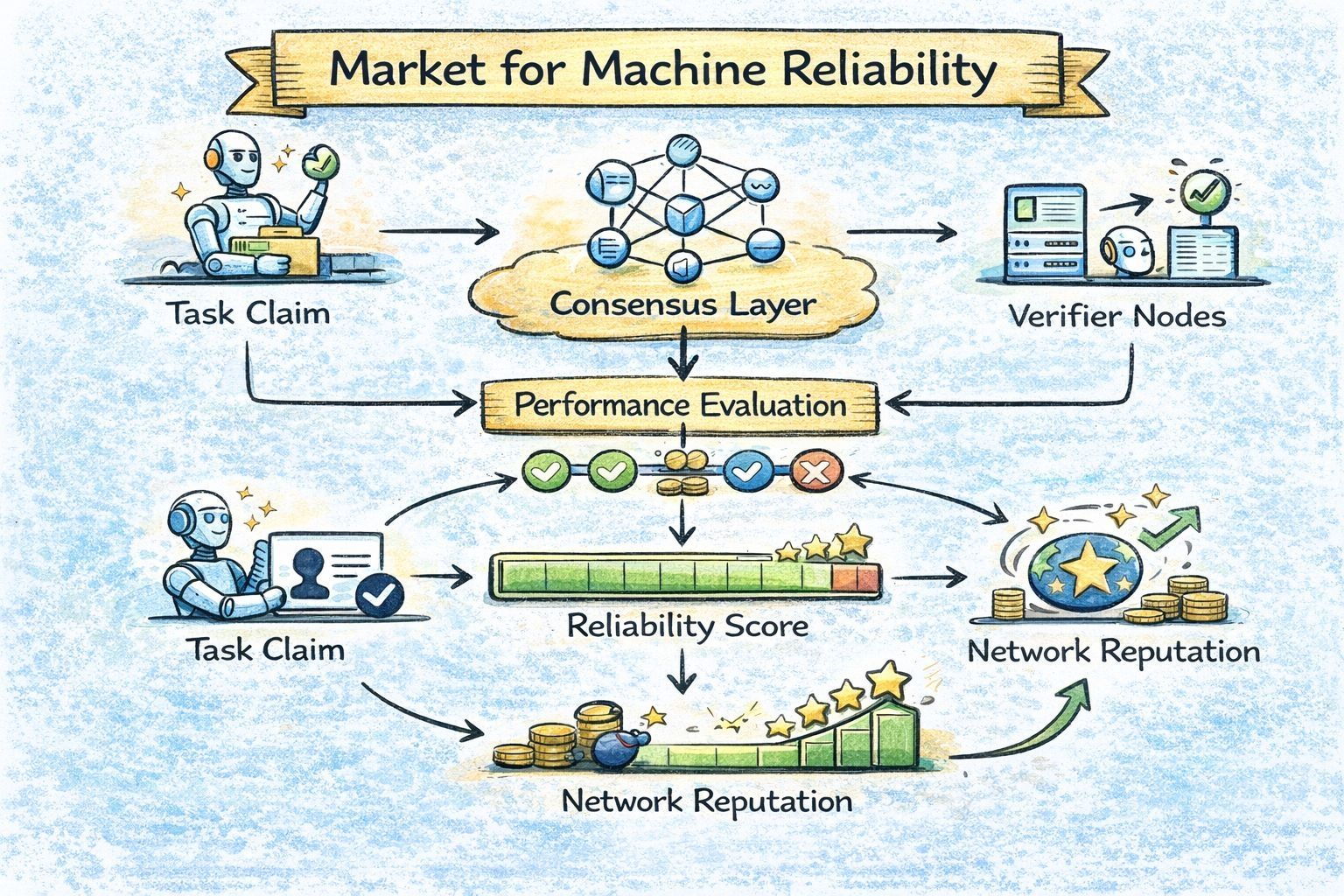

This is where Fabric’s identity and verification layer becomes interesting.

Instead of existing as isolated tools inside private deployments, machines can hold a persistent identity inside a network. That identity can accumulate a record of their operational behavior over time.

Uptime.

Task completion.

Operational consistency.

What emerges from that system is something the robotics industry has rarely had outside the walls of a single organization.

A verifiable history of machine performance.
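To make the idea of a performance history concrete, here is a minimal sketch of what such a record could look like. Everything in it is an assumption for illustration: the `MachineRecord` class, its field names, and the three formulas are hypothetical and are not taken from Fabric's actual design. It also leaves out the hard part, which is verification itself (signing entries, anchoring them somewhere tamper-resistant).

```python
from dataclasses import dataclass, field
from statistics import mean, pstdev
from typing import List


@dataclass
class MachineRecord:
    """Hypothetical per-machine history keyed by a persistent ID."""
    machine_id: str
    scheduled_hours: float = 0.0
    online_hours: float = 0.0
    tasks_failed: int = 0
    # Durations (seconds) of successfully completed tasks only.
    durations_s: List[float] = field(default_factory=list)

    def log_shift(self, scheduled_hours: float, online_hours: float) -> None:
        self.scheduled_hours += scheduled_hours
        self.online_hours += online_hours

    def log_task(self, duration_s: float, succeeded: bool) -> None:
        if succeeded:
            self.durations_s.append(duration_s)
        else:
            self.tasks_failed += 1

    @property
    def uptime(self) -> float:
        """Share of scheduled time the machine was actually online."""
        if self.scheduled_hours == 0:
            return 0.0
        return self.online_hours / self.scheduled_hours

    @property
    def completion_rate(self) -> float:
        """Share of attempted tasks that succeeded."""
        attempted = len(self.durations_s) + self.tasks_failed
        return len(self.durations_s) / attempted if attempted else 0.0

    @property
    def consistency(self) -> float:
        """1.0 when task durations never vary; falls toward 0 as they spread.

        Assumed metric: 1 / (1 + coefficient of variation).
        """
        if len(self.durations_s) < 2:
            return 0.0  # not enough history to judge
        avg = mean(self.durations_s)
        if avg == 0:
            return 0.0
        return 1.0 / (1.0 + pstdev(self.durations_s) / avg)


record = MachineRecord("robot-001")
record.log_shift(scheduled_hours=10, online_hours=9)
for duration, ok in [(60, True), (60, True), (60, True), (75, False)]:
    record.log_task(duration, ok)
print(record.uptime, record.completion_rate, record.consistency)
```

The point of the sketch is only that each of the three traits reduces to a number once the underlying events are logged against a stable identity.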

And once performance history becomes visible, something unexpected begins to happen.

Reliability becomes measurable.

This might sound like a small shift, but economic systems behave very differently once reliability becomes measurable.

Markets begin to differentiate.

Machines that consistently perform well become more valuable than machines that simply promise capability. Networks begin to allocate work based not only on availability, but also on demonstrated performance.
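A rough sketch of what that allocation could look like, assuming a network simply filters by availability and then ranks by track record. The `Candidate` type, the `assign` function, and the smoothing rule are all hypothetical choices made for this example, not a description of how Fabric or any real network assigns work.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Candidate:
    """Hypothetical view of a machine at assignment time."""
    machine_id: str
    available: bool
    completed: int   # tasks finished successfully so far
    attempted: int   # tasks attempted so far


def reliability_score(c: Candidate) -> float:
    """Smoothed success rate.

    Adding one imaginary success and one imaginary failure (Laplace
    smoothing, an assumption of this sketch) keeps a machine with a
    tiny but perfect record from outranking one with a long, nearly
    perfect record.
    """
    return (c.completed + 1) / (c.attempted + 2)


def assign(candidates: List[Candidate]) -> Optional[Candidate]:
    """Pick the available machine with the best demonstrated record."""
    available = [c for c in candidates if c.available]
    if not available:
        return None
    return max(available, key=reliability_score)


fleet = [
    Candidate("robot-a", available=True, completed=990, attempted=1000),
    Candidate("robot-b", available=True, completed=5, attempted=5),
    Candidate("robot-c", available=False, completed=1000, attempted=1000),
]
chosen = assign(fleet)
print(chosen.machine_id if chosen else "no machine available")
```

In this example the long-running machine wins over both the newcomer with five flawless tasks and the perfect machine that is offline, which is the difference between promised capability and demonstrated performance.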

Reliability becomes a signal.

And signals eventually turn into pricing.

At that point the robot economy starts to resemble a reputation market.

Not reputation in the social sense, but in the operational sense. Machines building track records through repeated activity inside a network.

The interesting thing about this framing is that it changes how we think about automation entirely.

The conversation stops revolving around the smartest robot.

It starts revolving around the most dependable one.

In other words, the machine that performs the same task thousands of times without creating uncertainty.

This shift mirrors something we have seen in other technological systems. Early innovation often focuses on capability. Later adoption focuses on reliability.

The internet did not become infrastructure because networks were theoretically powerful. It became infrastructure because systems eventually proved stable enough to depend on.

Robotics may follow a similar trajectory.

The machines capable of performing tasks will continue to improve, but the systems that verify and coordinate those machines may ultimately determine how widely automation spreads.

Fabric appears to be positioning itself around that coordination layer.

Not by building the robots themselves, but by enabling networks to observe and verify what those machines are doing over time.

That is a subtle role, but potentially an important one.

Because if automation becomes widespread, the most valuable signal inside those networks may not be intelligence.

It may be reliability.

And the moment reliability becomes something networks can measure and recognize, the robot economy begins to look less like speculation and more like infrastructure.

Whether Fabric becomes part of that system remains uncertain. Infrastructure projects rarely move quickly, and the gap between theory and real-world usage can be wide.

But the direction itself feels different from most robotics narratives.

Instead of celebrating what machines might do someday, it asks a more practical question.

How do we know they did the work?

And in an economy built around automation, that question may end up mattering more than intelligence itself.

#ROBO $ROBO @Fabric Foundation