Disagreement is easy when it’s vague. It’s harder when it has to attach itself to a decision, a timestamp, and a person’s name in a change log. Mira and Cion learned that the second kind is the only kind worth having.

They work in the same organization, but they arrive at problems from different doors. Cion lives close to the running system. He knows which services wake him up at night, which dashboards lie by omission, which dependency will quietly throttle and take three other teams down with it. Mira lives close to the obligations the system creates. She reads contracts. She sits in the meetings where someone says, “We’ll handle that later,” and she writes down what “later” will cost when it arrives.

People usually first notice their friction in planning. Product wants a feature that sounds small: “Add a smart reply suggestion in the support tool.” Cion asks the questions that feel like engineering reflex. What’s the traffic profile? Where does inference run? What happens when the model endpoint times out? Mira asks a question that lands differently. What data will we send to generate the suggestion, and where will it be stored?

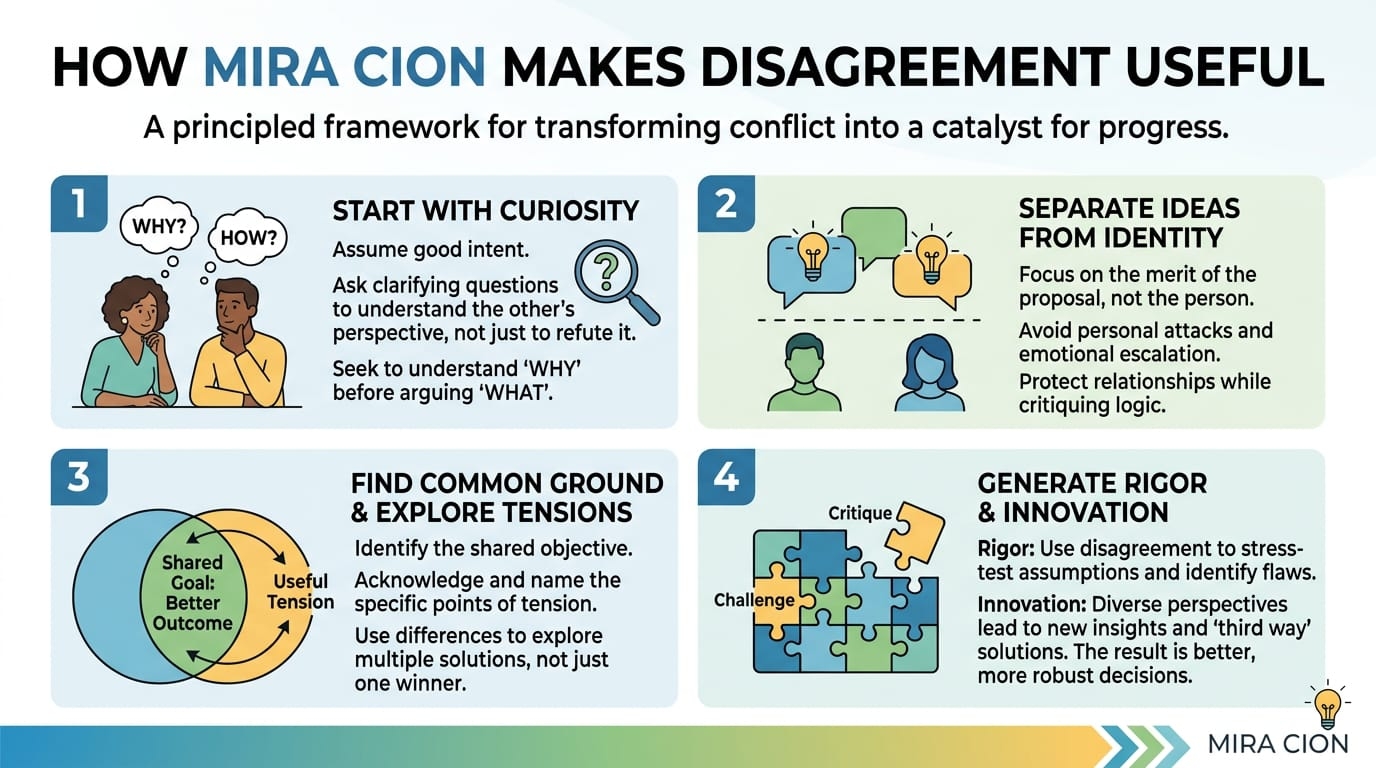

Someone will roll their eyes at one of them. Sometimes both. That’s the moment Mira and Cion start to make disagreement useful, because they don’t let it stay personal. They pull it down into the system.

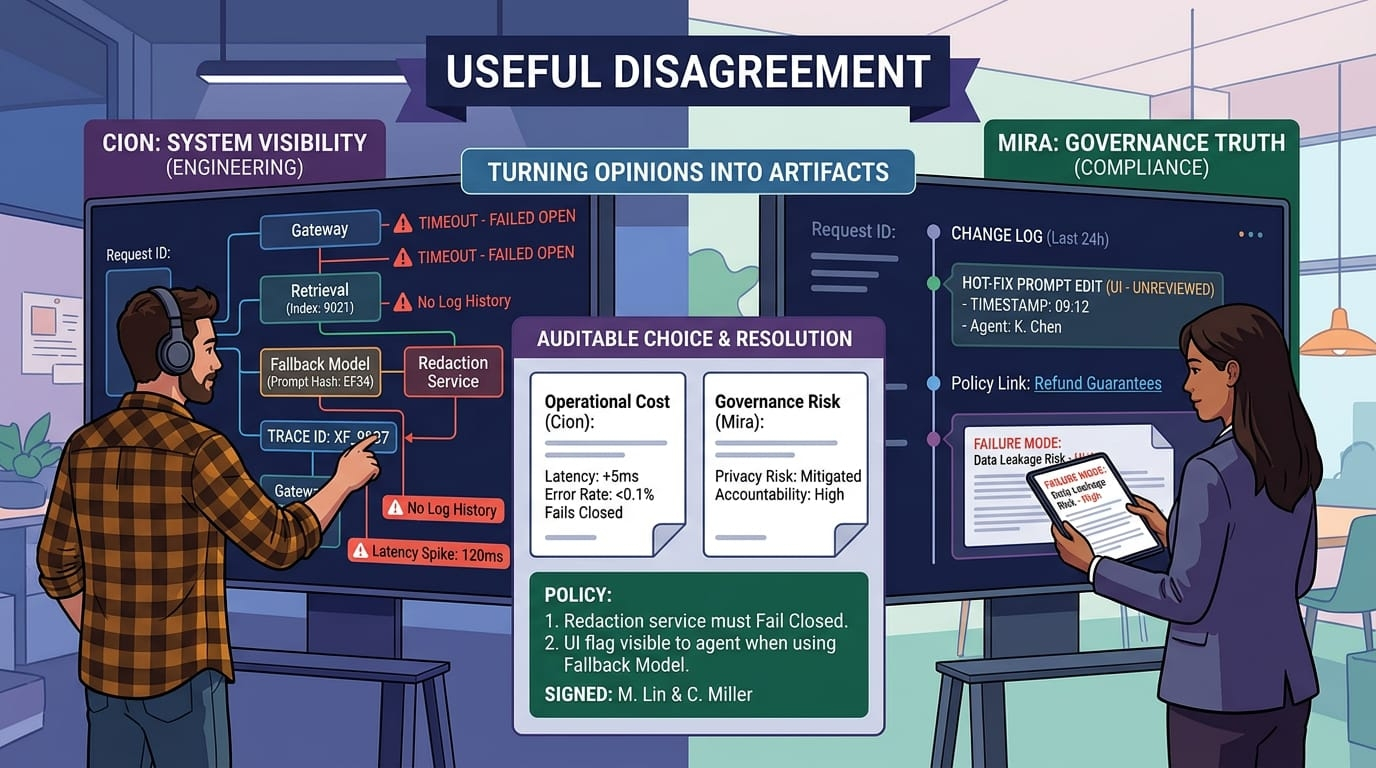

Cion shares his screen and draws the path: support tool to gateway to retrieval to model to redaction to response. Mira asks where logs are written and how long they’re kept. Cion answers honestly: right now, too long in one place and not long enough in another. Retrieval logs are sparse because they were noisy, so the team turned their verbosity down to keep costs stable. Prompt edits are tracked, but the prompt can still be hot-fixed in a UI with no review. That’s a throughput shortcut. It is also a governance hole.
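
The prompt hot-fix path is the easiest of these gaps to picture in code. Below is a minimal Python sketch, assuming a hypothetical `PromptRegistry` that sits in front of whatever the UI writes to: it refuses edits that lack a second reviewer and records a content hash for every accepted version. None of these names come from a real product.

```python
import hashlib
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Optional


@dataclass(frozen=True)
class PromptChange:
    """One entry in a hypothetical prompt change log."""
    author: str
    reviewer: str
    content_hash: str
    timestamp: str


@dataclass
class PromptRegistry:
    """Hypothetical prompt store that only accepts reviewed edits."""
    active_prompt: str = ""
    history: list = field(default_factory=list)

    def apply_edit(self, new_prompt: str, author: str, reviewer: Optional[str]) -> PromptChange:
        # Close the no-review hot-fix path: a second person must sign off.
        if not reviewer or reviewer == author:
            raise PermissionError("prompt edits need a reviewer other than the author")
        change = PromptChange(
            author=author,
            reviewer=reviewer,
            # The hash lets a later review point at the exact prompt that was live.
            content_hash=hashlib.sha256(new_prompt.encode("utf-8")).hexdigest(),
            timestamp=datetime.now(timezone.utc).isoformat(),
        )
        self.history.append(change)
        self.active_prompt = new_prompt
        return change
```

The cost is the one Cion would name: an urgent prompt fix now waits for a reviewer.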

Mira’s disagreement isn’t “be more careful.” It’s “make the system able to tell the truth about itself.” Cion’s disagreement isn’t “stop slowing us down.” It’s “make the controls survivable under load.” Those are compatible goals. They just don’t look compatible when a deadline is two weeks away.

The way they bridge it is by turning opinions into artifacts. If Mira thinks a risk matters, she writes it as a failure mode with concrete consequences. If Cion thinks a control will break shipping, he writes the operational cost in plain terms: latency, error rates, on-call burden, dollars. They put those two documents side by side and force a choice that’s visible, not implied.

This approach shows its value most clearly during incidents, when disagreement is usually at its worst. A customer escalates a complaint: the assistant suggested a refund path that doesn’t exist, then quoted something that sounds like an internal policy name. The support lead is angry. The product manager is embarrassed. Someone says “hallucination” as if the word ends the conversation.

Cion asks for the request ID. Mira asks for the record of changes since the last stable day.

These sound like different instincts, but they’re complementary. The trace tells them what happened in the system. The change history tells them why it was possible.

The trace shows that the request went through a fallback model because the primary endpoint was saturated. The fallback model uses a different prompt and a different redaction step, tuned months ago for speed. The redaction step timed out and failed open, returning raw text. That timeout only happened because a caching change reduced latency and increased concurrency, pushing a downstream service past a threshold it rarely reached.
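
Reading a trace like this is mostly a matter of asking the right questions of each stage. Here is a minimal sketch, assuming a hypothetical span format (a `Span` with `stage`, `status`, and `attrs` fields) rather than any particular tracing product, that turns one request’s spans into the two findings above: the fallback route and the redaction step that failed open.

```python
from dataclasses import dataclass


@dataclass
class Span:
    """One stage of a request, in a hypothetical trace format."""
    request_id: str
    stage: str    # e.g. "gateway", "model", "redaction"
    status: str   # e.g. "ok", "timeout", "error"
    attrs: dict


def explain_request(spans: list, request_id: str) -> list:
    """Turn the spans for one request into plain-language findings."""
    findings = []
    for span in spans:
        if span.request_id != request_id:
            continue
        # Which model actually served the request?
        if span.stage == "model" and span.attrs.get("route") == "fallback":
            findings.append("served by fallback model")
        # Did redaction fail, and if so, did raw text get through?
        if span.stage == "redaction" and span.status != "ok":
            if span.attrs.get("returned_raw_text"):
                findings.append(f"redaction {span.status}; failed open and returned raw text")
            else:
                findings.append(f"redaction {span.status}; failed closed")
    return findings
```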

In other words: nobody did one dumb thing. Several reasonable choices aligned in an unreasonable way.

The tempting disagreement at this point would be to argue about priorities (speed versus safety) and pick a winner. Mira and Cion don’t do that. They argue about where to place the friction so it costs less the next time.

Cion proposes a technical fix: the redaction step must fail closed, even if it means returning an empty suggestion. Mira pushes for an operational fix: routing changes must be treated as behavior changes, and fallback models must meet the same policy guarantees as primary. Cion worries that failing closed will anger support agents who want something, anything, to send. Mira worries that failing open will leak private data into a customer email. They’re both right, and the disagreement becomes useful only when they acknowledge the real trade: user experience versus privacy risk, under specific conditions.
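
Mira’s requirement that fallback models meet the same guarantees as the primary can be expressed as a check over routing configuration. The sketch below assumes a hypothetical config shape (each route lists its steps and a `redaction_on_failure` setting); it is one way to make “routing changes are behavior changes” testable, not a description of their actual system.

```python
# Hypothetical routing config: each route lists the steps it runs
# and how its redaction step behaves when it fails.
ROUTES = {
    "primary": {"steps": ["retrieval", "model", "redaction"], "redaction_on_failure": "closed"},
    "fallback": {"steps": ["model", "redaction"], "redaction_on_failure": "open"},
}

# Guarantees every route must provide, primary or fallback.
REQUIRED_STEPS = {"redaction"}


def policy_violations(routes: dict) -> list:
    """Report routes that do not meet the guarantees required of every route."""
    problems = []
    for name, cfg in sorted(routes.items()):
        missing = REQUIRED_STEPS - set(cfg["steps"])
        if missing:
            problems.append(f"{name}: missing {sorted(missing)}")
        if cfg.get("redaction_on_failure") != "closed":
            problems.append(f"{name}: redaction must fail closed")
    return problems
```

Run as a pre-deploy check, this would flag the fallback route from the incident before it ever served traffic.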

So they design it as a policy, not a one-off patch. If redaction fails, the assistant returns a short template that says, “I can’t generate a suggestion right now,” and it logs the failure with the request ID for follow-up. If the fallback model is used, the system sets a visible flag in the UI so agents know the suggestion may be limited. Cion gets reliability. Mira gets truth. Support gets clarity instead of silent inconsistency.
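
The policy is small enough to sketch directly. This is a minimal Python version under stated assumptions: `build_suggestion`, `Suggestion`, and the `redact` callable are hypothetical names, and any exception from redaction (including a timeout) is treated as a failure. The key property is that text which did not pass redaction is never returned.

```python
import logging
from dataclasses import dataclass
from typing import Callable

logger = logging.getLogger("suggestions")

FALLBACK_TEMPLATE = "I can't generate a suggestion right now."


@dataclass
class Suggestion:
    text: str
    fallback_used: bool   # surfaced in the agent UI as a "may be limited" flag
    redaction_ok: bool


def build_suggestion(request_id: str, draft: str, used_fallback: bool,
                     redact: Callable[[str], str]) -> Suggestion:
    """Fail closed: never return text that did not pass redaction."""
    try:
        clean = redact(draft)
    except Exception:
        # Log with the request ID for follow-up, then return the template instead of raw text.
        logger.warning("redaction failed for request %s; returned template", request_id)
        return Suggestion(text=FALLBACK_TEMPLATE, fallback_used=used_fallback, redaction_ok=False)
    return Suggestion(text=clean, fallback_used=used_fallback, redaction_ok=True)
```

Keeping `fallback_used` separate from `redaction_ok` lets the UI show the fallback flag even when redaction succeeds.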

This is also how they handle the smaller conflicts that usually rot into resentment. Mira wants quarterly access reviews. Cion wants fewer interruptions. They compromise by making access reviews targeted: start with accounts that can change production prompts, rotate keys, alter retrieval corpora, or disable safety filters. Mira gets governance where it matters. Cion doesn’t have to chase a spreadsheet of low-risk accounts that will be wrong within a week anyway.
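
Targeting the review amounts to filtering accounts by a short list of high-risk permissions. In the sketch below the permission strings are hypothetical stand-ins for the four capabilities named above; a real system would map them to its own access model.

```python
# Hypothetical permission names for the capabilities that warrant review.
HIGH_RISK_PERMISSIONS = {
    "prompt:write_production",
    "keys:rotate",
    "retrieval:modify_corpus",
    "safety:disable_filter",
}


def accounts_to_review(accounts: dict) -> list:
    """Return (account, risky permissions) pairs, skipping low-risk accounts entirely."""
    review = []
    for name, perms in sorted(accounts.items()):
        risky = set(perms) & HIGH_RISK_PERMISSIONS
        if risky:
            review.append((name, sorted(risky)))
    return review
```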

When Mira insists on documentation, Cion insists it be written for use, not for compliance theater. A runbook must include the two commands that actually matter, the dashboard link that actually helps, and the exact page where the logs live. Mira likes that because it makes accountability practical. Cion likes it because it reduces the burden on whoever is on call next month.

They also build rituals that keep disagreement from becoming a fight. Post-incident reviews are scheduled while memories are still fresh, but not so soon that everyone is still defensive. The rule is that claims need evidence. If you think the model changed, you point to the artifact hash. If you think retrieval got worse, you point to the index build ID. If you think governance slowed delivery, you point to the specific gate and the work it required, and you propose a better one. Complaints are allowed. Vagueness is not.
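
The “claims need evidence” rule can even be checked mechanically in a review template. This sketch assumes hypothetical claim kinds and evidence field names mirroring the examples above; it reports what a claim is still missing rather than judging whether the claim is true.

```python
from dataclasses import dataclass, field

# Hypothetical mapping from claim kind to the evidence a review should demand.
REQUIRED_EVIDENCE = {
    "model_changed": ("artifact_hash",),
    "retrieval_regressed": ("index_build_id",),
    "governance_slowed_delivery": ("gate_name", "work_required", "proposed_alternative"),
}


@dataclass
class Claim:
    kind: str
    author: str
    evidence: dict = field(default_factory=dict)


def missing_evidence(claim: Claim) -> list:
    """List the evidence fields a claim still needs before the review accepts it."""
    if claim.kind not in REQUIRED_EVIDENCE:
        raise ValueError(f"no evidence rule for claim kind {claim.kind!r}")
    return [key for key in REQUIRED_EVIDENCE[claim.kind] if not claim.evidence.get(key)]
```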

Mira’s best move is that she doesn’t treat “governance” as an abstract shield. She goes to the machine room. She watches a deployment. She sits beside an engineer during a rollback and sees what it actually takes to unwind a bad change when traffic is still coming in. Cion’s best move is that he doesn’t treat “controls” as moral judgments. He asks what harm looks like, in the real world, and how quickly it can spread.

Over time, their disagreements become a kind of early warning system. Cion spots fragility in performance and dependencies. Mira spots fragility in permissions and accountability. When they disagree, it’s often because they’ve found the same weak point from different sides.

The point isn’t harmony. They still frustrate each other. Mira still asks questions that land like speed bumps. Cion still pushes for exceptions when the system is burning and the business wants answers now. The difference is that the friction produces something tangible: a trace you can read, a change record you can audit, a rule you can test, a rollback you can execute without heroics.

In a world where AI systems are stitched across networks, vendors, data pipelines, and human workflows, disagreement is inevitable. The useful version isn’t louder. It’s more specific. It leaves receipts. It turns “I don’t like this” into “here is what will break, here is who will be affected, and here is what we can do about it before we learn the hard way.”

@Mira - Trust Layer of AI $MIRA #mira #MIRA