@Mira - Trust Layer of AI

I was still at my kitchen table just after 6 a.m., with a cold mug of coffee beside my laptop, when I started reading another round of AI rollout notes. I care about this now because the tools are leaving the demo stage and moving into real work. Can they really hold up there?

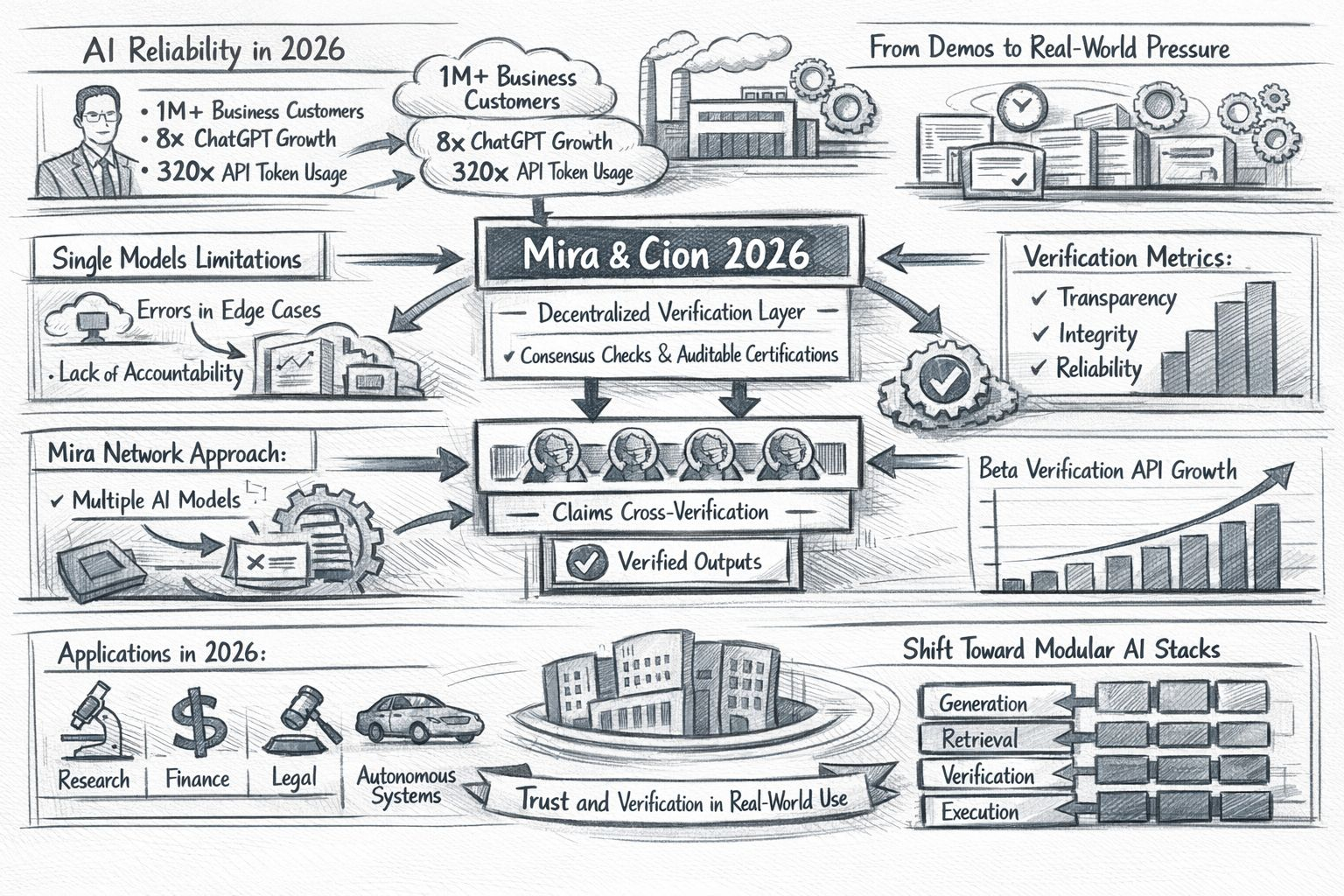

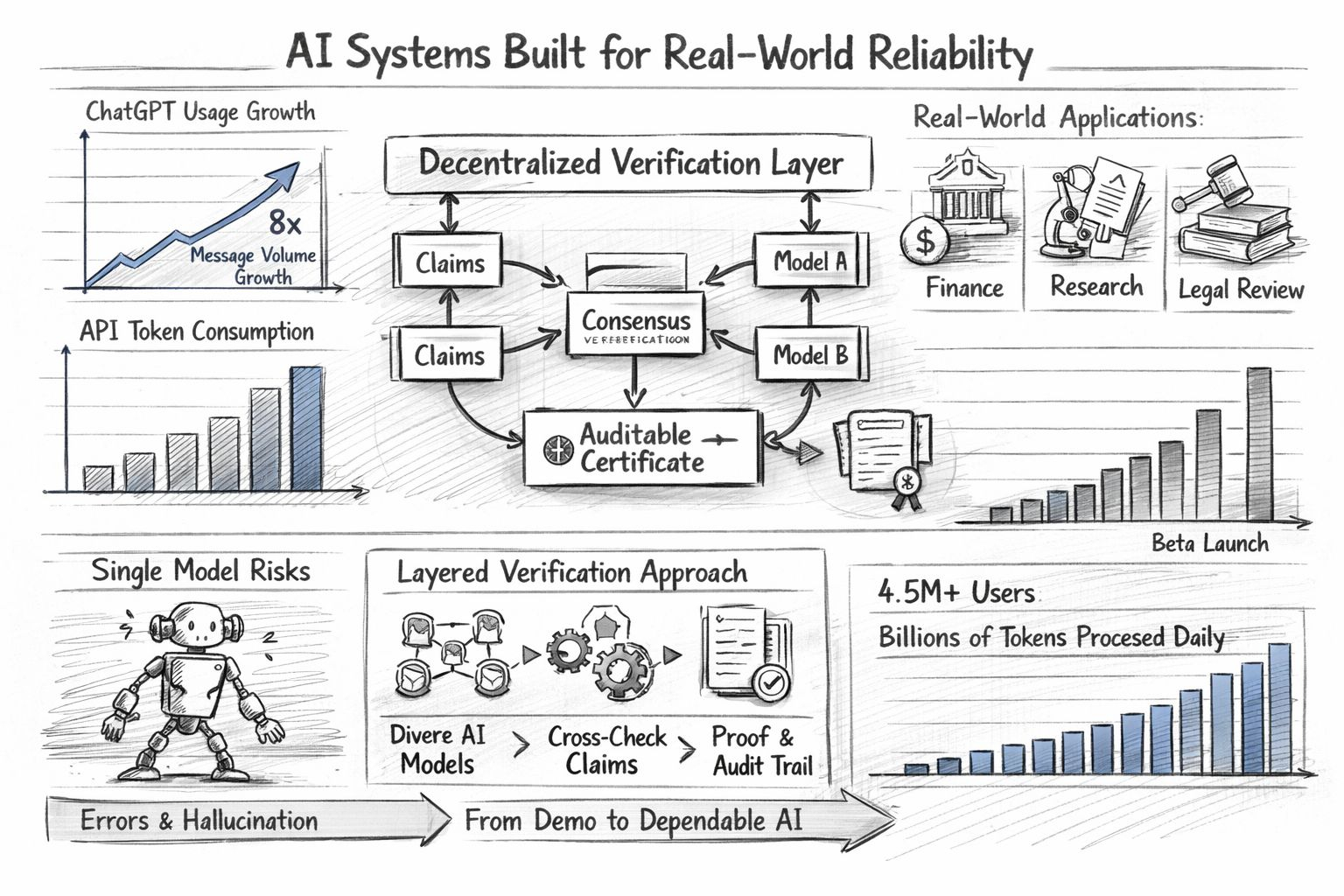

I keep coming back to that question because the market has changed shape. OpenAI says more than 1 million business customers now use its tools, and its 2025 enterprise report says ChatGPT message volume grew 8x while API reasoning token consumption per organization rose 320x year over year. Around the same time, OpenAI also started releasing more building blocks for agents and described the challenge in simple terms: making them useful and reliable in production. That is why this topic is getting attention now. I do not think people are only chasing smarter chat. I think they are trying to find out whether AI can be trusted inside ordinary work, where deadlines are real and data is often uneven.

That shift makes Mira Network more relevant to me than many louder projects. What stands out is that Mira is not trying to win the usual model race. Its public materials describe a decentralized verification layer that turns outputs into independently verifiable claims and then asks multiple AI models to check those claims through consensus. For Mira Verify, the company says specialized models cross-check each claim and produce auditable certificates covering every step from input to consensus. I read that as an effort to move AI reliability away from a single model's confidence and toward a repeatable process.

I think that distinction matters because the weakness in today’s systems is already well known. NIST continues to frame trustworthy AI around accountability, transparency, safety, validity, and reliability, while tying information integrity to evidence verification and a clear chain of custody. The World Economic Forum has been making a related point from the security side: as AI systems become more active inside organizations, governance and visibility are starting to look like basic operating needs rather than a final layer of polish. That is the gap where Mira seems most relevant.

What gives Mira more weight for me is that its argument is technical rather than rhetorical. In its whitepaper, Mira says single models run into a hard reliability boundary, especially on edge cases and unfamiliar situations, which makes them a weak fit for autonomous systems exposed to messy real-world conditions. Its answer is to break complex outputs into structured claims and distribute those claims across diverse verifier models before attaching cryptographic proof to the result. I do not see that as a magic fix. I see it as a serious attempt to narrow the places where systems fail.
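
To make that flow concrete for myself, I wrote a minimal sketch of the general idea: split an output into claims, let several independent verifiers vote on each one, and fingerprint the full record. This is not Mira's implementation or API. The function names, the sentence-based claim splitting, the simple vote quorum, and the SHA-256 digest (a stand-in for whatever cryptographic proof Mira actually attaches) are all my own assumptions for illustration.

```python
import hashlib
import json
from typing import Callable, Dict, List

# Hypothetical verifier type: takes a claim, returns True if it accepts it.
Verifier = Callable[[str], bool]


def split_into_claims(output: str) -> List[str]:
    """Naive claim extraction (assumption): treat each sentence as one claim."""
    return [part.strip() for part in output.split(".") if part.strip()]


def verify_claim(claim: str, verifiers: List[Verifier], quorum: int) -> Dict:
    """Collect one vote per verifier; accept the claim if enough approve."""
    votes = [bool(verifier(claim)) for verifier in verifiers]
    return {"claim": claim, "votes": votes, "accepted": sum(votes) >= quorum}


def certify(output: str, verifiers: List[Verifier], quorum: int) -> Dict:
    """Verify every claim, then hash the whole record so it can be audited.

    The digest only makes tampering detectable; it is a placeholder,
    not a signature or a consensus proof.
    """
    results = [verify_claim(c, verifiers, quorum) for c in split_into_claims(output)]
    record = json.dumps({"output": output, "results": results}, sort_keys=True)
    digest = hashlib.sha256(record.encode("utf-8")).hexdigest()
    return {"results": results, "digest": digest}


if __name__ == "__main__":
    # Toy verifiers standing in for diverse models (purely illustrative).
    toy_verifiers = [
        lambda claim: True,
        lambda claim: "Paris" in claim,
        lambda claim: False,
    ]
    certificate = certify(
        "Paris is in France. The moon is cheese.", toy_verifiers, quorum=2
    )
    for result in certificate["results"]:
        print(result["claim"], "->", result["accepted"])
    print(certificate["digest"])
```

What I like about even this toy version is that a rejected claim is visible on its own line in the record, instead of being buried inside one fluent paragraph.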

The 2026 update, at least from where I sit, is that Mira looks less like a concept deck and more like operating infrastructure. Its official materials now point to a beta verification API for autonomous applications, and Mira has also said its broader ecosystem serves more than 4.5 million users while processing billions of tokens each day. I am always careful with growth numbers from any project, but even with that caution, this suggests movement beyond theory. Mira also describes developers building domain-specific uses across education, research, and other specialized workflows, which is where verification starts to feel practical instead of abstract. I also notice that this fits the broader movement toward modular AI stacks, where generation, retrieval, verification, and execution are treated as separate layers instead of being forced through one opaque model every time.
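
Here is how I picture that kind of modular stack, as a rough sketch rather than anything taken from Mira or another vendor. The `LayeredPipeline` class and its four pluggable stages are hypothetical names I made up. The only point is that verification sits between generation and execution as its own replaceable step, and that each stage leaves a log entry.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Optional


@dataclass
class LayeredPipeline:
    """Hypothetical four-layer stack; each stage is a swappable function."""

    retrieve: Callable[[str], List[str]]        # task -> supporting documents
    generate: Callable[[str, List[str]], str]   # task + documents -> draft
    verify: Callable[[str], bool]               # draft -> pass or fail
    execute: Callable[[str], str]               # verified draft -> action result
    log: List[str] = field(default_factory=list)

    def run(self, task: str) -> Optional[str]:
        context = self.retrieve(task)
        self.log.append(f"retrieved {len(context)} documents")

        draft = self.generate(task, context)
        self.log.append("generated draft")

        # Execution is gated on verification; a failed check stops the run.
        if not self.verify(draft):
            self.log.append("verification failed; execution skipped")
            return None

        self.log.append("verification passed")
        return self.execute(draft)
```

In this arrangement, swapping in a stricter verifier does not require touching the generator at all, which is the practical appeal of keeping the layers separate.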

I should be honest about one thing. I still could not confirm a clear public 2026 record for Cion with the same level of confidence, so I do not want to invent details that I cannot support. What I can say is that the Mira side of this story already points to the larger shift that matters. I am seeing open AI systems slowly stop behaving like single all-knowing engines and start looking more like layered networks with checks, logging, incentives, and audit trails. That direction feels healthier to me because it accepts a simple fact: intelligence on its own is not enough.

I do not think reliability will come from one model suddenly becoming flawless. I think it will come from architecture that expects pressure, disagreement, drift, and abuse and still keeps working. That is why Mira Network feels timely to me in 2026. It is trying to make verification a native part of AI operation rather than a cleanup step after something goes wrong. I care about that because once AI starts handling research, finance, legal review, or autonomous action, the real test is not whether it sounds smart. The real test is whether it stays dependable when the room gets noisy.