Artificial intelligence feels magical sometimes. It can write a poem in seconds, summarize a novel, or even analyze markets better than some humans. Yet there’s a catch: AI often speaks with absolute confidence, even when it’s wrong. That confidence creates a strange kind of danger. A wrong answer sounds exactly like a right one, so you can’t always tell fact from fiction. You might trust a model to help with research, only to discover later that it hallucinated an entire citation or misread a legal precedent. This is where Mira Network steps in: not to make AI smarter, but to make it trustworthy.

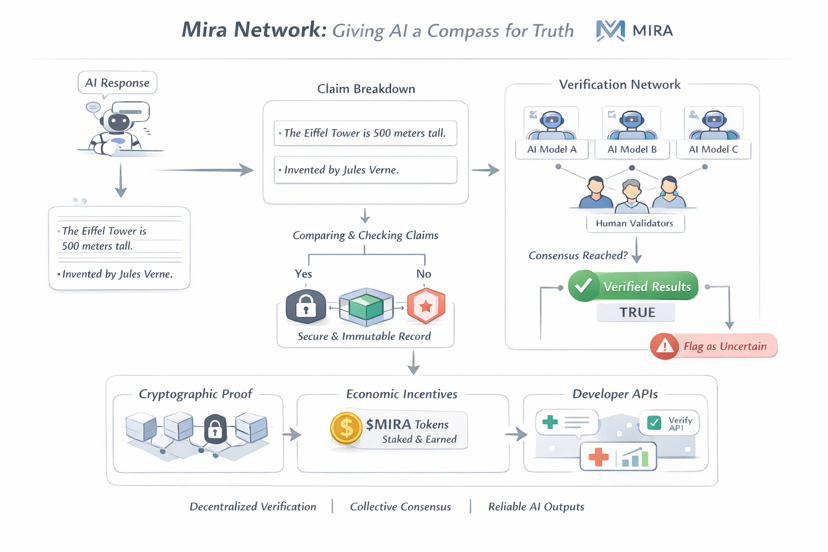

Mira isn’t about creating a single, flawless AI. Instead, it’s about building a network that checks AI’s work. Think of it as a neighborhood of fact-checkers for machines. When an AI generates a response, Mira breaks that answer into smaller, individually checkable statements called claims. These claims aren’t passed along immediately. Instead, each one is sent to a network of independent AI models and validators for examination. If a majority agree that the claim is accurate, it’s stamped as verified. If they fail to reach that agreement, the system flags it. Finally, every verified claim is recorded with a cryptographic proof, essentially a digital seal of trust.
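The claim-by-claim flow described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not Mira’s implementation. The sentence-based `split_into_claims`, the toy knowledge-set validators, the simple-majority `quorum`, and the SHA-256 digest standing in for a cryptographic proof are all hypothetical choices made for this example.

```python
import hashlib
import json
from dataclasses import dataclass
from typing import Callable, Optional

# A validator judges one claim and returns True if it accepts it.
# Hypothetical: in Mira these would be independent AI models.
Validator = Callable[[str], bool]


@dataclass
class ClaimResult:
    claim: str
    votes: list[bool]
    status: str             # "verified" or "flagged"
    proof: Optional[str]    # SHA-256 digest, a stand-in for a real certificate


def split_into_claims(answer: str) -> list[str]:
    """Naive claim extraction (assumption): treat each sentence as one claim."""
    return [s.strip() for s in answer.split(".") if s.strip()]


def verify_claim(claim: str, validators: list[Validator], quorum: float = 0.5) -> ClaimResult:
    """Collect one vote per validator; verify only if the 'yes' share exceeds quorum."""
    votes = [validator(claim) for validator in validators]
    if sum(votes) / len(votes) > quorum:
        # Hash the claim together with its votes so later tampering is detectable.
        record = json.dumps({"claim": claim, "votes": votes}, sort_keys=True)
        return ClaimResult(claim, votes, "verified", hashlib.sha256(record.encode()).hexdigest())
    return ClaimResult(claim, votes, "flagged", None)


def make_validator(known_facts: set[str]) -> Validator:
    """Toy validator that accepts only claims found in its own knowledge set."""
    return lambda claim: claim in known_facts


# Three toy validators with overlapping but different "training".
validators = [
    make_validator({"Paris is the capital of France", "Water boils at 100 C at sea level"}),
    make_validator({"Paris is the capital of France"}),
    make_validator({"Paris is the capital of France", "Water boils at 100 C at sea level"}),
]

answer = "Paris is the capital of France. Water boils at 100 C at sea level. The Moon is made of cheese."
results = [verify_claim(c, validators) for c in split_into_claims(answer)]
for r in results:
    print(r.status, r.votes, r.claim)
# verified [True, True, True] Paris is the capital of France
# verified [True, False, True] Water boils at 100 C at sea level
# flagged [False, False, False] The Moon is made of cheese
```

A bare hash only lets someone detect later changes to the stored record. It says nothing about who cast the votes, so a production system would need validator signatures or a similar attestation on top.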

Mira’s strength lies in its approach to diversity and consensus. Different AI models have different training data, biases, and weaknesses, so they tend not to make the same mistakes. By combining their judgments, the network reduces the chance that any one model’s error goes unchecked. No single model gets the final word on what is true. Mira treats reliability as a collective effort, like a choir where each voice balances the others, producing harmony instead of noise.
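Why does diversity help? A standard way to see it is the majority-vote (Condorcet jury) calculation: if each of n validators is wrong with probability p, and their errors are independent, the chance that a strict majority is wrong at the same time shrinks quickly as n grows. The sketch below assumes independence, which diverse models only approximate. If every validator shared the same blind spot, the majority would be wrong exactly as often as a single model.

```python
from math import comb


def majority_error(n: int, p: float) -> float:
    """Chance that a strict majority of n validators is wrong at once.

    Assumes each validator errs independently with probability p, so the
    number of wrong votes follows a binomial distribution.
    """
    k_min = n // 2 + 1  # smallest number of wrong votes that forms a majority
    return sum(comb(n, k) * p**k * (1 - p) ** (n - k) for k in range(k_min, n + 1))


for n in (1, 3, 5):
    print(n, f"{majority_error(n, 0.1):.5f}")
# 1 0.10000
# 3 0.02800
# 5 0.00856
```

With a 10% individual error rate, moving from one validator to five cuts the chance of a wrong majority verdict from 10% to under 1%, but only to the extent that the validators’ errors really are independent.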

The network also uses economic incentives to encourage honesty. Participants stake the project’s token, $MIRA, to take part in verification. Accurate validators earn rewards, while dishonest actors risk losing their stake. Telling the truth becomes the economically rational choice, not just an abstract ideal. Applications that use Mira’s system pay for verification, and token holders participate in governance, helping shape the network’s rules and evolution.
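The reward-and-penalty loop can be illustrated with a toy settlement round. Everything here is an assumption for illustration: the `Staker` record, the flat `REWARD_PER_ROUND`, the 10% `SLASH_BPS` penalty, and the rule that disagreeing with the majority verdict counts as inaccurate. Mira’s actual reward and slashing rules may differ, and treating every minority vote as dishonest is a simplification, since an honest validator can sometimes land in the minority.

```python
from dataclasses import dataclass

# Hypothetical parameters chosen for illustration; not Mira's real values.
REWARD_PER_ROUND = 5   # tokens paid to each validator that matches the verdict
SLASH_BPS = 1_000      # stake cut for a mismatch, in basis points (1_000 = 10%)


@dataclass
class Staker:
    name: str
    stake: int         # tokens locked in order to participate
    rewards: int = 0   # tokens earned so far


def settle_round(stakers: list[Staker], votes: dict[str, bool]) -> bool:
    """Settle one verification round and return the majority verdict.

    Validators who voted with the majority are rewarded; the others lose
    SLASH_BPS of their stake. A tie counts as "not verified" here.
    """
    yes_votes = sum(votes[s.name] for s in stakers)
    verdict = yes_votes * 2 > len(stakers)
    for s in stakers:
        if votes[s.name] == verdict:
            s.rewards += REWARD_PER_ROUND
        else:
            s.stake -= s.stake * SLASH_BPS // 10_000
    return verdict


stakers = [Staker("alice", 1_000), Staker("bob", 1_000), Staker("carol", 1_000)]
settle_round(stakers, {"alice": True, "bob": True, "carol": False})
for s in stakers:
    print(s.name, s.stake, s.rewards)
# alice 1000 5
# bob 1000 5
# carol 900 0
```

Integer token units and basis points keep balances free of floating-point rounding, a common convention in token accounting.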

For developers, Mira offers something rare: a layer that handles verification automatically. Instead of building complex systems to check AI outputs themselves, they can call Mira’s APIs either to generate answers that are already verified or to run verification separately on existing AI-generated content. Industries where accuracy matters, such as healthcare, finance, and research, can rely on AI outputs with far less fear of unseen errors.

Mira has moved quickly from concept to reality. Its mainnet launch in 2025 turned the idea into a working system capable of processing millions of verification requests. Early test phases attracted hundreds of thousands of users who participated in the verification process, helping refine how claims are evaluated and consensus is reached. The ecosystem has grown to include not just developers but also everyday users, who can interact with AI while contributing to the reliability of the network.

What sets Mira apart is not just the technology but the philosophy behind it. Most AI today operates on a “trust me” model: you take what it says at face value. Mira flips that on its head and says, “prove it first.” By shifting the burden from belief to verification, the network introduces a level of accountability that could become essential as AI begins influencing decisions in law, medicine, finance, and governance.

The bigger picture is even more intriguing. Mira could become the standard backbone for trustworthy AI. Autonomous agents, research platforms, and decision-making systems could all rely on a verification layer to check that what they act on is accurate. In this world, the most important AI infrastructure might not be the models themselves, but the networks that check and validate them.

Mira Network reminds us that AI doesn’t have to be perfect to be useful—it just needs to be honest about what it knows. By combining decentralized consensus, economic incentives, and cryptographic verification, it’s giving artificial intelligence something it has never had before: a compass for truth. In an age where machines shape knowledge, decisions, and communication, that compass might be the most valuable tool of all.