I had a Mira permission claim clear earlier today, and instead of relaxing I reopened the tabs.

Green badge. Closed receipt. No obvious dispute. For a second I almost let it pass.

Then I checked the uncomfortable part: who had actually tried to make it fail.

That was the hitch.

The network had confirmed the line. Almost nobody had really pushed on the version most likely to break.

That’s the seam on Mira I keep coming back to.

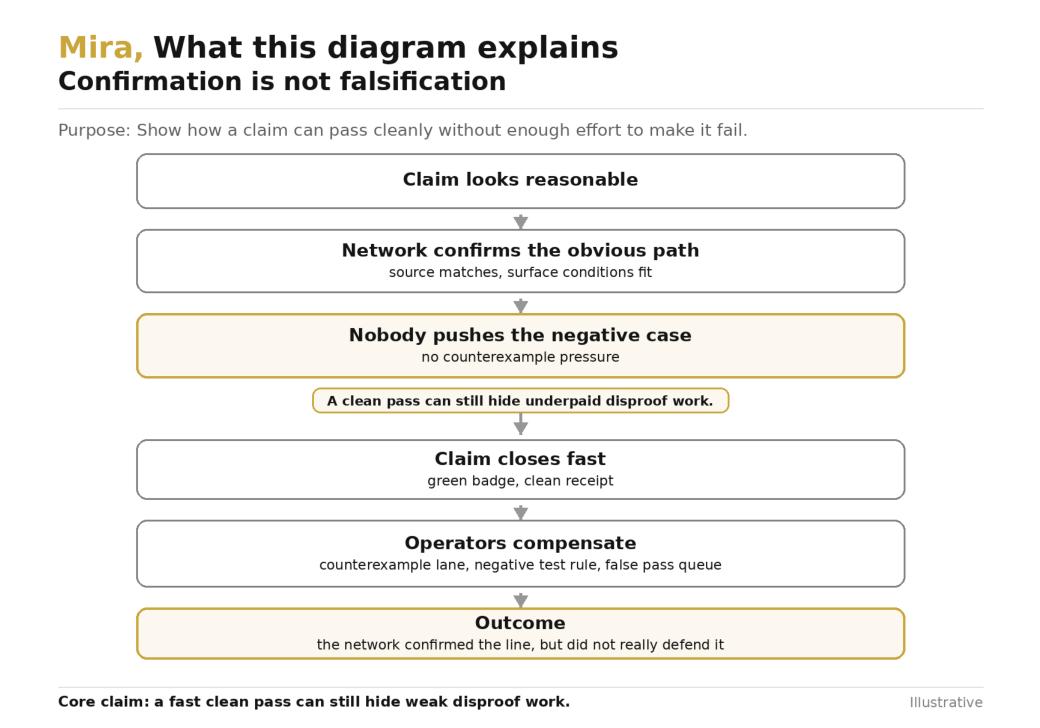

A network can get very good at confirming things that already look reasonable. That does not mean it’s equally good at disproving them. And those are different kinds of work.

Mira is easy to like from the outside. Take an output, break it into verifiable claims, send those claims across independent verifiers, then close what stands through proof and consensus. That’s much better than asking people to trust one big undifferentiated answer. Claims are cleaner. Receipts are cleaner. Responsibility is easier to localize.

But once a system is built around claims, one question starts mattering more than it sounds.

What kind of effort is the network actually paying for?

I ran into this on a claim that looked routine enough to clear quickly. The positive case was easy to check. The cited source looked consistent. The surface conditions matched. The line fit the rest of the bundle cleanly enough that nobody had to struggle with it. What never really happened was serious negative work. Nobody went hunting for the counterexample that would force the claim to fail. Nobody pushed on the part most likely to break the frame. The network had confirmed a plausible line. It had not really disproved its dangerous version.

That is not a small distinction.

A claim can pass because it is true.

A claim can also pass because nobody spent enough effort proving it false.

Those are not the same outcome.

A claim nobody seriously tried to break is not a claim the network really defended.

This matters more on Mira than in a normal model-quality conversation, because Mira is not just trying to generate decent answers. It is trying to make verification operational. Once a receipt exists, people start treating the line as something that survived adversarial attention. That is a much stronger meaning than a few verifiers finding it acceptable.

And that stronger meaning only holds if the system pays for negative work, not just positive confirmation.

Confirmation is usually cheaper. It follows the shape of the claim. It checks whether the cited pieces line up. It asks whether the line still looks reasonable under a straightforward reading.

Disproof is different.

Disproof has to look for the awkward branch. The missed condition. The hidden exception. The stale assumption. The part of the line that only breaks when you press on the least convenient interpretation. It is slower. It is uglier. It is much easier to underpay.

That is where the drift begins.

If Mira rewards clean closure more than costly challenge work, the network starts learning a very predictable habit. It gets better at confirming what already looks confirmable. Claims that survive are not necessarily the claims most resistant to falsification. They are the claims least likely to trigger expensive negative exploration.

That kind of system still looks healthy on the surface.

The receipts keep arriving.

The dispute count stays calm.

The dashboard stays green.

Meanwhile the hard work starts moving somewhere else.

You can usually see it in the coping layers before anyone says it out loud.

First comes a counterexample lane for high-impact claims, because somebody realizes the default path is too confirmation-heavy. Then a negative-test requirement appears for certain claim classes. Then a local rule says a claim is not really done until one verifier has attempted a structured disproof pass. Then a false-pass queue shows up for the claims that cleared the protocol path but still made operators uneasy enough to rerun them under tighter conditions.

That is the tell.

The shared layer confirmed the line.

The operator still had to ask who tried to break it.

Once that happens, trust starts to split.

The protocol certifies that a claim passed its available checks.

The integrator privately certifies whether those checks included enough adversarial pressure to matter.

And private falsification discipline is where quiet centralization starts growing. Teams with better negative testing, better counterexample search, and better appetite for costly challenge work get safer automation. Everyone else gets a verified badge that still depends on a gut check.

That’s a bad place for a trust layer to end up.

Because a trust layer is not supposed to stop at “nothing looked wrong enough to fail.” It is supposed to narrow the space of what still needs private skepticism afterward.

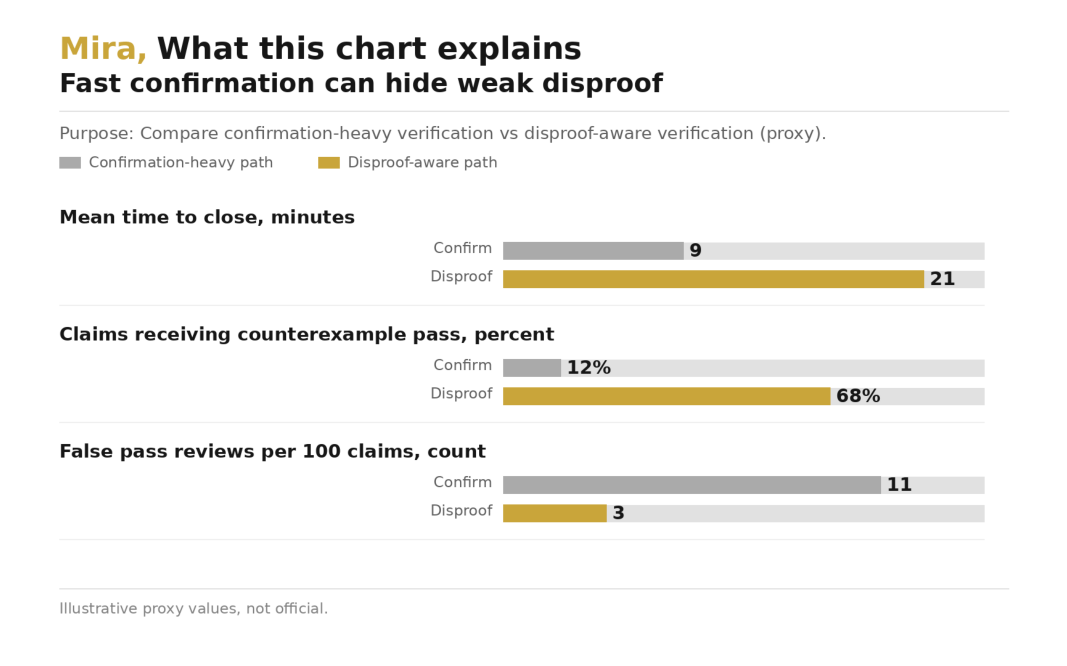

That’s why I don’t think confirmation and falsification can sit under one vague word like “verification” and be treated as equivalent. They are not equivalent in cost. They are not equivalent in latency. And they are definitely not equivalent in what they buy you operationally.

A system can be excellent at confirmation and still be weak exactly where serious users need it most.

At falsification.

The trade here is real and not especially pretty.

Push hard on counterexamples, and the network gets slower, more expensive, and more contentious. More lines enter challenge work. More receipts stay open longer. More verifiers spend time trying to break claims that would probably have been fine. Builders complain that obvious truths now take too much effort to close. They will not be entirely wrong.

Underpay negative work, and the opposite happens. Closure looks fast. The protocol feels smooth. The pretty metrics stay pretty. But the burden of asking “what would make this fail?” moves into app logic, human review, and private post-verification checks. The system gets cleaner right up until it matters.

You do not get to escape that bill.

You only choose where it lands.

If Mira carries it, falsification has to become a first-class part of the design. Not as vague adversarial theater. As something measurable and routine. A claim class that requires negative work should say so. A verifier that attempts serious disproof should be distinguishable from one that only confirms the obvious path. A receipt should make clear whether the line survived direct counterexample pressure or just passed a confirmation sweep.
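To make that less abstract, here is a minimal sketch of what such a receipt could look like. Everything in it is hypothetical: the names `Work`, `Attestation`, `Receipt`, and `requires_negative_work` are my own illustration, not Mira's actual schema or API. The only point is that the receipt records which kind of effort each verifier spent, and that a claim class declaring a need for negative work cannot close on confirmations alone.

```python
from dataclasses import dataclass, field
from enum import Enum


class Work(Enum):
    """Kind of effort a verifier spent (hypothetical labels)."""
    CONFIRM = "confirm"            # checked the obvious reading
    DISPROVE = "disprove_attempt"  # actively searched for a counterexample


@dataclass(frozen=True)
class Attestation:
    verifier: str
    work: Work
    claim_held: bool  # True if the claim survived this verifier's check


@dataclass
class Receipt:
    claim_id: str
    requires_negative_work: bool  # declared by the claim class, not guessed later
    attestations: list[Attestation] = field(default_factory=list)

    def pressure(self) -> str:
        """Summarize what kind of effort the claim actually survived."""
        if any(not a.claim_held for a in self.attestations):
            return "failed"
        if any(a.work is Work.DISPROVE for a in self.attestations):
            return "survived_disproof_attempt"
        if self.attestations:
            return "confirmation_only"
        return "unchecked"

    def can_close(self) -> bool:
        """Claim classes that demand negative work cannot close on confirmations alone."""
        level = self.pressure()
        if self.requires_negative_work:
            return level == "survived_disproof_attempt"
        return level in ("confirmation_only", "survived_disproof_attempt")
```

With this shape, a permission claim that three verifiers confirmed and nobody tried to break stays open and reads as `confirmation_only`, which is exactly the information the green badge hides today.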

Without that, the network will slowly train users to overread what a green badge means. And once users start overreading receipts, the app layer will compensate by building private negative-case rules behind the protocol.

Then the same pattern returns.

The protocol certifies surface safety.

The serious team certifies failure resistance.

That is not shared trust.

That is shared confirmation and private skepticism.

What makes this especially important on Mira is that claim-level verification can hide this weakness surprisingly well. A claim does not need to be false to deserve a disproof pass. It only needs enough leverage downstream that a missed negative case becomes expensive later. Money movement. Permissions. Irreversible actions. Claims like that do not just need support.

They need pressure.

And pressure costs.

That’s where $MIRA earns relevance for me, if it earns relevance at all. Not as decoration around higher throughput. As operating capital for negative work. Counterexample search. Challenge depth. Longer open windows where needed. The boring machinery that makes it rational to pay for disproving clean-looking claims before they harden into trusted receipts. If incentives only reward clean confirmation, the network will get good at agreeing with itself. If it has to pay for falsification at the right points, then “verified” can start meaning something harder to fake.

The checks I care about here are not glamorous.

When Mira is under load, do high-impact claims still get real counterexample pressure, or does negative work quietly shrink while confirmations stay fast? Do teams delete their private false-pass queues, or do those queues become the real product? And when a claim closes cleanly, can the receipt tell me whether the network only confirmed the obvious reading, or whether somebody actually tried to make it fail?
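The first of those checks can be turned into a number. The sketch below assumes a log of closed claims with three hypothetical fields (`high_impact`, `disproof_attempts`, `load`); none of these are real Mira fields, and the sample data is made up purely to show the calculation. It computes, per load bucket, the share of high-impact claims that closed with at least one disproof attempt.

```python
from collections import defaultdict


def disproof_coverage(closed_claims):
    """Share of high-impact closed claims with >= 1 disproof attempt, per load bucket.

    closed_claims: iterable of dicts with hypothetical keys
      'high_impact' (bool), 'disproof_attempts' (int), 'load' (str, e.g. 'normal' or 'peak')
    """
    totals = defaultdict(int)
    pressured = defaultdict(int)
    for claim in closed_claims:
        if not claim["high_impact"]:
            continue  # routine claims are out of scope for this check
        totals[claim["load"]] += 1
        if claim["disproof_attempts"] > 0:
            pressured[claim["load"]] += 1
    return {load: pressured[load] / totals[load] for load in totals}


# Made-up sample, for illustration only.
sample = [
    {"high_impact": True,  "disproof_attempts": 2, "load": "normal"},
    {"high_impact": True,  "disproof_attempts": 1, "load": "normal"},
    {"high_impact": True,  "disproof_attempts": 1, "load": "peak"},
    {"high_impact": True,  "disproof_attempts": 0, "load": "peak"},
    {"high_impact": False, "disproof_attempts": 0, "load": "peak"},
]
coverage = disproof_coverage(sample)
```

If the peak-load figure sits well below the normal-load one while closure times stay flat, negative work is the thing being squeezed.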

If Mira can carry that burden inside the shared layer, it gets closer to being a trust layer.

If not, it is not reducing uncertainty.

It is certifying whatever nobody paid enough to break.