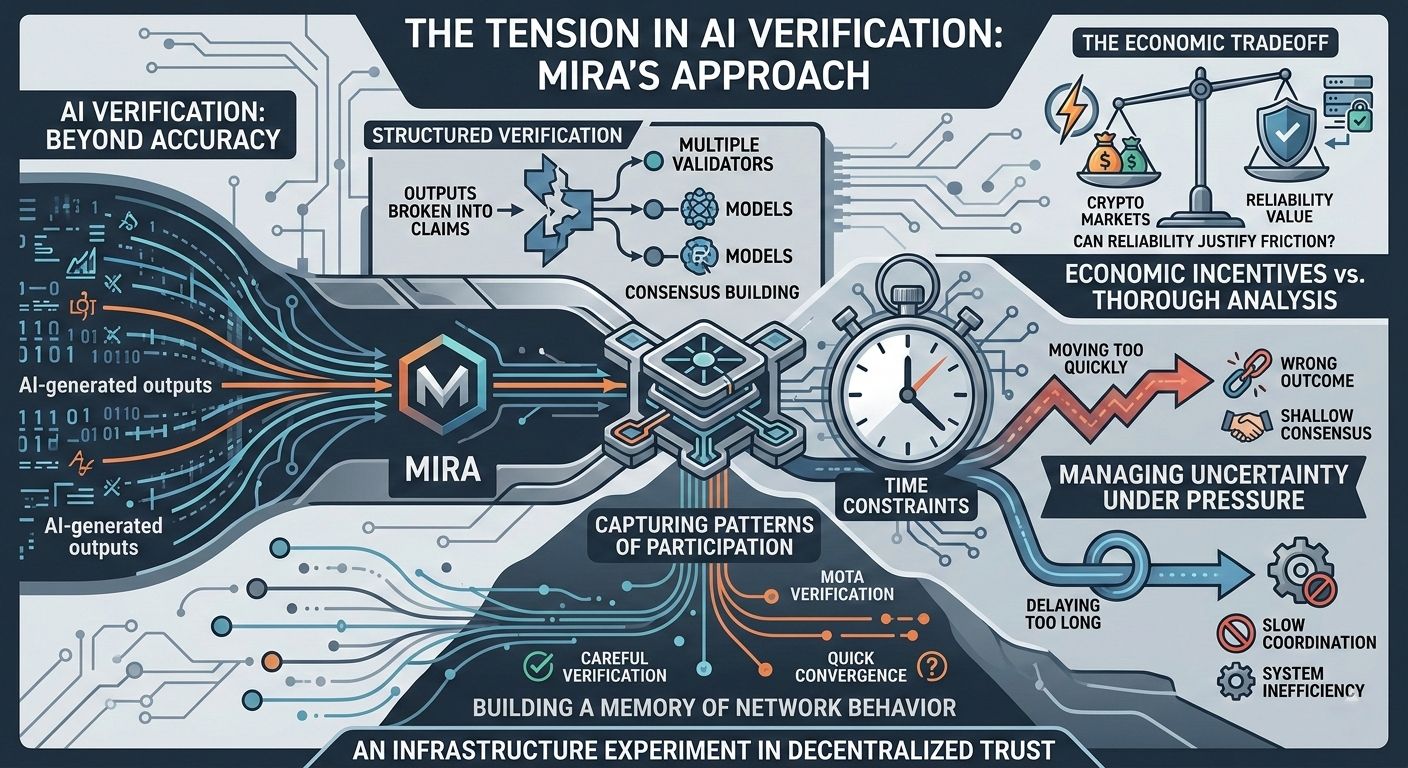

Most discussions of AI verification focus on accuracy. The assumption is simple: generate an answer, verify it, and then trust the result. But real systems rarely operate in such clean conditions.

In practice, networks operate under pressure. Verification takes time, but decisions often cannot wait. A system that delays too long risks slowing coordination, while a system that moves too quickly risks validating the wrong outcome. The real challenge is not verification alone but how a network manages uncertainty under time constraints.

This tension is where Mira becomes interesting.

Mira’s model introduces structured verification, where outputs are broken into smaller claims and evaluated across multiple validators and models. The system attempts to build consensus before a final result is accepted. In theory, this creates a stronger trust layer for AI-generated outputs.
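To make the idea concrete, here is a minimal sketch of claim-level consensus. It is not Mira's actual implementation: the function names, the `"valid"`/`"invalid"` verdict labels, the two-thirds threshold, and the rule that every claim must pass are all assumptions chosen for illustration.

```python
from collections import Counter

def verify_claim(votes, threshold=2 / 3):
    """Return the majority verdict if its share meets the threshold, else None.

    votes maps a (hypothetical) validator id to its verdict for one claim.
    """
    if not votes:
        return None
    verdict, count = Counter(votes.values()).most_common(1)[0]
    return verdict if count / len(votes) >= threshold else None

def verify_output(claim_votes, threshold=2 / 3):
    """Accept an output only if every claim reaches a 'valid' consensus (assumed rule)."""
    results = {claim: verify_claim(v, threshold) for claim, v in claim_votes.items()}
    accepted = all(r == "valid" for r in results.values())
    return accepted, results

# One output split into three claims, each judged by three validators.
claim_votes = {
    "claim_a": {"v1": "valid", "v2": "valid", "v3": "valid"},
    "claim_b": {"v1": "valid", "v2": "invalid", "v3": "valid"},
    "claim_c": {"v1": "valid", "v2": "invalid", "v3": "invalid"},
}
accepted, results = verify_output(claim_votes)
print(accepted, results)
```

In this toy run the output is rejected because one claim reaches an "invalid" consensus, even though the other two pass. Checking claims one at a time is what lets a single weak claim surface instead of being averaged away.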

However, verification inside a live network introduces a different problem: timing.

A network cannot wait indefinitely for perfect certainty. At some point it must finalize a decision. That decision determines which validators are rewarded, which are penalized, and how the system continues moving forward.

If that moment arrives too early, the network risks penalizing careful analysis simply because deeper verification required more time. If it arrives too late, the system becomes inefficient and loses economic momentum.
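The effect of the finalization moment can be shown with a small sketch. Everything here is hypothetical: the `finalize` function, the deadline and quorum parameters, and the sample timings are illustrative assumptions, not a description of how Mira schedules finality.

```python
from collections import Counter

def finalize(submissions, deadline, quorum):
    """Finalize using only verdicts submitted by the deadline.

    submissions: list of (validator, verdict, seconds_taken).
    Returns (verdict, late_validators); verdict is None if fewer than
    `quorum` verdicts arrived in time.
    """
    on_time = [(name, verdict) for name, verdict, t in submissions if t <= deadline]
    late = [name for name, _, t in submissions if t > deadline]
    if len(on_time) < quorum:
        return None, late
    verdict = Counter(v for _, v in on_time).most_common(1)[0][0]
    return verdict, late

# Two quick validators and three slower ones that looked deeper.
submissions = [
    ("fast_1", "valid", 2.0),
    ("fast_2", "valid", 3.0),
    ("careful_1", "invalid", 9.0),
    ("careful_2", "invalid", 10.0),
    ("careful_3", "invalid", 11.0),
]
early = finalize(submissions, deadline=5.0, quorum=2)
patient = finalize(submissions, deadline=12.0, quorum=2)
print(early)
print(patient)
```

With the short deadline, the two fast validators decide the outcome and the three careful ones are recorded as late. With the longer deadline, the same submissions produce the opposite verdict. The deadline alone changes both the result and who would be rewarded.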

The cost of deciding too soon is therefore not only technical. It is behavioral.

Participants quickly learn what the system rewards. If speed consistently wins over careful verification, actors will optimize for speed. Over time this can create shallow consensus, where agreement forms quickly but accuracy quietly deteriorates.

Mira attempts to address this by observing more than just final answers. The network also captures how validators participate. Each interaction leaves a behavioral trace, and over many rounds those traces begin to reveal patterns.

Some participants consistently verify claims carefully. Others may converge toward consensus too quickly. Distinguishing between these behaviors is critical because decentralized systems rely less on single events and more on long-term patterns.
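One way such long-term patterns could be tracked is sketched below. The `ValidatorHistory` class, its fields, and the idea that each round later yields a `was_correct` signal are assumptions made for illustration; the source does not say which signals Mira records.

```python
from dataclasses import dataclass, field
from statistics import mean

@dataclass
class ValidatorHistory:
    """Per-validator record accumulated over many rounds (hypothetical schema)."""
    latencies: list = field(default_factory=list)
    correct: list = field(default_factory=list)

    def record(self, seconds_taken, was_correct):
        self.latencies.append(seconds_taken)
        self.correct.append(was_correct)

    def summary(self):
        if not self.correct:
            return {"rounds": 0, "mean_latency": None, "accuracy": None}
        return {
            "rounds": len(self.correct),
            "mean_latency": mean(self.latencies),
            "accuracy": sum(self.correct) / len(self.correct),
        }

careful = ValidatorHistory()
hasty = ValidatorHistory()
for secs, ok in [(9.0, True), (10.0, True), (11.0, True)]:
    careful.record(secs, ok)
for secs, ok in [(2.0, True), (2.0, False), (2.0, False)]:
    hasty.record(secs, ok)

print(careful.summary())
print(hasty.summary())
```

No single round separates these two validators reliably, but the accumulated summaries do: one is slower and consistently right, the other is fast and right only a third of the time.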

This approach suggests that Mira is not only verifying outputs. It is gradually building a memory of network behavior.

That design choice gives the system a heavier role than simple AI validation layers. Instead of acting as a passive verification tool, the network becomes an active mechanism for shaping incentives around how verification occurs.

Yet the system still faces a difficult contradiction.

Crypto markets reward speed and rapid interpretation. Careful verification introduces friction, additional steps, and higher operational cost. For a verification network to succeed, the value of reliability must become large enough to justify that friction.

That is the economic question Mira ultimately has to answer.

If AI systems continue expanding into research, automation, and financial decision-making, then reliability becomes more valuable than raw output generation. In those environments the cost of being wrong can exceed the cost of moving more slowly.
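That trade-off can be written as a simple expected-cost comparison. The symbols are assumptions introduced here for illustration: $p_0$ is the probability of acting on a wrong output without verification, $p_1$ is that probability with verification, $C_{\text{error}}$ is the cost of acting on a wrong output, and $C_{\text{delay}}$ is the cost of the time and fees verification adds. Under this simplified view, verification pays for itself when:

```latex
(p_0 - p_1)\, C_{\text{error}} > C_{\text{delay}}
```

When errors are cheap, the left side stays small and speed wins. As AI outputs feed into higher-stakes decisions, $C_{\text{error}}$ grows and the same reduction in error probability justifies more delay.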

Under those conditions, structured verification networks may become a necessary layer rather than an optional improvement.

Mira positions itself around that possibility.

Not as a guarantee of perfect truth, but as an infrastructure experiment testing whether decentralized verification can create stronger trust in machine-generated outputs.

If that layer becomes essential, networks that organize verification effectively may capture a significant part of the AI infrastructure stack.


Disclaimer: This article reflects analytical observations and research perspective only. It does not constitute financial advice. Always conduct independent research before making investment decisions.