I have been watching the slow convergence of AI and crypto from the vantage point of a student and an analyst, and I keep returning to a single unease: AI feels powerful and useful, yet fragile when its outputs are taken at face value. My first encounters with AI in research and in tooling often delivered answers that sounded convincing but that, on inspection, required careful verification. That reality nudged me toward projects that try to build infrastructural guardrails rather than marketing narratives.

@Mira - Trust Layer of AI $MIRA #Mira

Reliability in AI matters because decisions driven by models are migrating from experimentation into production and into the physical world. Mistakes that were once contained to a lab or a chat window can now cascade into operational failures, legal exposure, or worse. The problem is not only hallucination; it is also opacity and the lack of auditable provenance for the claims that systems make.

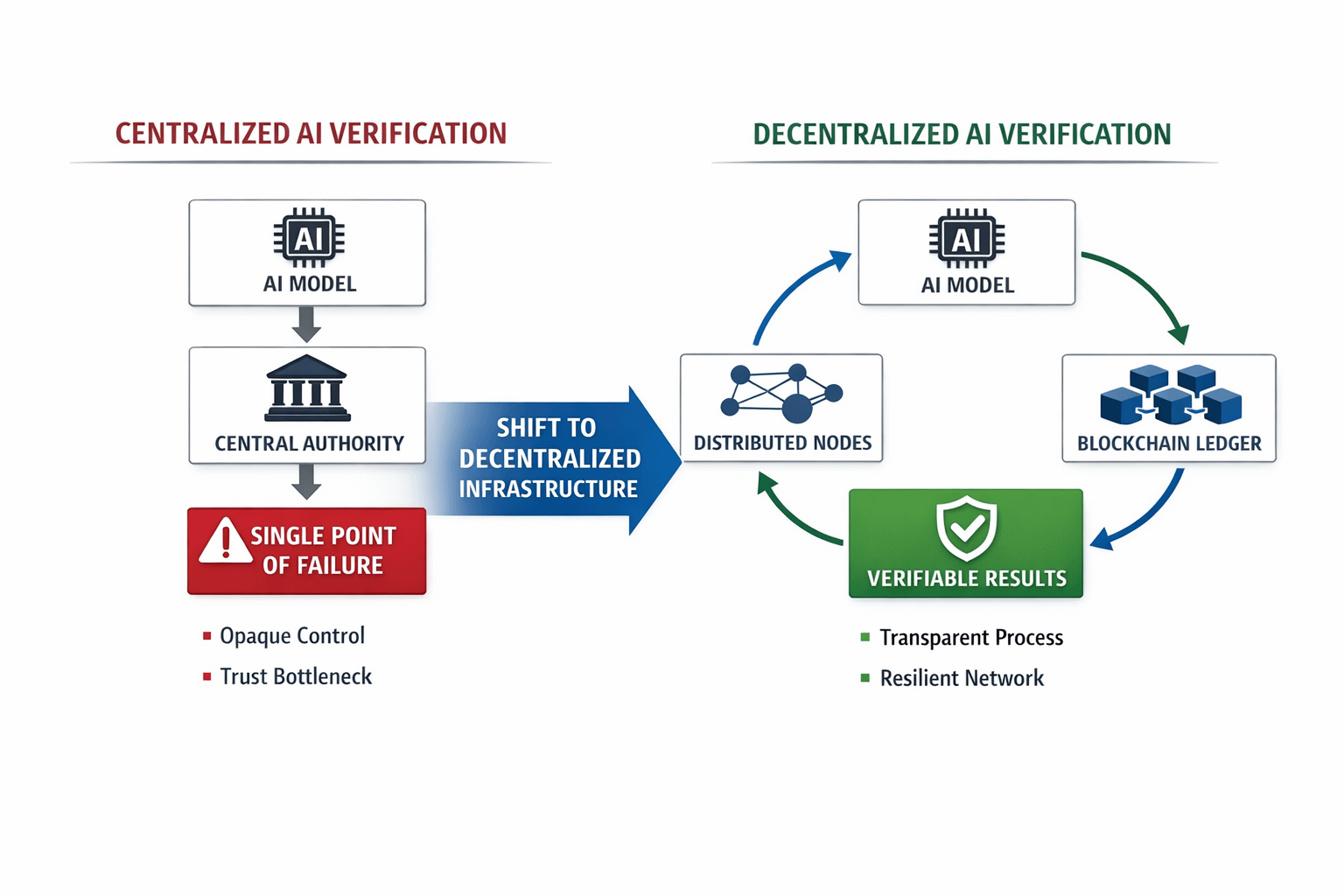

That concern leads naturally to decentralized infrastructure as a complementary axis to model improvements. Centralized verification can help, but it concentrates trust and creates a single point of failure. A distributed verification layer promises a different tradeoff, one that replaces opaque authority with a verifiable process.

Today, AI outputs face a trio of interlocking challenges: hallucinations, where models invent facts; bias that skews outcomes; and verification gaps, where no reliable audit trail exists. Users and integrators cannot easily determine which model outputs are safe to act on. This is especially acute for autonomous systems that interact with the real world, where false positives or false negatives carry tangible costs.

Unverified AI is risky for real-world autonomous systems because those systems must make decisions with measurable consequences. When an AI recommendation lacks provenance or an agreed verification step, the downstream system effectively gambles with incomplete information. This is not merely theoretical; it is an operational gap that slows adoption in industries that cannot tolerate uncertain outputs.

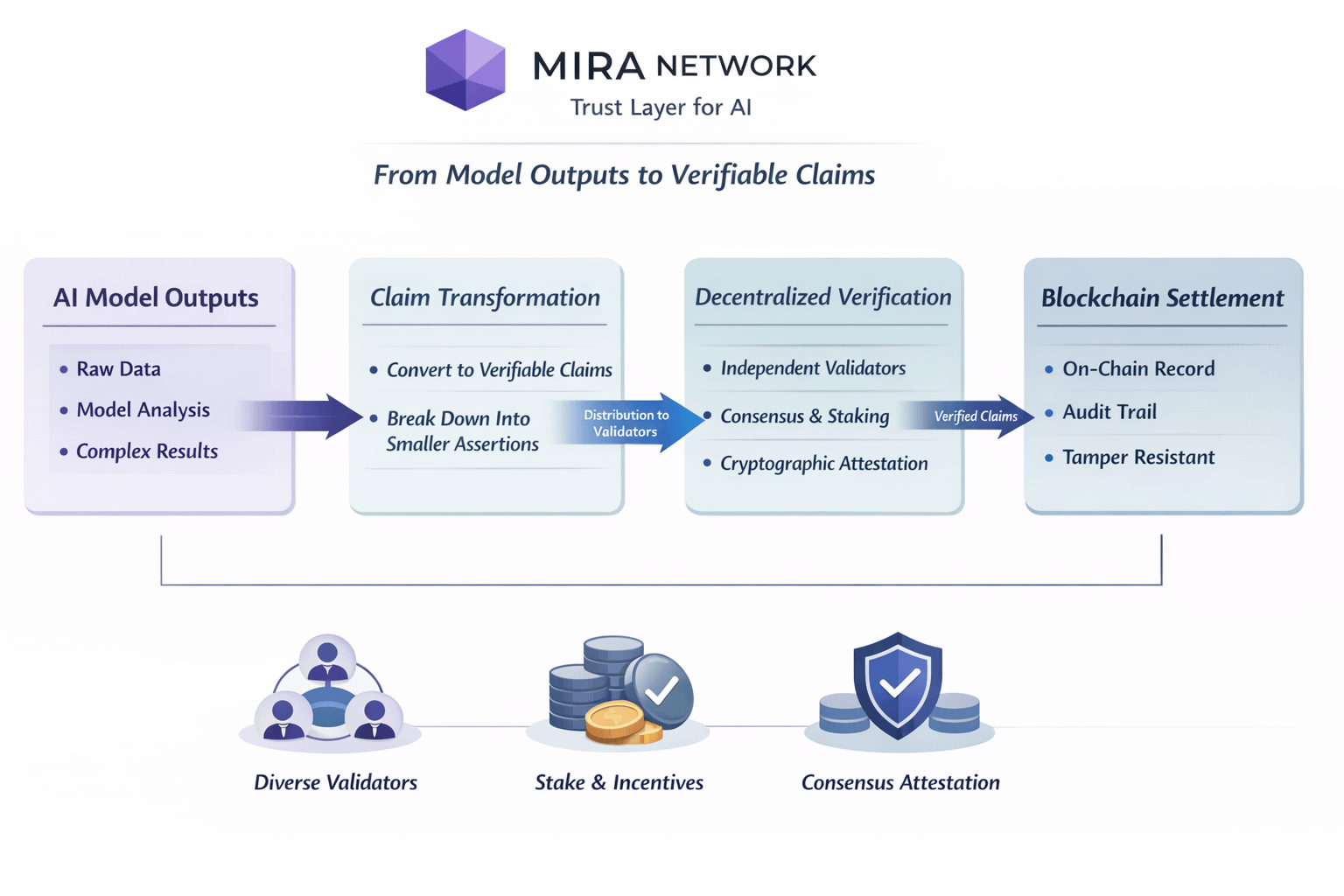

Enter Mira Network and the ecosystem behind it. Under the public handle @Mira - Trust Layer of AI, the project positions itself as a trust layer for AI. Mira aims to convert model outputs into cryptographically verifiable claims and to route those claims through a distributed verification process that is recorded on-chain. This design seeks to ensure that assertions can be audited and that their verification history is tamper-resistant.

Technically, Mira transforms complex outputs into smaller verifiable claims that are distributed to independent validators, whose consensus yields a cryptographic attestation. This approach combines model diversity, staking economics, and blockchain settlement so that validators have both the tools and the incentives to perform honest verification. The whitepaper outlines mechanisms for claim transformation, economic penalties for dishonest behavior, and consensus-driven attestations.
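To make that flow concrete for myself, I sketched it as a toy in Python. This is not Mira's protocol or API. Every name here (`Validator`, `split_into_claims`, `attest`, `slash_dissenters`) is hypothetical, the sentence-level split is a naive stand-in for claim transformation, the two-thirds stake-weighted quorum and the 10% penalty are assumptions of mine, and the SHA-256 digest only stands in for a real on-chain attestation.

```python
import hashlib
import json
from dataclasses import dataclass


@dataclass
class Validator:
    name: str
    stake: int  # hypothetical staked amount, in whole token units


def split_into_claims(output: str) -> list[str]:
    # Naive stand-in for claim transformation: one claim per sentence.
    return [s.strip() for s in output.split(".") if s.strip()]


def attest(claim: str, votes: dict[str, bool], validators: list[Validator]) -> dict:
    # Stake-weighted vote; a claim counts as verified when at least
    # two-thirds of total stake votes "true" (assumed threshold).
    total = sum(v.stake for v in validators)
    yes = sum(v.stake for v in validators if votes[v.name])
    verified = yes * 3 >= total * 2
    record = {"claim": claim, "votes": votes, "verified": verified}
    # Digest over the canonical record; a placeholder for a signed,
    # on-chain attestation, not a signature in itself.
    payload = json.dumps(record, sort_keys=True).encode("utf-8")
    return {**record, "digest": hashlib.sha256(payload).hexdigest()}


def slash_dissenters(validators: list[Validator], votes: dict[str, bool],
                     outcome: bool, penalty_percent: int = 10) -> None:
    # Simplified penalty: validators who voted against the final outcome
    # lose a fixed percentage of their stake.
    for v in validators:
        if votes[v.name] != outcome:
            v.stake -= v.stake * penalty_percent // 100


validators = [Validator("a", 100), Validator("b", 100), Validator("c", 100)]
claims = split_into_claims("Paris is in France. The Seine flows through it.")
votes = {"a": True, "b": True, "c": False}
attestation = attest(claims[0], votes, validators)
slash_dissenters(validators, votes, attestation["verified"])
```

Even in a toy, the design tension is visible: penalizing whoever disagrees with the majority rewards conformity as much as honesty, which is one reason validator diversity matters so much to the real design.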

A balanced critique is necessary. Mira faces nontrivial scalability questions around throughput and latency, and it must solve real-world incentive problems to attract diverse validators without centralizing power. There are also adoption barriers for legacy systems and regulatory concerns about how on-chain attestations map to legal responsibility. These obstacles are solvable but material, and they deserve candid attention.

Breaking outputs into verifiable claims improves reliability because it narrows the verification scope and creates repeatable checks that can be independently audited. This modularity also enables composability, so other services can rely on the same attestations rather than re-verifying the same content repeatedly.
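The composability point is easiest to see as a lookup keyed by the claim's content. The sketch below is my own illustration, not anything taken from Mira: `AttestationRegistry` and its methods are hypothetical, the in-memory dictionary stands in for whatever shared ledger would hold attestations, and the whitespace and case normalization is an assumption about what counts as the same claim.

```python
import hashlib
from typing import Callable


class AttestationRegistry:
    """Toy shared store: one verification per distinct claim."""

    def __init__(self) -> None:
        self._records: dict[str, bool] = {}
        self.verifications_run = 0

    @staticmethod
    def claim_id(claim: str) -> str:
        # Normalize case and whitespace so trivially different spellings
        # of a claim map to the same identifier (assumed policy).
        normalized = " ".join(claim.lower().split())
        return hashlib.sha256(normalized.encode("utf-8")).hexdigest()

    def get_or_verify(self, claim: str, verify: Callable[[str], bool]) -> bool:
        # Run the expensive verification only if no attestation exists yet;
        # later callers reuse the stored result.
        key = self.claim_id(claim)
        if key not in self._records:
            self.verifications_run += 1
            self._records[key] = bool(verify(claim))
        return self._records[key]


registry = AttestationRegistry()
first = registry.get_or_verify("Paris is in France", lambda c: True)
second = registry.get_or_verify("  paris is   in FRANCE ", lambda c: True)
```

Here the second service gets its answer without triggering another verification round, which is the saving the paragraph above describes.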

If networks like Mira can deliver on their promise, the Web3 stack may gain a trustworthy AI substrate where data provenance and verification become first-class primitives. That could shift how societies build safety-critical AI and how markets price verified intelligence.

Practical adoption will hinge on developer tooling, economic models for validators, and clear integration patterns for enterprises. The broader AI-plus-blockchain movement benefits when teams experiment with layered trust models and openly report results.

This shift feels analogous to the early internet, when trust layers such as TLS and code signing emerged to make open networks safe for commerce. Building a verification layer for AI is the next phase in that trajectory, and it will require technical rigor and pragmatic adoption strategies to realize its promise.

$MIRA figures into these incentive discussions because tokenized staking and penalties form the backbone of the verification economy, and they will influence both participation and governance. @Mira - Trust Layer of AI $MIRA #Mira