I’m watching the quieter parts of the AI and crypto world, where the real ideas usually grow before anyone notices them. I’m looking beyond the excitement, the announcements, the endless stream of new tokens and promises. One thing keeps returning no matter how advanced the technology becomes, and it lives in the gaps that appear when powerful systems collide with the real world. The more time I spend observing artificial intelligence, the more one uncomfortable truth sits in the background: AI can produce answers at incredible speed, but trusting those answers is still far more complicated than people like to admit.

There is a strange emotional moment that happens when you use modern AI for long enough. At first it feels impressive. The responses are fast, articulate, almost confident in a way that makes you forget you are talking to a machine. It feels helpful, almost reassuring, like having a knowledgeable assistant available at any moment.

But eventually something small breaks that illusion.

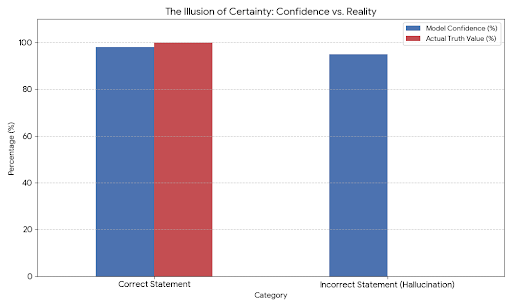

Maybe the system confidently states a statistic that turns out to be wrong. Maybe it references a study that does not exist. Maybe it connects facts in a way that sounds logical but quietly drifts away from reality. The answer still looks perfect on the surface, but the trust you felt a few seconds earlier suddenly feels fragile.

That moment stays with you.

Because the real problem is not that the AI made a mistake. Humans make mistakes all the time. The unsettling part is how confidently the mistake was delivered. The system had no hesitation, no pause, no signal that uncertainty existed.

And that creates a deeper question that keeps echoing in the background.

If AI becomes part of the systems that run our world, how do we know when it is telling the truth?

Right now most conversations about artificial intelligence revolve around capability. Bigger models. Faster responses. More complex reasoning. Every new version promises to be smarter than the last one.

But intelligence alone does not solve the real problem.

A system can be extremely intelligent and still be unreliable.

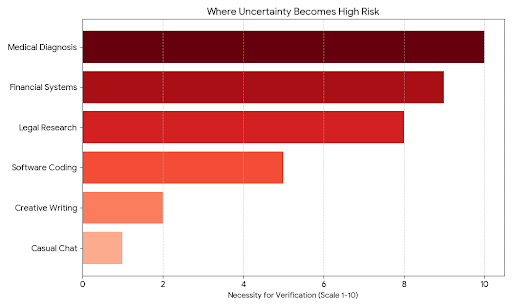

When people use AI casually, this uncertainty is easy to ignore. If someone asks a model to summarize an article or generate ideas for a presentation, a small mistake is rarely a disaster. Life moves on.

But imagine AI inside financial systems where numbers must be exact. Imagine it inside medical research where incorrect information could shape real decisions. Imagine autonomous agents negotiating contracts or executing transactions based on data they believe is accurate.

In those environments, uncertainty becomes something much heavier.

It becomes risk.

The more I observe the AI ecosystem, the more it feels like we are building powerful engines without installing the systems that check whether those engines are running safely. Everyone is racing to produce better answers, but very few people are focusing on verifying those answers before they spread through the rest of the digital world.

That is where something like Mira Network begins to feel different in a quiet but meaningful way.

Instead of trying to build another AI that sounds smarter than the others, the project seems to start from a different question entirely. It asks what happens after the answer is produced.

Not how fast the answer arrives.

Not how impressive it sounds.

But whether the answer can actually be trusted.

At first the idea feels almost simple. AI outputs are treated as claims rather than final truths. A long response from a model might contain dozens of smaller statements hidden inside it: facts, numbers, assumptions, explanations. Normally those statements arrive together as a single block of text that people read without questioning each individual part.

But Mira approaches it differently.

The system breaks those responses into smaller claims that can be examined one by one. Each statement becomes something that can be checked rather than blindly accepted.
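A rough illustration of the decomposition step, written in Python under a deliberately simple assumption: every sentence counts as one claim. The names `Claim` and `split_into_claims` are hypothetical, and this is not Mira's actual method; a production system would more likely use a model to extract and rewrite claims.

```python
import re
from dataclasses import dataclass


@dataclass
class Claim:
    index: int  # position of the claim in the original response
    text: str   # the individual statement to be checked


def split_into_claims(response: str) -> list[Claim]:
    """Naively split a response into sentence-level claims (hypothetical stand-in)."""
    # Split after '.', '!' or '?' when followed by whitespace; drop empty fragments.
    sentences = re.split(r"(?<=[.!?])\s+", response.strip())
    return [Claim(i, s) for i, s in enumerate(sentences) if s]


response = "The Eiffel Tower is in Paris. It opened in 1889. It is 500 meters tall."
claims = split_into_claims(response)
for claim in claims:
    print(claim.index, claim.text)
```

The last claim is deliberately false (the tower is nowhere near 500 meters tall), which is exactly the kind of statement a verification step should catch. Notice also that the second and third claims depend on "It"; a real decomposer would need to resolve that reference so each claim can be checked on its own.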

And this is where the process becomes interesting.

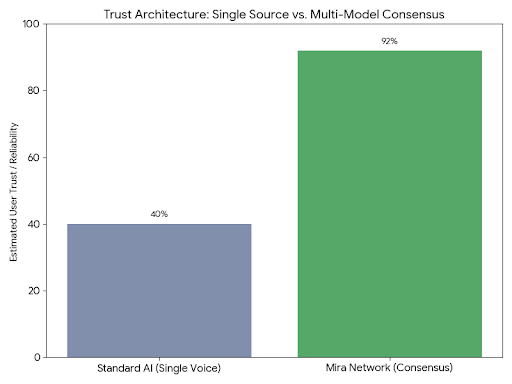

Instead of relying on one model to verify its own output, the claims are distributed across a network of independent AI systems. Each model evaluates the statement on its own. If several independent systems reach the same conclusion, confidence in that claim grows. If their results conflict, the network flags the claim as uncertain instead of pretending certainty exists.
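The agreement step can be pictured as a simple vote. This Python sketch assumes each verifier returns a yes/no verdict and that a claim is accepted or rejected only when at least two thirds of verifiers agree. The threshold, the `verify_claim` function, and the toy verifiers standing in for independent models are all illustrative assumptions, not Mira's published parameters or API.

```python
from collections import Counter
from fractions import Fraction
from typing import Callable

# Hypothetical interface: a verifier maps a claim to True (supported) or False (not supported).
Verifier = Callable[[str], bool]


def verify_claim(claim: str, verifiers: list[Verifier],
                 threshold: Fraction = Fraction(2, 3)) -> str:
    """Return 'verified', 'rejected', or 'uncertain' from independent verdicts."""
    total = len(verifiers)
    if total == 0:
        return "uncertain"
    votes = Counter(verifier(claim) for verifier in verifiers)
    # Fractions keep the threshold comparison exact (no floating-point rounding).
    if Fraction(votes[True], total) >= threshold:
        return "verified"
    if Fraction(votes[False], total) >= threshold:
        return "rejected"
    # Neither side reached the threshold: surface the disagreement instead of guessing.
    return "uncertain"


# Toy stand-ins for independent models (hypothetical).
KNOWN_FACTS = {"The Eiffel Tower is in Paris.", "It opened in 1889."}


def model_a(claim: str) -> bool:
    return claim in KNOWN_FACTS


def model_b(claim: str) -> bool:
    return claim in KNOWN_FACTS


def model_c(claim: str) -> bool:
    return True  # an overly agreeable model that accepts everything


print(verify_claim("It opened in 1889.", [model_a, model_b, model_c]))      # verified
print(verify_claim("It is 500 meters tall.", [model_a, model_b, model_c]))  # rejected
print(verify_claim("It is 500 meters tall.", [model_a, model_c]))           # uncertain
```

The third call shows the behavior described above: when verifiers split evenly, the result is reported as uncertain rather than forced into a confident answer. Agreement among models is evidence, not proof, since models trained on similar data can share the same mistakes.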

There is something emotionally reassuring about that structure.

It feels closer to how humans naturally build trust.

When we hear something important, we rarely rely on a single source. We ask another person. We search for confirmation. We compare perspectives. Trust grows slowly as multiple signals begin to align.

Most AI systems do not work that way. They act like a single voice delivering information without any visible process behind it.

Mira introduces that missing process.

What makes the approach even more interesting is how it uses decentralized infrastructure to support the verification. Instead of a central authority deciding what is correct, the network reaches consensus through many independent participants. Verification becomes a shared responsibility rather than a centralized decision.

Economic incentives reinforce the system. Participants who verify information accurately are rewarded, while unreliable behavior becomes costly. Over time this creates an environment where truth is not just expected but economically encouraged.
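One way to picture the incentive layer is a stake-and-slash rule, a common pattern in blockchain systems. The Python sketch below assumes each participant locks up a stake, that the network has already settled the claim's outcome, and that participants whose verdict matched it earn a fixed reward while those who did not lose a fraction of their stake. `Participant`, `settle`, and the `reward` and `slash_rate` values are hypothetical; Mira's actual reward formula is not described here.

```python
from dataclasses import dataclass


@dataclass
class Participant:
    name: str
    stake: float  # tokens locked as collateral (hypothetical unit)


def settle(participants: list[Participant], votes: dict[str, bool], outcome: bool,
           reward: float = 1.0, slash_rate: float = 0.1) -> None:
    """Reward verdicts that match the settled outcome; slash those that do not."""
    for p in participants:
        if p.name not in votes:
            continue  # this participant did not vote on the claim
        if votes[p.name] == outcome:
            p.stake += reward
        else:
            p.stake -= p.stake * slash_rate  # lose a fixed fraction of the stake


alice = Participant("alice", 100.0)
bob = Participant("bob", 100.0)
carol = Participant("carol", 100.0)

# The claim was settled as false; carol voted that it was true.
settle([alice, bob, carol], {"alice": False, "bob": False, "carol": True}, outcome=False)
print(alice.stake, bob.stake, carol.stake)
```

After settlement alice and bob each gain the reward and carol loses 10% of her stake, so careless verification becomes expensive over time. A real design would also have to stop participants from simply copying the majority, since this naive rule rewards agreement rather than independent checking.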

It is a subtle shift, but an important one.

For years blockchain technology has been used to verify transactions. The network ensures that money moves correctly from one place to another without needing a trusted intermediary.

Mira seems to be exploring a similar idea for information itself.

Instead of verifying money, the network verifies knowledge.

And in a world where AI is producing information faster than humans can read it, that idea starts to feel incredibly relevant.

What fascinates me most is how quietly this type of infrastructure develops. Projects focused on attention often dominate headlines for a few months and then slowly fade. But the systems that truly matter tend to grow in the background, slowly becoming part of the foundation that everything else depends on.

Verification feels like that kind of foundation.

The internet changed how quickly information could spread. Social platforms accelerated that speed even further. Artificial intelligence has now reached the point where information can be generated instantly at enormous scale.

But the systems responsible for checking that information have barely evolved.

Without verification, intelligence becomes fragile.

Without trust, even the most advanced technology begins to feel uncertain.

The longer I watch the direction AI is moving, the more I feel that the real breakthrough will not come from machines that speak more fluently or reason more deeply.

It will come from the quiet systems that stand behind those machines, patiently asking a simple but powerful question every time an answer appears.

Is this actually true?