I was at my desk just after 6 a.m. The radiator kept clicking while a CSV file threw an ugly mismatch across my screen. That kind of error grabs me because it usually means the data was touched somewhere I cannot see and cannot audit with confidence. How much trust can I really give a record with no memory?

That is why Fabric Protocol caught me. I read its materials less as a robotics story and more as an argument about evidence. In its whitepaper, Fabric describes a public ledger system built to coordinate robots, AI workloads, ownership, and oversight, while tying rewards to work that can be checked rather than merely claimed. I keep returning to that point because the hard part in modern systems is rarely storage. The harder question is whether I can tell what happened, who did it, and whether anyone can challenge the record later.

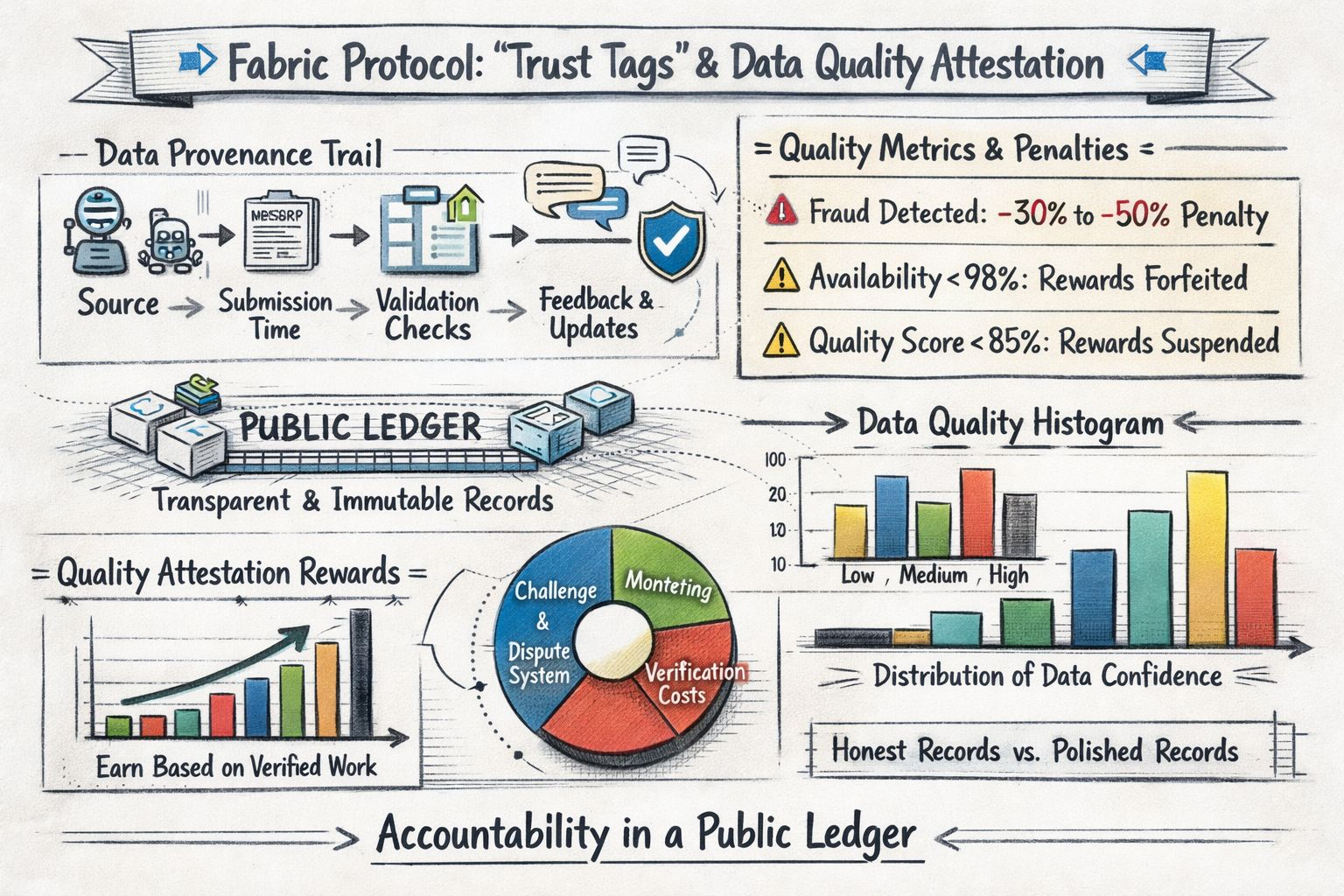

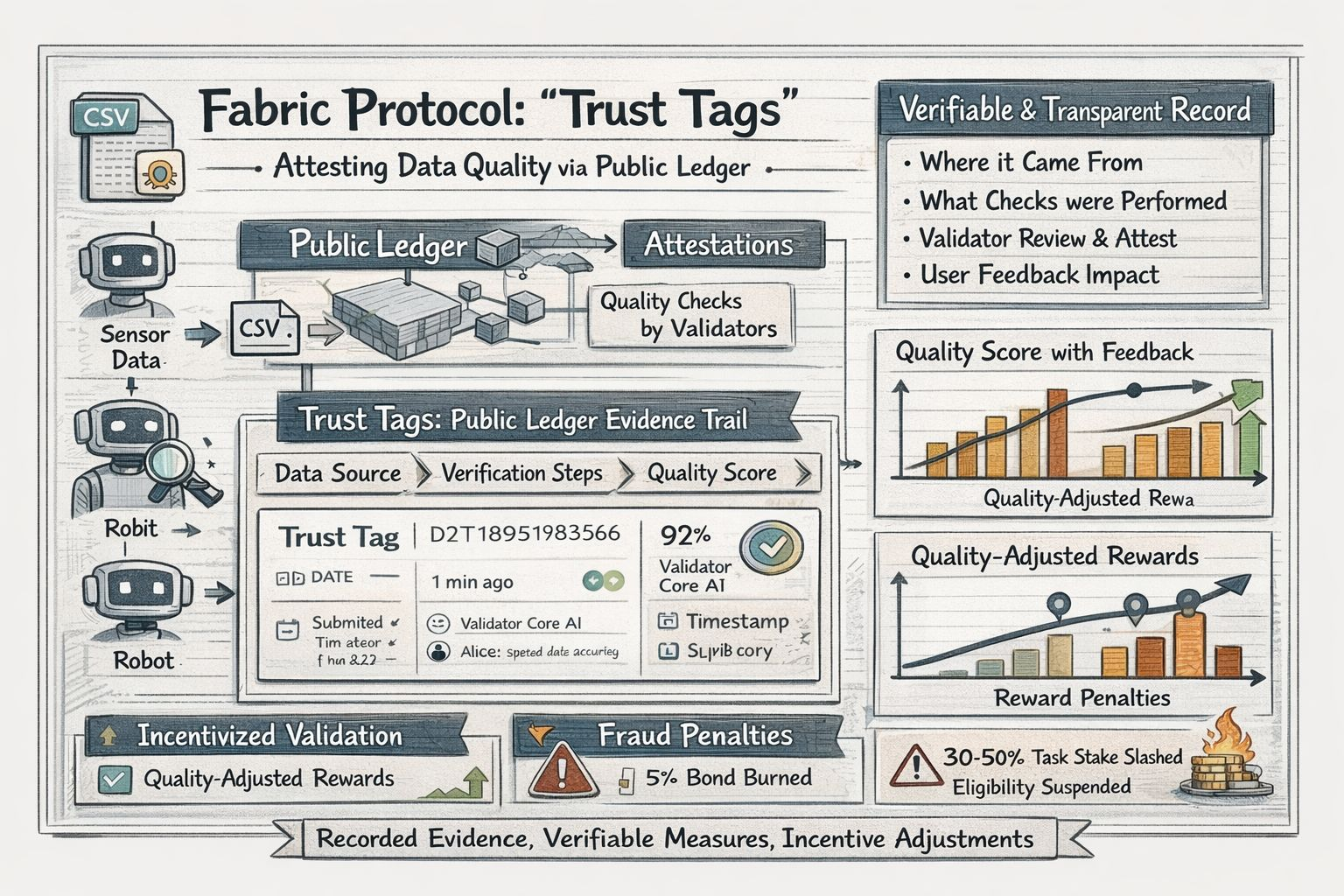

When I think about Fabric’s idea, I think in terms of trust tags. I do not mean a glossy badge that declares data clean forever. I mean a visible trail attached to a record or task outcome that shows where it came from, when it entered the system, what checks were performed, who attested to it, and whether later feedback changed its standing. Fabric’s own language moves in that direction. The whitepaper describes standardized data quality units, validation work through quality attestations, and reward structures that adjust for quality through validator review and user feedback. That strikes me as more useful than broad talk about trustworthy AI because it treats quality as something I can inspect instead of something I am asked to accept on faith.
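To make the idea concrete for myself, here is a minimal Python sketch of what a trust tag might carry. Everything in it is my own assumption: the names `TrustTag`, `Attestation`, and `tag_record`, the three standing labels, and the rule that net-negative feedback marks a record as disputed. None of it comes from Fabric's whitepaper, which does not publish a schema like this.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
import hashlib


@dataclass(frozen=True)
class Attestation:
    """One validator's statement about one check (hypothetical shape)."""
    validator: str  # who attested
    check: str      # which check was performed
    passed: bool    # result of that check


@dataclass
class TrustTag:
    """Visible trail attached to a record; all field names are illustrative."""
    source: str             # where the record came from
    received_at: datetime   # when it entered the system
    content_hash: str       # fingerprint of the exact bytes that were tagged
    attestations: list[Attestation] = field(default_factory=list)
    feedback: list[int] = field(default_factory=list)  # +1 or -1 per user report

    def attest(self, validator: str, check: str, passed: bool) -> None:
        self.attestations.append(Attestation(validator, check, passed))

    def standing(self) -> str:
        """Assumed rule: any failed check or net-negative feedback disputes the record."""
        if not self.attestations:
            return "unverified"
        if any(not a.passed for a in self.attestations):
            return "disputed"
        if sum(self.feedback) < 0:
            return "disputed"
        return "attested"


def tag_record(payload: bytes, source: str) -> TrustTag:
    """Create a fresh tag for a payload, stamped with the current UTC time."""
    return TrustTag(
        source=source,
        received_at=datetime.now(timezone.utc),
        content_hash=hashlib.sha256(payload).hexdigest(),
    )


# Usage: a record starts unverified, gains standing, then loses it on bad feedback.
tag = tag_record(b"id,value\n1,42\n", source="sensor-7")
tag.attest("validator-1", "schema", passed=True)
tag.feedback.append(-1)
```

The point of the sketch is that the standing is derived from the trail rather than stored as a badge, so later feedback can change it without erasing what came before.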

I also understand why this topic is surfacing now. Fabric’s whitepaper is labeled Version 1.0 and dated December 2025. The foundation then opened its ROBO airdrop eligibility and registration portal on February 20, 2026. Binance followed with a listing notice for ROBO under its Seed Tag category, which marks newer and higher-risk listings. In the same whitepaper, Fabric lays out a 2026 roadmap that starts with robot identity, task settlement, and structured data collection in Q1 before moving in Q2 toward incentives tied to verified task execution and data submission. That sequence matters to me because it suggests an early network trying to turn provenance into an operating rule instead of leaving it as a talking point.

The part that feels like real progress to me is the rulebook. Fabric does not stop at saying quality matters. Its whitepaper lays out explicit consequences for fraud, downtime, and weak performance. Proven fraud can trigger slashing of 30 to 50 percent of the earmarked task stake. If robot availability falls below 98 percent over a 30-day epoch, the operator loses emission rewards for that period and 5 percent of the bond is burned. If an aggregated quality score falls below 85 percent, the robot loses reward eligibility until the underlying problems are addressed. I like the plainness of that design, even while I stay cautious about execution, because public systems improve when penalties are visible before failure rather than improvised afterward.
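The thresholds above are simple enough to write down as code, which is part of why I find them persuasive. The sketch below is only my reading of the numbers quoted from the whitepaper. The names `EpochReport`, `Outcome`, and `apply_rules` are hypothetical, and two things are my assumptions rather than Fabric's: that the three rules are evaluated independently and can stack, and that the exact slash rate inside the 30 to 50 percent range is supplied by whoever resolves the fraud case.

```python
from dataclasses import dataclass

# Thresholds as quoted from the whitepaper.
FRAUD_SLASH_RANGE = (0.30, 0.50)  # share of the earmarked task stake
MIN_AVAILABILITY = 0.98           # measured over a 30-day epoch
BOND_BURN_RATE = 0.05             # share of the bond burned on low availability
MIN_QUALITY = 0.85                # aggregated quality score floor


@dataclass
class EpochReport:
    """One operator's results for one epoch (hypothetical shape)."""
    availability: float   # 0.0 to 1.0
    quality_score: float  # 0.0 to 1.0
    fraud_proven: bool
    task_stake: float
    bond: float


@dataclass
class Outcome:
    stake_slashed: float
    bond_burned: float
    emissions_forfeited: bool
    reward_eligible: bool


def apply_rules(report: EpochReport, fraud_slash_rate: float = 0.30) -> Outcome:
    """Apply the three published penalties; stacking them is an assumption."""
    low, high = FRAUD_SLASH_RANGE
    if not low <= fraud_slash_rate <= high:
        raise ValueError("slash rate must stay within the published 30-50% range")

    low_availability = report.availability < MIN_AVAILABILITY
    return Outcome(
        stake_slashed=report.task_stake * fraud_slash_rate if report.fraud_proven else 0.0,
        bond_burned=report.bond * BOND_BURN_RATE if low_availability else 0.0,
        emissions_forfeited=low_availability,
        reward_eligible=report.quality_score >= MIN_QUALITY,
    )


# Usage: an honest operator with 97% uptime and a healthy quality score.
outcome = apply_rules(
    EpochReport(availability=0.97, quality_score=0.90,
                fraud_proven=False, task_stake=1000.0, bond=2000.0)
)
```

In this example the operator forfeits the epoch's emissions and has 5 percent of the bond burned, but stays eligible for rewards because the quality score is above the floor. What the code cannot capture is the hard part: who measures availability, and who decides that fraud is proven.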

There is another angle here that stays with me. Fabric seems to treat data quality as labor rather than background noise. In many AI systems, I watch data collection disappear into a black box and return as confidence with very little explanation. Here, at least on paper, data submission, validation work, compute, and task completion are all framed as measurable contributions inside the network. That also fits with a wider shift outside crypto. NIST’s recent guidance on AI and cybersecurity says that ensuring data quality throughout the lifecycle can be especially important when AI systems are making automated decisions or contributing data to other processes. I read Fabric as one attempt to make that concern economically visible instead of leaving it buried in governance language or compliance documents.

I still have reservations. A public ledger can preserve an evidence trail, but it cannot by itself prove that the original sensor was honest or that the people doing the attestation were careful. Bad inputs can be recorded just as permanently as good ones. Fabric more or less admits this in its challenge-based design: the whitepaper says universal verification of all tasks would be prohibitively expensive, and the protocol instead relies on incentives, monitoring, and dispute resolution. That is sensible to me, yet it is also where the real social difficulty begins. I worry less about whether a ledger can remember and more about whether a community can keep judging well when pressure and money start to build.

That is why I find the idea of trust tags worth following. I do not need Fabric to prove that data can become perfect. I need it to show that data can become more accountable in public and carry enough context that I can tell the difference between a clean record and a polished one. For me, that is the real test now. It is not whether the ledger is permanent. It is whether the quality signals attached to it remain honest when money, machines, and reputation all start pulling at once.