I have spent a lot of time thinking about what people mean when they call artificial intelligence a "black box." The phrase is used so often that it almost feels like a permanent feature of the technology. Complex models produce outputs, but the path from input to decision can be difficult to explain clearly. Engineers may understand parts of the system, yet the reasoning behind specific outcomes can remain difficult to reconstruct. As AI systems move into finance, logistics, and automated decision-making, that opacity begins to matter more. That is what led me to look more closely at Mira Network.

What caught my attention about Mira is that it does not try to eliminate the complexity inside AI models themselves. Instead, it attempts to build infrastructure around the activity of those systems. Rather than asking whether a model is fully explainable, the network focuses on whether its actions can be verified and recorded in a way that others can trust. From my perspective, that shift changes how transparency is approached.

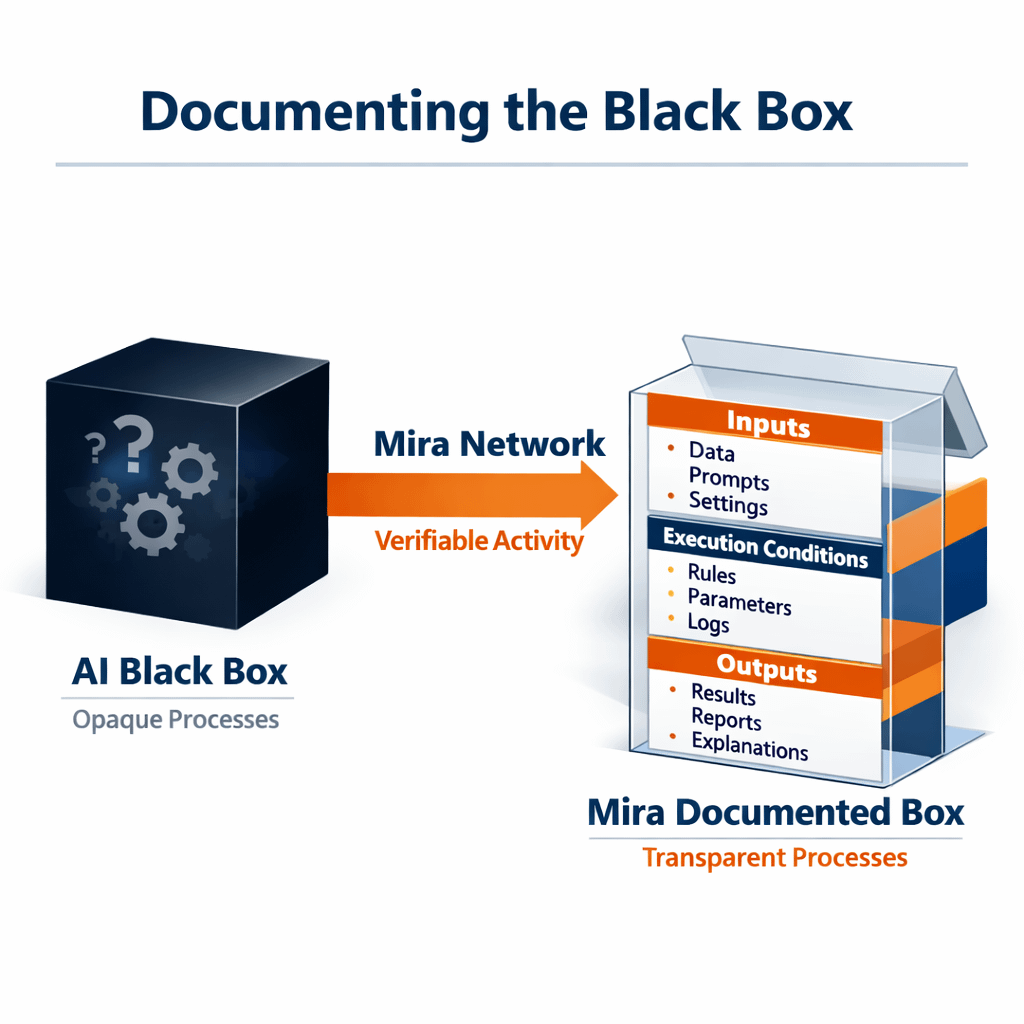

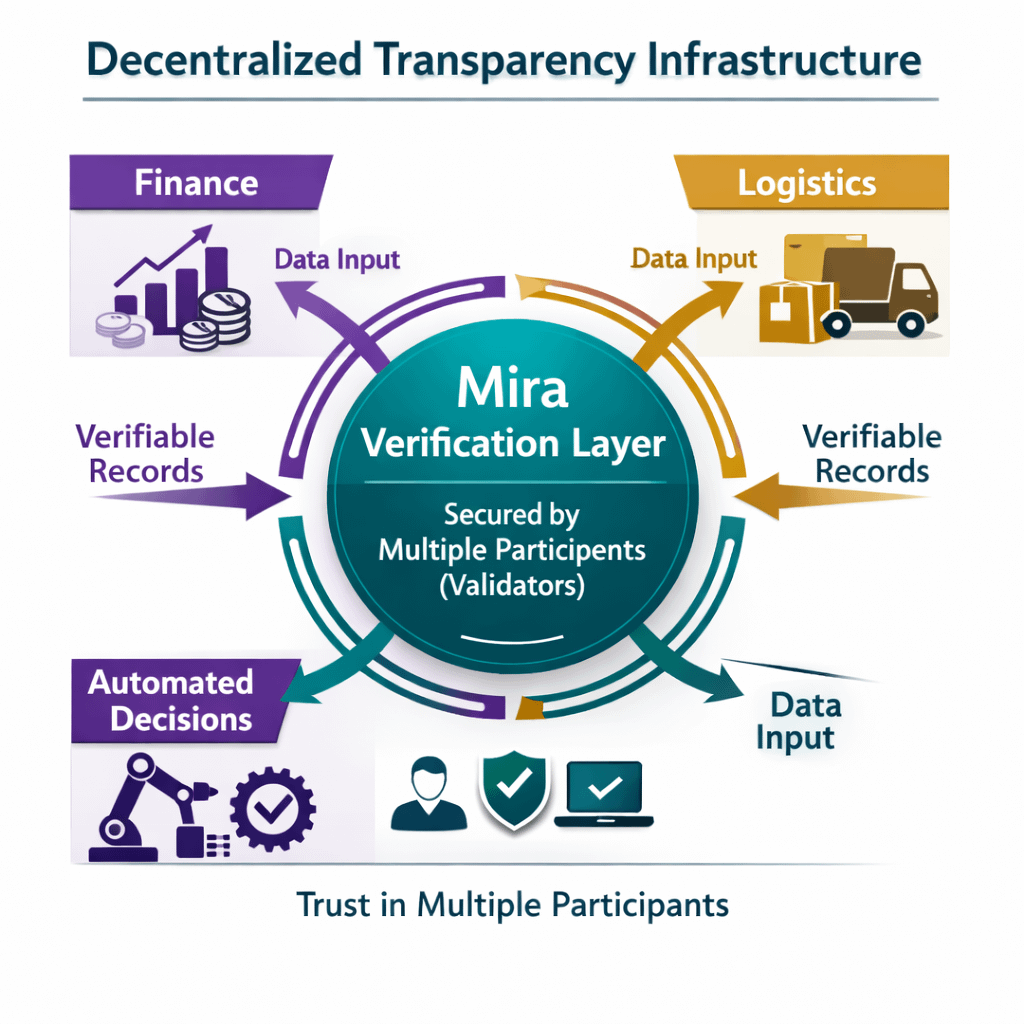

Most AI systems today operate within centralized environments. A company trains the model, deploys it, records its behavior, and investigates its outputs when something unusual happens. For many applications, that approach works well enough. But it also means that the detailed records of what an AI system did remain under the control of the same organization responsible for running it.

I find myself wondering how sustainable that model will be as AI systems become more autonomous. Automated trading agents already execute financial decisions. AI systems manage complex logistics flows. Algorithms filter and prioritize information across global platforms. As these systems interact with other institutions and automated processes, the demand for verifiable records of their behavior becomes more noticeable.

This is where Mira’s approach begins to make sense to me. Instead of relying entirely on internal logs, the network attempts to anchor records of AI activity in a decentralized verification layer. Inputs, execution conditions, and outputs can be recorded in a shared system that multiple participants can inspect. The model still performs its task, but the record of how that task was performed is no longer confined to a single operator. I think of this less as opening the black box and more as documenting what the box actually did.

That distinction matters because AI models may never become completely transparent in the traditional sense. Deep learning systems are complex by design. Expecting them to produce simple explanations for every decision may not always be realistic. But it may still be possible to verify the conditions under which their decisions were made.

Still, I approach the concept with some caution. Verification networks introduce their own forms of complexity. Validators must operate reliably. Data inputs must remain consistent. Governance structures must maintain credibility across participants. If any of those elements break down, the transparency promised by the system could become difficult to maintain.

Another issue I think about is integration. Developers already use monitoring tools and logging systems to track AI behavior. For Mira’s verification infrastructure to become meaningful, it needs to integrate with those existing workflows rather than adding unnecessary friction.

At the same time, the broader trajectory of AI development makes the transparency question increasingly difficult to ignore. As AI agents become more autonomous and begin interacting with other automated systems, the consequences of their decisions expand. Financial systems, infrastructure networks, and digital services all depend on reliable information about what those systems actually did.
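As a rough illustration of what "documenting what the box actually did" could look like, here is a minimal sketch of a tamper-evident activity log. It is not Mira's actual protocol or API. The `ActivityRecord` fields, the function names, and the example agent are all hypothetical, and a plain hash chain stands in for whatever verification mechanism a real network would use. Each record commits to the model's inputs, execution conditions, and output, plus the hash of the previous record, so any participant who holds the latest hash can detect later edits.

```python
import hashlib
import json
from dataclasses import asdict, dataclass, replace

GENESIS = "0" * 64  # placeholder "previous hash" for the first record


def digest(payload: dict) -> str:
    """SHA-256 over a canonical JSON encoding of the payload."""
    canonical = json.dumps(payload, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


@dataclass(frozen=True)
class ActivityRecord:
    # Hypothetical schema: what the model saw, under which conditions,
    # what it produced, and a link to the record before it.
    model_id: str
    inputs: dict
    conditions: dict
    output: dict
    prev_hash: str

    def record_hash(self) -> str:
        return digest(asdict(self))


def append_record(log, model_id, inputs, conditions, output):
    """Return a new log with one more record chained to the last one."""
    prev_hash = log[-1].record_hash() if log else GENESIS
    return log + [ActivityRecord(model_id, inputs, conditions, output, prev_hash)]


def verify_log(log, expected_head: str) -> bool:
    """Check every link in the chain and compare against a shared head hash."""
    prev = GENESIS
    for record in log:
        if record.prev_hash != prev:
            return False
        prev = record.record_hash()
    return prev == expected_head


log = append_record([], "trading-agent-1", {"ticker": "XYZ"},
                    {"model_version": "1.0"}, {"action": "buy"})
log = append_record(log, "trading-agent-1", {"ticker": "XYZ"},
                    {"model_version": "1.0"}, {"action": "hold"})
head = log[-1].record_hash()  # the value other participants would hold

# An operator quietly rewriting history changes the first record's hash,
# which breaks the link stored in the second record.
tampered = [replace(log[0], output={"action": "sell"})] + log[1:]

print(verify_log(log, head))       # True
print(verify_log(tampered, head))  # False
```

The sketch only shows that a record was not altered after the fact. It says nothing about whether the recorded inputs were accurate in the first place, which is where the validator and governance concerns above come in.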

In those environments, internal logs may not always be sufficient. That is where decentralized verification layers like the one Mira is attempting to build could become relevant. By providing a shared record of AI behavior, the network offers a way for multiple participants to examine how automated systems operate without relying entirely on the assurances of a single organization.

Whether this approach ultimately reshapes how AI transparency is handled remains uncertain. Infrastructure often evolves slowly, and many technologies that appear promising in theory take years to prove their value in practice.

For now, I see Mira Network less as the definitive end of the black box and more as an experiment in how transparency around AI activity might evolve. Instead of demanding perfect explainability from complex models, it attempts to create reliable records of their actions. If AI systems continue expanding their role in global infrastructure, that kind of record keeping may eventually become just as important as the intelligence inside the models themselves.