I have noticed that most bets on artificial intelligence tend to follow a familiar pattern. Investors, developers, and companies usually focus on building larger models, training more sophisticated systems, or scaling computational power. The assumption seems straightforward: the more capable the intelligence, the more valuable the system becomes. But the longer I observe how AI systems actually interact with financial platforms, institutions, and automated networks, the more I start to think the real opportunity may lie somewhere else.

That thought led me to look more closely at Mira Network. What caught my attention about Mira is that it does not attempt to compete in the race for intelligence itself. It doesn’t promise the largest model or the most advanced reasoning system. Instead, it focuses on something less visible but potentially more structural: verifying what AI systems actually do. At first, that might sound like a smaller problem, but the implications start to grow once AI systems move beyond simple applications.

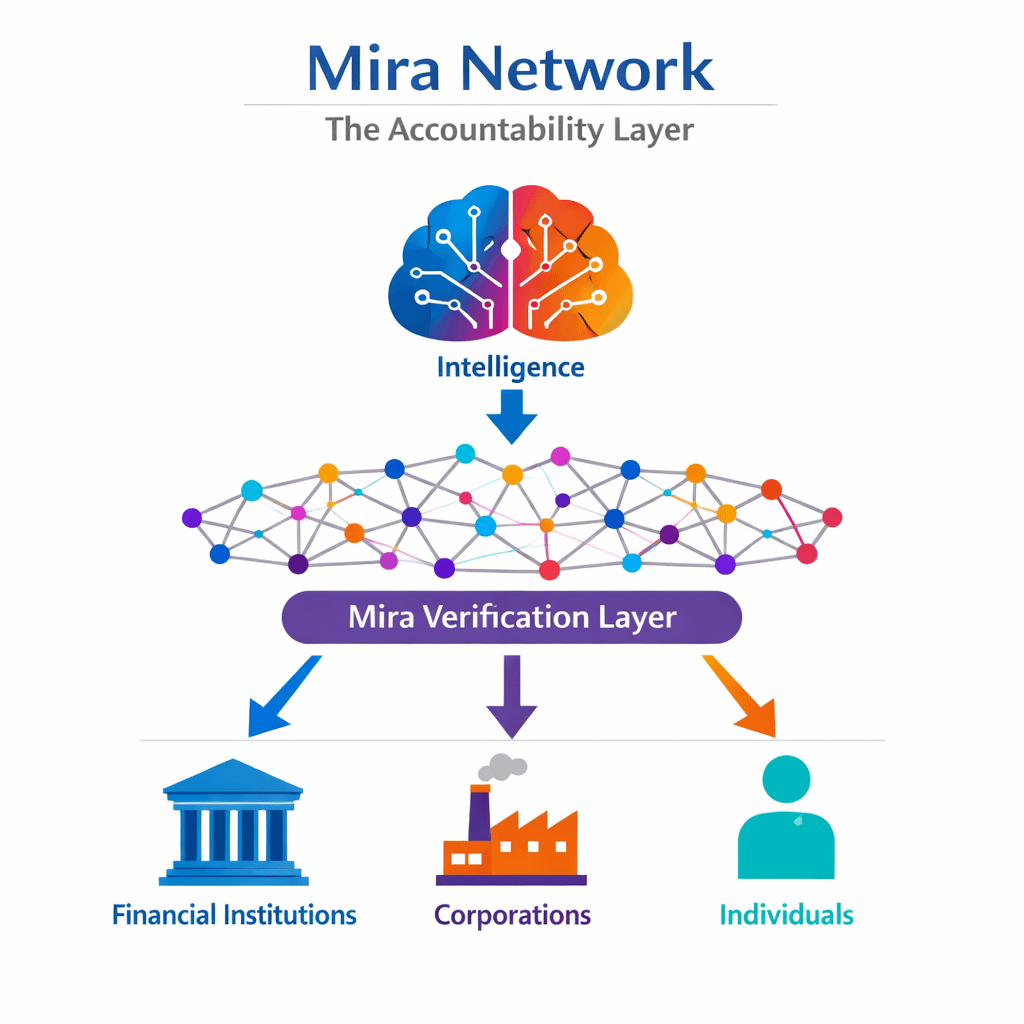

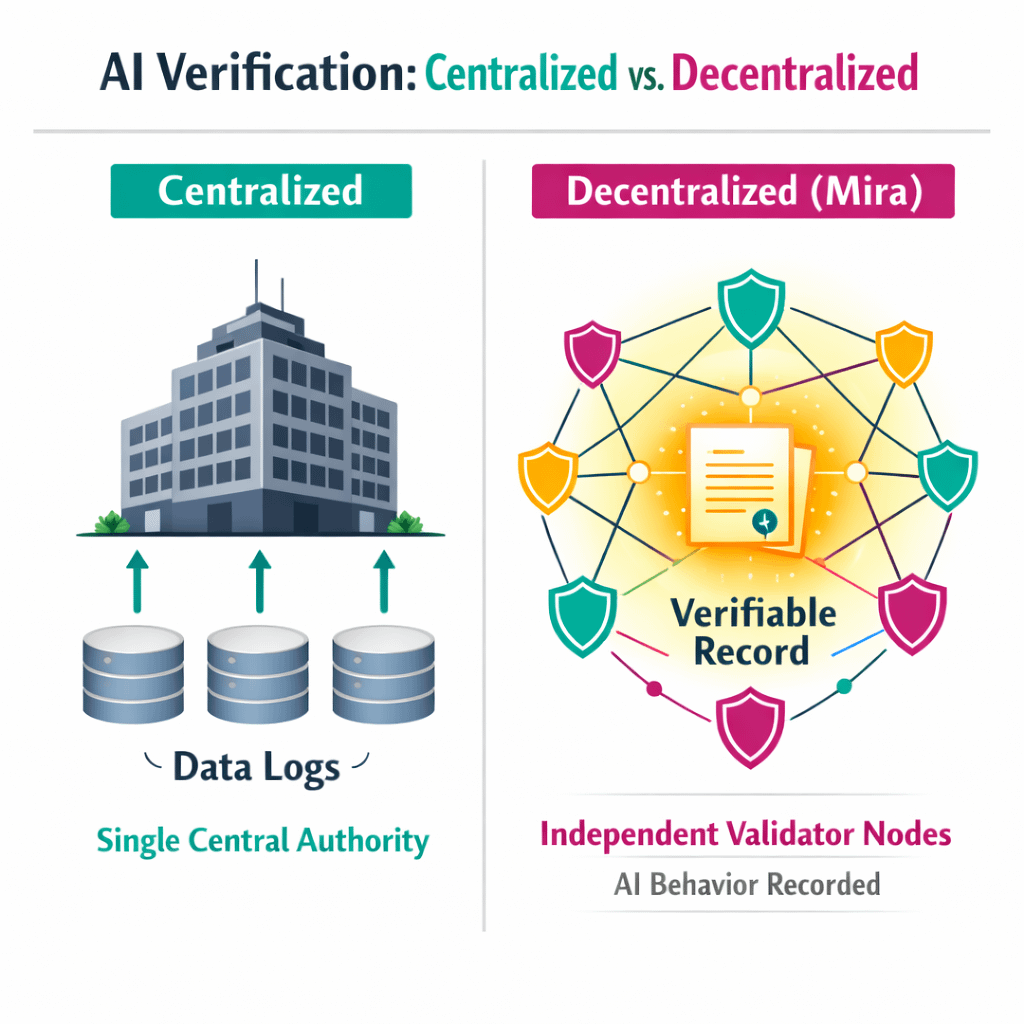

Today, many AI systems already operate in environments where their outputs trigger real consequences. Trading algorithms execute financial transactions, automated agents manage logistics decisions, and recommendation systems shape the flow of information across digital platforms. In these contexts, the question is not just whether the AI is capable; it is whether its actions can be verified and trusted by other systems.

Most of the time, that verification still happens through centralized infrastructure. The organization running the AI records the system’s activity and provides explanations when something goes wrong. In many situations, that arrangement works well enough. But it also means that the evidence of what an AI system did remains under the control of the same entity responsible for the system itself.

I keep thinking about how that dynamic might change as AI becomes more autonomous. If different AI agents begin interacting across financial systems, digital services, and automated infrastructure, the need for shared verification may become more important. Systems that rely solely on internal logs could start to look insufficient when multiple organizations depend on the same automated decisions.

That’s where Mira’s infrastructure begins to make sense. Instead of focusing on intelligence, the network attempts to create a decentralized layer that records and verifies AI behavior. Inputs, execution parameters, and outputs can be anchored in a shared system that multiple participants can observe. In practical terms, that means the record of what an AI system did does not belong exclusively to the organization operating it.

From my perspective, this is what makes Mira an asymmetric bet.
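To make the idea of anchoring inputs, execution parameters, and outputs a little more concrete, here is a minimal sketch in Python. It is not Mira's actual protocol or API, which I have not seen documented at this level. The function names (`record_digest`, `anchor_chain`, `verify_chain`) and the record fields are my own assumptions, and the "shared system" is reduced to a plain list of SHA-256 digests that any participant could hold a copy of.

```python
import hashlib
import json


def record_digest(record, prev_digest=""):
    """Hash one AI action together with the digest of the previous record.

    `record` is a hypothetical dict with "inputs", "params", and "outputs".
    Chaining to `prev_digest` means changing any earlier record changes
    every digest that follows it.
    """
    payload = json.dumps(
        {
            "inputs": record["inputs"],
            "params": record["params"],
            "outputs": record["outputs"],
            "prev": prev_digest,
        },
        sort_keys=True,          # stable key order so equal records hash equally
        separators=(",", ":"),   # no whitespace differences between encoders
    )
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def anchor_chain(records):
    """Return the list of chained digests an operator would publish."""
    digests = []
    prev = ""
    for record in records:
        prev = record_digest(record, prev)
        digests.append(prev)
    return digests


def verify_chain(records, published_digests):
    """Recompute the chain and compare it with the published digests."""
    if len(records) != len(published_digests):
        return False
    return anchor_chain(records) == published_digests


if __name__ == "__main__":
    # Illustrative data only; the fields and values are made up.
    log = [
        {"inputs": "price feed at t=1", "params": {"temperature": 0.0}, "outputs": "buy"},
        {"inputs": "price feed at t=2", "params": {"temperature": 0.0}, "outputs": "hold"},
    ]
    published = anchor_chain(log)
    print(verify_chain(log, published))
```

The point of the sketch is the separation of roles: the operator keeps the full records, but once the digests are held by other participants, the operator can no longer quietly rewrite what the system did without the mismatch being detectable. A real network would also need validators, signatures, and consensus, none of which this toy version attempts.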

Most of the AI ecosystem is focused on making systems smarter. Mira is focused on making those systems accountable. If AI capabilities continue expanding rapidly, the infrastructure needed to verify their actions could become just as important as the intelligence itself.

Still, I approach the idea with a fair amount of caution. Infrastructure projects often look compelling in theory but face significant challenges once they encounter real operational environments. Verification networks require reliable validators, incentive structures, and governance systems that function under pressure. If those elements fail, the credibility of the entire network can be undermined.

Another factor I think about is integration. Developers already rely on numerous monitoring and logging tools to track AI behavior. For Mira’s infrastructure to become widely adopted, it needs to fit naturally into those workflows rather than introducing unnecessary complexity.

Even with those uncertainties, the underlying problem Mira addresses seems unlikely to disappear. As AI systems become more autonomous and interconnected, the need for reliable records of their behavior will only increase. Institutions rarely rely on trust alone when automated systems begin affecting financial or operational outcomes. That is why the idea of a decentralized verification layer remains interesting to me.

Whether Mira ultimately becomes that layer is still an open question. Infrastructure evolves slowly, and systems that appear promising early on often need years of testing before they become widely trusted.

For now, what I see is a project placing a different kind of bet on the future of artificial intelligence. Instead of competing to build the most powerful AI, it focuses on building the infrastructure that verifies what those systems actually do. If AI continues expanding the way many expect, that layer of accountability may turn out to be more valuable than it initially appears.